Turn on suggestions

Auto-suggest helps you quickly narrow down your search results by suggesting possible matches as you type.

Showing results for

Technical Blog

Explore in-depth articles, tutorials, and insights on data analytics and machine learning in the Databricks Technical Blog. Stay updated on industry trends, best practices, and advanced techniques.

Turn on suggestions

Auto-suggest helps you quickly narrow down your search results by suggesting possible matches as you type.

Showing results for

- Databricks Community

- Technical Blog

- Using dbt core with OAuth on Azure Databricks

Databricks Employee

Options

- Subscribe to RSS Feed

- Mark as New

- Mark as Read

- Bookmark

- Subscribe

- Printer Friendly Page

- Report Inappropriate Content

09-28-2023

09:36 AM

By Jeroen Meulemans, Solutions Architect at Databricks Amsterdam, jeroen.meulemans@databricks.com, Thu 7 Sep 2023

Introduction

This blog guides you through the process of configuring OAuth credentials for Azure Databricks and dbt, making your data workflows more secure and self-service. dbt (data build tool) is a popular development environment enabling data analysts and engineers to transform data.

One common challenge users face is to generate and refresh time-bound credentials and refresh them programmatically. Today many users are using PATs which is not the most secure solution to access the Databricks Lakehouse with third-party tools like dbt.

Table of content

Introduction

Understanding OAuth and Its Benefits

Prerequisites

OAuth U2M (user-to-machine) workflow

OAuth M2M (machine-to-machine) workflow

Common troubleshooting issues

Recap of the benefits of OAuth for Azure Databricks and dbt.

Understanding OAuth and Its Benefits

What is OAuth?

OAuth is an open standard authorization framework that allows applications to access resources on behalf of a user. OIDC is an authentication layer built on top of OAuth 2.0, designed to provide user authentication and identity information in a standardized way. OAuth framework defines various roles:

- The client, in this case, is dbt

- the authorization server here is Azure AD and

- the resource server is DBSQL API.

Which authentication options are supported for dbt to Databricks?

PAT (Personal Access Tokens) Pros

- Easy to create a PAT via the Databricks workspace, under User Settings > Developer > Access tokens > Generate new token

- Well-supported for Databricks workspace-level resources and operations

PAT (Personal Access Tokens) Cons

- These long-lived tokens by default are valid for 90 days, but can be configured with an indefinite lifespan. If the token leaks it could be used by others, not controlled by Azure AD.

- Refreshing these tokens is manual but can be automated via the Databricks API.

- A separate PAT is required per workspace.

- Some customers also have a compliance policy against the use of custom tokens like PAT whereas OAuth is an industry standard with broad support.

OAuth (OIDC)

- OAuth is an industry-standard with wide adoption by many identity providers and client applications and is supported by the dbt-databricks adapter.

- OAuth allows granting access to a resource server on behalf of a user without sharing credentials such as username/password or PAT tokens.

- OAuth tokens are short-lived and by default only valid for 1 hour, after which they are automatically refreshed, so no manual token rotation is required. Even if the token is leaked, it has very limited use.

- OAuth tokens can be used across different Databricks workspaces.

While PATs are well supported to connect dbt to Databricks, they come with limitations. To address these, Databricks has introduced OAuth support, enabling teams to use their Azure AD account credentials for seamless access.

Prerequisites

- Access to an Azure Databricks Workspace and a Databricks SQL Warehouse. You will require the Server hostname (adb-xxx.azuredatabricks.net), and HTTP path (/sql/1.0/warehouses/xxxxxx).

- Access to a dbt core environment: we deploy a local dbt core environment. dbt Cloud supports a manual connection, and new projects use the dbt-databricks adapter by default. At the time of writing (Sep 2023), dbt Cloud does not support OAuth in combination with DBSQL.

- Azure Active Directory (Azure AD) Account

- Access to the Azure portal for new App registrations

- Optional install Databricks CLI to verify service principal in step 2

OAuth U2M (user-to-machine) workflow

OAuth's User-to-Machine (U2M) workflow allows systems access on behalf of a user, like the dbt client, by securely obtaining an OAuth token through a browser popup from Azure AD. Dbt uses this token to connect to the DBSQL API, simplifying authentication and authorization.

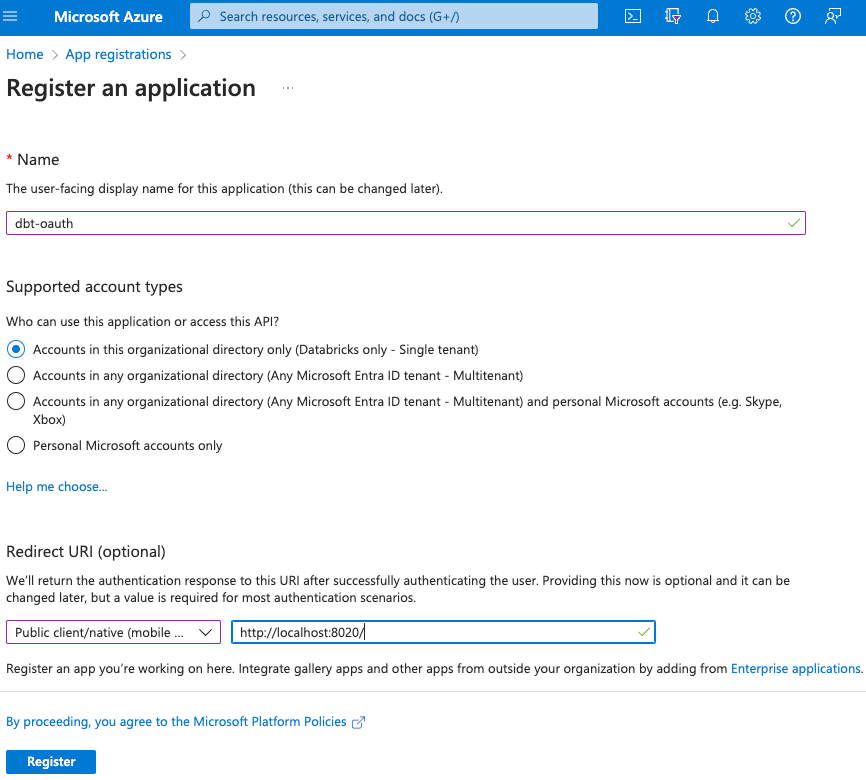

Step 1 - Register an application on Azure.

Go to the Azure portal > App registrations > New registrations:

- Name: eg. dbt-oauth

- Redirect URI select type “Public client/native (mobile & desktop)” toward http://localhost:8020/

- Write down App ID and Tenant ID.

Step 2 - (Optional) Validate Service Principal using Databricks CLI

The Databricks CLI allows validating the configured credentials before connecting to DBSQL with dbt. This step is optional as the Databricks CLI is not required to run dbt, nor does it need to be installed in our automation or CI/CD tooling to run dbt.

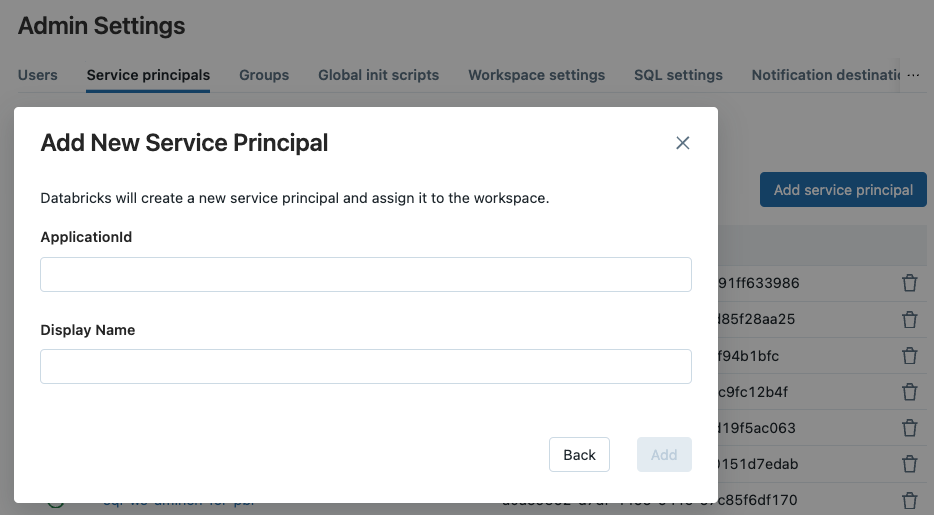

Add Service Principal to a workspace - You can add Service Principals as a Workspace Admin. Go to workspace > Admin Settings > Service Principals > Add service principal > Client ID and use the App ID from the service principal you registered.

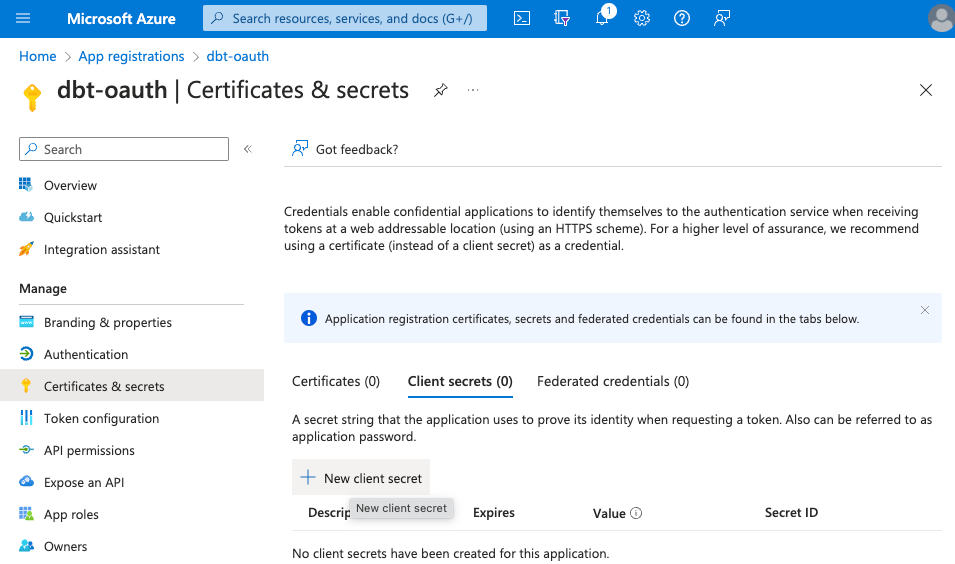

Go to the Azure portal > App registrations > Owned Applications > Search for name, e.g., dbt-oauth > goto application > Certificates & secrets > New Client Secret > Description > Enter a description > Click “Add”. Then, write down the Secret Value (not the Secret ID), this can only be done right after creation.

Copy the values to use them later:

- App ID: eg. 9729e77e-ba94-4d53-8cfb-bb609f43f881

- Tenant ID: eg. f4da2730-4463-4910-98a7-13d0523c0b2a

- Secret: eg. TulipsAndCheese

Add credentials to Databricks CLI config file. This applies to Databricks CLI versions 0.200 and above.

~ cat .databrickscfg [dbt-oauth] host = adb-xxx.azuredatabricks.net azure_tenant_id = 9729e77e-ba94-4d53-8cfb-bb609f43f881 azure_client_id = f4da2730-4463-4910-98a7-13d0523c0b2a azure_client_secret = TulipsAndCheese ~ databricks clusters list -p dbt-oauth Name State Brie TERMINATED Parmigiano TERMINATED Gouda-serverless RUNNING

Check, we verified that the service principal can access the Databricks workspace API. Feel free to remove the credentials from the .databrickscfg file.

Step 3 - Create a local dbt core project via CLI

To make it easier to clean up later we are using a venv (virtual environment) to create an isolated Python environment.

~ python3 -m venv dbt-test-oauth-project ~ source ./dbt-test-oauth-project/bin/activate ~ cd dbt-test-oauth-project ~ pip3 install dbt-databricks ~ dbt init databricks_demo ~ cd databricks_demo

Step 4 - Create profiles.yml

In dbt, the profiles.yml file is a configuration file used to define connection information for your various data warehouses and databases. Dbt uses this file to connect to your data sources and target destinations when running data transformation tasks. Below a profile is added and through the target variable it is set as the default run profile used by dbt.

~ cat ~/.dbt/profiles.yml

databricks_demo:

outputs:

azure-oauth-u2m:

catalog: uc_demos_jeroen

host: "adb-xxx.azuredatabricks.net"

http_path: "/sql/1.0/warehouses/xxxxxx"

schema: databricks_demo

threads: 1

type: databricks

auth_type: oauth

client_id: "9729e77e-ba94-4d53-8cfb-bb609f43f881"

target: azure-oauth-u2m

Step 5 - Run dbt as OAuth U2M (user-to-machine)

~ dbt debug --target azure-oauth-u2m

Step 6 - Browser permission request

Upon the first dbt run you will get the below screen in the browser:

Permissions requested dbt-oauth This application is not published by Microsoft. This app would like to: - Have full access to Azure Databricks - Maintain access to data you have given it access to - View your basic profile

Click Accept to continue.

Step 7 - Output first dbt run

Running dbt with the azure-oauth-u2m target, we don’t need to specify the option here as it is configure the default target in step 4.

~ dbt debug 13:41:08 Running with dbt=1.6.1 13:41:08 dbt version: 1.6.1 13:41:08 python version: 3.11.4 13:41:08 python path: /Users/user/Desktop/test-azure/bin/python3.11 13:41:08 os info: macOS-13.5.1-arm64-arm-64bit 13:41:09 Using profiles dir at /Users/user/.dbt 13:41:09 Using profiles.yml file at /Users/user/.dbt/profiles.yml 13:41:09 Using dbt_project.yml file at /Users/user/Desktop/test-azure/databricks_demo/dbt_project.yml 13:41:09 adapter type: databricks 13:41:09 adapter version: 1.6.2 13:41:09 Configuration: 13:41:09 profiles.yml file [OK found and valid] 13:41:09 dbt_project.yml file [OK found and valid] 13:41:09 Required dependencies: 13:41:09 - git [OK found] 13:41:09 Connection: 13:41:09 host: adb-xxx.azuredatabricks.net 13:41:09 http_path: /sql/1.0/warehouses/xxxxxx 13:41:09 catalog: uc_catalog_name 13:41:09 schema: databricks_demo 13:41:09 Registered adapter: databricks=1.6.2 13:41:10 databricks-sql-connector adapter: Successfully opened session 01ee4cbb-09f2-1aac-989d-a71b61c66a77 13:41:10 databricks-sql-connector adapter: Closing session 01ee4cbb-09f2-1aac-989d-a71b61c66a77 13:41:11 Connection test: [OK connection ok] 13:41:11 All checks passed!

What you see happening is a browser window popup starting your regular authentication workflow. Once you authenticate you are being forwarded to localhost:8020. This means we successfully authenticated to Azure AD and shared the OAuth token with our dbt client. Now dbt can connect to Databricks SQL warehouse without any static API key, secrets, or static credentials. We have authenticated with our Azure AD credentials and are using a short-lived Oauth token to authenticate to the DBSQL API. Mission accomplished!

The first time you log in to your identity provider via the browser popup a token is generated and cached in a secure store. Once the token expires you will have to reauthenticate. During the lifetime of the token, you will not have to provide any credentials anymore. On a Mac, the OAuth token is stored using the keyring. You can access the token on your Mac via the application “Keychain Access” and search for dbt-databricks. Deleting the token from the secure store will trigger a re-authentication to your identity provider via the browser popup.

OAuth M2M (machine-to-machine) workflow

OAuth's Machine-to-Machine (M2M) workflow empowers automated processes, like CI/CD integration, to securely access resources without human intervention, making it perfect for scenarios where, for instance, a dbt client runs as a service principal, acquires an OAuth token from Azure AD through a service principal, and utilizes this token to connect with the DBSQL API.

Step 8 - Create new client secret for M2M access via your Azure service principal

OAuth machine-to-machine (M2M) authentication uses the credentials of the Azure service principal provisioned in dbt directly to request an OAuth token to access the DBSQL API from dbt. This form of OAuth is ideally suited for automation and integration in CI/CD pipelines.

Generate a secret string that the application uses to prove its identity when requesting a token. Let’s reuse the existing App registration for M2M access. Revisit the secret creation in step 2.

Step 9 - Add service principal to workspace

As a Workspace Admin you can add Service Principals to the workspace.

Go to workspace > Admin Settings > Service Principals > Add service principal > Client ID and use the App ID from the service principal you registered. The logical name you are free to choose.

Step 10 - Add M2M principal to profiles.yml

In dbt, the profiles.yml file is a configuration file, where you can define multiple profiles within this file each representing a different data warehouse.

As the profiles.yml file is cleartext, it is recommended to use environment variables to store sensitive information such as client ID and client secret. As the M2M workflow is typically used in automation or CI/CD workflows, we can parametrize the workflows.

~ cat ~/.dbt/profiles.yml

databricks_demo:

outputs:

...

azure-oauth-m2m:

catalog: uc_catalog_name

host: "adb-xxx.azuredatabricks.net"

http_path: "/sql/1.0/warehouses/9196548d010cf14d"

schema: databricks_demo

threads: 1

type: databricks

auth_type: oauth

client_id: "{{ env_var('DATABRICKS_CLIENT_ID') }}"

client_secret: "{{ env_var('DATABRICKS_CLIENT_SECRET') }}"

target: azure-oauth-m2m

Step 11 - Authorize SP to access SQL Warehouse

To add the appropriate privileges, navigate to your Databricks Workspace > SQL Warehouses > "Warehouse" > Permissions > Add Service Principal > “Can Use”.

Step 12 - Run dbt as OAuth machine-to-machine (M2M)

~ dbt debug --target azure-oauth-m2m 09:57:24 Running with dbt=1.6.1 09:57:25 Registered adapter: databricks=1.6.2 09:57:25 Found 2 models, 4 tests, 0 sources, 0 exposures, 0 metrics, 471 macros, 0 groups, 0 semantic models 09:57:25 09:57:26 databricks-sql-connector adapter: Successfully opened session 01ee4d64-f42a-1d9c-b3a8-e301afcc605c 09:57:27 databricks-sql-connector adapter: Closing session 01ee4d64-f42a-1d9c-b3a8-e301afcc605c 09:57:28 databricks-sql-connector adapter: Successfully opened session 01ee4d64-f54f-1931-87cc-386a5147a23c 09:57:28 databricks-sql-connector adapter: Closing session 01ee4d64-f54f-1931-87cc-386a5147a23c 09:57:29 databricks-sql-connector adapter: Successfully opened session 01ee4d64-f60e-1f10-af5e-8628cf1c28a4 09:57:30 databricks-sql-connector adapter: Closing session 01ee4d64-f60e-1f10-af5e-8628cf1c28a4 09:57:30 Concurrency: 1 threads (target='azure-oauth-u2m') 09:57:30 09:57:30 1 of 2 START sql table model databricks_demo.my_first_dbt_model ................ [RUN] 09:57:31 databricks-sql-connector adapter: Successfully opened session 01ee4d64-f743-1d5e-a11b-329d9f58cdab 09:57:36 databricks-sql-connector adapter: Closing session 01ee4d64-f743-1d5e-a11b-329d9f58cdab 09:57:36 1 of 2 OK created sql table model databricks_demo.my_first_dbt_model ........... [OK in 6.10s] 09:57:36 2 of 2 START sql view model databricks_demo.my_second_dbt_model ................ [RUN] 09:57:37 databricks-sql-connector adapter: Successfully opened session 01ee4d64-fae3-1b21-aca8-118247499ade 09:57:38 databricks-sql-connector adapter: Closing session 01ee4d64-fae3-1b21-aca8-118247499ade 09:57:38 2 of 2 OK created sql view model databricks_demo.my_second_dbt_model ........... [OK in 1.88s] 09:57:39 databricks-sql-connector adapter: Successfully opened session 01ee4d64-fc08-1172-8584-a55395f91879 09:57:39 databricks-sql-connector adapter: Closing session 01ee4d64-fc08-1172-8584-a55395f91879 09:57:39 09:57:39 Finished running 1 table model, 1 view model in 0 hours 0 minutes and 14.43 seconds (14.43s). 09:57:39 09:57:39 Completed successfully 09:57:39 09:57:39 Done. PASS=2 WARN=0 ERROR=0 SKIP=0 TOTAL=2

We now successfully authenticated to the Databricks SQL warehouse with our Azure service principal which is ideally suited for automation and integration in CI/CD pipelines.

Common troubleshooting issues

If you run into any issues at this point, you can troubleshoot connectivity to the DBSQL warehouse using a more verbose output from the databricks-dbt adapter to investigate connectivity, authentication, and authorization issues.

~ dbt --debug --log-level-file error debug --target azure-oauth-u2m

Common Oauth troubleshooting examples.

Issue 1 - Error during request to server: : User not authorized.

Solution: The Service Principal is not registered in the Databricks Workspace. Revise Step 9.

Issue 2 - PERMISSION_DENIED: User is not an owner of Table '<catalog>.<schema>.<table>'.

Solution: The initial creator of the table is not the same as the user now trying to write to the table. This could be because the U2M and M2M are seen as two different users, and by default, only the original owner of the table can write to it.

Issue 3 - Sorry, but we’re having trouble with signing you in. AADSTSxx: Application with identifier 'xx-xx-xx-xx-xx' was not found in the directory 'Databricks'.

The client ID configured in profiles.yml does not correspond with a registered service principal. Please revise Step 1.

Issue 4 - invalid_client: AADSTSxx: The request body must contain the following parameter: 'client_assertion' or 'client_secret'

The redirect URI is configured with type “Web”, this should be of type “Mobile and desktop applications”. This is only relevant for the U2M workflow.

Recap of the benefits of OAuth for Azure Databricks and dbt.

By the end of this blog, you should have a clear understanding of how to configure OAuth credentials for Azure Databricks and dbt.

While it may seem more complex to set up initially compared to the simpler configuration of Personal Access Tokens (PAT), the advantages OAuth brings to the table are substantial:

- Reusing existing identity infrastructure such as Azure AD

- Industry-standard

- Short-lived tokens increase security

- Supports a high number of users, not limited per workspace

- Offering a self-service mechanism to data consumers

This makes OAuth a preferred option for many data platform teams and data consumers using dbt on Azure Databricks.

6 Comments

You must be a registered user to add a comment. If you've already registered, sign in. Otherwise, register and sign in.

Related Content

- Databricks now supports importing Tableau and Power BI files into Genie Code to automatically build in Community Articles

- Letting Coding Agents Move Fast Without Breaking Everything in Technical Blog

- The Top 10 Best Practices for AI/BI Dashboards Performance Optimization (Part 2) in Technical Blog

- CI/CD using Workload Identity Federation in Community Articles

- Processing Unstructured Data in Volumes with Unity Catalog Open APIs in Technical Blog