- Notebook level automated pipeline monitoring or fa...


02-08-2022 10:13 AM

Hi, is there any way, other than ADF monitoring, to get notebook-level execution details in an automated way, without having to go into each pipeline and check?

Labels: DatabricksNotebook, Notebook Level

1 ACCEPTED SOLUTION


02-09-2022 02:06 AM

Databricks jobs cannot be used in Data Factory. Data Factory itself creates jobs on Databricks.

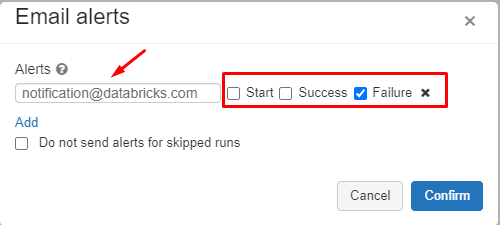

But there are other ways to monitor notebooks. The easiest is to use alerts and metrics (which can be found in the monitoring tab of ADF). These send e-mails, webhook messages, and so on when a certain condition is met. So you can create an alert that sends an e-mail when a Databricks pipeline has failed.

Very simple but effective.

This of course does not give you any detail about what exactly went wrong, so you still have to look into the notebook logs.
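To avoid opening each pipeline by hand, one option is to pull activity-run records programmatically and filter them down to failed notebook activities. The sketch below only shows the filtering step on hypothetical sample data. The field names (`activityType`, `status`, `durationInMs`, `error`, `output.runPageUrl`) are assumptions modelled on what ADF returns when you query activity runs, so check them against your own responses before relying on them.

```python
from collections import defaultdict

# Hypothetical sample records; field names are assumed to mirror ADF's
# activity-run query response and are not taken from this thread.
SAMPLE_ACTIVITY_RUNS = [
    {
        "pipelineName": "ingest_sales",
        "activityName": "nb_clean_sales",
        "activityType": "DatabricksNotebook",
        "status": "Failed",
        "durationInMs": 84000,
        "error": {"message": "Notebook raised an exception"},
        "output": {"runPageUrl": "https://example.invalid/run/1"},
    },
    {
        "pipelineName": "ingest_sales",
        "activityName": "nb_load_sales",
        "activityType": "DatabricksNotebook",
        "status": "Succeeded",
        "durationInMs": 120000,
        "error": {},
        "output": {"runPageUrl": "https://example.invalid/run/2"},
    },
    {
        "pipelineName": "ingest_sales",
        "activityName": "copy_raw_files",
        "activityType": "Copy",
        "status": "Failed",
        "durationInMs": 5000,
        "error": {"message": "Source not found"},
        "output": {},
    },
]


def failed_notebook_runs(activity_runs):
    """Return one summary dict per failed Databricks notebook activity."""
    failures = []
    for run in activity_runs:
        # Skip anything that is not a notebook activity or did not fail.
        if run.get("activityType") != "DatabricksNotebook":
            continue
        if run.get("status") != "Failed":
            continue
        failures.append({
            "pipeline": run.get("pipelineName"),
            "notebook_activity": run.get("activityName"),
            "duration_s": (run.get("durationInMs") or 0) / 1000,
            "error": (run.get("error") or {}).get("message", ""),
            # Assumed link to the Databricks run page, if present.
            "run_page_url": (run.get("output") or {}).get("runPageUrl"),
        })
    return failures


def failures_by_pipeline(activity_runs):
    """Group the names of failed notebook activities by pipeline name."""
    grouped = defaultdict(list)
    for failure in failed_notebook_runs(activity_runs):
        grouped[failure["pipeline"]].append(failure["notebook_activity"])
    return dict(grouped)
```

Calling `failed_notebook_runs(SAMPLE_ACTIVITY_RUNS)` keeps only the failed notebook activity and drops the successful notebook and the failed copy activity. How the records are fetched (REST API, SDK, or an export to storage) is left open here.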

If you want logs at a deeper level, you can use Log Analytics together with the Databricks notebooks.

This is more elaborate monitoring, but it is also more work to set up (and more expensive).

https://docs.microsoft.com/en-us/azure/architecture/databricks-monitoring/

I'd start with alerts and see if that fits your needs. If not, dig deeper (Log Analytics, Azure Monitor).
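If you do go the deeper route, the notebook itself has to emit logs worth collecting. As a minimal sketch, the helper below writes one JSON record per notebook step using only Python's standard `logging` module. The logger name, the `log_step` helper, and the record fields are all hypothetical, and shipping these lines to Log Analytics depends on the setup described in the linked article, which is not shown here.

```python
import json
import logging
import time
from contextlib import contextmanager

logging.basicConfig(level=logging.INFO)
logger = logging.getLogger("notebook_monitoring")  # hypothetical logger name
logger.setLevel(logging.INFO)


@contextmanager
def log_step(notebook, step):
    """Log one JSON record per step with its status and duration."""
    start = time.monotonic()
    record = {"notebook": notebook, "step": step, "status": "Succeeded"}
    try:
        # The caller can attach extra fields (row counts, paths) to the record.
        yield record
    except Exception as exc:
        record["status"] = "Failed"
        record["error"] = f"{type(exc).__name__}: {exc}"
        raise  # re-raise so the notebook (and the ADF activity) still fails
    finally:
        record["duration_s"] = round(time.monotonic() - start, 3)
        level = logging.ERROR if record["status"] == "Failed" else logging.INFO
        logger.log(level, json.dumps(record))
```

A step would then be wrapped as `with log_step("nb_clean_sales", "load_raw") as rec:` and can set, for example, `rec["rows"]` inside the block. Because the exception is re-raised, a failing step still fails the notebook run, so the ADF alert continues to fire.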

5 REPLIES


02-08-2022 01:40 PM


02-08-2022 11:24 PM

Thanks. What is the ADF resource for this, if I want to include it as part of ADF?




02-09-2022 08:50 AM

Understood. I was able to use the alerts and metrics and explore this further. Thanks.


02-10-2022 07:17 AM

@Vibhor Sethi - Would you be happy to mark @Werner Stinckens' answer as best if it resolved your question?
