Cluster Scoped init script through pulumi

04-17-2023 01:30 PM

I am trying to run a cluster-scoped init script through Pulumi. I have referred to this documentation:

https://learn.microsoft.com/en-us/azure/databricks/clusters/configure#spark-configuration

However, the documentation is not very clear on how to do this.

I have an init script called set-private-pip-repositories-relay-feed-init-script.sh with the below content.

    #!/bin/bash
    # Check that the PAT was injected as an environment variable
    if [ -n "$DEVOPS_GIT_PAT" ]; then
      echo "PAT token found"
    else
      echo "Couldn't fetch PAT token"
    fi
    # Point pip at the private feed
    printf "[global]\n" > /etc/pip.conf
    printf "extra-index-url=" >> /etc/pip.conf
    printf "https://$DEVOPS_GIT_PAT@artificat_url/pypi/simple/\n" >> /etc/pip.conf
    echo "script executed"

I tried to add the init script to the cluster configuration in Pulumi as below:

    init_scripts=[
        db.ClusterInitScriptArgs(
            file=db.ClusterInitScriptFileArgs(
                destination="./files/set-private-pip-repositories-relay-feed-init-script.sh"
            )
        )
    ],

Where should I keep the Databricks secret scope? Is it in spark_env_vars={"DEVOPS_GIT_PAT": "{{secrets/azureScope/devopsGitPat}}"}?

Only a few points are mentioned on this subject, so looking forward to getting advice from someone 🙂


04-17-2023 02:28 PM

Hi @Sulfikkar Basheer Shylaja, why don't you store the init script on DBFS and pass its dbfs:/ path in Pulumi? You could run this code in a notebook:

    %python
    # Write the init script to DBFS; the final True overwrites an existing file.
    # The raw string keeps \n literal, and the shebang must be the first line.
    dbutils.fs.put("/databricks/init-scripts/set-private-pip-repositories-relay-feed-init-script.sh", r"""#!/bin/bash
    if [ -n "$DEVOPS_GIT_PAT" ]; then
      echo "PAT token found"
    else
      echo "Couldn't fetch PAT token"
    fi
    printf "[global]\n" > /etc/pip.conf
    printf "extra-index-url=" >> /etc/pip.conf
    printf "https://$DEVOPS_GIT_PAT@artificat_url/pypi/simple/\n" >> /etc/pip.conf
    echo "script executed"
    """, True)

This uses the dbutils command to upload the script to DBFS at dbfs:/databricks/init-scripts/set-private-pip-repositories-relay-feed-init-script.sh

As for the Databricks secret scope, you are correct. Assign the secret to a Spark environment variable and reference that variable in the init script. Please follow these two docs:

https://www.pulumi.com/registry/packages/databricks/api-docs/cluster/#state_spark_env_vars_python

Let me know if this info helps you.
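A minimal sketch of building that environment variable in Python, assuming the `{{secrets/<scope>/<key>}}` reference syntax used in the question. `secret_ref` is a hypothetical helper for illustration, not part of Pulumi or Databricks.

```python
def secret_ref(scope: str, key: str) -> str:
    """Build a Databricks secret reference placeholder (hypothetical helper)."""
    # Reject empty parts and slashes, which would change the scope/key split.
    for part in (scope, key):
        if not part or "/" in part:
            raise ValueError(f"invalid secret scope or key: {part!r}")
    return "{{secrets/" + scope + "/" + key + "}}"


# The cluster resolves the placeholder at start-up, so the init script
# can read the value as $DEVOPS_GIT_PAT.
spark_env_vars = {"DEVOPS_GIT_PAT": secret_ref("azureScope", "devopsGitPat")}
print(spark_env_vars)
```

The resulting dictionary can be passed as the `spark_env_vars` argument of the cluster resource, so the token itself never appears in the repository.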

04-18-2023 12:33 AM

Hi @Vivian Wilfred, thanks a lot for the response 🙂

I would like to keep the init script in the repo so that it can always be managed there. The other reason I don't want to put the init script in DBFS by hand is that I have 4 environments, and I want to avoid manually uploading the script (through a notebook) to each of them. I would rather do it through Pulumi 🙂. I might be wrong, but could you advise?

04-18-2023 12:40 AM

Also, I tried using ClusterInitScriptFileArgs as below:

    init_scripts=[
        db.ClusterInitScriptArgs(
            file=db.ClusterInitScriptFileArgs(
                destination="./files/set-private-pip-repositories-relay-feed-init-script.sh"
            )
        )
    ],

but it is failing with the error below 😞

    databricks:index:Cluster (user-databricks-cluster):
    error: 1 error occurred:
    * updating urn:pulumi:Platform-development:::databricks:index/cluster:Cluster::user-databricks-cluster: 1 error occurred:
    * cannot update cluster: File init scripts (specified with a file:/ prefix) can only be specified for clusters with custom docker containers.

Could you advise here?

04-18-2023 03:03 AM

@Sulfikkar Basheer Shylaja Is your cluster using a custom Docker image? The problem here is that a local filesystem path (file:/) is only supported if you use a custom Docker image. On regular Databricks Runtime (DBR) versions, the init script can only be pulled from DBFS, S3, GCS or ADLS.

You will have to store the init script in one of the above locations, because the cluster cannot read the file from your local filesystem.

This is not possible even through the UI.

There are different ways to upload a file to DBFS that you can explore-
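One way to avoid a manual notebook upload, sketched in Pulumi Python: the `DbfsFile` resource from `pulumi_databricks` copies the script from the repository to DBFS during `pulumi up`, and the cluster reads it through the `dbfs` init script argument. The resource name `pip-init-script` and the `spark_version`, `node_type_id`, `num_workers` and `autotermination_minutes` values are placeholder assumptions, not values from this thread.

```python
import pulumi_databricks as db

SCRIPT_NAME = "set-private-pip-repositories-relay-feed-init-script.sh"

# Upload the script from the repo to DBFS as part of the deployment.
init_script = db.DbfsFile(
    "pip-init-script",  # hypothetical resource name
    source=f"./files/{SCRIPT_NAME}",
    path=f"/databricks/init-scripts/{SCRIPT_NAME}",
)

cluster = db.Cluster(
    "user-databricks-cluster",
    spark_version="12.2.x-scala2.12",  # assumption: choose your runtime
    node_type_id="Standard_DS3_v2",    # assumption: choose your node type
    num_workers=1,                     # assumption
    autotermination_minutes=30,        # assumption
    init_scripts=[
        db.ClusterInitScriptArgs(
            dbfs=db.ClusterInitScriptDbfsArgs(
                # dbfs_path is the uploaded path with the dbfs: prefix
                destination=init_script.dbfs_path,
            )
        )
    ],
    # The secret reference is resolved by Databricks when the cluster starts.
    spark_env_vars={
        "DEVOPS_GIT_PAT": "{{secrets/azureScope/devopsGitPat}}",
    },
)
```

Because the destination comes from the `DbfsFile` output, Pulumi uploads the file before it creates or updates the cluster, and the same program can be applied to each environment's stack.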

04-24-2023 09:00 PM

@Sulfikkar Basheer Shylaja :

It looks like you are passing a path from your repo to ClusterInitScriptFileArgs. As the error says, file-based init scripts (the file argument, with a file:/ destination) are only accepted on clusters that use a custom Docker container, because the script must already exist on the node's local filesystem. A path in your repo is not visible to the cluster.

If your Databricks cluster has a custom Docker container, bake the script into the image and point destination at its path inside the container.

Here's an example of how you can use a file-based init script with a custom Docker container:

    init_scripts = [
        db.ClusterInitScriptArgs(
            file=db.ClusterInitScriptFileArgs(
                # Path inside the custom Docker image, not a path in your repo
                destination="file:/path/to/your/script.sh"
            )
        )
    ]

    cluster = db.Cluster(
        "example-cluster",
        # ... other parameters ...
        init_scripts=init_scripts,
        custom_tags=custom_tags
    )

Make sure to replace /path/to/your/script.sh with the actual path to your init script inside the image. Settings are usually passed to the script through environment variables rather than command-line parameters.

Regarding the secret scope, you can pass the value of DEVOPS_GIT_PAT as an environment variable to your init script using the spark_env_vars parameter, with a secret reference as the value:

    spark_env_vars = {
        "DEVOPS_GIT_PAT": "{{secrets/azureScope/devopsGitPat}}"
    }

    cluster = db.Cluster(
        "example-cluster",
        # ... other parameters ...
        init_scripts=init_scripts,
        spark_env_vars=spark_env_vars
    )

In this example, azureScope is the name of the Databricks secret scope and devopsGitPat is the key of the secret stored in it.

04-26-2023 01:44 AM

@Suteja Kanuri, I am using a Databricks cluster without a Docker container. Could you advise how to use ClusterInitScript on a Databricks cluster that does not use a Docker image?

04-27-2023 11:29 AM

@Sulfikkar Basheer Shylaja :

Yes, you can use the ClusterInitScript on a Databricks cluster that does not use a Docker image. The ClusterInitScript allows you to run a script on the nodes of your cluster when it starts up, which can be used to configure your cluster.

To use ClusterInitScript, you can follow these steps:

- Create a script that you want to run on each node of your cluster during initialization. The script should be a shell script (it can call Python if needed).

- Upload the script to a location that is accessible by all nodes of the cluster. You can upload the script to DBFS (Databricks File System), or to a cloud storage location such as Amazon S3 or Azure Blob Storage.

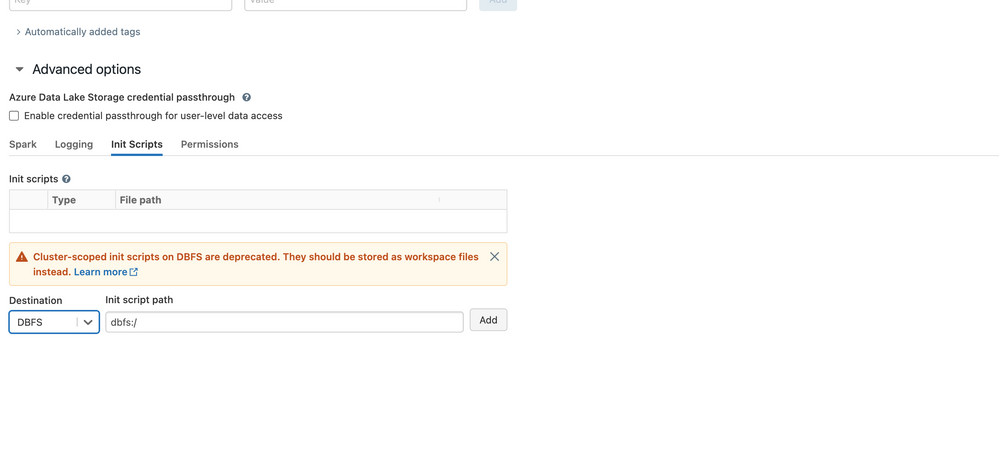

- Open your Databricks workspace and navigate to the cluster that you want to configure.

- Expand the "Advanced Options" section.

- Open the "Init Scripts" tab.

- Click "Add" to add a new initialization script.

- Enter the URL or path to the script that you uploaded earlier.

- If the script needs settings, pass them through environment variables (for example spark_env_vars) rather than script arguments.

- Click "Confirm" to save the initialization script.

When you start or restart your cluster, the initialization script will be run on each node of the cluster. You can check the logs of each node to ensure that the script was executed successfully.

Note that on a cluster without a custom Docker container, the script has to be stored in DBFS or cloud storage. A file:/ destination only works when the initialization script is baked into a custom image that you use to launch your cluster.
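The restriction that came up in this thread (file:/ only with a custom Docker container, otherwise DBFS, S3, GCS or ADLS) can be checked before deployment. This is a rough sketch only: `check_init_script_destination` is a hypothetical helper, and the URI prefixes listed for each storage type are assumptions rather than an official list.

```python
# Assumed URI prefixes for DBFS, S3, GCS and ADLS destinations.
REMOTE_PREFIXES = ("dbfs:/", "s3://", "gs://", "abfss://")


def check_init_script_destination(destination: str, custom_docker: bool = False) -> str:
    """Return the destination if it looks usable, else raise ValueError."""
    if destination.startswith("file:/"):
        # Local files are only readable when baked into a custom image.
        if custom_docker:
            return destination
        raise ValueError("file:/ init scripts need a custom Docker container")
    if destination.startswith(REMOTE_PREFIXES):
        return destination
    # Relative repo paths such as ./files/script.sh end up here.
    raise ValueError(f"unsupported init script destination: {destination!r}")


print(check_init_script_destination("dbfs:/databricks/init-scripts/script.sh"))
```

Calling the helper with the repo-relative path from the original question raises `ValueError`, which surfaces the problem before the cluster update is attempted.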

05-10-2023 05:16 AM
