- 1166 Views

- 2 replies

- 0 kudos

Can AWS workspaces share subnets?

The docs state: "You can choose to share one subnet across multiple workspaces or both subnets across workspaces." as well as: "You can reuse existing security groups rather than create new ones." and on this page: "If you plan to share a VPC and subnets ...

Hi @spd_dat, I will check with the team internally and try to replicate this in my workspace. This should be possible, as the documentation indicates. Based on the error you are hitting, could you please share the configuration you are setting in your...

- 0 kudos

- 639 Views

- 1 replies

- 0 kudos

Databricks SSO enabled with Azure AD and this setup was deleted

Hi Team, SSO was enabled on the Databricks account with Azure AD, and the environment is on the AWS platform. The enterprise application that was used has been deleted from Azure AD. No emergency user access has been set up. It is locked up, possible to ...

Hi @Ashok_AWS, could you file a case with us? We can help you. Send an email to help@databricks.com. See https://docs.databricks.com/aws/en/resources/support

- 0 kudos

- 1834 Views

- 3 replies

- 2 kudos

Question about moving to Serverless compute

Hi, my organization is using Databricks in the Canada East region, and Serverless isn't available in our region yet, at all? I would like to know if it is worth the effort to change region to Canada Central, where Serverless compute is available. We do ...

Hi Takuya Omi, thank you for responding. My question is: if we were to migrate our existing workspaces (3) and UC to Canada Central, is it doable? Is it worth it? What does it imply? What are the best practices for doing so? Thank you.

- 2 kudos

- 1149 Views

- 2 replies

- 0 kudos

Removing storage account location from metastore fails

I am trying to remove the storage account location for our UC metastore, but I am getting an error. I have tried assigning my user and service principal permission to create external locations.

I accomplished it by creating a storage account credential and external location manually. Then I was able to remove the metastore path. What then happened was that the path for the __databricks_internal catalog was set to the storage account that ...

- 0 kudos

- 2637 Views

- 2 replies

- 0 kudos

Resolved! How does a non-admin user read a public s3 bucket on serverless?

As an admin, I can easily read a public S3 bucket from serverless: `spark.read.parquet("s3://[public bucket]/[path]").display()` So can a non-admin user, from classic compute. But why does a non-admin user, from serverless (both environments 1 & 2) get t...

Hi @spd_dat, is the S3 bucket in the same region as your workspace? It might require using an IAM role / S3 bucket to allow access to the bucket even if it is public. Just as a test, can you try giving the user the permission below: GRANT SELECT ...

- 0 kudos

- 996 Views

- 1 replies

- 0 kudos

AWS custom role for Databricks clusters - no instance profile ARN

I tried to follow the instructions to create a custom IAM role for EC2 instances in Databricks clusters, but I can't find the instance profile ARN on the role. If I create a regular IAM role on EC2, I can find both the role ARN and the instance profile ARN. https://d...

@Wayne I need to understand more about what you're trying to achieve, but if you're looking to grant permissions to the EC2 instances running behind a Databricks cluster using an instance profile, the following documentation provides a detailed explan...

- 0 kudos

- 12616 Views

- 2 replies

- 0 kudos

You can get the job details from the Jobs Get API, which takes the job ID as a parameter. This will give you all the information available about the job, specifically the job name. Please note that there is no field called "job description" in the...
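As a rough illustration of the reply above, here is a minimal Python sketch of calling the Jobs Get endpoint and reading the job name. It assumes the 2.1 version of the REST path (`/api/2.1/jobs/get`), bearer-token authentication, and that the name sits under `settings.name` in the response; the host, token, and helper names are placeholders and not part of the original post.

```python
import json
import urllib.parse
import urllib.request
from typing import Optional


def build_jobs_get_url(host: str, job_id: int) -> str:
    """Build the Jobs Get URL for one job (assumes API version 2.1)."""
    query = urllib.parse.urlencode({"job_id": job_id})
    return f"{host.rstrip('/')}/api/2.1/jobs/get?{query}"


def extract_job_name(job: dict) -> Optional[str]:
    """Return settings.name from a Jobs Get response, or None if absent."""
    return job.get("settings", {}).get("name")


def fetch_job(host: str, token: str, job_id: int) -> dict:
    """Call the endpoint with a bearer token and parse the JSON body."""
    request = urllib.request.Request(
        build_jobs_get_url(host, job_id),
        headers={"Authorization": f"Bearer {token}"},
    )
    with urllib.request.urlopen(request) as response:
        return json.load(response)
```

Usage would look like `extract_job_name(fetch_job(host, token, job_id))`, with `host` being the workspace URL and `token` a personal access token.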

- 0 kudos

- 1890 Views

- 3 replies

- 0 kudos

system schema permission

I have Databricks workspace admin permissions and want to run a few queries on the system.billing schema to get more info on dbx billing. I am getting the error below: [INSUFFICIENT_PERMISSIONS] Insufficient privileges: User does not have USE SCHEMA on Schema 'sy...

Hi @PoojaD, you should ask an admin to grant you access: GRANT USE SCHEMA ON SCHEMA system.billing TO [Your User]; GRANT SELECT ON TABLE system.billing.usage TO [Your User];
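For an admin who needs to issue these grants for several users, the two statements from the reply can be templated. This is only a sketch: the helper name `billing_grants` is hypothetical, and quoting the principal in backticks (with embedded backticks doubled) is an assumption about how the principal should be written.

```python
from typing import List


def billing_grants(principal: str) -> List[str]:
    """Render the two GRANT statements from the reply for one principal."""
    # Assumption: the principal is backtick-quoted, embedded backticks doubled.
    quoted = "`" + principal.replace("`", "``") + "`"
    return [
        f"GRANT USE SCHEMA ON SCHEMA system.billing TO {quoted};",
        f"GRANT SELECT ON TABLE system.billing.usage TO {quoted};",
    ]


if __name__ == "__main__":
    for statement in billing_grants("user@example.com"):
        print(statement)
```

The resulting strings can be pasted into a SQL editor or passed to `spark.sql` one at a time by someone who has the rights to grant them.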

- 0 kudos

- 1004 Views

- 1 replies

- 0 kudos

Removing the trial version as it is incurring cost

Hi, I have a trial version on my AWS account which keeps running and has been costing about a dollar per day for the last couple of days. How do I disable it and use it only when required, or completely remove it?

Hello @psgcbe, you can follow the steps below. Terminate All Compute Resources: First, navigate to the AWS Management Console. Go to the EC2 Dashboard. Select Instances and terminate any running instances related to your trial. Cancel Your Subscription: Afte...

- 0 kudos

- 1467 Views

- 1 replies

- 1 kudos

Resolved! Timeout settings for Postgresql external catalog connection?

Is there any way to configure timeouts for external catalog connections? We are getting some timeouts with complex queries accessing a pgsql database through the catalog. We tried configuring the connection and we got this error │ Error: cannot upda...

Hello @ErikApption, there is no direct support for a connectTimeout option in the connection settings through Unity Catalog as of now. You might need to explore these alternative timeout configurations or consider adjusting your database handling to ...

- 1 kudos

- 2000 Views

- 3 replies

- 0 kudos

Cannot create a workspace on GCP

Hi, I have been using Databricks for a couple of months and have been spinning up workspaces with Terraform. The other day we decided to end our POC and move on to an MVP. This meant cleaning up all workspaces and GCP. After the cleanup was done I wanted to...

Did you try from Marketplace? You may get a more detailed error there.

- 0 kudos

- 2201 Views

- 2 replies

- 0 kudos

Can we create an external location from a different tenant in Azure?

We are looking to add an external location which points to a storage account in another Azure tenant. Is this possible? Could you point to any documentation around this? Currently, when we try to add a new credential providing a DBX access connector a...

Thanks for the response, @Alberto_Umana. Looks like the IDs are all provided correctly. Here is the config - Tenant A / Tenant B: Databricks is hosted here ...

- 0 kudos

- 831 Views

- 0 replies

- 0 kudos

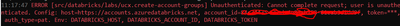

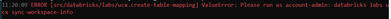

UCX Account Admin authentication error in Azure Databricks

Hi Team, I am using Azure Databricks to implement UCX. The UCX installation completed properly, but I am facing issues when executing commands with the account admin role. I am an account admin in Azure Databricks (https://accounts.azuredatabricks.net/). ...

- 2032 Views

- 1 replies

- 0 kudos

Creating a workspace in AWS with Quickstart gives an error

Hello, while creating a workspace in AWS using Quickstart, I get the error below. I used both the admin account and the root account to create it, but both gave the same issue. Any help is appreciated. The resource CopyZipsFunction is in a CREATE_FAILED stateT...

Hello @eondatatech, Ensure that both the admin and the root account you are using to create the workspace have the necessary IAM permissions to create and manage Lambda functions. Specifically, check if the CreateFunction and PassRole permissions are...
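One rough way to sanity-check the permissions mentioned in this reply is to scan an IAM policy document for the two actions. The sketch below assumes they correspond to the IAM action names `lambda:CreateFunction` and `iam:PassRole`, and it only looks at `Allow` statements; it ignores `Deny` statements, resources, conditions, permission boundaries, and organization-level policies, so it is no substitute for the IAM policy simulator. The function names are illustrative.

```python
from fnmatch import fnmatchcase
from typing import Iterable, List

# Assumed IAM action names for the permissions named in the reply.
REQUIRED_ACTIONS = ("lambda:CreateFunction", "iam:PassRole")


def allowed_action_patterns(policy: dict) -> List[str]:
    """Collect Action patterns from all Allow statements in a policy."""
    statements = policy.get("Statement", [])
    if isinstance(statements, dict):  # a single statement may be a bare object
        statements = [statements]
    patterns: List[str] = []
    for statement in statements:
        if statement.get("Effect") != "Allow":
            continue
        actions = statement.get("Action", [])
        if isinstance(actions, str):  # Action may be a string or a list
            actions = [actions]
        patterns.extend(actions)
    return patterns


def missing_actions(policy: dict,
                    required: Iterable[str] = REQUIRED_ACTIONS) -> List[str]:
    """Return required actions not matched by any Allow pattern."""
    # IAM action names are case-insensitive and may contain * or ? wildcards.
    patterns = [p.lower() for p in allowed_action_patterns(policy)]
    return [
        action for action in required
        if not any(fnmatchcase(action.lower(), p) for p in patterns)
    ]
```

An empty list from `missing_actions(policy)` means both actions appear in some `Allow` statement of that one policy; it does not prove the calls will succeed.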

- 0 kudos

- 2378 Views

- 3 replies

- 1 kudos

Databricks on GCP with GKE | Cluster stuck in starting status | GKE resource allocation failing

Hi Databricks Community, I'm currently facing several challenges with my Databricks clusters running on Google Kubernetes Engine (GKE). I hope someone here might have insights or suggestions to resolve the issues. Problem Overview: I am experiencing fre...

I am having similar issues. This is the first time I am using the `databricks_cluster` resource; my terraform apply does not complete gracefully, and I see numerous errors about: 1. Can't scale up a node pool because of a failing scheduling predicate. The autoscale...

- 1 kudos
