- 3284 Views

- 3 replies

- 0 kudos

REST endpoint for Databricks audit logs

I am trying to find the official documentation for retrieving Databricks audit logs, but I am unable to find it. I have referred to: https://docs.databricks.com/en/administration-guide/account-settings/audit-logs.html and https://docs.databricks.com/api/workspace/introductio...

@madhura I could not find any endpoint that can be used to get the audit logs. However, you can enable system tables in your workspace and read the data just as you would from any other table. Please check this to enable system tables: https...
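As a minimal sketch of the system-tables approach, the helper below builds a SQL query over recent audit events. It assumes system tables are enabled and that the audit log is exposed as `system.access.audit` with `event_date`, `event_time`, `service_name`, `action_name` and `user_identity` columns; verify the table and column names in your workspace. The function name `build_audit_query` is hypothetical.

```python
from typing import Optional


def build_audit_query(days: int = 7, service_name: Optional[str] = None) -> str:
    """Return SQL that reads recent rows from the audit system table.

    Assumes the table is `system.access.audit` with `event_date`, `event_time`,
    `service_name`, `action_name` and `user_identity` columns.
    """
    if days <= 0:
        raise ValueError("days must be positive")
    filters = [f"event_date >= date_sub(current_date(), {int(days)})"]
    if service_name is not None:
        # Double any single quotes so the SQL string literal stays well-formed.
        safe = service_name.replace("'", "''")
        filters.append(f"service_name = '{safe}'")
    where = " AND ".join(filters)
    return (
        "SELECT event_time, service_name, action_name, "
        "user_identity.email AS user_email "
        "FROM system.access.audit "
        f"WHERE {where} "
        "ORDER BY event_time DESC"
    )
```

In a Databricks notebook, the string can then be passed to `spark.sql(build_audit_query(days=1))`, where `spark` is the notebook's predefined SparkSession.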

- 3570 Views

- 1 replies

- 0 kudos

Databricks Job alerts

I'm currently running jobs on job clusters and would like these jobs to time out after 168 hours (7 days), at which point a new job cluster will be assigned. This timeout is specifically to ensure that jobs don't run on the same cluster for too long,...

@Priyam1 Good day! Based on the information provided, it seems there is no direct way to mute notifications for timed-out jobs while still receiving alerts for job failures. You can reduce the number of notifications sent by filtering out no...
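The 168-hour limit from the question maps to a run timeout in seconds: 168 × 3600 = 604,800. Below is a minimal sketch of a partial job-settings payload, assuming the Jobs API field names `timeout_seconds` and `email_notifications.on_failure` (check them against the Jobs API reference for your workspace). The helper `build_job_settings`, the job name and the email address are hypothetical.

```python
import json

HOURS_TO_SECONDS = 3600


def build_job_settings(name: str, timeout_hours: int, failure_emails: list[str]) -> dict:
    """Build a partial Jobs API settings dict with a run timeout and failure alerts."""
    if timeout_hours <= 0:
        raise ValueError("timeout_hours must be positive")
    return {
        "name": name,
        # Per-run timeout, expressed in seconds (assumed field name).
        "timeout_seconds": timeout_hours * HOURS_TO_SECONDS,
        # Addresses notified when a run fails (assumed field name).
        "email_notifications": {"on_failure": list(failure_emails)},
    }


settings = build_job_settings("weekly-rotation", 168, ["oncall@example.com"])
print(json.dumps(settings, indent=2))
```

Whether a timed-out run also triggers the `on_failure` alert is the behavior discussed in the reply, so it is worth confirming on a non-critical job before relying on it.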

- 7214 Views

- 6 replies

- 3 kudos

Azure Databricks with standard private link cluster event log error: "Metastore down"...

We have Azure Databricks with standard Private Link (back-end and front-end Private Link). We are able to successfully attach a Databricks workspace to the Databricks metastore (ADLS Gen2 storage). However, when trying to create tables in a catalog in ...

I can confirm that this approach will solve your error; I ran into a similar issue a while back.

- 3264 Views

- 4 replies

- 0 kudos

Secrets ACL API Behavior Change

Hey all, has the behavior of the Secrets ACL API changed over the last 24 hours? With no code changes on our scope-deployment pipeline, I am suddenly getting strange errors back from this endpoint. Is anybody else noticing a change? Thanks, Alex

I don't know. I control the resource group (RG) myself, and I don't remember ever granting or revoking Contributor roles on that RG for any of the users who are now suddenly throwing errors. Interesting to see that line from the docs... I wonder if that was alw...
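When debugging this kind of error, it can help to inspect exactly what the pipeline sends. Below is a sketch that only builds (does not send) the request, assuming the Secrets API path `/api/2.0/secrets/acls/put` and its `scope`, `principal` and `permission` body fields with `READ`/`WRITE`/`MANAGE` values. The host, token, scope, principal and the helper name `build_acl_put_request` are hypothetical placeholders.

```python
import json
import urllib.request

VALID_PERMISSIONS = {"READ", "WRITE", "MANAGE"}


def build_acl_put_request(host: str, token: str, scope: str,
                          principal: str, permission: str) -> urllib.request.Request:
    """Build (but do not send) a POST request that sets a secret-scope ACL."""
    if permission not in VALID_PERMISSIONS:
        raise ValueError(f"unsupported permission: {permission}")
    body = json.dumps({"scope": scope, "principal": principal,
                       "permission": permission}).encode("utf-8")
    return urllib.request.Request(
        url=f"{host.rstrip('/')}/api/2.0/secrets/acls/put",
        data=body,
        headers={"Authorization": f"Bearer {token}",
                 "Content-Type": "application/json"},
        method="POST",
    )


req = build_acl_put_request("https://adb-123.azuredatabricks.net/", "dapi-example",
                            "my-scope", "data-engineers", "READ")
print(req.get_method(), req.full_url)
```

Sending it with `urllib.request.urlopen(req)` raises `urllib.error.HTTPError` on a non-2xx response; reading that exception's body shows the error message returned by the endpoint, which is what to compare before and after the suspected change.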

- 1398 Views

- 0 replies

- 0 kudos

Unity Catalog Enabled Clusters using PrivateNIC

Hello, when reviewing the VM settings for Databricks worker VMs, we can see that there are two (2) NICs: a primary (PublicNIC (primary)) and a secondary (PrivateNIC (primary)). The worker VM is always assigned the PublicNIC, and this is reachable from w...

- 5083 Views

- 4 replies

- 1 kudos

Databricks is taking too long to run a query

I ran a simple query. It is taking too much time and never finishes. I am the only one using the cluster. It's happening to every notebook in the workspace.

Do you see Spark jobs running in the Spark UI?

- 4435 Views

- 2 replies

- 1 kudos

Resolved! Native service principal support in JDBC/ODBC drivers

I read in the Databricks integration best practices about native support for service principal authentication in the JDBC/ODBC drivers. The timeline mentioned for this was "expected to land in 2023"; is this referring to the https://docs.databricks.com/...

@harripy As stated in the documentation, JDBC driver 2.6.36 and above supports Azure Databricks OAuth secrets for OAuth M2M or OAuth 2.0 client credentials authentication. Microsoft Entra ID secrets are not supported. https://learn.microsoft.com/en-u...
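For illustration, a sketch that composes a JDBC connection URL for OAuth M2M with a service principal. It assumes the connection properties `AuthMech=11`, `Auth_Flow=1`, `OAuth2ClientId` and `OAuth2Secret` as described in the Databricks JDBC driver guide for 2.6.36+; confirm them against the driver documentation for your version. Per the reply, the secret must be a Databricks OAuth secret, not a Microsoft Entra ID secret. The helper name and all argument values are hypothetical.

```python
def build_m2m_jdbc_url(server_hostname: str, http_path: str,
                       client_id: str, client_secret: str) -> str:
    """Compose a JDBC URL for OAuth M2M (client credentials) authentication."""
    props = {
        "httpPath": http_path,
        "AuthMech": "11",   # assumed: OAuth 2.0 authentication mechanism
        "Auth_Flow": "1",   # assumed: client credentials (M2M) flow
        "OAuth2ClientId": client_id,
        "OAuth2Secret": client_secret,
    }
    # Properties are ';'-separated, so a ';' inside a value would corrupt the URL.
    for key, value in props.items():
        if ";" in value:
            raise ValueError(f"{key} must not contain ';'")
    joined = ";".join(f"{k}={v}" for k, v in props.items())
    return f"jdbc:databricks://{server_hostname}:443;{joined}"


url = build_m2m_jdbc_url("adb-1.azuredatabricks.net", "/sql/1.0/warehouses/abc",
                         "app-id", "secret")
print(url)
```

In practice the secret is better supplied from a secret store than embedded in source code.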

- 3324 Views

- 1 replies

- 2 kudos

Resolved! What happens to the workspace when deleting the user who created it

Hi, Background: In Azure Databricks, we have a personal account that created a workspace; this workspace is our main workspace. The user's Microsoft Office account has already been deleted, but the user still exists in Databricks. Question 1: What happens to th...

What happens to this workspace if we delete the user from the workspace? Will the workspace be deleted? → The workspace will not be deleted; it will continue to function. Is it possible to change the workspace owner to another account, i.e. a service account? If so...

- 5003 Views

- 1 replies

- 1 kudos

Resolved! Import folder (no .whl or .jar files) and run the `python3 setup.py bdist_wheel` for lib install

I want to import the ibapi Python module in an Azure Databricks notebook. Before this, I downloaded the TWS API folder from https://interactivebrokers.github.io/#, and I need to go through the following steps to install the API: Download and install TWS Ga...

You can try uploading the folder to a workspace location, then cd into the desired folder and install it via the notebook. However, this would be a notebook-scoped installation. If you are looking for a cluster-scoped installation, then you would need...

- 2859 Views

- 3 replies

- 0 kudos

Permission denied using patchelf

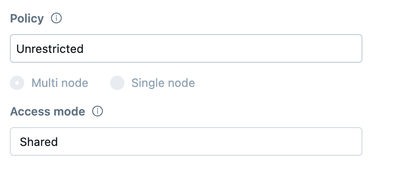

Hello all, I would like to run a Python script on a shared cluster. Under the hood, the script calls the `patchelf` utility to set an rpath, along these lines: `execute('patchelf', ['--set-rpath', rpath, lib])` The p...
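The `execute` helper in the question is not shown, so here is a hypothetical standard-library equivalent using `subprocess.run`. It separates "the `patchelf` binary could not be executed at all" (the OS raises `PermissionError`) from "`patchelf` ran but exited with an error" (for example, when the target library is not writable), which helps narrow down which step the cluster is denying. The function names are illustrative only.

```python
import shutil
import subprocess


def patchelf_set_rpath_cmd(rpath: str, lib: str) -> list[str]:
    """Return the argv for `patchelf --set-rpath <rpath> <lib>`."""
    return ["patchelf", "--set-rpath", rpath, lib]


def set_rpath(rpath: str, lib: str) -> None:
    """Run patchelf, turning the common failure modes into readable errors."""
    if shutil.which("patchelf") is None:
        raise FileNotFoundError("patchelf is not on PATH")
    try:
        subprocess.run(patchelf_set_rpath_cmd(rpath, lib), check=True,
                       capture_output=True, text=True)
    except PermissionError as exc:
        # The OS refused to execute the patchelf binary itself.
        raise PermissionError(f"cannot execute patchelf: {exc}") from exc
    except subprocess.CalledProcessError as exc:
        # patchelf started but failed, e.g. it could not write the library.
        raise RuntimeError(f"patchelf failed: {exc.stderr.strip()}") from exc
```

Passing the arguments as a list (rather than a shell string) avoids quoting problems when `rpath` contains `$ORIGIN`.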

I basically followed this tutorial https://learn.microsoft.com/en-gb/azure/databricks/data-governance/unity-catalog/get-started and it seemed to be working, so I guess Unity Catalog is enabled?

- 3412 Views

- 1 replies

- 0 kudos

Why does using Azure SSO require Databricks PATs to be enabled?

My org uses Databricks and SSO. We are keen to disable the use of personal access tokens (PATs), but have noticed that when they are disabled, we're not able to use SSO. May I ask why SSO has a dependency on PATs (arguably they are two distinct authentication methods)? Also,...

- 6753 Views

- 2 replies

- 0 kudos

Resolved! Call an Azure Function App with Access Restrictions from a Databricks Workspace

Hello, as the title says, I am trying to call a function in an Azure Function App configured with access restrictions from a Python notebook in my Databricks workspace. The Function App resource is in a different subscription from the Databricks work...

Update: The problem was fixed! The key was to set a VNet rule in the access restriction, granting access directly to the subnets used by Databricks. It seems that for Microsoft-to-Microsoft connections, the IP addresses are not used, so adding the IP ran...

- 2801 Views

- 0 replies

- 0 kudos

Azure DevOps repos access

I have a Databricks setup where users and their permissions are managed in Microsoft Azure using AD groups and then provisioned at the account level to Databricks via a provisioning connector. The code repositories are in Azure DevOps, where users a...

- 8415 Views

- 3 replies

- 2 kudos

How to bind a user-assigned managed identity to Databricks to access external resources?

Is there a way to bind a user-assigned managed identity to Databricks? We want to access some SQL databases and a Redis cache from our Spark code running on Databricks using a managed identity instead of service principals and basic authentication. As of today, Da...

@Carpender Correcting my comment above: the Databricks-assigned managed identity is working and we are able to access, but as stated in the original question, we are looking for authorization using a user-assigned managed identity (UAMI). With a UAMI, we cannot...

- 6360 Views

- 1 replies

- 0 kudos

How to set up a service principal to assign account-level groups to workspaces using Terraform

Based on best practices, we have set up SCIM provisioning using Microsoft Entra ID to synchronize Entra ID groups to our Databricks account. All workspaces have identity federation enabled. However, how should workspace administrators assign account-l...

Have you tried giving the Manager role on the group to the service principal that is the workspace admin? Once you do this, you may be able to use the settings to... In workspace context, adding account-level group to a workspace in databricks_permission_assig...
