We have a Databricks job that behaves strangely.

When we pass the string 'output_path' to the saveAsTextFile function instead of the output_path variable, the data is saved to the following path:

s3://dev-databricks-hy1-rootbucket/nvirginiaprod/3219117805926709/output_path

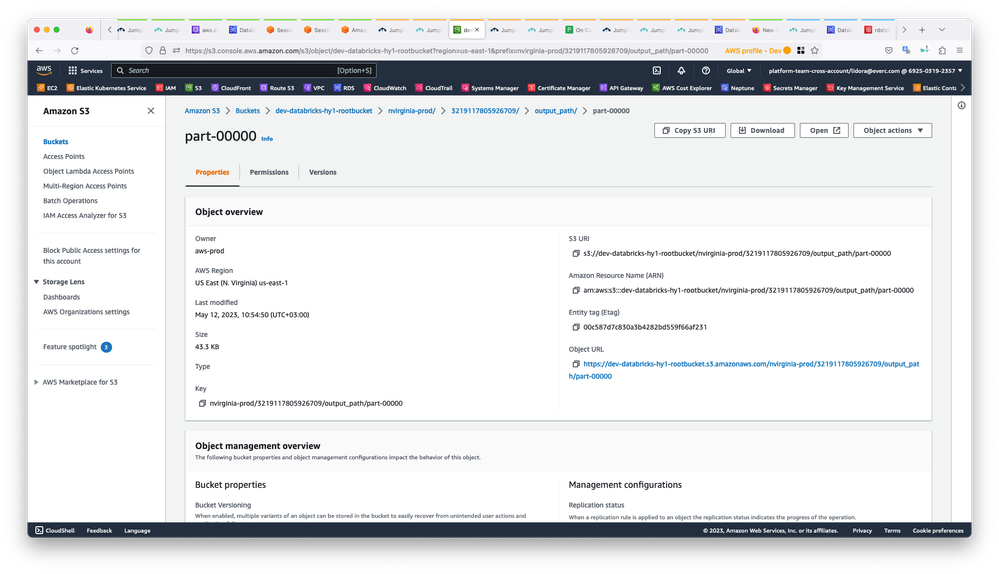
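To make the difference between the two calls concrete, here is a minimal sketch. It does not use Spark: `save_as_text_file` is a hypothetical stand-in for saveAsTextFile that only returns the path string it receives, and the bucket name is a made-up placeholder, not our real output location.

```python
def save_as_text_file(path: str) -> str:
    """Hypothetical stand-in for saveAsTextFile: returns the path it was given."""
    return path


# Placeholder value for illustration only; not our real bucket.
output_path = "s3://example-bucket/example/prefix"

# What the job actually did: the quoted name is passed as a literal string,
# so the target is the relative path "output_path".
literal_target = save_as_text_file('output_path')

# What was intended: the value held by the variable is passed.
variable_target = save_as_text_file(output_path)

print(literal_target)
print(variable_target)
```

In the first call the job receives a relative path rather than a full s3:// URI, which seems to be why the data ended up under the workspace root bucket shown above.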

The problem is that the objects saved under the above path are not owned by us: the object owner of each one is aws-prod.

So the question is: why is the object owner aws-prod and not our prod AWS account?

Thanks for the help.

Attached are the job's notebook and a screenshot from the S3 bucket.