Hi @utkarshamone,

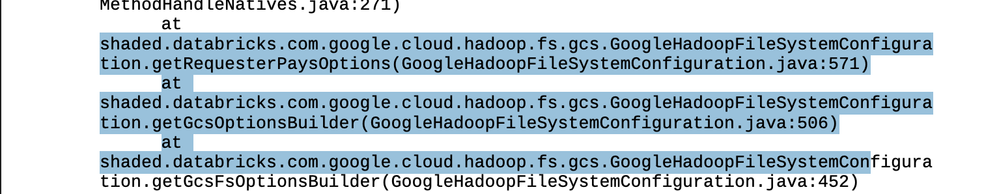

After looking into your error dump, it appears the driver is hanging while trying to initialise a DBFS mount backed by GCS. For example, the highlighted paths in the screenshot above only appear when Databricks is resolving a DBFS mount (or root) that points to a GCS bucket, not when you’re using Unity Catalog external locations or volumes.

You’ve moved the job to Unity Catalog + DBR 16.4 LTS with `data_security_mode = "USER_ISOLATION"` (Standard access mode), so these paths are a strong signal that the job (or its cluster/init scripts) still references a DBFS mount path, e.g., `dbfs:/mnt/...`, that points to your bucket.

Under UC + Standard access mode on recent runtimes, DBFS mounts are a legacy pattern and can cause driver startup problems or runtime errors, especially when mixed with UC-governed storage.

Here is what I’d suggest. First, search your code, job parameters, and init scripts for any references to DBFS mount points in this job. If you find any, make a note of them and migrate the data they point to over to Unity Catalog external locations or volumes. You can then update the job to use the UC paths instead of the DBFS mount points.
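As a starting point for that search, here is a minimal sketch in plain Python that scans text (or a local checkout of your job code and init scripts) for mount-style paths. The function names, the file suffix list, and the two path patterns (`dbfs:/mnt/` and `/dbfs/mnt/`) are my own assumptions for illustration, so adjust them to whatever your project actually uses.

```python
import re
from pathlib import Path

# Assumed patterns: the dbfs:/mnt/... URI form and the /dbfs/mnt/... local-path form.
MOUNT_PATTERN = re.compile(r"""(?:dbfs:/mnt/|/dbfs/mnt/)[^\s"')]*""")


def find_mount_references(text):
    """Return (line_number, matched_path) pairs for mount-style paths in text."""
    hits = []
    for lineno, line in enumerate(text.splitlines(), start=1):
        for match in MOUNT_PATTERN.finditer(line):
            hits.append((lineno, match.group(0)))
    return hits


def scan_directory(root, suffixes=(".py", ".sql", ".sh", ".json", ".yml", ".yaml")):
    """Scan files under root and return {file_path: [(line_number, path), ...]}."""
    results = {}
    for path in Path(root).rglob("*"):
        if path.is_file() and path.suffix in suffixes:
            hits = find_mount_references(path.read_text(errors="ignore"))
            if hits:
                results[str(path)] = hits
    return results
```

Running `scan_directory(".")` from the root of your repo should give you a list of files and line numbers to review. It will not catch paths that are built dynamically or passed in as job parameters at run time, so check those by hand as well.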

Before you make those changes, you can (if your setup allows it) temporarily switch the job to dedicated access mode as a test. If the job fails only in standard (USER_ISOLATION) mode and only when the mount is present, that confirms the mount as the culprit.
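If you define the job cluster as JSON or through the API, the temporary switch could look roughly like the sketch below. It assumes your cluster spec is a plain dict, that dedicated access mode is expressed as `data_security_mode = "SINGLE_USER"` together with `single_user_name`, and the helper name, user name, and `num_workers` value are placeholders, so please check the field names against your own job definition before relying on it.

```python
import copy


def with_dedicated_access(cluster_spec, user_name):
    """Return a copy of cluster_spec switched to dedicated access mode for a test run."""
    spec = copy.deepcopy(cluster_spec)  # leave the original spec untouched
    spec["data_security_mode"] = "SINGLE_USER"  # assumption: API value for dedicated mode
    spec["single_user_name"] = user_name  # the user the cluster is assigned to
    return spec


# Placeholder spec; only data_security_mode comes from this thread.
standard_spec = {"data_security_mode": "USER_ISOLATION", "num_workers": 2}
test_spec = with_dedicated_access(standard_spec, "someone@example.com")
```

Keeping the original spec unchanged makes it easy to revert to standard access mode once the test run is done.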

If this answer resolves your question, could you mark it as “Accept as Solution”? That helps other users quickly find the correct fix.

Regards,

Ashwin | Delivery Solution Architect @ Databricks

Helping you build and scale the Data Intelligence Platform.

***Opinions are my own***