Why We Used Two Bronze Tables Instead of One — And Why It Mattered

Post removed — reposting with corrections



Saving logs from an all-purpose cluster to a Volume or S3 is not consistent, because stderr, stdout, and log4j-active.log get overwritten when the cluster is restarted between minutes 01 and 59. Tested case: A job is configured to start every 20 minutes,...

Hi @ccsalt, this is a known limitation. Log rotation (renaming to log4j-YYYY-MM-DD-HH.log.gz) only happens on the hour boundary. The active log file log4j-active.log always has the same name and is overwritten if a cluster restart happens within one...
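To make the hour-boundary behaviour concrete, here is a minimal sketch of the naming pattern and of the check "did a rotation happen between cluster start and restart?". It is a simplified model of what the reply describes, not Databricks code: the function names are made up for illustration, and which hour ends up stamped in the rotated file name is an assumption the caller supplies.

```python
from datetime import datetime


def rotated_log_name(hour_stamp: datetime) -> str:
    """Format a name following the log4j-YYYY-MM-DD-HH.log.gz pattern.

    Which hour is stamped into the name is not stated in the thread,
    so the caller passes in whatever timestamp applies.
    """
    return hour_stamp.strftime("log4j-%Y-%m-%d-%H.log.gz")


def rotation_between(started: datetime, restarted: datetime) -> bool:
    """Return True if at least one hour boundary lies between the two times.

    Simplified model (assumption): if no hour boundary is crossed, the
    active log was never renamed, so a restart overwrites it.
    """
    def floor_hour(t: datetime) -> datetime:
        return t.replace(minute=0, second=0, microsecond=0)

    return floor_hour(restarted) > floor_hour(started)


# A job that restarts the cluster every 20 minutes:
same_hour = rotation_between(datetime(2024, 3, 5, 10, 5), datetime(2024, 3, 5, 10, 25))
next_hour = rotation_between(datetime(2024, 3, 5, 10, 45), datetime(2024, 3, 5, 11, 5))
print(same_hour, next_hour)
```

Under this model, only restarts that straddle an hour boundary leave a rotated file behind, which matches the inconsistency reported in the question.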

Hi everyone, I'm using Azure Databricks with a customer who has a SQL Server database federated in Unity Catalog. It seems that, while converting some date functions to the SQL Server dialect, Databricks uses the function "extract", which is not re...

Hi @Alessio_F, this happens because in Databricks SQL both the year and month functions are just aliases for the following patterns:
- extract(YEAR FROM expr)
- extract(MONTH FROM expr)

When Databricks pushes a predicate or expression down to the remote SQL ...
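The alias relationship can be illustrated with a small text rewrite that turns the extract(...) form back into the function form, which SQL Server does provide as YEAR() and MONTH(). This is only a sketch for simple, non-nested expressions; the helper name is hypothetical and it does not reflect how Databricks performs pushdown.

```python
import re

# Matches extract(YEAR FROM expr) / extract(MONTH FROM expr), case-insensitively.
# Assumption: expr contains no parentheses (no nested function calls).
_EXTRACT = re.compile(
    r"extract\s*\(\s*(year|month)\s+from\s+([^()]+?)\s*\)",
    re.IGNORECASE,
)


def to_function_form(sql: str) -> str:
    """Rewrite extract(YEAR|MONTH FROM expr) as YEAR(expr) / MONTH(expr)."""
    return _EXTRACT.sub(lambda m: f"{m.group(1).upper()}({m.group(2)})", sql)


rewritten = to_function_form("SELECT extract(YEAR FROM order_date) FROM orders")
print(rewritten)
```

A rewrite like this is mainly useful for inspecting or hand-adjusting a query that is sent to the remote database directly.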

Hi everyone, I am looking to create a notebook that, when executed by a user, performs the following actions:
- Retrieves all Databricks jobs created by the current user
- Checks whether a specific role already has permissions on those jobs
- Automatically add...

Hi @Raj_DB, you can use the Databricks SDK to retrieve all jobs and filter them down to only those where the owner is the current user. Something like this:
from databricks.sdk import WorkspaceClient
w = WorkspaceClient()
# Specify the user email/username you want...
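A minimal sketch of the filtering step, kept separate from the workspace call so it can be tried without a cluster. It assumes "created by the current user" can be read from each job's creator_user_name field, which may differ from the job's current owner; the helper names and sample data are hypothetical.

```python
from types import SimpleNamespace
from typing import Any, Iterable, List


def jobs_created_by(jobs: Iterable[Any], user_name: str) -> List[Any]:
    """Keep only jobs whose creator_user_name equals user_name."""
    return [j for j in jobs if getattr(j, "creator_user_name", None) == user_name]


def list_my_jobs() -> List[Any]:
    """Fetch jobs from the workspace and filter them (needs databricks-sdk and auth)."""
    from databricks.sdk import WorkspaceClient  # imported lazily on purpose

    w = WorkspaceClient()
    me = w.current_user.me().user_name
    return jobs_created_by(w.jobs.list(), me)


# Offline illustration with stand-in objects instead of real job records.
sample_jobs = [
    SimpleNamespace(job_id=1, creator_user_name="alice@example.com"),
    SimpleNamespace(job_id=2, creator_user_name="bob@example.com"),
    SimpleNamespace(job_id=3, creator_user_name="alice@example.com"),
]
mine = jobs_created_by(sample_jobs, "alice@example.com")
print([j.job_id for j in mine])
```

Checking and granting permissions on the resulting jobs would be a further step that this sketch does not cover.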

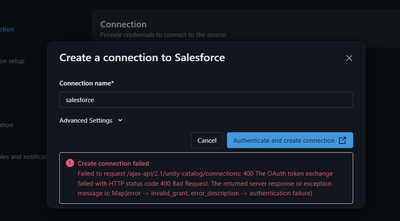

Hi all, I have been trying to set up Salesforce using Lakeflow Connect and followed the instructions in the docs: https://docs.databricks.com/aws/en/connect/managed-ingestion#sfdc However, I run into an invalid_grant error. However, login history on Salesforce sh...

Hi Vedanth, the invalid_grant error usually occurs due to authentication or OAuth configuration issues between Salesforce and Databricks Lakeflow Connect. Could you please verify the following points: Ensure the Salesforce user account is not locked and...

Environment
- Cloud: AWS
- Compute: Serverless
- Table: a_big_table
- Table type: Streaming Table (SDP pipeline)
- Table size: 641 GB, 6,210 files
- Liquid Clustering columns: [event_time, integer_userId]
- delta.dataSkippingStatsColumns: event_time, integer_userId, integ...

Hello @aonurdemir, I looked into your query and have compiled some helpful tips: I don't have direct access to your workspace internals, so I can't prove this definitively. But what you're seeing is consistent with how Delta's stats-based data skipp...

In Azure Databricks Unity Catalog, I have two storage credentials that use the same connector_id / Azure Databricks Access Connector. One credential works and can access ADLS Gen2 successfully, but the other fails with: Failed to access cloud storag...

Hi @kcyugesh, how are you getting on so far? It might also be worth checking the privileges associated with each credential to see if they differ. Secondly, check the credential type on each credential, as a managed identity in comparison to a service...

Hi everyone, I am facing a data consistency issue in my Databricks incremental pipeline where records are being skipped because of a time gap between when a record is processed and when the physical file is finalized in Azure Blob Storage (ABFS). Our A...

You can handle it as below. Fix the Bronze Write: the 20+ minute commit gap suggests metadata contention or small-file issues in the bronze Delta tables. You can optimize tables manually or enable Optimized Write and Auto Optimize if feasible. This...

This is a small deficiency, but a fix would be nice to have. For a long time now, the Sample Data previewer in the Unity Catalog explorer has been unable to show tables that contain a certain kind of column. Instead of showing sample rows of the tabl...

Yes, my vector space is commonly of dimension 4000 or 8000. I don't write any dense vectors to table; can't speak to what happens previewing that type. Thanks for taking up the issue!

I have a batch job that runs thousands of Deep Clone commands; it uses a ForEach task to run multiple Deep Clones in parallel. It was taking a very long time and I realized that the Driver was the main culprit since it was using up all of its memory ...

What you’re seeing (a monotonic / stair‑step climb in driver RAM over thousands of DEEP CLONE statements) is a very common pattern when the driver is not “holding data”, but holding metadata, query artifacts, and per‑command state that accumulates faster ...

The Auto CDC flow works when CDF is enabled on the source table, but fails when CDF is disabled. The source table is updated via INSERT OVERWRITE. Is CDF mandatory?

Yes, @kevinzhang29. For Auto CDC with a Delta source table, a change data feed (CDF) (i.e., a CDC feed) is required. AUTO CDC is explicitly designed to read from a CDC/change feed source such as Delta CDF, not from plain snapshots. When you don’t ha...

Hi everyone, I’m working on a use case where I need to retain 30 days of historical data in a Delta table and use it to build trend reports. I’m looking for the best approach to reliably maintain this historical data while also making it suitable for r...

Hey @Raj_DB, the TL;DR is that time travel is great for short-term ops and debugging, but brittle as your primary reporting history, and its cost profile is harder to control and reason about than a purpose-built history table. Docs 1,2 explicitly say De...

Title: Spark Structured Streaming – Airport Counts by Country

This notebook demonstrates how to set up a Spark Structured Streaming job in Databricks Community Edition. It reads new CSV files from a Unity Catalog volume, processes them to count airport...

Hi experts, I have around 100 tables in the bronze layer (DLT pipeline). Based on some logic, we have created around 20 silver layer tables. How can we run a specific pipeline in the silver layer whenever an update happens in the bronz...

Thanks @anuj_lathi for the detailed explanation. This helps a lot.

Hi everyone, I’m trying to create an Azure Key Vault-backed secret scope in a Databricks Premium workspace, but I keep getting this error: Fetch request failed due expired user session

Setup details:
- Databricks workspace: Premium
- Azure Key Vault: Owner p...

Hi @AnandGNR, try the following. Go to your Key Vault, then in Firewalls and virtual networks set: "Allow trusted Microsoft services to bypass this firewall."