Resolved! PySpark: Writing Parquet Files to the Azure Blob Storage Container

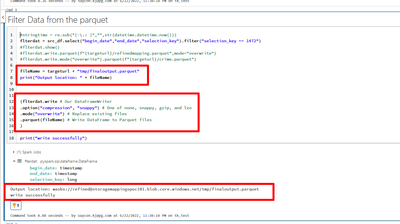

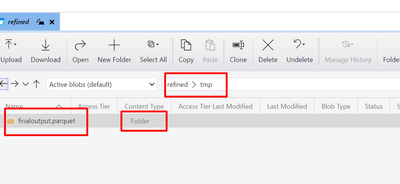

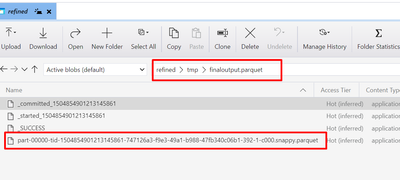

I am currently having trouble writing a Parquet file to the storage container. My code runs, but whenever the DataFrame writer writes the Parquet output to blob storage, instead of the parquet file type, it is created as a f...

- 31858 Views

- 4 replies

- 4 kudos

Latest Reply

This is my approach: `from databricks.sdk.runtime import dbutils` `from pyspark.sql import DataFrame` `output_base_url = "abfss://..."` `def write_single_parquet_file(df: DataFrame, filename: str):` `print(f"Writing '{filename}.parquet' to ABFS")` ...
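The rest of the reply is truncated, so the following is only a minimal sketch of one common way to end up with a single named Parquet file, not the poster's actual code. Spark's DataFrame writer produces a directory of `part-*` files plus marker files such as `_SUCCESS`. A frequent workaround is to write to a temporary directory, move the lone part file to the desired name, and delete the temporary directory. The helper name `promote_single_part_file` is hypothetical, and the sketch assumes the output directory is reachable as an ordinary filesystem path (for example through a mount), not directly through an `abfss://` URL.

```python
import shutil
from pathlib import Path


def promote_single_part_file(spark_output_dir: str, target_file: str) -> Path:
    """Move the lone part-*.parquet file out of a Spark output directory.

    Hypothetical helper: assumes the directory was written with a single
    partition and is reachable as a regular filesystem path.
    """
    out_dir = Path(spark_output_dir)
    target = Path(target_file)

    # The target must live outside the directory that is deleted below.
    if out_dir.resolve() in target.resolve().parents:
        raise ValueError("target_file must not be inside spark_output_dir")

    # Spark names data files part-<n>-<uuid>[.<codec>].parquet; marker files
    # such as _SUCCESS do not match this pattern.
    part_files = sorted(out_dir.glob("part-*.parquet"))
    if len(part_files) != 1:
        raise ValueError(
            f"expected exactly one part file in {out_dir}, found {len(part_files)}"
        )

    target.parent.mkdir(parents=True, exist_ok=True)
    shutil.move(str(part_files[0]), str(target))

    # Remove the now-redundant output directory and its marker files.
    shutil.rmtree(out_dir)
    return target
```

On Databricks, the temporary directory could first be produced with something like `df.coalesce(1).write.mode("overwrite").parquet(tmp_dir)`, so that only one part file exists. For paths addressed by `abfss://` URLs, the same list, move, and delete steps would presumably go through `dbutils.fs.ls`, `dbutils.fs.mv`, and `dbutils.fs.rm` instead of `pathlib` and `shutil`.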

- 4 kudos