Importing python module

09-13-2022 07:46 AM

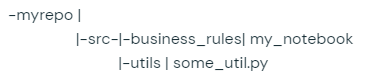

I'm not sure how a simple thing like importing a module in python can be so broken in such a product. First, I was able to make it work using the following:

import sys

sys.path.append("/Workspace/Repos/Github Repo/sparkling-to-databricks/src")

from utils.some_util import *

I was able to use the imported function. But then I restarted the cluster and this stopped working, even though the path is still in sys.path.

I also tried the following:

spark.sparkContext.addPyFile("/Workspace/Repos/Github Repo/my-repo/src/utils/some_util.py")

This did not work either. Can someone please tell me what I'm doing wrong here and suggest a solution? Thanks.

4 REPLIES

Anonymous

09-13-2022 07:58 AM

The docs should help: https://docs.databricks.com/libraries/index.html

Keep in mind that paths used by Spark and %fs refer to the distributed file system (DBFS), while paths in Python are local to the driver. If you run %fs ls / you'll get a different result than if you run %sh ls /
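Since Python resolves imports against the driver's local filesystem, one way to debug a failing import is to check what the Python process itself can see. The following is only a minimal sketch: `describe_import_dir` is a hypothetical helper name, not part of Databricks or the standard library, and it assumes the module is a plain .py file or a package directory.

```python
import os
import sys


def describe_import_dir(directory, module_name):
    """Report whether `directory` looks usable for importing `module_name`.

    Hypothetical diagnostic helper: it only inspects the local filesystem
    as seen by this Python process (on Databricks, that is the driver).
    """
    module_file = os.path.join(directory, module_name + ".py")
    package_dir = os.path.join(directory, module_name)
    return {
        # Does the directory exist from Python's point of view?
        "dir_exists": os.path.isdir(directory),
        # Has the directory been added to the import search path?
        "on_sys_path": directory in sys.path,
        # Is there a module file or a package directory with that name?
        "module_found": os.path.isfile(module_file) or os.path.isdir(package_dir),
    }
```

For the question above, calling it with the appended src directory and "utils" would show whether the failure comes from a path that is not visible to Python or from something else, for example a module that has not been picked up again after the restart.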

09-13-2022 08:09 AM

So there's no other way than creating a library?

09-13-2022 09:36 PM

I too wonder the same thing. How can importing a python module be so difficult and not even documented lol.

No need for libraries..

Here's what worked for me..

Step 1: Upload the module: open a notebook >> File >> Upload Data >> drag and drop your module

Step 2: Click Next

Step 3: Copy the Databricks path for your module. (This path is displayed in the pop-up that appears right after you click Next.)

For me, if my module is named test_module, the path looks like

- dbfs:/FileStore/shared_uploads/krishz@company.com/test_module.py

Step 4: Append the above to sys.path, with two changes

- Change 1: Change dbfs:/ to /dbfs/

- Change 2: Remove your module name from the path 🙂

Now my path to append looks like

- /dbfs/FileStore/shared_uploads/krishz@company.com

Step 5:

import sys

sys.path.append("/dbfs/FileStore/shared_uploads/krishz@company.com")

Step 6: Now you can import simply by using the following:

import test_module

After this, you can write code as if you had imported test_module in your usual Jupyter notebook. No need to worry about any Databricks intricacies.

Lemme know if you are unclear about any step. Honestly speaking, I don't know why you were recommended to use libraries for such a simple request.
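If you do this often, the two path changes and the import can be wrapped in a small helper. This is just a sketch of the steps in this reply, not an official Databricks API: `dbfs_uri_to_import_dir` and `import_uploaded_module` are made-up names, and it assumes the upload is a single .py file reachable under /dbfs on the driver.

```python
import importlib
import posixpath
import sys


def dbfs_uri_to_import_dir(dbfs_uri):
    """Split 'dbfs:/some/dir/mod.py' into ('/dbfs/some/dir', 'mod')."""
    prefix = "dbfs:/"
    if not dbfs_uri.startswith(prefix):
        raise ValueError("expected a path starting with 'dbfs:/'")
    # Change 1: dbfs:/ becomes /dbfs/
    local_path = "/dbfs/" + dbfs_uri[len(prefix):]
    # Change 2: drop the module file name, keep only its directory
    directory, filename = posixpath.split(local_path)
    module_name, extension = posixpath.splitext(filename)
    if extension != ".py":
        raise ValueError("expected a path ending in '.py'")
    return directory, module_name


def import_uploaded_module(dbfs_uri):
    """Append the module's directory to sys.path and import the module."""
    directory, module_name = dbfs_uri_to_import_dir(dbfs_uri)
    if directory not in sys.path:
        sys.path.append(directory)
    # Make sure the import system notices files added after startup.
    importlib.invalidate_caches()
    return importlib.import_module(module_name)
```

With the path from Step 3, `import_uploaded_module("dbfs:/FileStore/shared_uploads/krishz@company.com/test_module.py")` would do the same thing as Steps 4 to 6 and return the module object.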

09-13-2022 10:00 PM
