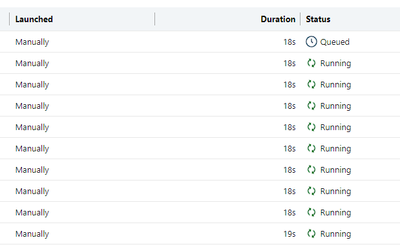

I have a process that runs the same notebook with varying parameters, so I set it up as a job with queueing and concurrency enabled. When the first executions are triggered, the job runs behave as expected: with max concurrency set to 10, the first 10 executions start running concurrently and any further executions are queued.
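For reference, a setup like this is usually expressed through the job's `max_concurrent_runs` and `queue` settings. The sketch below builds those payloads as plain dictionaries using Jobs API 2.1 field names. It is trimmed for illustration: the notebook path, job ID, and the `country` parameter are hypothetical placeholders, and compute settings are omitted.

```python
import json

# Hypothetical placeholder: not taken from the original post.
NOTEBOOK_PATH = "/Workspace/path/to/notebook"


def build_job_settings(max_concurrent_runs: int = 10) -> dict:
    """Job settings with queueing and concurrency enabled (compute config omitted)."""
    return {
        "name": "parameterized-notebook-job",
        # Upper bound on runs of this job that may be active at the same time.
        "max_concurrent_runs": max_concurrent_runs,
        # With queueing enabled, runs triggered while all slots are busy are
        # queued instead of being skipped.
        "queue": {"enabled": True},
        "tasks": [
            {
                "task_key": "run_notebook",
                "notebook_task": {"notebook_path": NOTEBOOK_PATH},
            }
        ],
    }


def build_run_now_payload(job_id: int, params: dict) -> dict:
    """Body for a run-now request that overrides notebook parameters for one run."""
    return {"job_id": job_id, "notebook_params": params}


settings = build_job_settings()
# Hypothetical job ID and parameter values, one run per parameter set.
payloads = [build_run_now_payload(123, {"country": c}) for c in ["PT", "ES", "FR"]]
print(json.dumps(settings, indent=2))
```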

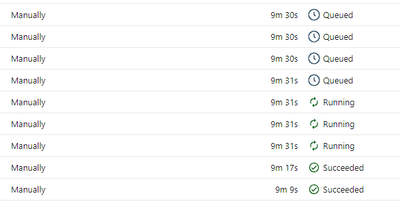

After the current concurrent runs finish, I expect the queued runs to start as slots free up, so that up to 10 runs stay active. Instead, only a few queued runs execute concurrently after the first ones finish:

Most of the time, only a couple of runs execute concurrently after the first batch.
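To make the expected behavior concrete, here is a minimal simulation of FIFO queueing with a fixed number of slots. It assumes all runs are submitted at time 0 and that a queued run starts the moment any slot frees up; it models the behavior described above, not the actual Databricks scheduler, and the durations are hypothetical. Comparing its output with the real run start and end times could show whether slots are being left idle.

```python
import heapq


def expected_schedule(durations, max_concurrent=10):
    """Return (start, end) per run, in submission order, under FIFO queueing.

    Assumes every run is submitted at t=0 and a queued run starts as soon as
    the earliest-finishing active run frees its slot.
    """
    running = []  # min-heap of end times for runs currently holding a slot
    schedule = []
    for duration in durations:
        if len(running) < max_concurrent:
            start = 0.0  # a free slot is available immediately
        else:
            start = heapq.heappop(running)  # wait for the earliest slot to free up
        end = start + duration
        heapq.heappush(running, end)
        schedule.append((start, end))
    return schedule


def active_at(schedule, t):
    """Number of runs active at time t (start inclusive, end exclusive)."""
    return sum(1 for start, end in schedule if start <= t < end)


# 25 hypothetical runs of 5 minutes each, max concurrency 10.
schedule = expected_schedule([5.0] * 25, max_concurrent=10)
print(active_at(schedule, 7.0))   # 10: the second batch fills every slot
print(active_at(schedule, 12.0))  # 5: only the last 5 runs remain
```

Under these assumptions every freed slot is refilled immediately, so concurrency only drops below 10 once the queue is empty.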

Is this a bug in the queueing mechanism, or am I missing something?