Hi everyone,

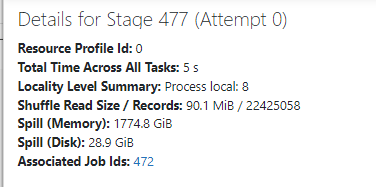

I'm trying to merge two Delta tables that each contain more than 200 million rows. Both tables are properly optimized, but the job takes a very long time to run, records huge memory spills (1 TB to 3 TB), and is still running. I'm using 5 executor nodes with the Standard_DS5_v2 configuration. Can someone help me optimize the code?
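As a rough sanity check on the numbers above: assuming each Standard_DS5_v2 node has 16 vCPUs and 56 GiB of RAM, 5 nodes give about 280 GiB of memory in total, far less than the 1 TB to 3 TB being spilled. The sketch below uses a common rule of thumb (roughly 200 MiB per shuffle partition, an assumption rather than a fixed rule) to estimate a `spark.sql.shuffle.partitions` value from an estimated shuffle size. The helper names are hypothetical and this is a heuristic, not a guaranteed fix.

```python
import math

# Assumption: Azure Standard_DS5_v2 = 16 vCPUs and 56 GiB RAM per node.
CORES_PER_NODE = 16
MEM_GIB_PER_NODE = 56


def cluster_memory_gib(executors: int) -> int:
    """Total RAM across executor nodes (ignores OS/Spark overhead)."""
    return executors * MEM_GIB_PER_NODE


def suggest_shuffle_partitions(shuffle_gib: float, executors: int,
                               target_mib: int = 200) -> int:
    """Hypothetical heuristic: aim for ~target_mib per shuffle partition,
    rounded up to a whole number of 'waves' over all executor cores."""
    total_cores = executors * CORES_PER_NODE
    raw = math.ceil(shuffle_gib * 1024 / target_mib)
    waves = max(math.ceil(raw / total_cores), 1)
    return waves * total_cores


if __name__ == "__main__":
    print(cluster_memory_gib(5))                # 280
    print(suggest_shuffle_partitions(1024, 5))  # 5280 for ~1 TiB of shuffle
```

The estimate could then be applied with `spark.conf.set("spark.sql.shuffle.partitions", n)` before running the MERGE. Rounding up to a multiple of the total core count keeps every core busy in each wave of tasks.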

Any help would be greatly appreciated.