Data Engineering

Join discussions on data engineering best practices, architectures, and optimization strategies within the Databricks Community. Exchange insights and solutions with fellow data engineers.

Pyspark Dataframes orderby only orders within partition when having multiple worker

03-21-2024 02:46 AM

I came across a PySpark issue when sorting a DataFrame by a column. It seems that PySpark only orders the data within partitions when the cluster has multiple workers, even though it should sort globally.

    from pyspark.sql import functions as F
    import matplotlib.pyplot as plt
    import numpy as np

    num_rows = 1000000
    num_cols = 300

    # Create a DataFrame with 1 million rows and 300 columns of random data
    # (`spark` is the SparkSession that the notebook provides)
    columns = ["col_" + str(i) for i in range(num_cols)]
    data = spark.range(0, num_rows)

    # Create the id column first
    data = data.repartition(1)  # we need this here so monotonically_increasing_id gives all numbers sequentially
    data = data.withColumn("test1", F.monotonically_increasing_id())
    data = data.orderBy(F.rand())
    data = data.repartition(10)
    for col_name in columns:
        data = data.withColumn(col_name, F.rand())

    # Default sorting, which leads to wrong sorting
    data = data.orderBy("test1", ascending=False)
    test2 = data.select(F.collect_list("test1")).first()[0]
    plt.plot(test2, color="red")

    # Test sorting after repartitioning to 1 partition
    data2 = data.repartition(1)
    data2 = data2.orderBy("test1", ascending=False)
    test3 = data2.select(F.collect_list("test1")).first()[0]
    plt.plot(test3, color="red")

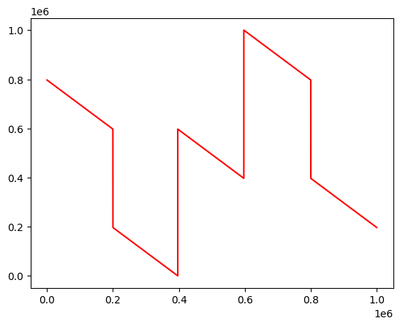

The first plot looks like this:

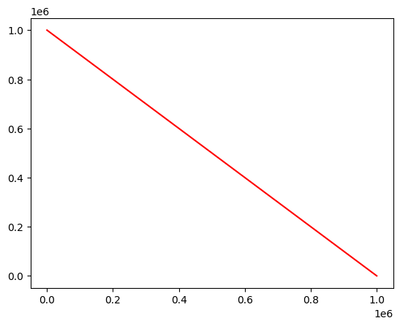

The second plot looks like this:

The second plot (after repartitioning to 1 partition) shows the correct sorting.

Is this a known issue? If so, is it a PySpark issue or a Databricks issue? With only one worker, both plots are correct.
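Reading sort order off a plot can be ambiguous, so a numeric check on the collected values may help. The following is a minimal sketch in plain Python; the helper names `is_descending` and `count_descending_runs` and the two sample lists are hypothetical stand-ins for `test2` and `test3`, not part of the original post.

```python
from typing import Sequence


def is_descending(values: Sequence[int]) -> bool:
    """Return True if every value is >= the one that follows it."""
    return all(a >= b for a, b in zip(values, values[1:]))


def count_descending_runs(values: Sequence[int]) -> int:
    """Count maximal descending runs; 1 means a single global descending order."""
    if not values:
        return 0
    # Every position where the next value is larger starts a new run.
    breaks = sum(1 for a, b in zip(values, values[1:]) if a < b)
    return breaks + 1


# Hypothetical stand-ins for test2 and test3 from the post.
globally_sorted = [9, 8, 7, 6, 5, 4, 3, 2, 1, 0]
sorted_in_chunks = [3, 2, 1, 0, 9, 8, 7, 6, 5, 4]

print(is_descending(globally_sorted), count_descending_runs(globally_sorted))
print(is_descending(sorted_in_chunks), count_descending_runs(sorted_in_chunks))
```

Applied to the post, a result greater than 1 from `count_descending_runs(test2)` would confirm that the list is not in one global order, and a small value would suggest a few sorted chunks concatenated in the wrong sequence. As a cross-check, the values could also be read with `[row["test1"] for row in data.select("test1").collect()]`, because the PySpark documentation describes `collect_list` as non-deterministic: the order of the collected results depends on the order of the rows, which may be non-deterministic after a shuffle.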

7 REPLIES

03-21-2024 05:23 AM

Thanks for your quick answer. Where can I find the information that `orderBy()` or `sort()` only sorts within each partition? The official documentation does not mention this.

03-21-2024 05:52 AM

Sorry to ask again, but I am a bit confused by this.

I thought that PySpark's `orderBy()` and `sort()` do a shuffle before sorting for exactly this reason. There is another command, `sortWithinPartitions()`, that skips the shuffle and sorts partition-wise. I am actually surprised that `sort()` also only works partition-wise. But then: why does it work on a single-node cluster with a partitioned DataFrame?

01-16-2025 08:01 PM

The orderBy function in PySpark is expected to perform a global sort, which involves shuffling the data across partitions to ensure that the entire DataFrame is sorted. This is different from sortWithinPartitions, which only sorts data within each partition.

Let me try your program and understand the results further.
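To illustrate the distinction described above, here is a simplified plain-Python model, not Spark's actual implementation. It assumes a global sort can be thought of as a range-partitioning shuffle followed by a sort of each range, whereas a partition-wise sort leaves every row in its original partition. The function names and the evenly spaced range boundaries are assumptions made for the sketch; Spark picks its boundaries by sampling the data.

```python
from typing import List


def sort_within_partitions(partitions: List[List[int]]) -> List[List[int]]:
    """Sort each partition on its own (descending); rows never change partition."""
    return [sorted(p, reverse=True) for p in partitions]


def global_sort(partitions: List[List[int]], num_out: int) -> List[List[int]]:
    """Simplified global sort: range-partition the rows, then sort each range."""
    rows = [x for p in partitions for x in p]
    out: List[List[int]] = [[] for _ in range(num_out)]
    if not rows:
        return out
    lo, hi = min(rows), max(rows)
    width = (hi - lo) // num_out + 1  # evenly spaced boundaries (an assumption)
    for x in rows:
        # Output partition 0 receives the largest values, so reading the
        # partitions in order yields a descending sequence.
        out[num_out - 1 - (x - lo) // width].append(x)
    return [sorted(p, reverse=True) for p in out]


def flatten(partitions: List[List[int]]) -> List[int]:
    """Concatenate partitions in partition order."""
    return [x for p in partitions for x in p]


values = list(range(20))
parts = [values[i::4] for i in range(4)]  # 4 partitions with interleaved values

local = flatten(sort_within_partitions(parts))
glob = flatten(global_sort(parts, num_out=4))
print(local)
print(glob)
```

In this model only `glob` comes out in one descending sequence; `local` consists of four descending runs, which is the kind of pattern the first plot in the question suggests.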

01-17-2025 03:11 AM

I see the same results for `orderBy` both before and after the repartition.

01-17-2025 03:39 AM

Did you try with a multi-worker cluster? Which runtime and which Spark version did you use?

Maybe it would be good to test with Runtime 13.3; then we would know whether it was fixed in the meantime.

I found this on StackOverflow. Seems someone had a similar problem: https://stackoverflow.com/questions/55860388/pyspark-dataframe-orderby-partition-level-or-overall

There is also a very old Hive bug ticket that was never resolved. Not sure if it could be connected:

01-26-2025 03:26 AM

Okay, I do see a difference on 13.3, but not on 15.4.

Would you be able to use a higher DBR for your tests?

This suggests that the issue is resolved in the higher DBR, possibly as a side effect of some improvement.

01-26-2025 10:28 PM

Hi @dbx-user7354 ,

`orderBy()` should perform a global sort, as shown in the second plot. In your case it appears to sort the data only within the partitions, which is the behavior of `sortWithinPartitions()`. Please try again with the latest DBR and check the result. I think the problem is on the Databricks Runtime side.

If this helps, mark it as the solution.

Regards,

Avinash N
