Spark CSV file read option to read blank/empty value from file as empty value only instead Null

04-18-2024 02:22 AM - edited 04-18-2024 02:25 AM

Hi,

I am trying to read a file that has blank values in some columns. Spark converts blank values to null when reading. How can I read blank/empty values as empty values instead? I have tried DBR 13.2 and 14.3.

I have tried every approach I could find, but none of them works:

display(spark.read.option("emptyValue", "").csv('/FileStore/tables/test2.csv',header=True,inferSchema=True))

display(spark.read.option("emptyValue","None").csv('/FileStore/tables/test2.csv',header=True,inferSchema=True))

spark.read.option("nullValue", "None").csv('/FileStore/tables/test2.csv',header=True,inferSchema=False)

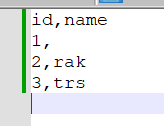

Sample file below as input csv

7 REPLIES

04-18-2024 03:06 AM

May I ask why you do not want null? It is THE way to indicate a value is missing (and gives you filtering possibilities using isNull/isNotNull).

04-18-2024 03:48 AM

Hi @-werners-, the user wants the data in the landing table exactly as it is in the file. They also have values like None in the data, and the next layer may have CASE WHEN logic that depends on whether a value is blank or null.
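To make the downstream requirement concrete, here is a minimal sketch of such a CASE WHEN in the next layer. The helper names, the output labels, and the column name passed in are all hypothetical; `df` is assumed to be a DataFrame in which blank and null values have already been kept apart.

```python
def blank_vs_null_expr(column, alias=None):
    """Build a Spark SQL CASE WHEN that labels a column as null, blank, or value.

    `column` and `alias` are hypothetical names; backticks quote the identifiers.
    """
    alias = alias or f"{column}_state"
    return (
        f"CASE WHEN `{column}` IS NULL THEN 'null' "
        f"WHEN `{column}` = '' THEN 'blank' "
        f"ELSE 'value' END AS `{alias}`"
    )


def tag_blank_vs_null(df, column):
    """Return `df` with one extra column holding the label for `column`."""
    # selectExpr accepts SQL expression strings, so no extra imports are needed.
    return df.selectExpr("*", blank_vs_null_expr(column))
```

This only gives a useful answer if the read step really preserves the difference; if everything arrives as null, the 'blank' branch never fires.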

04-18-2024 03:51 AM

.option("nullValue", "") should do the trick.

04-18-2024 07:16 AM

.option("nullValue", "")

Empty strings are interpreted as null values by default. If you set nullValue to anything other than "", such as "null" or "none", empty strings will be read as empty strings and no longer as null values.

Please check:

dataframe - Read spark csv with empty values without converting to null - Stack Overflow

04-18-2024 07:32 AM - edited 04-18-2024 07:34 AM

Please don't quote Stack Overflow; those answers were tested on old Spark versions. Have you tried this yourself to verify whether it really works in Spark 3?

04-18-2024 08:40 AM

AFAIK .option("nullValue", "") should do the trick. But I tested it myself on your example and indeed it does not work.

Going to do some checking...

04-24-2024 12:43 AM

OK, after some tests:

The trick is to surround the text in your CSV with quotes. That way Spark can actually tell the difference between a missing value and an empty value. Missing values are null and can only be converted to something else explicitly (for example with coalesce).
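A rough sketch of that explicit conversion, done after the read. It uses `DataFrame.fillna` in place of `coalesce` (a substitution made here for brevity; with a string value it only affects string columns). The helper name and the `columns` argument are hypothetical, and `df` is whatever DataFrame the CSV read returned.

```python
def nulls_to_empty(df, columns=None):
    """Replace nulls with "" in string columns of an already-loaded DataFrame.

    `columns` is an optional list of column names (hypothetical here);
    None means every string column is considered.
    """
    # fillna with a string value leaves non-string columns untouched.
    return df.fillna("", subset=columns)


# Usage, assuming a notebook-provided `spark` session:
# df = nulls_to_empty(spark.read.csv('/FileStore/tables/test2.csv', header=True))
```

Note that this erases the difference between null and empty, so it only helps when that difference is not needed downstream.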

When a column contains '', nullValue = "''" will create an empty value and not null.

The same goes for emptyValue if you want.
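A minimal sketch for reproducing the quoting idea. The sample rows, column names, and helper names are made up for illustration; `spark` is assumed to be an existing SparkSession (such as the one a notebook provides), and "None" is the marker tried in the original question. Whether the quoted field comes back as an empty string may depend on the Spark/DBR version, so treat this as something to run and inspect, not as a guaranteed result.

```python
import os
import tempfile

# Hypothetical sample: row 1 has a quoted empty field, row 2 has a missing field.
SAMPLE_CSV = 'id,name,city\n1,"",Pune\n2,,Delhi\n'


def write_sample(path):
    """Write the sample CSV so the quoted/unquoted difference is on disk."""
    with open(path, "w", newline="") as f:
        f.write(SAMPLE_CSV)
    return path


def read_keeping_quoted_empties(spark, path):
    """Read with a non-empty nullValue so "" and a missing field can differ."""
    return (
        spark.read
        .option("header", True)
        .option("nullValue", "None")  # marker to treat as null
        .option("emptyValue", "")     # what a quoted empty field should become
        .csv(path)
    )


# Usage (the path must be one that Spark can read):
# path = write_sample(os.path.join(tempfile.mkdtemp(), "test2.csv"))
# read_keeping_quoted_empties(spark, path).show()
```

On Databricks the file would need to sit somewhere Spark can see, for example the /FileStore/tables/ location used in the question.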

Not sure if it is workable for you though.
