
Transfer of Jobs/ETL Pipelines/Workflows/Works...


01-27-2026 10:11 PM

We need to transfer jobs, ETL pipelines, workflows, and workspace notebooks from one Azure subscription to another. Exporting the notebooks and jobs manually is not feasible because we have hundreds of notebooks and workflows. Can anyone suggest a suitable approach?

Accepted solution (01-27-2026 11:57 PM): the Databricks Asset Bundles video reply further down the thread.

8 REPLIES


01-27-2026 11:02 PM

You can use Databricks Asset Bundles and Terraform.


01-27-2026 11:28 PM

@parvati_sharma8 Can you share some links that walk through the process step by step?


01-27-2026 11:57 PM

This video introduces Databricks Asset Bundles (DABs) as a solution to simplify and standardize CI/CD pipelines on Databricks. It highlights the challenges of existing tools, which were often complex, incomplete, or lacked native support. The video ...


01-28-2026 12:40 AM

Asset Bundles are definitely a great approach, but as an alternative, if all your resources are already in Git, you can simply sync them to a new subscription.
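For the Git route, one way to recreate the code in the new subscription's workspace is to create a Git folder (Repo) there that clones the same repository. A minimal sketch using only the Python standard library and the Databricks Repos REST endpoint `POST /api/2.0/repos` might look like the following. The host, token, repository URL, and target path are placeholders you would supply, and a private repository also needs Git credentials configured in the new workspace. This brings over notebooks and code only, not job definitions.

```python
import json
import urllib.request


def build_git_folder_request(host: str, token: str, git_url: str,
                             provider: str, path: str) -> urllib.request.Request:
    """Build a POST /api/2.0/repos request that clones `git_url` into `path`."""
    payload = {"url": git_url, "provider": provider, "path": path}
    return urllib.request.Request(
        url=host.rstrip("/") + "/api/2.0/repos",
        data=json.dumps(payload).encode("utf-8"),
        headers={
            "Authorization": f"Bearer {token}",  # personal access token for the NEW workspace
            "Content-Type": "application/json",
        },
        method="POST",
    )


def create_git_folder(host: str, token: str, git_url: str,
                      provider: str, path: str) -> dict:
    """Send the request and return the parsed JSON response (raises HTTPError on failure)."""
    request = build_git_folder_request(host, token, git_url, provider, path)
    with urllib.request.urlopen(request) as response:
        return json.loads(response.read().decode("utf-8"))
```

Usage would be something like `create_git_folder("https://<new-workspace-host>", token, "https://github.com/<org>/<repo>.git", "gitHub", "/Repos/<folder>/<repo>")`, where every angle-bracket value is a placeholder.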


01-28-2026 01:29 AM

@AJ270990 It has to be a combination of a Git repo and Asset Bundles.

With DABs, you keep your job and cluster definitions in Azure DevOps (ADO), and from ADO you deploy your code to the new subscription.

RG #Driving Business Outcomes with Data Intelligence
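A rough sketch of the DAB route, assuming the newer Databricks CLI is installed and a bundle folder already exists whose `databricks.yml` defines two targets, hypothetically named `old` (source workspace) and `new` (destination workspace). For each existing job, `databricks bundle generate job --existing-job-id <id>` writes a job definition into the bundle (per the CLI docs it also downloads the referenced notebooks; confirm with `databricks bundle generate job --help`), and `databricks bundle deploy` then creates the jobs in the destination workspace. The Python wrapper below only assembles and runs those commands; the job IDs are hypothetical.

```python
import subprocess
from typing import Iterable, List, Sequence


def generate_commands(job_ids: Iterable[int], source_target: str) -> List[List[str]]:
    """One `bundle generate job` call per existing job, read via the source target."""
    return [
        ["databricks", "bundle", "generate", "job",
         "--existing-job-id", str(job_id), "--target", source_target]
        for job_id in job_ids
    ]


def deploy_commands(dest_target: str) -> List[List[str]]:
    """Validate the bundle, then deploy it to the destination target."""
    return [
        ["databricks", "bundle", "validate", "--target", dest_target],
        ["databricks", "bundle", "deploy", "--target", dest_target],
    ]


def run(commands: Sequence[List[str]], bundle_root: str, dry_run: bool = True) -> None:
    """Print the commands (dry run) or execute them from the bundle root, stopping on the first failure."""
    for cmd in commands:
        if dry_run:
            print(" ".join(cmd))
        else:
            subprocess.run(cmd, cwd=bundle_root, check=True)


if __name__ == "__main__":
    # Hypothetical job IDs copied from the source workspace.
    job_ids = [101, 202]
    run(generate_commands(job_ids, "old") + deploy_commands("new"), bundle_root=".", dry_run=True)
```

Once the generated bundle folder is committed to Git, the same `validate` and `deploy` commands are what a CI pipeline would run, whether that is Azure DevOps or GitHub Actions.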


01-28-2026 05:36 PM

Thanks @Raman_Unifeye. In our case we don't have ADO, so would GitHub Actions work instead?


01-28-2026 06:01 PM

If you can't use DABs for any reason, the Terraform exporter utility would also be helpful.

More information:

https://www.databricks.com/blog/2022/12/20/reuse-existing-workflows-through-terraform.html

Portable Databricks: How to migrate Databricks from one cloud to another: https://medium.com/mphasis-datalytyx/portable-databricks-how-to-migrate-databricks-from-one-cloud-to...

Thank You

Pradeep Singh - https://www.linkedin.com/in/dbxdev



01-28-2026 06:59 PM

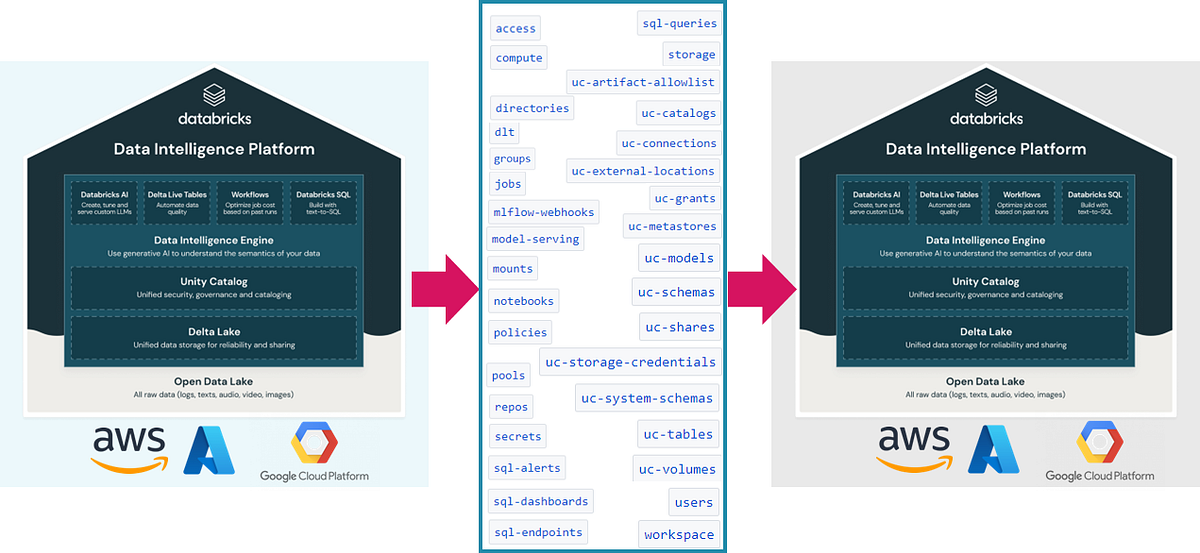

If you don't already have these resources defined in DABs, writing and testing the configuration could be a good amount of work. With the Terraform exporter utility, you can export all the resources from one workspace as Terraform code and deploy them to the new workspace quite easily, with considerably less work.

Thank You

Pradeep Singh - https://www.linkedin.com/in/dbxdev
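A minimal sketch of the exporter workflow, assuming the Databricks Terraform provider binary has been downloaded locally (the `./terraform-provider-databricks` path below is an assumption) and Terraform is installed. It runs the exporter non-interactively against the source workspace, then runs `terraform init`, `plan`, and `apply` in the output folder with credentials pointing at the destination workspace. Credentials are passed through the `DATABRICKS_HOST` and `DATABRICKS_TOKEN` environment variables. The service list `jobs,notebooks,compute` is an illustrative choice, so check the exporter documentation for the exact service names you need (pipelines, permissions, secrets, and so on).

```python
import os
import subprocess
from typing import Dict, List, Sequence, Tuple

# Assumption: the provider binary that ships the exporter lives here; adjust to your download.
EXPORTER_BINARY = "./terraform-provider-databricks"

Step = Tuple[List[str], Dict[str, str], str]  # (command, environment, working directory)


def exporter_command(services: Sequence[str], listing: Sequence[str], directory: str) -> List[str]:
    """Non-interactive exporter call that writes Terraform code into `directory`."""
    return [
        EXPORTER_BINARY, "exporter",
        "-skip-interactive",
        "-services=" + ",".join(services),
        "-listing=" + ",".join(listing),
        "-directory=" + directory,
    ]


def workspace_env(host: str, token: str) -> Dict[str, str]:
    """Copy the current environment and point the Databricks auth variables at one workspace."""
    env = dict(os.environ)
    env["DATABRICKS_HOST"] = host
    env["DATABRICKS_TOKEN"] = token
    return env


def migration_steps(source: Tuple[str, str], dest: Tuple[str, str],
                    directory: str = "exported") -> List[Step]:
    """Export from the source workspace, then init/plan/apply the generated code against the destination."""
    services = ["jobs", "notebooks", "compute"]  # illustrative service names
    source_env = workspace_env(*source)
    dest_env = workspace_env(*dest)
    return [
        (exporter_command(services, services, directory), source_env, "."),
        (["terraform", "init"], dest_env, directory),
        (["terraform", "plan"], dest_env, directory),
        (["terraform", "apply"], dest_env, directory),
    ]


def run_steps(steps: Sequence[Step], dry_run: bool = True) -> None:
    """Print each step (tokens are never printed) or execute it, stopping on the first failure."""
    for command, env, cwd in steps:
        if dry_run:
            print(f"[{cwd}] {' '.join(command)}")
        else:
            subprocess.run(command, env=env, cwd=cwd, check=True)
```

Starting with `run_steps(migration_steps((old_host, old_token), (new_host, new_token)), dry_run=True)` lets you check the commands, and reviewing the generated `.tf` files before the real `terraform apply` is a sensible safeguard.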

