Hi Palash

Thanks for your reply.

In short, renaming the column before the join works (as does selecting only the columns that aren't duplicate keys).

The aliasing does not work. In fact, I've used the standard example from the PySpark documentation, and `withColumnRenamed()` doesn't work when I add the last two lines here:

    from pyspark.sql.functions import col, desc

    df = spark.createDataFrame(
        [(14, "Tom"), (23, "Alice"), (16, "Bob")], ["age", "name"])

    df_as1 = df.alias("df_as1")
    df_as2 = df.alias("df_as2")

    joined_df = df_as1.join(df_as2, col("df_as1.name") == col("df_as2.name"), 'inner')

    joined_df.select(
        "df_as1.name", "df_as2.name", "df_as2.age").sort(desc("df_as1.name")).show()

    renamed_df = joined_df.withColumnRenamed("df_as1.age", "age_renamed")

    renamed_df.show()

I get exceptions for both of the last two lines when running in an Azure Databricks 14.3 LTS notebook.

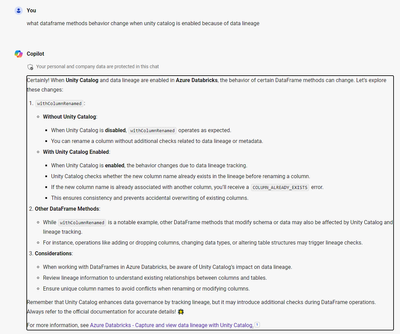

For what it’s worth, I asked an AI what is going on here and was told that the behavior of `withColumnRenamed()` changes when Unity Catalog is enabled because of data lineage tracking.

However, I cannot find any official references to this. (And this isn't exactly what we are experiencing.)

Does anyone know anything about this? Thanks for your help.

Kevin