"Create external Hive function is not supported in Unity Catalog" when registering Hive UDFs

02-01-2023 10:24 AM

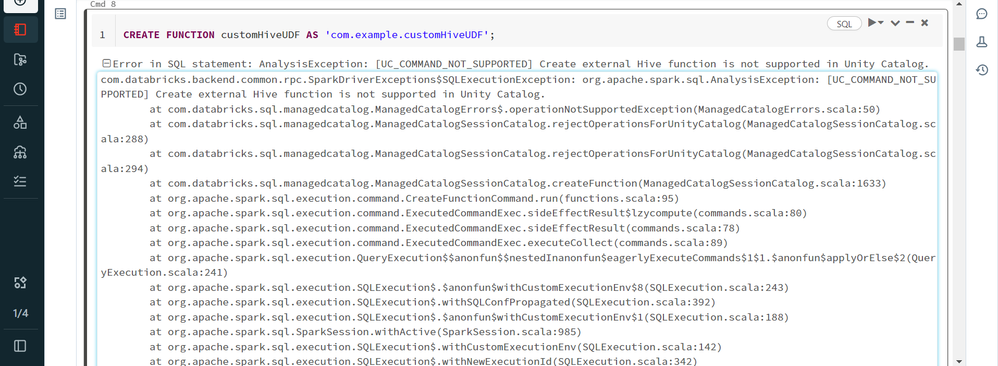

But when I try to register the Hive UDF this way, I get this error:

```
com.databricks.backend.common.rpc.SparkDriverExceptions$SQLExecutionException: org.apache.spark.sql.AnalysisException: [UC_COMMAND_NOT_SUPPORTED] Create external Hive function is not supported in Unity Catalog.
    at com.databricks.sql.managedcatalog.ManagedCatalogErrors$.operationNotSupportedException(ManagedCatalogErrors.scala:50)
    at com.databricks.sql.managedcatalog.ManagedCatalogSessionCatalog.rejectOperationsForUnityCatalog(ManagedCatalogSessionCatalog.scala:288)
    at com.databricks.sql.managedcatalog.ManagedCatalogSessionCatalog.rejectOperationsForUnityCatalog(ManagedCatalogSessionCatalog.scala:294)
    at com.databricks.sql.managedcatalog.ManagedCatalogSessionCatalog.createFunction(ManagedCatalogSessionCatalog.scala:1633)
    at org.apache.spark.sql.execution.command.CreateFunctionCommand.run(functions.scala:95)
    at org.apache.spark.sql.execution.command.ExecutedCommandExec.sideEffectResult$lzycompute(commands.scala:80)
    at org.apache.spark.sql.execution.command.ExecutedCommandExec.sideEffectResult(commands.scala:78)
    at org.apache.spark.sql.execution.command.ExecutedCommandExec.executeCollect(commands.scala:89)
    at org.apache.spark.sql.execution.QueryExecution$$anonfun$$nestedInanonfun$eagerlyExecuteCommands$1$1.$anonfun$applyOrElse$2(QueryExecution.scala:241)
    at org.apache.spark.sql.execution.SQLExecution$.$anonfun$withCustomExecutionEnv$8(SQLExecution.scala:243)
    at org.apache.spark.sql.execution.SQLExecution$.withSQLConfPropagated(SQLExecution.scala:392)
    at org.apache.spark.sql.execution.SQLExecution$.$anonfun$withCustomExecutionEnv$1(SQLExecution.scala:188)
    at org.apache.spark.sql.SparkSession.withActive(SparkSession.scala:985)
    at org.apache.spark.sql.execution.SQLExecution$.withCustomExecutionEnv(SQLExecution.scala:142)
    at org.apache.spark.sql.execution.SQLExecution$.withNewExecutionId(SQLExecution.scala:342)
    at org.apache.spark.sql.execution.QueryExecution$$anonfun$$nestedInanonfun$eagerlyExecuteCommands$1$1.$anonfun$applyOrElse$1(QueryExecution.scala:241)
    at org.apache.spark.sql.execution.QueryExecution.org$apache$spark$sql$execution$QueryExecution$$withMVTagsIfNecessary(QueryExecution.scala:226)
    at org.apache.spark.sql.execution.QueryExecution$$anonfun$$nestedInanonfun$eagerlyExecuteCommands$1$1.applyOrElse(QueryExecution.scala:239)
    at org.apache.spark.sql.execution.QueryExecution$$anonfun$$nestedInanonfun$eagerlyExecuteCommands$1$1.applyOrElse(QueryExecution.scala:232)
    at org.apache.spark.sql.catalyst.trees.TreeNode.$anonfun$transformDownWithPruning$1(TreeNode.scala:512)
    at org.apache.spark.sql.catalyst.trees.CurrentOrigin$.withOrigin(TreeNode.scala:99)
    at org.apache.spark.sql.catalyst.trees.TreeNode.transformDownWithPruning(TreeNode.scala:512)
    at org.apache.spark.sql.catalyst.plans.logical.LogicalPlan.org$apache$spark$sql$catalyst$plans$logical$AnalysisHelper$$super$transformDownWithPruning(LogicalPlan.scala:31)
    at org.apache.spark.sql.catalyst.plans.logical.AnalysisHelper.transformDownWithPruning(AnalysisHelper.scala:268)
    at org.apache.spark.sql.catalyst.plans.logical.AnalysisHelper.transformDownWithPruning$(AnalysisHelper.scala:264)
    at org.apache.spark.sql.catalyst.plans.logical.LogicalPlan.transformDownWithPruning(LogicalPlan.scala:31)
    at org.apache.spark.sql.catalyst.plans.logical.LogicalPlan.transformDownWithPruning(LogicalPlan.scala:31)
    at org.apache.spark.sql.catalyst.trees.TreeNode.transformDown(TreeNode.scala:488)
    at org.apache.spark.sql.execution.QueryExecution.$anonfun$eagerlyExecuteCommands$1(QueryExecution.scala:232)
    at org.apache.spark.sql.catalyst.plans.logical.AnalysisHelper$.allowInvokingTransformsInAnalyzer(AnalysisHelper.scala:324)
    at org.apache.spark.sql.execution.QueryExecution.eagerlyExecuteCommands(QueryExecution.scala:232)
    at org.apache.spark.sql.execution.QueryExecution.commandExecuted$lzycompute(QueryExecution.scala:186)
    at org.apache.spark.sql.execution.QueryExecution.commandExecuted(QueryExecution.scala:177)
    at org.apache.spark.sql.Dataset.<init>(Dataset.scala:238)
    at org.apache.spark.sql.Dataset$.$anonfun$ofRows$2(Dataset.scala:107)
    at org.apache.spark.sql.SparkSession.withActive(SparkSession.scala:985)
    at org.apache.spark.sql.Dataset$.ofRows(Dataset.scala:104)
    at org.apache.spark.sql.SparkSession.$anonfun$sql$1(SparkSession.scala:820)
    at org.apache.spark.sql.SparkSession.withActive(SparkSession.scala:985)
    at org.apache.spark.sql.SparkSession.sql(SparkSession.scala:815)
    at org.apache.spark.sql.SQLContext.sql(SQLContext.scala:695)
    at com.databricks.backend.daemon.driver.SQLDriverLocal.$anonfun$executeSql$1(SQLDriverLocal.scala:91)
    at scala.collection.immutable.List.map(List.scala:293)
    at com.databricks.backend.daemon.driver.SQLDriverLocal.executeSql(SQLDriverLocal.scala:37)
    at com.databricks.backend.daemon.driver.SQLDriverLocal.repl(SQLDriverLocal.scala:145)
    at com.databricks.backend.daemon.driver.DriverLocal.$anonfun$execute$23(DriverLocal.scala:728)
    at com.databricks.unity.UCSEphemeralState$Handle.runWith(UCSEphemeralState.scala:41)
    at com.databricks.unity.HandleImpl.runWith(UCSHandle.scala:99)
    at com.databricks.backend.daemon.driver.DriverLocal.$anonfun$execute$20(DriverLocal.scala:711)
    at com.databricks.logging.UsageLogging.$anonfun$withAttributionContext$1(UsageLogging.scala:398)
    at scala.util.DynamicVariable.withValue(DynamicVariable.scala:62)
    at com.databricks.logging.AttributionContext$.withValue(AttributionContext.scala:147)
    at com.databricks.logging.UsageLogging.withAttributionContext(UsageLogging.scala:396)
    at com.databricks.logging.UsageLogging.withAttributionContext$(UsageLogging.scala:393)
    at com.databricks.backend.daemon.driver.DriverLocal.withAttributionContext(DriverLocal.scala:62)
    at com.databricks.logging.UsageLogging.withAttributionTags(UsageLogging.scala:441)
    at com.databricks.logging.UsageLogging.withAttributionTags$(UsageLogging.scala:426)
    at com.databricks.backend.daemon.driver.DriverLocal.withAttributionTags(DriverLocal.scala:62)
    at com.databricks.backend.daemon.driver.DriverLocal.execute(DriverLocal.scala:688)
    at com.databricks.backend.daemon.driver.DriverWrapper.$anonfun$tryExecutingCommand$1(DriverWrapper.scala:622)
    at scala.util.Try$.apply(Try.scala:213)
    at com.databricks.backend.daemon.driver.DriverWrapper.tryExecutingCommand(DriverWrapper.scala:614)
    at com.databricks.backend.daemon.driver.DriverWrapper.executeCommandAndGetError(DriverWrapper.scala:533)
    at com.databricks.backend.daemon.driver.DriverWrapper.executeCommand(DriverWrapper.scala:568)
    at com.databricks.backend.daemon.driver.DriverWrapper.runInnerLoop(DriverWrapper.scala:438)
    at com.databricks.backend.daemon.driver.DriverWrapper.runInner(DriverWrapper.scala:381)
    at com.databricks.backend.daemon.driver.DriverWrapper.run(DriverWrapper.scala:232)
    at java.lang.Thread.run(Thread.java:750)
```


4 replies


02-01-2023 09:45 PM

Hi, custom UDFs are not yet supported in Unity Catalog.


02-01-2023 11:11 PM

Oh. Is this info officially documented somewhere in the Databricks documentation for Unity Catalog?

Also, will there be any issue if I register the UDFs as temporary functions?
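For reference, a session-scoped registration of a Hive UDF uses Spark SQL's `CREATE [OR REPLACE] TEMPORARY FUNCTION name AS 'class' USING JAR 'uri'` syntax. The minimal sketch below only builds that statement as a string. The helper `temp_hive_function_sql`, the class `com.example.udf.MyUpper`, and the JAR path are hypothetical. This thread does not confirm whether a Unity Catalog-enabled cluster accepts the temporary form, so treat it as something to test, not a known workaround.

```python
import re

# Temporary functions live only in the current session and cannot be
# qualified with a catalog or schema, so only bare identifiers are allowed.
_BARE_IDENTIFIER = re.compile(r"^[A-Za-z_][A-Za-z0-9_]*$")


def temp_hive_function_sql(name: str, class_name: str, jar_uri: str) -> str:
    """Build a CREATE TEMPORARY FUNCTION statement (hypothetical helper)."""
    if not _BARE_IDENTIFIER.match(name):
        raise ValueError(f"temporary function name must be unqualified: {name!r}")
    # Reject single quotes rather than guessing at SQL literal escaping rules.
    for value in (class_name, jar_uri):
        if "'" in value:
            raise ValueError(f"single quotes are not allowed: {value!r}")
    return (
        f"CREATE OR REPLACE TEMPORARY FUNCTION {name} "
        f"AS '{class_name}' USING JAR '{jar_uri}'"
    )


if __name__ == "__main__":
    # Hypothetical UDF class and JAR location, for illustration only.
    stmt = temp_hive_function_sql(
        "my_upper", "com.example.udf.MyUpper", "dbfs:/FileStore/jars/my-udfs.jar"
    )
    print(stmt)
    # In a notebook you would then run: spark.sql(stmt)
```

Because the function is temporary, it has to be re-registered in every new session (for example, at the top of each notebook or job).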


04-06-2023 09:38 AM

According to the docs here (https://docs.databricks.com/data-governance/unity-catalog/index.html):

- Python UDF support on shared clusters is supported in Private Preview. Contact your account team for access.

Anonymous


04-08-2023 08:05 PM

Hi @Franklin George

Hope everything is going great.

Just wanted to check in if you were able to resolve your issue. If yes, would you be happy to mark an answer as best so that other members can find the solution more quickly? If not, please tell us so we can help you.

Cheers!


