- 556 Views

- 2 replies

- 1 kudos

What are the best ways to implement transcription in podcast apps?

I am starting this discussion for everyone who can answer my query.

1. Use Speech-to-Text Models via MLflow: Integrate open-source models like OpenAI Whisper or Hugging Face Wav2Vec2, or a hosted service such as the AssemblyAI API. Log the model in MLflow for versioning and reproducibility. Deploy as a Databricks Model Serving endpoint for real-time t...

- 1 kudos

- 3730 Views

- 3 replies

- 1 kudos

How can I Resolve QB Desktop Update Error 15225?

I'm encountering QB Desktop update error 15225. What could be causing this issue, and how can I resolve it? It's disrupting my workflow, and I need a quick fix.

If you're seeing Update Error 15225, don’t worry — it’s usually fixable. First, check that your internet connection is stable and make sure your computer’s date and time are correct. Then, open Internet Options and verify that SSL settings are turned...

- 1 kudos

- 643 Views

- 1 reply

- 2 kudos

Databricks One Lake

Microsoft Ignite always brings exciting updates, but the real question is: what do these announcements actually mean for the business, not just for technology teams? That’s exactly what this article is about. I’m breaking down the new Databricks–OnevLa...

Great article, @bianca_unifeye! The move is certainly going to build and unify the governance bridge between Azure Databricks and OneLake.

- 2 kudos

- 567 Views

- 1 reply

- 1 kudos

Resolved! Databricks Dashboard Issue: No Mouse-Based Navigation When Dashboard Tabs Exceed the Top Ribbon

When dashboards have many pages, the top tab bar overflows and can’t be navigated using the mouse. Only the left and right keyboard arrows work, which is slow, inconvenient, and not user friendly. Expected: ability to scroll tabs with mouse e.g....

Hello @surajitDE! You can use the horizontal scroll bar to navigate through the dashboard pages. If you’re on a trackpad, you can simply scroll horizontally. If you’re using a mouse, you can: Hold Shift and scroll with the mouse wheel (easiest), or Dra...

- 1 kudos

- 5728 Views

- 4 replies

- 6 kudos

Resolved! How to use variable-overrides.json for environment-specific configuration in Asset Bundles?

Hi all, Could someone clarify the intended usage of the variable-overrides.json file in Databricks Asset Bundles? Let me give some context. Let's say my repository layout looks like this: databricks/ ├── notebooks/ │ └── notebook.ipynb ├── resources/ ...

It does. Thanks for the response. I also continued playing around with it and found a way using the variable-overrides.json file. I'll leave it here just in case anyone is interested: Repository layout: databricks/ ├── notebooks/ │ └── notebook.ipynb ...
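Since the reply above is cut off, here is a minimal sketch of how such an overrides file could be generated per environment. It assumes the file is read from `.databricks/bundle/<target>/variable-overrides.json` under the bundle root, which is my understanding of the Asset Bundles convention rather than something stated in the thread. The helper name `write_variable_overrides`, the target name `dev`, and the variable `catalog` are all hypothetical.

```python
import json
import tempfile
from pathlib import Path


def write_variable_overrides(bundle_root, target, overrides):
    """Write a variable-overrides.json file for one bundle target.

    Assumption: the CLI looks for the file at
    .databricks/bundle/<target>/variable-overrides.json under the bundle root.
    """
    path = (
        Path(bundle_root) / ".databricks" / "bundle" / target / "variable-overrides.json"
    )
    # Create the per-target directory if it does not exist yet.
    path.parent.mkdir(parents=True, exist_ok=True)
    # The file is a flat JSON object mapping variable names to values.
    path.write_text(json.dumps(overrides, indent=2) + "\n")
    return path


if __name__ == "__main__":
    # Demo in a throwaway directory; "catalog" is a hypothetical variable name.
    with tempfile.TemporaryDirectory() as root:
        written = write_variable_overrides(root, "dev", {"catalog": "dev_catalog"})
        print(written.read_text())
```

Each key would need to match a variable already declared in the bundle configuration; whether the file should be committed or generated in CI depends on your setup.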

- 6 kudos

- 3509 Views

- 4 replies

- 1 kudos

Best practices for optimizing Spark jobs

What are some best practices for optimizing Spark jobs in Databricks, especially when dealing with large datasets? Any tips or resources would be greatly appreciated! I’m trying to analyze data on restaurant menu prices so that insights would be especiall...

In addition to the comments above, try using clusters whose VMs have disk caching enabled. This caches data at the Parquet file level in VM local storage, which complements Spark caching well.

- 1 kudos

- 531 Views

- 0 replies

- 1 kudos

Agent Bricks Webinar

Our Databricks x Unifeye Meetup community just hit 150 members! A huge milestone, especially considering we’ve consistently had 50+ people joining every webinar. The momentum is real, and the audience keeps growing! This week, we’re taking it one s...

- 1506 Views

- 2 replies

- 1 kudos

Resolved! SQL cell v spark.sql in notebooks

I am fairly new to Databricks, and indeed Python, so apologies if this has been answered elsewhere but I've been unable to find it. I have been mainly working in notebooks as opposed to the SQL editor, but coding in SQL where possible using SQL cells ...

Thanks Louis, really good explanation and helpful examples!

- 1 kudos

- 8336 Views

- 5 replies

- 3 kudos

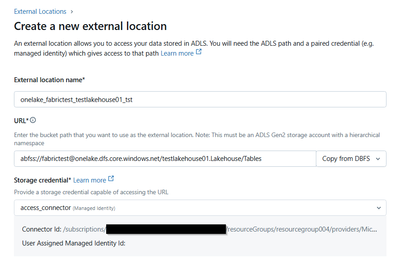

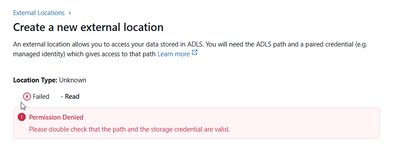

Connect to Onelake using Service Principal, Unity Catalog and Databricks Access Connector

We are trying to connect Databricks to OneLake, to read data from a Fabric workspace into Databricks, using a notebook. We also use Unity Catalog. We are able to read data from the workspace with a Service Principal like this: from pyspark.sql.types i...

As commented, you need to assign "Storage Blob Data Contributor" or "Storage Account Contributor" to the service principal you're using in the "connection" provided to the "external location". Another more advanced and even better option would be to use ...

- 3 kudos

- 4845 Views

- 1 reply

- 0 kudos

Updating a Delta Table in Delta Live Tables (DLT) from Two Event Hubs

I am working with Databricks Delta Live Tables (DLT) and need to ingest data from two different Event Hubs. My goal is to: Ingest initial data from the first Event Hub (Predictor) and store it in a Delta Table (data_predictions). Later, update this tab...

To achieve robust, persistent CDC (Change Data Capture)–style updates in Databricks DLT with your scenario—while keeping data_predictions as a Delta Table (not a Materialized View)—you need to carefully avoid streaming joins and side effects across s...

- 0 kudos

- 4097 Views

- 1 reply

- 1 kudos

Resolved! Databricks UMF Best Practice

Hi there, I would like to get some feedback on the ideal/suggested ways to get UMF data from our Azure cloud into Databricks. For context, UMF can mean either: User Managed File or User Maintained File. Basically, a UMF could be something like a si...

Several effective patterns exist for ingesting User Managed Files (UMF) such as CSVs from Azure into Databricks, each with different trade-offs depending on governance, user interface preferences, and integration with Microsoft 365 services. Common A...

- 1 kudos

- 4629 Views

- 1 reply

- 0 kudos

DLT detecting changes but not applying them

We have three source tables used for a streaming dimension table in silver. Around 50K records are changed in one of the source tables, and the DLT pipeline shows that it has updated those 50K records, but they remain unchanged. The only way to pick ...

The most likely reason your DLT pipeline shows 50K updates but the records remain unchanged is related to how Delta Live Tables (DLT) handle streaming tables, update logic, and schema constraints. When the target table uses an auto-increment ID (espe...

- 0 kudos

- 3838 Views

- 1 reply

- 0 kudos

Issue with Disabled "Repair DAG", "Repair All DAGs" Buttons in Airflow UI, functionality is working.

We are encountering an issue in the Airflow UI where the 'Repair DAG' and 'Repair All DAGs' options are disabled when a specific task fails. While the repair functionality itself is working properly (i.e., the DAGs can still be repaired through execu...

The issue with the 'Repair DAG' and 'Repair All DAGs' options being disabled in the Airflow UI when using the Databricks Workflow Operator is a known UI-specific problem that does not affect backend execution or the actual repair functionality. While...

- 0 kudos

- 5008 Views

- 1 reply

- 1 kudos

How to Fetch Azure OpenAI api_version and engine Dynamically After Resource Creation via Python?

Hello, I am using Python to automate the creation of Azure OpenAI resources via the Azure Management API. I am successfully able to create the resource, but I need to dynamically fetch the following details after the resource is created: API Version (a...

Hi Sudheer, It's been a while since you posted, but are you still facing this issue? Here are a few things you could check if needed: API version In Azure OpenAI, api-version is a query parameter on the data-plane (inference) requests, not a proper...
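To illustrate the point about `api-version` being a query parameter on inference requests, here is a small sketch that builds a data-plane URL. The resource name, deployment name, and the version string `2024-02-01` are placeholder assumptions for illustration, and `build_inference_url` is a hypothetical helper, not part of any SDK. The chat-completions path is one common endpoint; other operations use different paths.

```python
from urllib.parse import urlencode


def build_inference_url(resource_name, deployment_name, api_version):
    """Build an Azure OpenAI chat-completions URL.

    The api-version is chosen by the caller and appended as a query
    parameter; it is not read back from the created resource.
    """
    base = (
        f"https://{resource_name}.openai.azure.com"
        f"/openai/deployments/{deployment_name}/chat/completions"
    )
    return f"{base}?{urlencode({'api-version': api_version})}"


if __name__ == "__main__":
    # Placeholder values; substitute your own resource and deployment names.
    print(build_inference_url("my-resource", "my-deployment", "2024-02-01"))
```

In this sketch the deployment name plays the role of what older client code called the engine, so it comes from the deployment you create rather than from the resource itself.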

- 1 kudos

- 1691 Views

- 5 replies

- 2 kudos

Resolved! #data bricks snowflake dialect

Hello, I’m encountering an issue while converting SQL code to the Lake bridge Snowflake dialect. It seems that DML and DDL statements may not be supported in the Snowflake dialect within Lake bridge. Could you please confirm whether DML and DDL stateme...

@jadhav_vikas , I did some digging through internal docs and I have some hints/suggestions. Short answer Databricks Lakehouse Federation (often referred to as “Lakehouse Bridge”) provides read‑only access to Snowflake; DML and DDL are not supported ...

- 2 kudos

