- 10434 Views

- 3 replies

- 3 kudos

Resolved! DLT Job Clusters: Continuous vs Triggered Cluster Start Times

Hi there, I'm curious if anyone is able to definitively help me answer how DLT Job Clusters operate/run. For example, the following is my baseline understanding of DLT Job Clusters. If I run a Triggered DLT Pipeline (e.g. daily) the job cluster takes m...

- 10434 Views

- 3 replies

- 3 kudos

- 3 kudos

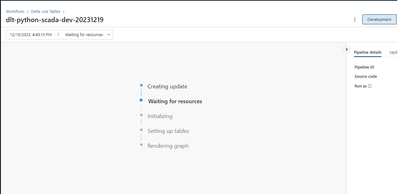

Ideally, one would expect clusters used for a DLT pipeline to terminate after the pipeline execution has finished. However, while running in the `development` environment, you'll notice the cluster doesn't terminate on its own, whereas in `production` it terminates ...

- 11828 Views

- 2 replies

- 3 kudos

DLT Primary Key Deduplication: Expectations vs. Constraints vs. Other?

I'm trying to figure out what's the best way to "de-duplicate" data via DLT. Currently, my only leads are: Manage data quality with Delta Live Tables | Databricks on AWS, via "Drop invalid records"; Constraints on Databricks | Databricks on AWS, via "pre-de...

Hey @ChristianRRL, based on my understanding, you want to de-duplicate your data during your DLT pipeline processing. Unfortunately, I was not able to find a solution to this when I ran into this problem, due to the native feature limitations. Limitations...

- 6601 Views

- 2 replies

- 1 kudos

Resolved! DLT Notebook and Pipeline Separation vs Consolidation

Super basic question. For DLT pipelines I see there's an option to add multiple "Paths". Is it generally best practice to completely separate `bronze` from `silver` notebooks? Or is it more recommended to bundle both raw `bronze` and clean `silver` d...

This is great! I completely missed the list view before.

- 10885 Views

- 5 replies

- 2 kudos

DLT Compute Resources - What Compute Is It???

Hi there, I'm wondering if someone can help me understand what compute resources DLT uses? It's not clear to me at all if it uses the last compute cluster I had been working on, or something else entirely. Can someone please help clarify this?

Well, one thing they emphasize in the 'Advanced Data Engineer' training is that job-clusters will terminate within 5 minutes after a job is completed. So this could be in support of your theory to lower costs. I think job-clusters are actually design...

- 3217 Views

- 2 replies

- 0 kudos

Auto Loader Use Case Question - Centralized Dropzone to Bronze?

Good day, I am trying to use Auto Loader (potentially extending into DLT in the future) to easily pull data coming from an external system (currently located in a single location) and organize it and load it respectively. I am struggling quite a bit a...

Quick follow-up on this @Retired_mod (or to anyone else in the Databricks multi-verse who is able to help clarify this case). I understand that the proposed solution would work for a "one-to-one" case where many files are landing in a specific dbfs pa...

- 1845 Views

- 1 replies

- 0 kudos

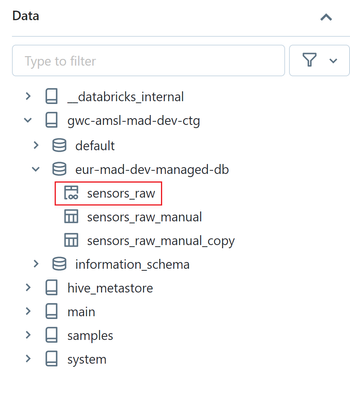

Problem sharing a streaming table created in Delta Live Table via Delta Sharing

Hi all, I hope you could help me to figure out what I am missing. I'm trying to do a simple thing: read the data from the data ingestion zone (CSV files saved to an Azure Storage Account) using the Delta Live Tables pipeline and share the resulting tab...

Sorry, I think I've created the post in the wrong thread. Created the same post in the Community Cove.

- 3591 Views

- 1 replies

- 3 kudos

Announcing that the Data + AI Summit 2023 Session Catalog is now live! With more than 250 sessions, Data + AI Summit has something for everyone. Choos...

Announcing that the Data + AI Summit 2023 Session Catalog is now live! With more than 250 sessions, Data + AI Summit has something for everyone. Choose from technical deep dives, hands-on training, lightning talks and more. Check out the catalog and u...
