- 3570 Views

- 2 replies

- 1 kudos

Resolved! Databricks SQL warehouse best practices

How can we best design Databricks SQL warehouses for multiple environments and multiple data marts? Are there any best practices or guidelines?

- 1 kudos

Hi @Phani1, we haven't heard from you since the last response from @Vinay_M_R, and I was checking back to see if her suggestions helped you. Otherwise, if you have a solution, please share it with the community, as it can be helpful to others. Also...

- 2721 Views

- 2 replies

- 3 kudos

Amazing Women in Data AI session at Data AI Summit 2023

Great first-time experience attending the keynotes, sessions, trainings and certifications. It was a great opportunity to connect with like-minded individuals and the Women in Data AI panel, and to learn about the community.

- 3 kudos

I agree with you 100%. I really like it.

- 1120 Views

- 0 replies

- 0 kudos

AWS S3 Bucket Access from Unity Catalog?

Asking for a new logo customer… Let's say my Unity Catalog is in AWS account A. The buckets that I need to access are in AWS account B. Unity Catalog is unable to create an external location based on this bucket even though all the necessary...

- 1716 Views

- 0 replies

- 1 kudos

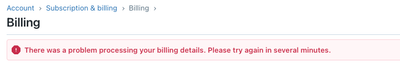

Card billing issues

Does anybody know if this is an ongoing issue (see screenshot below)? We are trying to do business here, but the payment will not go through. We tried two different cards on different networks.

- 2850 Views

- 3 replies

- 1 kudos

Databricks on AWS

I want to host Databricks on AWS. If we create Databricks on top of AWS, will it be created in a VPC in my own AWS account or outside of my AWS account? If it is going to be created in my account, will it create a new VPC for me? T...

- 1 kudos

Hi, if you want to know more about how to properly set up Databricks on top of AWS, I would really recommend taking the Databricks AWS platform administrator course. It explains everything you need to know. Hope this helps. Kin...

- 3416 Views

- 1 reply

- 0 kudos

503 Error from Databricks when Cluster Inactive/Starting Up via Alteryx

Hello, I have been connecting to Databricks via Alteryx. It works fine when our cluster is active, but returns a 503 Service Unavailable error if the cluster is inactive or starting up. I have previously reached out to Alteryx, but they have told me this...

- 0 kudos

I should have mentioned in the original post that we are using Microsoft Azure and a Simba Spark ODBC Driver.

- 1446 Views

- 0 replies

- 0 kudos

How to access ADLS Gen2 HDFS from a Databricks cluster which has credential passthrough enabled?

When executing through a Databricks cluster with credential passthrough enabled, I wish to obtain supplementary file attributes in ADLS, such as the file's last modified time, which are currently unavailable in the Databricks dbutils.fs.ls function. W...

- 3892 Views

- 1 reply

- 1 kudos

No points shown on the new Databricks Community page

There are no points displayed on the new Databricks Community page. Is it the same for everyone, or only for me because I have done something wrong?

- 1 kudos

I have the same concern. On my account, I also could not find where the number of points is displayed.

- 23764 Views

- 19 replies

- 49 kudos

The updated Databricks Community welcomes you!

To ensure we continue to evolve and mature to deliver greater value to you, we are happy to unveil this revamped Databricks Community experience and platform with an improved user interface, content and discussion categories organised based on areas ...

- 49 kudos

Does anyone know how to change the email address in the new community?

- 5241 Views

- 1 reply

- 4 kudos

Resolved! Raffle contest swag received

Hello everyone, today I received my DAIS swag. Thank you, Databricks, for providing such nice swag! @Retired_mod @Sujitha

- 1140 Views

- 0 replies

- 0 kudos

Unity Catalog - Add File Error (was working fine before volumes were added)

When using Add File in Unity Catalog, the following error occurs. Is it related to permissions that were recently changed? It was working fine before (2 weeks ago), and users have all required permissions (MODIFY, SELECT, EXECUTE, CREATE TABLE, USE CATALOG, U...

- 5581 Views

- 3 replies

- 1 kudos

Unable to access S3 objects from Databricks using IAM access keys in both AWS and Azure Databricks

Hi Team, we are trying to connect to an Amazon S3 bucket from Databricks running on both AWS and Azure, using IAM access keys directly in Scala code in a notebook, and we are getting com.amazonaws.services.s3.model.AmazonS3Exception: Forbidden; with stat...

- 1 kudos

Hi @Obulreddy, we haven't heard from you since the last response from @KaKa, and I was checking back to see if her suggestions helped you. Otherwise, if you have a solution, please share it with the community, as it can be helpful to others. Also,...

- 29882 Views

- 5 replies

- 5 kudos

What are the best practices for Spark DataFrame caching?

Hi, when caching a DataFrame, I always use "df.cache().count()". However, in this reference, it is suggested to save the cached DataFrame into a new variable: When you cache a DataFrame, create a new variable for it: cachedDF = df.cache(). This will allow...

- 5 kudos

In addition to the other comments, I will just add: make sure you cache only when necessary, i.e. if you need to keep a DataFrame for the time being so that it can be referenced later in the code, then you should consider caching it. But if your code ha...
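The two habits discussed in this thread (keeping the cached DataFrame in its own variable, and caching only for as long as it is needed) can be combined in a small helper. This is only a minimal sketch in Python, assuming a PySpark DataFrame; the names `cached` and `demo` are hypothetical and do not come from the thread or from the reference it quotes. It relies only on the `cache()`, `count()` and `unpersist()` methods of a DataFrame.

```python
from contextlib import contextmanager


@contextmanager
def cached(df):
    """Cache a Spark DataFrame for the duration of a block, then release it.

    `df` is expected to be a pyspark.sql.DataFrame; only cache(), count()
    and unpersist() are called on it.
    """
    cached_df = df.cache()   # lazy: only marks the DataFrame for caching
    cached_df.count()        # an action, so the cache is actually populated
    try:
        yield cached_df
    finally:
        cached_df.unpersist()  # release the cached data once it is no longer needed


def demo():
    # Hypothetical usage. Requires pyspark and a local Java runtime; imported
    # here so the helper above stays importable without Spark installed.
    from pyspark.sql import SparkSession

    spark = SparkSession.builder.master("local[1]").getOrCreate()
    df = spark.range(1000).withColumnRenamed("id", "n")
    with cached(df) as cached_df:
        evens = cached_df.filter("n % 2 = 0").count()  # reuses the cached data
        total = cached_df.count()                      # no recomputation of df
    spark.stop()
    return evens, total
```

Wrapping the cache in a context manager is one possible design choice: it makes the lifetime of the cached data explicit, which fits the advice above to cache only when the DataFrame is referenced again later in the code.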

- 2854 Views

- 0 replies

- 2 kudos

Databricks Community rewards portal is down

When can we expect the Databricks Community rewards portal to be up and running? The page shows a warning message that the website is under construction. I have attached a screenshot of the message for your reference. Kindly resolve the issue and reload the ...

- 7659 Views

- 4 replies

- 4 kudos

Resolved! What is the best approach to display DataFrame without re-executing the logic each time we display?

Hi, I have a DataFrame to which different transformations are applied. I want to display the DataFrame after several transformations to check the results. However, according to the reference, every time I try to display results, it runs the e...

- 4 kudos

Thanks. In this reference, it is suggested to save the cached DataFrame into a new variable: When you cache a DataFrame, create a new variable for it: cachedDF = df.cache(). This will allow you to bypass the problems that we were solving in our example, ...
