- 534 Views

- 2 replies

- 2 kudos

Resolved! Looking for resources to learn Databricks

Hi Community Members, I'm working as a Power BI Developer and am interested in upskilling on the Databricks platform as a Data Analyst and Data Engineer. Could you please share resources (documentation/video tutorials) in a sequential order? Thank you! Best Rega...

- 2 kudos

For bite-size overviews, check the demo center: https://www.databricks.com/resources/demos/library. This YouTube channel is great for more detail-oriented discussion of specific features: https://www.youtube.com/@nextgenlakehouse For more structu...

- 4565 Views

- 3 replies

- 0 kudos

Unlocking the Power of Databricks: A Comprehensive Guide for Beginners

In the rapidly evolving world of big data, Databricks has emerged as a leading platform for data engineering, data science, and machine learning. Whether you're a data professional or someone looking to expand your knowledge, understanding Databricks...

- 0 kudos

This is a clear and helpful guide to Databricks. You explained its key features and learning steps in a beginner-friendly way, making it easy for readers to get started and build practical skills in data analytics and machine learning. There is some ...

- 8609 Views

- 6 replies

- 2 kudos

Resolved! Understanding Autoscaling in Databricks: Under What Conditions Does Spark Add a New Worker Node?

I’m currently working with Databricks autoscaling configurations and trying to better understand how Spark decides when to spin up additional worker nodes. My cluster has a minimum of one worker and can scale up to five. I know that tasks are assigne...
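The min-1/max-5 range described above maps to the `autoscale` block of a cluster specification. A minimal sketch that builds such a request body for the Clusters API, assuming the standard `autoscale.min_workers` / `autoscale.max_workers` fields; the cluster name, runtime version, and node type are hypothetical placeholders. It only constructs the JSON and says nothing about the internal rules Databricks uses to decide when to add a worker.

```python
import json


def autoscaling_cluster_spec(min_workers: int, max_workers: int) -> dict:
    """Build a Clusters API request body with autoscaling bounds (sketch)."""
    if not 0 <= min_workers <= max_workers:
        raise ValueError("require 0 <= min_workers <= max_workers")
    return {
        "cluster_name": "autoscale-demo",       # hypothetical name
        "spark_version": "<runtime-version>",   # placeholder: choose a real DBR version
        "node_type_id": "<node-type>",          # placeholder: cloud-specific instance type
        # With "autoscale" set, the worker count floats between these bounds
        # instead of being pinned by a fixed "num_workers".
        "autoscale": {"min_workers": min_workers, "max_workers": max_workers},
    }


spec = autoscaling_cluster_spec(1, 5)
print(json.dumps(spec, indent=2))
```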

- 2 kudos

Is the above information true for job clusters as well? It looks like enhanced autoscaling is only available for pipelines.

- 280 Views

- 2 replies

- 3 kudos

Resolved! Am I publishing my article in the correct way?

Hello Community, I’d like to check with the contributors whether the article I recently published follows the correct approach. Did I choose the right options and the appropriate place to publish it in the Databricks Community? https://community.databr...

- 3 kudos

Hi @Kirankumarbs, yes, you did everything correctly. You put your article in the correct place, which is "Community Articles". Anyway, thanks for sharing it with us.

- 231 Views

- 0 replies

- 1 kudos

Better Diff for Jupyter Notebooks in Bitbucket

Comparing versions of Jupyter notebooks (the new preferred format on Databricks) in Bitbucket is much more difficult than with the previous format. Please use the link below to vote for adding better Jupyter notebook comparison to Bitbucket. Enable rich renderin...

- 4981 Views

- 6 replies

- 1 kudos

Cannot import editable installed module in notebook

Hi, I have the following directory structure:

- mypkg/
  - setup.py
  - mypkg/
    - __init__.py
    - module.py
  - scripts/
    - main (notebook)

From the `main` notebook I have a cell that runs `%pip install -e /path/to/mypkg`. This command appears to succ...

- 1 kudos

Sorry to triple-post, but I have another update: it seems to work on standalone clusters, but on job clusters it refuses to build the wheel (I get a write permission error).
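If the editable install keeps failing on job clusters (for example with the write-permission error above), one workaround that avoids building a wheel at all is to put the package's source root on `sys.path` before importing. This is a swapped-in technique, not a fix for `%pip install -e` itself. A minimal sketch in plain Python, assuming the layout from the question (`mypkg/mypkg/module.py`); `add_source_root` is a hypothetical helper and the path is a placeholder.

```python
import importlib
import sys
from pathlib import Path


def add_source_root(root: str) -> None:
    """Prepend a package source root to sys.path so its packages import directly."""
    resolved = str(Path(root).resolve())
    if resolved not in sys.path:
        sys.path.insert(0, resolved)
    # Clear cached finder data so the newly added directory is picked up.
    importlib.invalidate_caches()


# Usage (placeholder path: the outer "mypkg" directory that contains setup.py):
# add_source_root("/path/to/mypkg")
# from mypkg import module
```

Because nothing is installed, changes to `module.py` are picked up on the next fresh import, which is roughly what the editable install was meant to provide.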

- 3380 Views

- 2 replies

- 0 kudos

Best practices for connecting Tableau to Databricks

I'm having a problem connecting to Databricks with a service principal from Tableau. I wanted to know how Tableau extract refreshes connect to Databricks: is it via individual OAuth or a service principal?

- 0 kudos

Hi @cheerwthraj, to connect Tableau to Databricks and refresh extracts, you can use either OAuth or service principal authentication. For best practices, please refer to the link below: https://docs.databricks.com/en/partners/bi/tableau.html#best-pr...
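Before debugging Tableau itself, it can help to confirm that the service principal's OAuth client credentials work on their own. A minimal sketch using only the Python standard library, assuming OAuth machine-to-machine authentication against the workspace token endpoint `/oidc/v1/token` with the `client_credentials` grant and the `all-apis` scope; the host, client ID, and secret are placeholders and `build_token_request` is a hypothetical helper. It only builds the request; sending it is left commented out.

```python
import base64
import urllib.parse
import urllib.request


def build_token_request(workspace_host: str, client_id: str,
                        client_secret: str) -> urllib.request.Request:
    """Build (but do not send) an OAuth client-credentials token request."""
    url = f"https://{workspace_host}/oidc/v1/token"
    body = urllib.parse.urlencode(
        {"grant_type": "client_credentials", "scope": "all-apis"}
    ).encode("ascii")
    # The client ID and secret go in an HTTP Basic Authorization header.
    creds = base64.b64encode(f"{client_id}:{client_secret}".encode()).decode("ascii")
    return urllib.request.Request(
        url,
        data=body,
        method="POST",
        headers={
            "Authorization": f"Basic {creds}",
            "Content-Type": "application/x-www-form-urlencoded",
        },
    )


req = build_token_request("<workspace-host>", "<client-id>", "<client-secret>")
# To actually request a token (needs network access and real credentials):
# with urllib.request.urlopen(req) as resp:
#     print(resp.read().decode())
```

If this request returns an access token but Tableau still fails, the problem is more likely in the Tableau connection settings than in the service principal.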

- 542 Views

- 2 replies

- 1 kudos

Resolved! Cluster and workflow issue

I added `com.databricks:spark-xml_2.12:0.18.0` and `com.crealytics:spark-excel_2.12:3.4.3_0.20.4` to prerequisites_maven.yml and created a cluster. Notebooks run fine on this updated cluster, but jobs fail with UnknownException: (java.util.ServiceConfiguratio...

- 1 kudos

You can now natively read Excel files https://docs.databricks.com/aws/en/query/formats/excel

- 433 Views

- 1 replies

- 1 kudos

Resolved! Cannot see "User Provisioning" in settings in the Databricks account management console

Hi Team, I came across the issues below and need help resolving them. Issue 1: cannot see "User Provisioning" in settings in the Databricks account management console. Issue 2: Account Admin toggle: Failed to provision user. Please ensure the...

- 1 kudos

Hey dpavanbo! 1. In the account console, go to Security > User provisioning. If you see “Automatic identity management,” that’s expected on Azure; it replaces the traditional SCIM UI and handles just-in-time (JIT) provisioning on first sign‑in. 2. Automatic identity management: ...

- 182 Views

- 1 replies

- 0 kudos

I placed a swag order but did not receive it; I just want to know the status

I want to know the status of my swag

- 0 kudos

Hello @Ritika-08! Could you please share a few more details about the swag you’re referring to, such as which program or event it was associated with?

- 2330 Views

- 1 replies

- 1 kudos

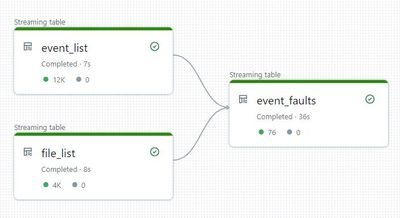

Left Outer Join returns an Inner Join in Delta Live Tables

In our Delta Live Tables pipeline, I am simply joining two streaming tables into a new streaming table. We use the following code: `@dlt.create_table() def fact_event_faults(): events = dlt.read_stream('event_list').withWatermark('TimeStamp', '4 hours'...
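One frequent cause of this symptom: for stream-stream outer joins, Spark Structured Streaming requires watermarks and an event-time range condition, and it emits the null-padded row for an unmatched left record only once the watermark guarantees that no matching right record can still arrive. Until then only matched rows show up, so the output can look like an inner join. The toy, pure-Python model below illustrates that emission rule under simplifying assumptions (a single watermark, a match window of `[left_time, left_time + max_delay]`); it is not Spark's implementation, and `left_outer_emit` and `max_delay` are hypothetical names.

```python
from typing import List, Optional, Tuple

Event = Tuple[str, int]  # (key, event time in minutes)


def left_outer_emit(
    left: List[Event],
    right: List[Event],
    max_delay: int,
    watermark: int,
) -> List[Tuple[Event, Optional[Event]]]:
    """Toy model of which left-outer-join rows a streaming engine can emit so far.

    A right event matches a left event when the keys are equal and
    left_time <= right_time <= left_time + max_delay.
    """
    out: List[Tuple[Event, Optional[Event]]] = []
    for l_key, l_time in left:
        matches = [
            (r_key, r_time) for r_key, r_time in right
            if r_key == l_key and l_time <= r_time <= l_time + max_delay
        ]
        if matches:
            # Matched rows can be emitted as soon as both sides have arrived.
            out.extend(((l_key, l_time), m) for m in matches)
        elif l_time + max_delay < watermark:
            # Right events older than the watermark are dropped as late, so no
            # future right event can fall in this window: the null row is final.
            out.append(((l_key, l_time), None))
        # Otherwise the unmatched left row stays buffered and nothing is emitted yet.
    return out
```

With `left = [("a", 0), ("b", 0)]`, `right = [("a", 10)]` and `max_delay=240` (a 4-hour window in minutes), a watermark of 60 yields only the matched `a` row; at a watermark of 300 the `("b", 0)` row is finally emitted with `None`. In a real pipeline this means the outer rows appear only after enough later data has advanced the watermark.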

- 1 kudos

Did you ever get this resolved? I'm struggling with a similar problem.

- 618 Views

- 3 replies

- 3 kudos

Resolved! CDC / Event Driven Data Ingestion

Hello guys, I am planning to implement event-driven data ingestion from the Bronze -> Silver -> Gold layers in my project. Currently we have a batch processing approach for our data ingestion pipelines. We have decided to move away from batch process t...

- 3 kudos

Hi Mey, please also consider Databricks file arrival triggers for your event-driven data ingestion journey: https://docs.databricks.com/aws/en/jobs/file-arrival-triggers Regards, Kartik
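A file arrival trigger is configured on the job itself. A minimal sketch of the relevant fragment of a job-settings payload, built in Python; the field names (`trigger`, `pause_status`, `file_arrival`, `url`, `min_time_between_triggers_seconds`, `wait_after_last_change_seconds`) reflect my reading of the Jobs API and should be checked against the linked page, `file_arrival_trigger` is a hypothetical helper, and the storage URL is a placeholder.

```python
import json


def file_arrival_trigger(url: str, min_gap_s: int = 60, settle_s: int = 60) -> dict:
    """Build the 'trigger' block of a job's settings for file-arrival triggering (sketch)."""
    return {
        "trigger": {
            "pause_status": "UNPAUSED",
            "file_arrival": {
                # Storage location to watch (assumed: a Unity Catalog external location or volume).
                "url": url,
                # Minimum time between consecutive triggered runs.
                "min_time_between_triggers_seconds": min_gap_s,
                # Wait until no new files have arrived for this long before triggering.
                "wait_after_last_change_seconds": settle_s,
            },
        }
    }


settings = file_arrival_trigger("s3://<bucket>/landing/bronze/")
print(json.dumps(settings, indent=2))
```

The settle delay is a design choice for bursty landings: it lets a batch of files finish arriving so one run picks them all up instead of one run per file.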

- 721 Views

- 2 replies

- 4 kudos

A Smarter Approach to Data Quality Monitoring

For a long time, data quality has been one of the most painful parts of data engineering. Most of us have written rules and thresholds that looked correct but didn’t reflect how data was actually used. We ended up with too many alerts that didn’t matt...

- 4 kudos

Agentic data quality monitoring is a focused approach to what really matters...

- 4762 Views

- 2 replies

- 2 kudos

Stream failure JsonParseException

Hi all! I am having the following issue with a couple of PySpark streams. I have some notebooks, each running an independent file-based Structured Streaming query on a Delta bronze table (gzip Parquet files) dumped from Kinesis to S3 by a previous job....

- 359 Views

- 1 replies

- 1 kudos

Resolved! Databricks Spatial SQL - examples and tutorials?

Hello, I recently learned that Databricks can now handle geospatial data. With my background in geospatial, that certainly got me curious. I was wondering if anybody has used examples, maybe a tutorial, to learn about geospatial in Databricks...

- 1 kudos

Hi @AnneEst, I haven't played around with spatial data, but if I had to, I would start with these two blogs: https://www.databricks.com/blog/introducing-spatial-sql-databricks-80-functions-high-performance-geospatial-analytics https://www.databricks.com/...
