Technical Blog

Explore in-depth articles, tutorials, and insights on data analytics and machine learning in the Databricks Technical Blog. Stay updated on industry trends, best practices, and advanced techniques.

- Basics of Databricks Workflows - Part 2: Monitorin...

Databricks Employee

02-07-2024

10:47 AM

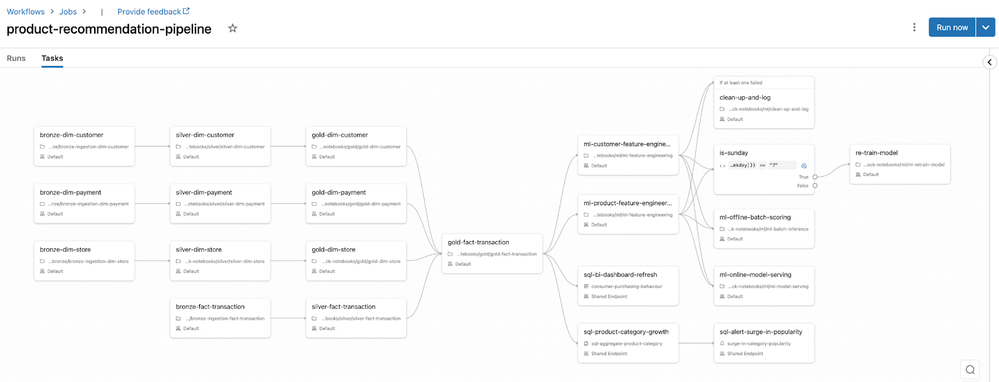

Welcome to Part 2 of our blog series on the Basics of Databricks Workflows! In Part 1 - Creating your pipeline, we explored the essential building blocks of creating a Databricks Workflow. We walked through an example of how a retailer can orchestrate a product recommendation pipeline that integrates ETL components, BI dashboards, SQL reporting, and a Machine Learning model.

In this blog we'll continue using the recommender use case to explore scheduling and triggering mechanisms, job-level configurations, as well as monitoring and alerting capabilities designed to enhance your pipelines' reliability. Finally, we will discuss how you can author and deploy your Workflows programmatically.

Schedule your data pipeline

You should choose your scheduling and trigger mechanisms based on the nature of your data and processing needs. To do so, analyze how often your data arrives, how large the data volumes are, and how many compute resources you have available.

You should also factor in how often your business will consume the data products. For example, if you receive data from your ERP daily, but your finance team consumes reports weekly, then you can save costs and still provide the same business value by scheduling the respective reporting pipeline to match the business SLA as opposed to data arrival frequency.

Let's explore Workflow triggers tailored to different use cases:

- Batch-based: The Scheduled trigger lets you run your Databricks Workflow automatically at a chosen interval or at specific times, in a time zone of your choice. Note that batch-based scheduling is not intended for low-latency use cases, as Databricks enforces a minimum of 10 seconds between subsequent runs.

- Event-based: You can use File Arrival triggers to initiate a Databricks Workflow when new files land in cloud storage. This is particularly beneficial where data lands irregularly. Your workspace must have Unity Catalog enabled to use File Arrival triggers.

- Real-time Streaming: When you set the trigger type to Continuous, Databricks ensures there is always one active run of the job. A new Workflow run automatically starts when the previous run completes or fails. You cannot use task dependencies with a continuous job, nor can you set retry policies.

Continuing with our example from part one, product recommender data sources provide data daily, so we will schedule our pipeline to run daily.
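Each trigger type above corresponds to a different fragment of the job's settings. The following is a minimal sketch, assuming the field names used by the Jobs API 2.1 (`schedule`, `trigger.file_arrival`, `continuous`). The helper name `trigger_settings`, the time zone, the 06:00 run time, and the storage URL are illustrative assumptions, not values from this series.

```python
import json


def trigger_settings(kind: str) -> dict:
    """Return an illustrative job-settings fragment for one trigger type.

    Field names are assumed to follow the Jobs API 2.1; all values are examples.
    """
    if kind == "scheduled":
        return {
            "schedule": {
                # Quartz cron fields: second minute hour day-of-month month day-of-week
                "quartz_cron_expression": "0 0 6 * * ?",  # every day at 06:00
                "timezone_id": "Europe/London",  # assumed time zone
                "pause_status": "UNPAUSED",
            }
        }
    if kind == "file_arrival":
        return {
            "trigger": {
                "file_arrival": {
                    # Hypothetical landing location registered in Unity Catalog
                    "url": "s3://example-bucket/landing/transactions/",
                }
            }
        }
    if kind == "continuous":
        return {"continuous": {"pause_status": "UNPAUSED"}}
    raise ValueError(f"unknown trigger kind: {kind}")


# The recommender pipeline receives data daily, so it uses the scheduled trigger.
print(json.dumps(trigger_settings("scheduled"), indent=2))
```

Only one of these fragments would normally be present in a given job definition, since a job has a single trigger mechanism.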

Note: Schedules are defined at a Workflow level. If you need to schedule individual tasks at a different cadence, then you can leverage the If/Else Condition task as we did with the model re-training task in Part 1.

Enhance operational rigour with additional Workflow configurations

Let's focus on three key features that can help enhance the resilience of your data pipelines and provide real-time insights into Workflow status and performance.

| Configuration | Description | Business Benefit | Technical Benefit |
| --- | --- | --- | --- |
| Automatic Retries | Automatically re-attempt the task in case of unexpected failures, ensuring resilience and continuity in data processing. | Minimizes disruptions, maintains operational flow, and reduces manual intervention. | Enhances system robustness by overcoming intermittent issues or transient failures, contributing to job success. |
| Task/Job Notifications | Receive notifications on task/job start, success, or failure, providing real-time updates on the job's status. | Facilitates prompt awareness of task/job outcomes, enabling swift response and decision-making. | Improves communication and situational awareness, aiding in quick issue resolution and facilitating proactive management. |
| Late Task/Job Warnings | Set up warnings for tasks/jobs exceeding expected durations and/or implement terminations after a specified threshold, ensuring timely identification of potential issues and efficient resource allocation. | Enhances resource management and prevents prolonged task/job execution, contributing to operational efficiency. | Enables proactive identification of performance issues, preventing resource over-utilization and ensuring optimal workflow execution. |

You can define each of the configurations above at a Databricks Workflow level or individually at a task level.
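To make these three configurations concrete, here is a minimal sketch of how they might look on a single task, assuming the Jobs API 2.1 field names (`max_retries`, `min_retry_interval_millis`, `retry_on_timeout`, `timeout_seconds`, `health`, `email_notifications`). The helper `with_reliability_settings`, its default thresholds, and the email address are hypothetical.

```python
def with_reliability_settings(
    task: dict,
    *,
    notify: list,
    max_retries: int = 2,
    retry_wait_minutes: int = 5,
    warn_after_minutes: int = 30,
    timeout_minutes: int = 60,
) -> dict:
    """Return a copy of a task definition with retries, warnings and notifications.

    Field names are assumed to follow the Jobs API 2.1; defaults are illustrative.
    """
    if warn_after_minutes >= timeout_minutes:
        raise ValueError("the warning threshold should be lower than the timeout")

    configured = dict(task)
    configured.update(
        {
            # Automatic retries
            "max_retries": max_retries,
            "min_retry_interval_millis": retry_wait_minutes * 60 * 1000,
            "retry_on_timeout": False,
            # Late-task handling: warn first, terminate at the hard timeout
            "health": {
                "rules": [
                    {
                        "metric": "RUN_DURATION_SECONDS",
                        "op": "GREATER_THAN",
                        "value": warn_after_minutes * 60,
                    }
                ]
            },
            "timeout_seconds": timeout_minutes * 60,
            # Notifications on failure and on the duration warning
            "email_notifications": {
                "on_failure": list(notify),
                "on_duration_warning_threshold_exceeded": list(notify),
            },
        }
    )
    return configured


task = with_reliability_settings(
    {"task_key": "bronze-fact-transactions"},
    notify=["data-team@example.com"],  # hypothetical address
)
print(task["max_retries"], task["timeout_seconds"])
```

Keeping the warning threshold below the timeout gives the team a chance to investigate a slow run before it is terminated.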

Improve governance and enforce access controls

We now turn our attention to governance and access controls. Strong governance is essential for ensuring the integrity and security of your data pipelines. Here are three tips on how you can improve governance and enforce access controls.

Tip 1: Apply tags to your Workflows for better filtering and tracking of costs

Tags applied to your Databricks Workflows also propagate to the clusters that are created when a Workflow is run. This enables you to use tags with your existing cluster monitoring and business unit charge-back attributions. Furthermore, applying tags simplifies the process of filtering and identifying clusters based on specific criteria. This makes it easier to track, monitor, and optimize resources within your Databricks environment.

Tip 2: Implement access controls based on the principle of least privilege

Configuring access control for jobs allows you to control who can view and manage runs of a job. There are five permission levels: No Permissions, Can View, Can Manage Run, Is Owner, and Can Manage. You can assign these permissions to users, groups, or service principals, and you should do so based on the principle of least privilege. Notably, only Workspace Admins can transfer ownership of a Workflow, and ownership can only be transferred to an individual user, not to a group.

Refer to the table in the documentation for a comprehensive understanding of job permissions.
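As a rough illustration of least privilege, the sketch below builds an access control list for a job and rejects grants that break the rules described above. It assumes the payload shape of the Databricks Permissions API (`access_control_list` entries with a principal key and a `permission_level`). The helper name and all principals are hypothetical, and "No Permissions" is represented simply by leaving a principal out of the list.

```python
ALLOWED_LEVELS = {"CAN_VIEW", "CAN_MANAGE_RUN", "IS_OWNER", "CAN_MANAGE"}
PRINCIPAL_KEYS = {"user_name", "group_name", "service_principal_name"}


def build_access_control_list(grants: list) -> dict:
    """Build a permissions payload from (principal_key, principal, level) tuples.

    Payload shape is assumed to follow the Permissions API; names are examples.
    """
    entries = []
    for principal_key, principal, level in grants:
        if principal_key not in PRINCIPAL_KEYS:
            raise ValueError(f"unknown principal type: {principal_key}")
        if level not in ALLOWED_LEVELS:
            raise ValueError(f"unknown permission level: {level}")
        if level == "IS_OWNER" and principal_key == "group_name":
            # Ownership cannot be assigned to a group
            raise ValueError("a group cannot own a job")
        entries.append({principal_key: principal, "permission_level": level})
    return {"access_control_list": entries}


payload = build_access_control_list(
    [
        ("user_name", "pipeline.owner@example.com", "IS_OWNER"),
        ("group_name", "data-engineers", "CAN_MANAGE_RUN"),  # can trigger and cancel runs
        ("group_name", "finance-analysts", "CAN_VIEW"),  # read-only visibility
    ]
)
print(payload)
```

Granting Can Manage Run or Can View to groups, rather than Can Manage to individuals, keeps the set of people who can edit the job small.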

Tip 3: Improve data security with "Run As Service Principal" for Workflow execution

Beyond Workflow permissions, you can configure a Workflow to execute under a different identity using the Run As feature. By default, a Workflow runs with the identity of its owner. In production environments where the Workflow transforms and modifies tables, this means the owner's own account would need extensive permissions, a practice contrary to data security principles.

To mitigate this security risk we recommend you modify the Run As identity to that of a Service Principal. This adjustment enables the job to adopt the permissions linked to the service principal, establishing a more controlled, secure, and centralized approach to access management.
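In job settings, this change amounts to a single field. A minimal sketch, assuming the Jobs API 2.1 `run_as` field, which takes the service principal's application ID under `service_principal_name`; the helper name, job name, and ID below are placeholders.

```python
def set_run_as_service_principal(job_settings: dict, application_id: str) -> dict:
    """Return a copy of the job settings that runs as the given service principal.

    The `run_as` field name is assumed to follow the Jobs API 2.1.
    """
    if not application_id:
        raise ValueError("application_id must not be empty")
    updated = dict(job_settings)  # leave the caller's settings untouched
    updated["run_as"] = {"service_principal_name": application_id}
    return updated


settings = {"name": "product-recommender"}  # hypothetical job name
secured = set_run_as_service_principal(
    settings, "00000000-0000-0000-0000-000000000000"  # placeholder application ID
)
print(secured["run_as"])
```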

Monitor your data pipeline

Once your job is fully configured and scheduled for execution, Databricks offers robust monitoring capabilities to ensure seamless operations.

On the Runs tab, you can efficiently assess the pipeline run history, pinpoint failed runs & tasks, and identify outliers & long-running batches. Hovering over each individual box reveals the associated metadata of the task, including the start and end dates, status, duration, cluster details, and error messages in the event of failure.

Identifying job failures

As seen in the image below, our most recent pipeline run failed, with the task bronze-fact-transactions erroring.

Hovering over or clicking on the specific task allows us to identify the root cause of the failure. The task run details appear, with the following error message displayed clearly at the top:

NameError: name 'input_file_name' is not defined.

We can quickly correct this by importing the missing function, input_file_name (available in pyspark.sql.functions), alongside the other imports at the top of the notebook.

Repairing Workflow failures

Once the fix has been put in place, we can choose to re-run the Workflow; however, this would be inefficient as we are:

- Re-processing tasks that have already been successfully completed

- Paying for uptime for the cluster to perform the redundant work

- Potentially re-loading duplicate data if not handled correctly in the pipeline

All of this equates to spending more DBUs than necessary on orchestrating your data pipelines. Instead we can use Repair Run, which restarts the Workflow from the point of failure instead of re-running the entire pipeline.

To do so, we click into the specific Workflow run, which shows which tasks completed successfully, which failed, and which were skipped because their dependencies were not met. Clicking Repair Run on the top right re-runs only the failed and skipped tasks, which is faster and more cost-efficient because we save on cluster processing and up-time.

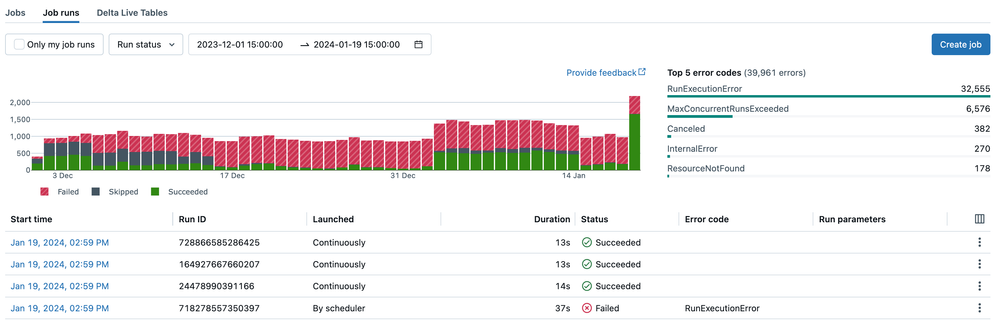
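The same selection logic can be sketched in code: given the task states of a run, pick only the tasks that did not succeed and build a repair request for them. This assumes a simplified version of the run structure returned by the Jobs API 2.1 and the `run_id` and `rerun_tasks` fields of its repair endpoint. The run ID and every task name other than bronze-fact-transactions are hypothetical.

```python
def tasks_to_repair(run: dict) -> list:
    """Return the keys of tasks that did not finish successfully."""
    return [
        task["task_key"]
        for task in run["tasks"]
        if task["state"].get("result_state") != "SUCCESS"
    ]


def repair_payload(run: dict) -> dict:
    """Build a repair request body that re-runs only the unsuccessful tasks."""
    return {"run_id": run["run_id"], "rerun_tasks": tasks_to_repair(run)}


# Simplified, hypothetical run: one task succeeded, one failed,
# and a downstream task never ran because its dependency failed.
run = {
    "run_id": 1234,
    "tasks": [
        {"task_key": "bronze-dim-products", "state": {"result_state": "SUCCESS"}},
        {"task_key": "bronze-fact-transactions", "state": {"result_state": "FAILED"}},
        {"task_key": "silver-transactions", "state": {"result_state": "UPSTREAM_FAILED"}},
    ],
}
print(repair_payload(run))
```

The successful task is left out of the request, so its compute cost is not paid a second time.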

Observing your overall data pipeline landscape

So far, we have looked at monitoring at an individual data pipeline level; however, your production landscape will likely encompass multiple pipelines.

For a comprehensive overview of your workflow landscape, navigate to the Job Runs tab in the top banner within Workflows. Here you'll find data and visualizations to simplify monitoring:

- The Finished Runs graph presents the number of job runs completed within the last 48 hours, stacking the failed, skipped, and successful job runs. You can customise the graph by filtering based on specific run statuses or narrowing it down to a particular time range.

- In the Jobs list table, you can observe the metadata and status of the last five runs for each job. Hovering over icons reveals a tooltip with run details, including error codes. This feature allows for a quick assessment of job status, trend identification, and easy navigation to problematic runs.

- The Top 5 Error Types table provides a list of the most frequent error types. This table enables you to swiftly identify the most common causes of job issues in your workspace, offering valuable insights into potential areas for improvement.
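If you export run history for your own reporting, a similar "top error types" summary is straightforward to compute. The sketch below is only an illustration of the idea and not how the Job Runs page is implemented; the record fields (`job`, `result_state`, `error_type`) and the sample data are hypothetical.

```python
from collections import Counter


def top_error_types(runs: list, limit: int = 5) -> list:
    """Count error types across failed runs and return the most frequent ones."""
    counts = Counter(
        record["error_type"]
        for record in runs
        if record["result_state"] == "FAILED"
    )
    return counts.most_common(limit)


# Hypothetical exported run records
runs = [
    {"job": "product-recommender", "result_state": "FAILED", "error_type": "NameError"},
    {"job": "product-recommender", "result_state": "SUCCESS", "error_type": None},
    {"job": "finance-reporting", "result_state": "FAILED", "error_type": "NameError"},
    {"job": "finance-reporting", "result_state": "FAILED", "error_type": "TimeoutError"},
]

for error_type, count in top_error_types(runs):
    print(f"{error_type}: {count}")
```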

Authoring your Workflows programmatically

When authoring your Databricks Workflows, the Databricks Terraform Provider and Databricks Asset Bundles (DABs) are both powerful and recommended approaches.

Databricks Terraform Provider

The Databricks Terraform Provider offers a programmatic way to author and manage your Databricks Workflows. Terraform codifies infrastructure and configurations, enabling you to define, version, and deploy your Databricks resources as code. This approach facilitates automation, collaboration through version control, and supports infrastructure-as-code best practices.

To explore the capabilities of the Databricks Terraform Provider, please read the documentation.

Databricks Asset Bundles (DABs)

If you are unfamiliar with Terraform, DABs are a great choice to reap many of the same benefits. They allow you to programmatically define and manage your Workflows using a declarative YAML syntax. With DABs, you can package notebooks, libraries, and other assets, simplifying the deployment process and ensuring consistency across dev, test, and production environments. Versioning Workflow definitions with DABs reduces errors and promotes reproducibility across your organization. If you want to learn more, check out the self-guided demo of DABs in the Databricks Demo Center.

Conclusion

To conclude Part 2 of our blog series on the Basics of Databricks Workflows, let's recap.

We explored the different scheduling mechanisms and additional Workflow configurations. We then dug deeper into governance and access controls, emphasising the importance of applying tags and implementing robust access controls. We also looked at the monitoring visualizations available out of the box in Databricks Workflows. Finally, we learnt how the Databricks Terraform Provider and Databricks Asset Bundles (DABs) empower you to codify and version control your workflows, promoting collaboration and reproducibility.

As you continue your Databricks journey, remember that mastering these Workflow management fundamentals lays a strong foundation for orchestrating complex data pipelines for your business.
