Turn on suggestions

Auto-suggest helps you quickly narrow down your search results by suggesting possible matches as you type.

Showing results for

Technical Blog

Explore in-depth articles, tutorials, and insights on data analytics and machine learning in the Databricks Technical Blog. Stay updated on industry trends, best practices, and advanced techniques.

Turn on suggestions

Auto-suggest helps you quickly narrow down your search results by suggesting possible matches as you type.

Showing results for

- Databricks Community

- Technical Blog

- How to implement Slowly Changing Dimensions when y...

Databricks Employee

Options

- Subscribe to RSS Feed

- Mark as New

- Mark as Read

- Bookmark

- Subscribe

- Printer Friendly Page

- Report Inappropriate Content

08-31-2023

07:18 AM

Maintaining Slowly Changing Dimensions (SCD) is a common practice in data warehousing to manage and track changes in your records over time. It enables businesses to make more informed and strategic decisions based on historical patterns and trends. However, often in real-world scenarios data is riddled with issues such as duplicate records which can complicate maintaining slowly changing dimensions effectively. Failure to address duplicate records in your source data will introduce inconsistencies and inaccuracies that will lead to incorrect results in reporting, flawed analysis for decision-makers, and ultimately a loss of trust in the data by the business.

In this blog series, we will present how to implement SCD Type 1 and Type 2 tables on the Databricks Lakehouse when met with the obstacles posed by duplicate records.

- In Part 1, we will provide a general overview of the different types of duplicate records, their impacts on strategic decision-making if left unchecked, and what to consider when remediating these when implementing your Type 1 and Type 2 tables.

- In Part 2, we will explore how to implement the strategies discussed in Part 1 leveraging streaming tables created in Delta Live Tables pipelines with code snippets and example unit test results

- In Part 3, we will explore how to implement the same strategies when leveraging Spark Structured Streaming for your data pipelines.

Table of Contents

- A quick refresher on Slowly Changing Dimensions

- More about duplicates

- What if we didn’t handle duplicate records?

- Conclusion

- Coming up next!

A quick refresher on Slowly Changing Dimensions

In a data warehouse, dimensions provide descriptive information about the business entities being analyzed, such as customers, products, or locations. When the attributes of these dimensions change, it becomes necessary to manage and track those changes effectively.

The two most popular SCD types commonly implemented to handle those changes are Type 1 and Type 2 tables.

A Type 1 table does not track changes in dimensional attributes - the new value overwrites the existing value. Here, we do not preserve historical changes in data.

A Type 2 Table tracks change over time by creating new rows for each change. A new dimension record is inserted with a high-end date or one with NULL. The previous record is "closed" with an end date. This approach maintains a complete history of changes and allows for as-was reporting use cases.

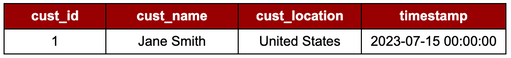

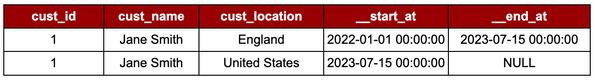

Example: Our customer, Jane Smith, joins in 2022 from England. Jane later moves to the United States midway through 2023 and informs us of this change.

SCD Type 1 : No history is maintained; changes are updated in place!

Figure 1 - As SCD Type 1 tables are hard-updated with any changed records, only the latest version of Jane's customer record and location is stored. The table does not track the history of where and when Jane moves.

SCD Type 2 : History is maintained; changes are upserted!

Figure 2 - SCD Type 2 tables update the `__end_at` column with the new record’s `__start_at` timestamp. A new record is then inserted with an `__end_at` timestamp of NULL (or another commonly used value is ‘9999-12-31 23:59:59). Semantically, this is referred to as the high-end dated record - denoting the most current version.

More about duplicates

Different types of duplicate records can exist in your data pipeline. Understanding which types of duplicates are relevant to your use case is essential to maintaining data quality and consistency.

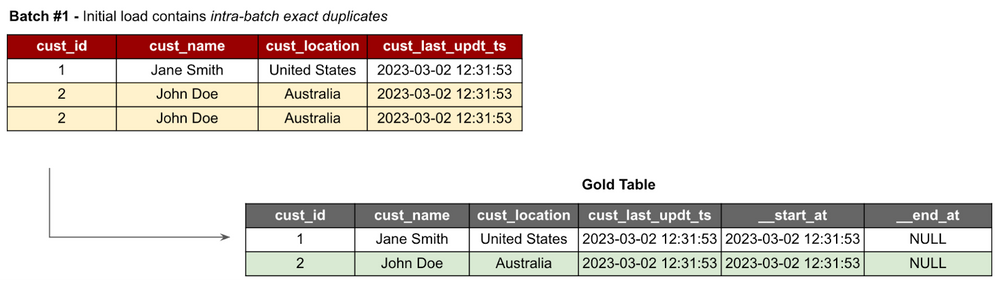

Intra-Batch Duplicates

These refer to duplicated records within a single batch of data. These duplicates occur when the same data is unintentionally loaded or processed multiple times, resulting in redundant entries.

Dealing with Intra-Batch duplicates is relatively straightforward as they can be identified and handled within the same batch using windowing functions such as RANK or ROW_NUMBER.

Figure 3 - The ‘red’ table represents the initial load from our source data feed in which an intra-batch duplicate record is present. The desired outcome of the target table is shown on the right after the pipeline correctly handles the duplicated records.

Inter-Batch Duplicates

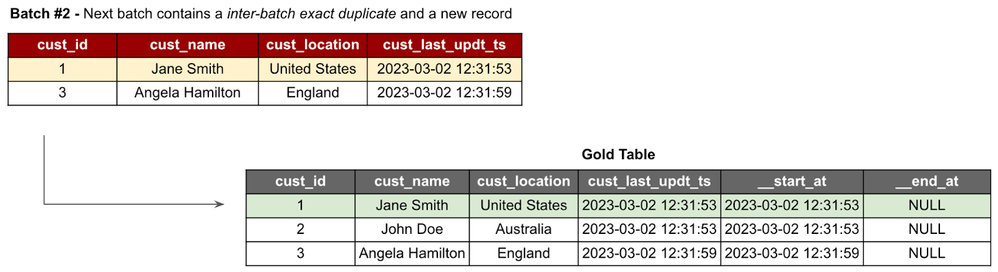

Unlike intra-batch duplicates, inter-batch duplicates pose a more complex challenge and occur when records are the same across different batches of data. Handling Inter-Batch duplicates requires comparing data previously written in the target table.

Figure 4 - After successfully ingesting our initial load, our next batch contains an inter-batch duplicate. After handling the duplicated record, only the new record is inserted into the target table.

Duplicates with the Same Business Meaning

Duplicates with the same business meaning present a unique challenge as they do not involve exact replicas of records. Instead, they occur when only the values of technical columns are altered, but the overall business meaning of the record remains the same. These changes may include updates to metadata or non-critical information that does not affect the interpretation or analysis of the data.

Duplicates with the same business meaning can manifest as Intra-Batch and Inter-Batch duplicates.

Note! Handling these duplicates requires careful consideration and understanding of the underlying data and its business context to ensure that changes that do not impact the overall business interpretation are treated accordingly to maintain data integrity and accuracy. |

Figure 5 - In the next batch of data, we have not received the same business meaning duplicates but they are not exact as the cust_last_updt_ts values are different. However, as a change in this column in isolation does not change the business meaning of the record, we handle both intra- and inter-batch same business meaning duplicates as agreed by the business.

Out-of-Order Records

Although these records are not classified as duplicates, the impact on data quality can be significant when dealing with records that arrive out of order in both SCD Type 1 and Type 2 tables.

- In SCD Type 1 tables, out-of-order records can lead to inconsistencies as records holding ‘out-dated’ data will be updated as the ‘current’ value leading to inaccurate analysis.

- Similarly, in SCD Type 2 tables, records arriving out of order disrupt the historical tracking of changes rendering data to be inaccurate and unreliable. Effectively handling these data quality use cases is vital to ensure that data integrity is not compromised and that decision-making is not impacted.

These edge cases can appear intra-batch or inter-batch.

Out-of-order records can be correctly stitched back into your table but this can potentially increase the complexity of your data pipeline – unless you are using frameworks that help simplify managing these edge cases like Delta Live Tables pipelines!

Another solution could be to filter and drop the ‘stale’ records; however, this needs to be agreed upon by both the relevant Data and Business teams.

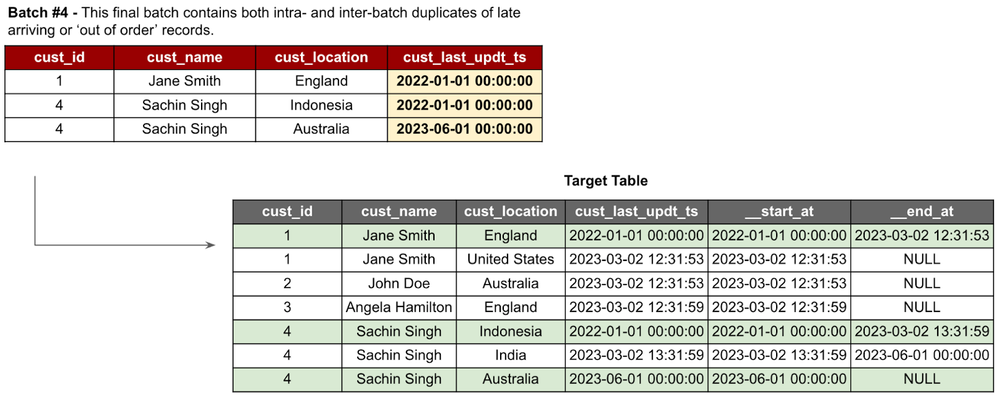

Figure 6 - In this final batch, we receive a late-arriving record indicating Jane previously lived in England before moving to the United States; and two intra-batch, very out-of-order records for Sachin.

Note! |

What if we didn’t handle duplicate records?

Intra-Batch Duplicates for SCD Type 1 & Type 2

Not handling intra-batch duplicates in SCD Type 1 and Type 2 tables can cause detrimental effects on data integrity and analysis. If not correctly addressed, these duplicate records can be inserted into the table, leading to inconsistent results and potential join-growth. This can exacerbate poor data analysis, as the duplicated records distort the accuracy of aggregations and calculations.

Inter-Batch Duplicates for SCD Type 1

Since SCD Type 1 tables do not maintain historical versions or track changes over time, there is no functional need to handle inter-batch duplicates to maintain data accuracy or integrity. The “changed” records are hard-updated with the latest values, or in our case, the same values. Due to this characteristic of SCD Type 1 tables, there is no resulting risk of join growth, inaccuracy, or data inconsistency.

Inter-Batch Duplicates for SCD Type 2

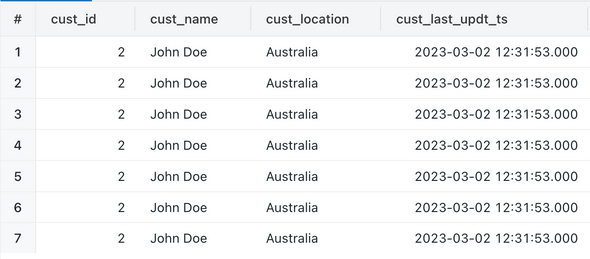

Not handling inter-batch duplicates in SCD Type 2 tables can lead to join growth when performing analysis which will compromise integrity and lead to flawed decision-making. This will occur when your inter-batch duplicate records are not correctly identified and are inserted with the high-end date.

However, there is also a scenario where your pipeline accumulates and upserts the same record multiple times over. This redundancy can unnecessarily inflate table sizes and, if left unchecked, can also lead to an inaccurate business understanding of the data.

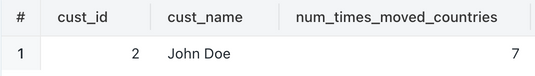

For example, our table below indicates that John has relocated countries seven times. Looking at the data in its current query representation, we can see that these are just duplicate records not being handled correctly.

However, if we were to perform a GROUP BY to determine the number of times our customers moved countries, we would be presented with an inaccurate analysis. This loss of data integrity would then hinder our ability to make informed decisions.

Conclusion

In conclusion, understanding the various types of duplicates that can arise in data pipelines is crucial for maintaining data integrity and ensuring accurate strategic decision-making. Whether they are intra-batch duplicates, inter-batch duplicates, duplicates with the same business meaning, or late-arriving records; each type poses unique challenges and requires careful consideration during remediation. Failing to address duplicates can result in data inconsistencies, compromised analysis, and flawed decision-making. However, by implementing techniques and adopting suitable frameworks (like Delta Live Tables pipelines!) data practitioners can build resilient and efficient data pipelines that can effectively handle duplicates.

Coming up next!

In Part 2 of this blog series, we will walk through a practical implementation of a data pipeline using Delta Live Tables equipped with the capabilities to handle the different data duplicates.

1 Comment

You must be a registered user to add a comment. If you've already registered, sign in. Otherwise, register and sign in.

Related Content

- Lakebase as the Operational Data Store: Bringing Back the Tactical Data Layer in Lakebase Blogs

- Fraud Detection Feature Engineering with Structured Streaming Real-Time Mode and Lakebase in Technical Blog

- Building a Production‑Style SCD Type 2 Dimension on Delta Lake — Using Databricks Community Edition in Community Articles

- From Traces to Evaluation: An Autonomous MLflow Agent Harness for AI AgentOps in Technical Blog

- [PARTNER BLOG] Zerobus Ingest on Databricks in Technical Blog