- Prepare Your Journey to Migrate from AWS Glue Data...

Databricks Employee

04-23-2024

08:12 AM

In this blog, we look at the migration from AWS Glue Data Catalog to Unity Catalog. We lay out a step-by-step plan that emphasizes meticulous planning, phased migration, and minimal disruption. The plan covers inventory gathering, migration strategy, the coexistence of Glue and Unity Catalog, design considerations, data migration, pipeline adjustments, and downstream system adaptation.

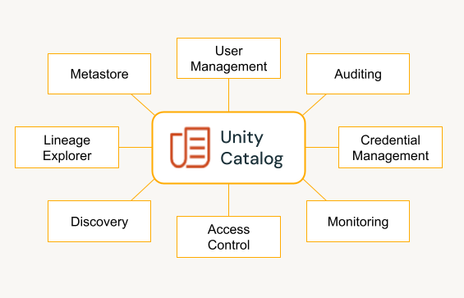

Why Unity Catalog

Unity Catalog (UC) from Databricks is a comprehensive data governance solution designed to streamline data management across diverse platforms. It is a single point of control for handling all your data and AI assets like tables, views, machine learning models, notebooks, dashboards, files, and other artifacts. Unity Catalog is instrumental in fostering collaboration by facilitating secure data and AI asset discovery, access, and sharing. It provides a unified governance layer that enhances collaboration among data analysts, data scientists, and data engineers, enabling data teams to work more efficiently. By adopting UC, organizations can benefit from a centralized metadata and data management system, which allows precise data access controls and boosts productivity.

Prior to UC, you could configure the Databricks Runtime to use the AWS Glue Data Catalog as its metastore, which served as a drop-in replacement for a Hive metastore (HMS). Migrating from Glue Data Catalog to UC offers a three-level namespace for improved data organization, built-in access control, and centralized access to object storage, which simplifies management and enhances security. UC also opens the door to advanced features like lineage and data insights.

Laying the Groundwork: Planning the Migration

Embarking on a migration project requires meticulous planning to ensure a successful transition. The first step involves compiling a comprehensive inventory of your current Glue metastore assets.

This inventory should include, but is not limited to:

- Tables categorized by storage location, type, and format

- Other data and AI assets such as views, models, dashboards, queries, and notebooks

- Jobs

- Volume of data

- Data in DBFS root

- Used storage locations

- Unsupported DBR versions (UC requires Databricks Runtime (DBR) 11.3 LTS or higher)

- Mount points

- Global init scripts

- Delta Live Tables

- Workspaces

- Regions in use
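To make the first inventory item concrete, here is a minimal sketch of how tables could be grouped by type, format, and storage location once their metadata has been exported. It is only an illustration and not how UCX works internally; the `TableInfo` record and the `storage_root` classification rules are assumptions made for this example.

```python
from collections import Counter
from dataclasses import dataclass


@dataclass(frozen=True)
class TableInfo:
    """Hypothetical record for one table found in the Glue metastore."""
    database: str
    name: str
    table_type: str   # "MANAGED" or "EXTERNAL"
    data_format: str  # for example "delta" or "parquet"
    location: str     # storage path of the table data


def storage_root(location: str) -> str:
    """Classify a location as a mount point, DBFS root, or a cloud bucket name."""
    if location.startswith("dbfs:/mnt/"):
        return "mount"
    if location.startswith("dbfs:/"):
        return "dbfs_root"
    if "://" in location:
        # "s3://bucket/path" splits into ["s3:", "", "bucket", "path"]
        return location.split("/")[2]
    return "unknown"


def summarize(tables):
    """Count tables per (type, format, storage root) combination."""
    return Counter(
        (t.table_type.upper(), t.data_format.lower(), storage_root(t.location))
        for t in tables
    )
```

A summary like this gives a rough picture of how many tables can be synced in place and how many sit in DBFS root or behind mount points and therefore need extra work.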

Databricks Labs UCX is a versatile tool for your Unity Catalog migration, including the crucial task of inventory gathering. This step is vital as it helps estimate the effort required for the migration and provides an idea of which teams will be impacted during this process. UCX inventory gathering can be run at various stages of migration to get the latest list of resources to be migrated and thus track the migration progress. The volume of data to migrate and the necessary code changes directly influence the effort required.

The following sections provide an overview of the tasks involved in the migration. Based on this, you can identify the various stages and tasks of the migration, determine which stages can be parallelized, and develop a migration timeline. It’s important to communicate this information to all stakeholders well in advance to facilitate resource allocation and individual team planning.

You should also identify tasks that can be automated and those that can be performed centrally. This helps accelerate the migration and minimizes the impact on your stakeholders, ensuring a smooth and efficient transition to Unity Catalog.

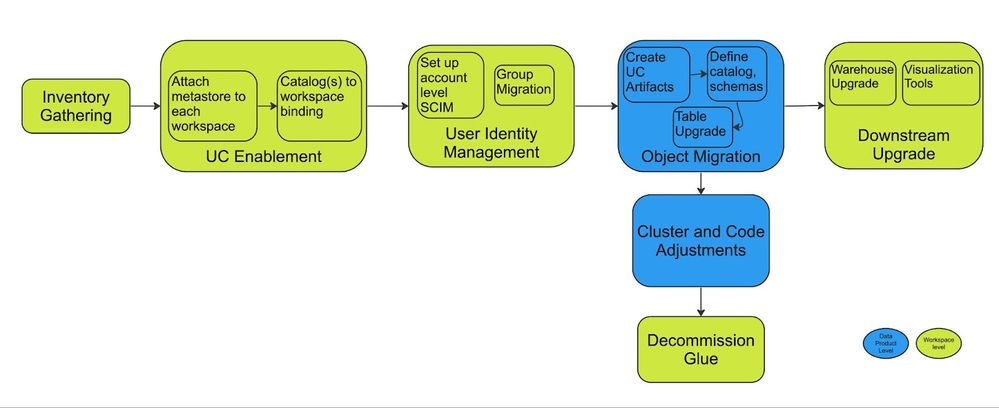

Migration Strategy and Steps

A Phased Approach: Coexistence of Glue and Unity Catalog

In large organizations, a sudden, one-off migration from AWS Glue Data Catalog to Unity Catalog is not feasible due to the volume of workloads and the number of teams involved. Therefore, a phased migration approach is recommended, which can be structured based on either the data products or the teams responsible for the workload.

During this transition period, Glue and Unity Catalog must coexist. This dual operation serves both the workloads that are still being migrated and those that have already transitioned. Data products commonly live in different metastores during the migration, so Unity Catalog and Glue need to be kept in sync. This ensures that consumers consistently access the most recent state of data objects, irrespective of their source.

To achieve this, use the SYNC command to update external tables’ schema changes from AWS Glue to Unity Catalog. For updating changes from Unity Catalog to Glue tables, fetch the DDL of the tables in Unity Catalog and execute a CREATE OR REPLACE command. The syncing process can be automated using Databricks system tables and AWS CloudWatch events. The forthcoming HMS federation functionality will further simplify this process by enabling the reading of Glue tables through Unity Catalog during the migration.
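As a rough sketch of the Glue-to-UC direction, the helper below builds a SYNC statement for one schema. It assumes the Glue tables are visible under the `hive_metastore` catalog and that the target catalog and schema already exist; the function name and the default to `DRY RUN` are choices made for this example.

```python
def sync_schema_sql(uc_catalog, schema, dry_run=True, source_catalog="hive_metastore"):
    """Build a SYNC SCHEMA statement that upgrades external tables into Unity Catalog.

    Assumption: the Glue-backed tables are exposed through `hive_metastore`.
    """
    for ident in (uc_catalog, schema, source_catalog):
        if "`" in ident:
            raise ValueError(f"unsupported identifier: {ident!r}")
    stmt = (
        f"SYNC SCHEMA `{uc_catalog}`.`{schema}` "
        f"FROM `{source_catalog}`.`{schema}`"
    )
    if dry_run:
        # DRY RUN only reports what would be synced without changing anything.
        stmt += " DRY RUN"
    return stmt


# In a Databricks notebook the statement could be run with spark.sql(...).
print(sync_schema_sql("prod", "sales"))
```

Running with `DRY RUN` first and reviewing the reported status per table is a cautious way to schedule the real sync afterwards.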

Designing the Unity Catalog Landscape

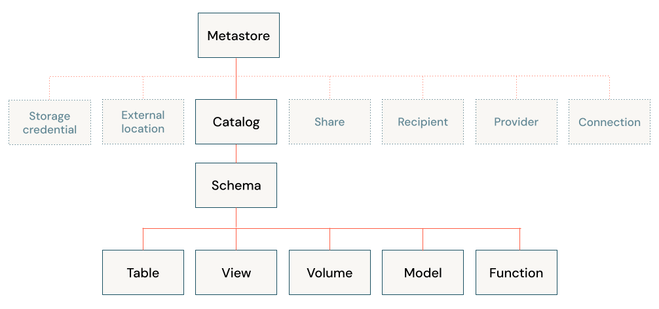

Before embarking on the actual migration process, it’s crucial to finalize the design of your Unity Catalog (UC) landscape. UC offers a 3-level namespace design, which requires careful consideration during this design phase.

You’ll need to make key decisions on segregating data objects, determining the groups to be created, and establishing the necessary permissions for these groups on each data object. Unity Catalog assigns one metastore to each region, with every workspace in that region subsequently assigned to that metastore. Ideally, the catalog/schema design should mirror your organization’s structure and SDLC environments. Once the metastore is set up, you can attach the workspaces to it.
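To illustrate a catalog/schema layout that mirrors SDLC environments, the sketch below generates the DDL for one catalog per environment with the same schemas in each. The environment and schema names in the usage are hypothetical placeholders.

```python
def namespace_ddl(environments, schemas):
    """Return CREATE statements for one catalog per environment and its schemas."""
    statements = []
    for env in environments:
        statements.append(f"CREATE CATALOG IF NOT EXISTS `{env}`")
        for schema in schemas:
            statements.append(f"CREATE SCHEMA IF NOT EXISTS `{env}`.`{schema}`")
    return statements


# Hypothetical layout: three environments, two data domains.
for stmt in namespace_ddl(["dev", "test", "prod"], ["sales", "finance"]):
    print(stmt)
```

Generating the statements from a small list keeps the environments structurally identical, which makes it easier to promote jobs from one catalog to the next.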

Tip! To minimize the changes during the migration, keep the same design as you have in Glue if it’s already segregated well. A typical existing Glue structure will have separate catalogs for development, testing, and production. UC design could mimic the same structure, thus avoiding changes in downstream jobs and queries.

Risk! Jobs run by external orchestrators could break at this point since the default data_security_mode for new clusters (>= DBR 11) will be set to either USER_ISOLATION or SINGLE_USER, rather than the previously expected NONE once the workspace is attached to a UC Metastore.
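One way to reduce this risk is to have external orchestrators state the access mode explicitly in the cluster specification instead of relying on the default. The sketch below is an assumption about how such a helper might look; `data_security_mode` and `single_user_name` are fields of the cluster specification, while the function name and sample values are hypothetical.

```python
def pin_access_mode(cluster_spec, mode="SINGLE_USER", single_user_name=None):
    """Return a copy of a new-cluster spec with an explicit data_security_mode.

    An already configured mode is left untouched, so the helper is safe to
    apply to every job definition an orchestrator submits.
    """
    spec = dict(cluster_spec)  # shallow copy; the input is not modified
    spec.setdefault("data_security_mode", mode)
    if spec["data_security_mode"] == "SINGLE_USER" and single_user_name:
        spec.setdefault("single_user_name", single_user_name)
    return spec


# Hypothetical job cluster submitted by an external orchestrator.
job_cluster = {"spark_version": "14.3.x-scala2.12", "num_workers": 2}
print(pin_access_mode(job_cluster, single_user_name="etl-service-principal"))
```

Whether to pin a UC access mode or temporarily keep the previous behavior depends on where the job is in the migration, so the mode is left as a parameter.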

Streamlining Group Migration

The migration process also involves transitioning workspace-level groups to the account level. The first step is to enable the account-level SCIM application, which synchronizes users from your Identity Provider (IdP) to your Databricks account. Capturing and migrating all permissions for all principals is crucial to this process. However, manual migration is not feasible in multi-workspace environments. Therefore, we recommend using UCX for a seamless group migration. UCX captures and applies all existing permissions to the new account-level groups post-migration.

When using the Glue Data Catalog, permissions are typically managed using instance profiles in conjunction with AWS Lake Formation. During the migration to Unity Catalog, these permissions should be captured and replicated in Unity Catalog, thereby achieving unified governance.
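As an illustration of replicating read access in Unity Catalog, the sketch below emits the grants an account-level group needs to query one table. In UC, reading a table also requires USE CATALOG and USE SCHEMA on its parents, so all three statements are generated together. How permissions are captured from Lake Formation is outside this sketch, and the names in the usage are hypothetical.

```python
def table_read_grants(group, catalog, schema, table):
    """Return the GRANT statements that let `group` read one UC table."""
    return [
        f"GRANT USE CATALOG ON CATALOG `{catalog}` TO `{group}`",
        f"GRANT USE SCHEMA ON SCHEMA `{catalog}`.`{schema}` TO `{group}`",
        f"GRANT SELECT ON TABLE `{catalog}`.`{schema}`.`{table}` TO `{group}`",
    ]


# Hypothetical group and table.
for stmt in table_read_grants("data-analysts", "prod", "sales", "orders"):
    print(stmt)
```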

Tip! Create the account-level groups and sync users before migrating the permissions to avoid downtime. Do not remove the renamed workspace-level groups until the migrated groups have been tested and run in production for some time.

Establishing Unity Catalog Artifacts

Creating Unity Catalog (UC) artifacts is one of the initial steps in the migration process. This involves setting up storage credentials and external locations. You’ll also need to create UC catalogs, schemas, and volumes.
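The following sketch shows what the SQL for an external location and an external volume could look like. It assumes the storage credential has already been created (for example through the UI or API) and that the catalog and schema exist; the bucket, credential, and object names in the usage are placeholders.

```python
def external_location_sql(name, url, credential):
    """Build the DDL for an external location backed by an existing storage credential."""
    return (
        f"CREATE EXTERNAL LOCATION IF NOT EXISTS `{name}` "
        f"URL '{url}' WITH (STORAGE CREDENTIAL `{credential}`)"
    )


def external_volume_sql(catalog, schema, volume, url):
    """Build the DDL for an external volume over a cloud storage path."""
    return (
        f"CREATE EXTERNAL VOLUME IF NOT EXISTS `{catalog}`.`{schema}`.`{volume}` "
        f"LOCATION '{url}'"
    )


# Placeholder names; the volume path must fall under an external location.
print(external_location_sql("landing_zone", "s3://example-bucket/landing", "lake_credential"))
print(external_volume_sql("prod", "landing", "raw_files", "s3://example-bucket/landing/raw"))
```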

The corresponding data objects, which include tables, views, and functions, should be established as part of the synchronization job discussed in the previous sections.

Tip! Assign the catalogs to specific workspaces to avoid undesired data access.

Navigating the Data Migration Phase

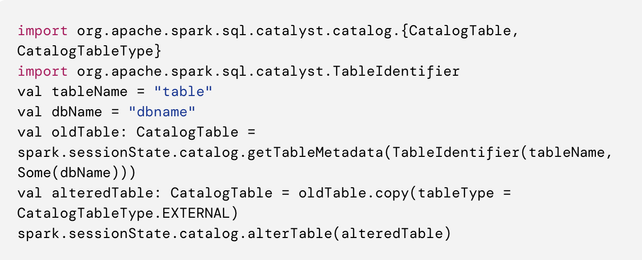

During the data migration phase, your data objects are transferred to Unity Catalog (UC). Glue tables can be categorized as either managed or external. It’s important to tread carefully here to avoid triggering large-scale data movement. To prevent this, convert any managed tables stored in a DBFS-mounted cloud location to Glue external tables.

Utilize the SYNC command to migrate all external tables to UC. For the remaining managed tables, use CTAS or DEEP CLONE. As mentioned in previous sections, employ a two-way sync job to maintain synchronization between the migrated tables and Glue tables during migration. For all non-tabular data access, create Volumes over the cloud storage path. However, if it’s a DBFS path, you should copy the data to a volume created over a cloud storage path.
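The choice between SYNC, DEEP CLONE, and CTAS can be sketched as a small decision function. The rule used here (DEEP CLONE for managed Delta tables, CTAS for other managed tables) is an assumption made for this example, not a rule stated above; table names are passed in fully qualified.

```python
def migration_sql(table_type, data_format, source, target):
    """Pick a migration statement for one table.

    External tables are synced in place; managed tables are copied.
    """
    if table_type.upper() == "EXTERNAL":
        # No data movement: only metadata is registered in Unity Catalog.
        return f"SYNC TABLE {target} FROM {source}"
    if data_format.lower() == "delta":
        # Assumption: managed Delta tables are copied with DEEP CLONE.
        return f"CREATE TABLE IF NOT EXISTS {target} DEEP CLONE {source}"
    # Other managed formats fall back to CREATE TABLE AS SELECT.
    return f"CREATE TABLE IF NOT EXISTS {target} AS SELECT * FROM {source}"


print(migration_sql("EXTERNAL", "parquet", "hive_metastore.sales.orders", "prod.sales.orders"))
print(migration_sql("MANAGED", "delta", "hive_metastore.sales.returns", "prod.sales.returns"))
```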

Migrating Pipelines: Cluster and Code Adjustments

Cluster Adjustments

For your jobs and queries to run smoothly, they must operate on a Unity Catalog (UC) supported cluster. You can choose between shared access mode and single-user access mode. UC requires DBR 11.3 LTS or higher, with the latest LTS version being the recommended choice. Note that a cluster should not connect to Glue and UC simultaneously. All access controls should be managed through UC, which means instance profiles should not be attached to the upgraded clusters, except in certain scenarios discussed later.

Tip! Opt for shared access mode whenever possible. Leverage cluster policies to implement access mode changes, instance profile restrictions, and configuration changes. This reduces the effort required by individual teams. To expedite the UC migration process, consider upgrading your existing clusters to the latest DBR version beforehand.
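A cluster policy that enforces these choices might look roughly like the definition below. This is a sketch under assumptions: it fixes the access mode, forbids instance profiles, and defaults to the latest LTS runtime. The function name is hypothetical, and a real policy would likely carry additional rules.

```python
import json


def uc_policy_definition(access_mode="USER_ISOLATION"):
    """Return a cluster policy definition that steers clusters toward UC.

    USER_ISOLATION corresponds to shared access mode.
    """
    return {
        # Fix the access mode so teams cannot create non-UC clusters.
        "data_security_mode": {"type": "fixed", "value": access_mode},
        # Block instance profiles; access is governed through UC instead.
        "aws_attributes.instance_profile_arn": {"type": "forbidden"},
        # Default to the latest LTS runtime without blocking other versions.
        "spark_version": {"type": "unlimited", "defaultValue": "auto:latest-lts"},
    }


# The policy definition is submitted as a JSON document.
print(json.dumps(uc_policy_definition(), indent=2))
```

Workloads that still need an instance profile, as discussed later, would need a separate policy without the `forbidden` rule.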

Code Adjustments

The code needs to be modified to read from and write to the tables in UC. All mount references should be updated: paths to non-tabular data should point to volumes, and paths that refer to tables should be replaced with the table name. Any existing global init scripts should also be replaced. If boto3 is in use, it should be replaced with volumes when possible.
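Rewriting mount references for non-tabular data can often be automated with a simple path mapping. The sketch below assumes you maintain a dictionary from mount points to volume paths; the mapping shown in the usage is hypothetical.

```python
def to_volume_path(path, mapping):
    """Translate a DBFS mount path into a Unity Catalog volume path.

    Accepts "dbfs:/mnt/...", "/dbfs/mnt/..." and "/mnt/..." forms and returns
    the original path unchanged when no mount in `mapping` matches.
    """
    normalized = path
    if normalized.startswith("dbfs:"):
        normalized = normalized[len("dbfs:"):]
    elif normalized.startswith("/dbfs/"):
        normalized = normalized[len("/dbfs"):]
    # Check longer mount points first so nested mounts win over their parents.
    for mount in sorted(mapping, key=len, reverse=True):
        prefix = mount.rstrip("/")
        if normalized == prefix or normalized.startswith(prefix + "/"):
            return mapping[mount].rstrip("/") + normalized[len(prefix):]
    return path


# Hypothetical mapping from a mount point to a volume.
MOUNT_TO_VOLUME = {"/mnt/raw": "/Volumes/prod/landing/raw_files"}
print(to_volume_path("dbfs:/mnt/raw/2024/orders.csv", MOUNT_TO_VOLUME))
```

Paths that point at table data should not go through this mapping; those references are better replaced by the three-level table name.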

If dynamic views are present, they should be modified to use the is_account_group_member function. Additionally, use row filters and column masks to enable fine-grained access at the row and column levels. Clone your DLT pipelines to write data to the UC tables.
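For dynamic views, the function swap can be sketched as a text rewrite of the view definition. This assumes the existing views call `is_member` for workspace-level group checks; the regular expression is deliberately naive and would also touch matching text inside string literals or comments, so the result should be reviewed before it is applied.

```python
import re

# Matches calls to is_member(...), case-insensitively, as a whole word.
_IS_MEMBER_CALL = re.compile(r"\bis_member\s*\(", re.IGNORECASE)


def upgrade_view_sql(view_sql):
    """Replace is_member(...) calls with is_account_group_member(...)."""
    return _IS_MEMBER_CALL.sub("is_account_group_member(", view_sql)


# Hypothetical dynamic view body that masks a column for non-admins.
legacy = (
    "SELECT id, CASE WHEN is_member('admins') THEN email "
    "ELSE 'REDACTED' END AS email FROM users"
)
print(upgrade_view_sql(legacy))
```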

Tip! Set the default catalog at the cluster level through cluster policies. This avoids changes to the jobs/notebooks and facilitates the switch from Glue to UC.
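As a sketch of this tip, the helper below adds a fixed Spark configuration for the default catalog to a cluster policy definition. It assumes the `spark.databricks.sql.initial.catalog.name` setting is what controls the default catalog on your runtime; verify this against the documentation for your DBR version. The helper name and catalog name are hypothetical.

```python
def with_default_catalog(policy_definition, catalog):
    """Return a copy of a policy definition that fixes the default catalog."""
    updated = dict(policy_definition)  # shallow copy; the input is not modified
    # Assumption: this Spark setting selects the default catalog for the cluster.
    updated["spark_conf.spark.databricks.sql.initial.catalog.name"] = {
        "type": "fixed",
        "value": catalog,
    }
    return updated


policy = with_default_catalog({"data_security_mode": {"type": "fixed", "value": "USER_ISOLATION"}}, "prod")
print(policy)
```

With the default catalog set this way, existing two-level names such as `sales.orders` resolve inside the chosen UC catalog without code changes.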

Adapting Downstream Systems

In the migration process, it’s essential to adjust your downstream systems, including reporting and visualization tools, to read from Unity Catalog (UC) tables. This also involves enabling UC in the SQL warehouses that are used by these downstream systems.

Interacting with External Systems and AWS services

For external systems to consume data produced by workloads within the Databricks ecosystem, it’s recommended to use volumes and Delta Sharing. There may be scenarios where interaction with other AWS services is required or when you need to read data from AWS accounts not managed by your data platform. In these cases, attach an instance profile with only the necessary permissions.

This also applies to scenarios where external libraries use AWS-specific services. For instance, Great Expectations uses boto3 internally. Therefore, when using Great Expectations, an instance profile must be attached.

Moreover, any existing external workloads using AWS Athena can also be migrated to run on UC clusters. Since all of the metadata is available in UC, this unifies your data workloads.
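For the Delta Sharing route recommended at the start of this section, the statements involved could be sketched as follows. The share, recipient, and table names are placeholders, and recipient setup details (such as authentication and token handling) are left out.

```python
def share_table_sql(share, recipient, table):
    """Return the statements that expose one UC table to an external recipient."""
    return [
        f"CREATE SHARE IF NOT EXISTS `{share}`",
        f"ALTER SHARE `{share}` ADD TABLE {table}",
        f"CREATE RECIPIENT IF NOT EXISTS `{recipient}`",
        f"GRANT SELECT ON SHARE `{share}` TO RECIPIENT `{recipient}`",
    ]


# Placeholder names for illustration only.
for stmt in share_table_sql("sales_share", "partner_bi", "prod.sales.orders"):
    print(stmt)
```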

Decommission

The workloads and clusters upgraded to UC shouldn’t interact with Glue tables. Once all the workloads are moved to UC, you can stop the sync job and remove the tables from Glue.

Wrap-up

Migrating from AWS Glue Data Catalog to Unity Catalog is a significant step towards enhancing your data governance and management. This blog post has provided a comprehensive guide on planning and executing this migration with minimal impact on your downstream customers. We recommend using the open-source UCX project by Databricks Labs to simplify and automate this migration.

Upgrade your workloads to Unity Catalog today, benefit from unified governance, and unlock a world of efficient, collaborative, and productive data management. Get in touch with your Databricks representative to learn more about Unity Catalog and get assistance with your migration to Unity Catalog.
