Turn on suggestions

Auto-suggest helps you quickly narrow down your search results by suggesting possible matches as you type.

Showing results for

Technical Blog

Explore in-depth articles, tutorials, and insights on data analytics and machine learning in the Databricks Technical Blog. Stay updated on industry trends, best practices, and advanced techniques.

Turn on suggestions

Auto-suggest helps you quickly narrow down your search results by suggesting possible matches as you type.

Showing results for

- Databricks Community

- Technical Blog

- Why Databricks SQL Serverless is the best for BI w...

Databricks Employee

Options

- Subscribe to RSS Feed

- Mark as New

- Mark as Read

- Bookmark

- Subscribe

- Printer Friendly Page

- Report Inappropriate Content

12-08-2023

07:14 AM

Authors: Andrey Mirskiy (@AndreyMirskiy) and Marco Scagliola (@MarcoScagliola)

Introduction

With this blog post we start our series on Databricks SQL Serverless, using tangible examples to explain why it is the best fit for BI workloads. We will cover various techniques and features available in Databricks SQL; discuss key considerations; share sample reproducible code for you to test and learn. This will include (but not limited) features which enable Databricks SQL Serverless performance and scalability at large scale BI workloads to unlock the value of data in enterprise Lakehouses.

In the realm of BI workloads, fast data retrieval is vital to meet customer-defined Service Level Agreements (SLAs), Service Level Objectives (SLOs) and Service Level Indicators (SLIs). Within Databricks SQL we leverage within the product a variety of optimization techniques. These not only speed up query execution but also support efficiency, leading to cost reductions and optimized resource use within analytical workloads.

This blog post explores the high-performance capabilities of Databricks SQL Serverless for BI workloads, with insights illustrated via sample test scenarios.

Readers are encouraged to use the configuration and code provided in this article to replicate the test cases. The SQL code leverages Databricks built-in sample catalog, eliminating the need for additional database setups or data loading.

Disk Cache Overview

Caching in databases enhances performance by minimizing Input and Output (I/O) read and write operations.

Databricks has several methods of caching. In this blog post we are focusing on Disk Cache (Azure, AWS, GCP). By leveraging locally attached Solid State Drives (SSD), this method enhances query performance through on-disk data storage, leading to faster data retrieval.

Demo Scenario

Demo Setup

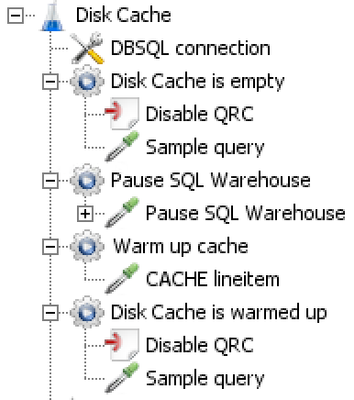

This demo uses the ‘samples’ catalog and ‘tpch’ schema. These samples come preinstalled in all workspaces. In image 1 we can see the JMeter configuration pane for this first test case. The ‘DBSQL connection’ contains the JDBC connection to the Databricks SQL Warehouse, this is common to all test cases and that the QRC feature has been turned off.

Image 1: JMeter configuration pane for the Disk Cache test case.

Code 1 below, shows the query used for this initial test case.

-- Sample Query Used for the test

select

l_returnflag,

l_linestatus,

sum(l_quantity) as sum_qty,

sum(l_extendedprice) as sum_base_price,

sum(l_extendedprice * (1 - l_discount)) as sum_disc_price,

sum(l_extendedprice * (1 - l_discount) * (1 + l_tax)) as sum_charge,

avg(l_quantity) as avg_qty,

avg(l_extendedprice) as avg_price,

avg(l_discount) as avg_disc,

count(*) as count_order

from lineitem

where

l_shipdate <= date '1998-12-01' - interval '79' day

group by

l_returnflag,

l_linestatus

order by

l_returnflag,

l_linestatus;

Code 1: Query used for the Disk Cache test plan.

Cold Cache

A "cold cache" refers to a cache area that hasn't yet stored any frequently accessed data or has been cleared of its previous contents.

In performance testing or benchmarking, it's often useful to understand how systems perform with cold and warm caches, as this provides insights into both the worst-case (cold) and best-case (warm) scenarios regarding data access latency speed.

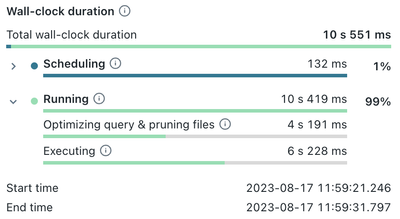

We start our test by executing the first query instruction, with an ‘empty’ Disk Cache. As shown in image 2 below, the query execution time exceeds ten (10) seconds.

Image 2: Wall-clock duration metrics for the initial query with cold Disk cache.

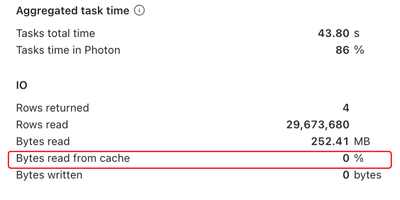

Because the Disk Cache is empty, the query engine must retrieve all data from the remote cloud storage. Observe, on image 3 below, the “Bytes read from cache” metric which is at 0%.

Image 3: Query execution metrics while the Disk Cache is ‘empty’.

Warm Cache

Warming up the Disk Cache refers to the process of preloading frequently accessed data into the cache before it's actually needed for primary operations. This can be achieved by leveraging the CACHE statement, as shown in code 2.

Please note that before executing the statement shown below (e.g., code 2) and the test SQL-query (e.g., code 1) you need to restart SQL warehouse in order to empty the Disk Cache.

---Warming up the cache

CACHE SELECT * FROM lineitem;

Code 2: Query to cache the content of the lineitem table.

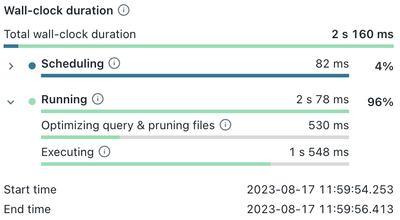

When executing the test query (e.g., code 1), we can see that the performance is notably faster, taking about only two (2) seconds. In comparison, the previous execution with a cold cache, which took over ten (10) seconds.This represents an 80% improvement in the query execution time.

Image 4: Wall-clock duration metrics for the query after the Disk Cache has been warmed up.

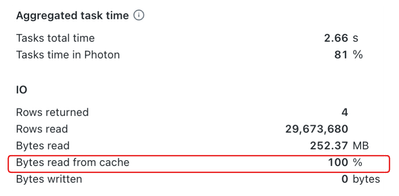

Notice in Image 5, the metric “Bytes read from cache” which stands at 100%. This suggests that all the data needed for this query is readily available in the Disk Cache, leading to significantly faster execution.

Image 5: Query execution metrics leveraging a Warm Cache.

Conclusion

Based on the test case detailed in this blog post, it's clear that caching plays a pivotal role in enhancing data retrieval processes. By storing frequently accessed ('hot') data in cache, there's a noticeable reduction in the need for constant data fetching. This approach leads to decreased latency, faster application responses, and a more seamless user experience. Essentially, caching strikes a balance between speed and efficiency, refining data retrieval for optimal performance. Databricks cache provides added versatility, enabling users to apply caching to whole tables or to specific query results. Organizations can further elevate the efficiency and user experience of data access by leveraging this technique in their BI workloads.

While in traditional data warehousing, there is often a concern about how the size of the data impacts the choice and sizing of the data warehouse, in the case of Databricks SQL, data isn't permanently stored on the warehouse itself. Instead, the data is stored in a cloud object store.

With Serverless architecture, you can size the Databricks SQL warehouse independently of data storage. This decoupling allows for flexibility in adjusting the computational resources allocated to the queries without worrying about the amount of data stored. Users can scale up or down based on the complexity of their queries or the volume of concurrent queries without directly impacting data storage costs.

In the next post we will discuss Apache jMeter which will be used in the following posts to demonstrate Databricks SQL features.

2 Comments

You must be a registered user to add a comment. If you've already registered, sign in. Otherwise, register and sign in.

Related Content

- %uv pip in MVP Articles

- Unit Testing TransformWithState Logic with TwsTester in Technical Blog

- Introducing 5XL SQL Warehouses: A Practical Guide to Meeting SLAs for Your Most Demanding Workloads in Technical Blog

- Performing DML Operations on Unity Catalog Managed Tables from External Engines in Technical Blog

- Scaling Enterprise AI with Databricks Without Losing Control in Community Articles