- 2656 Views

- 4 replies

- 2 kudos

Agent outside databricks communication with databricks MCP server

Hello Community! I have the following use case in my project: User -> AI agent -> MCP Server -> Databricks data from Unity Catalog. The AI agent is not created in Databricks; the MCP server is created in Databricks and should expose tools to get data fr...

Hello @mark_ott, thank you very much for this! It gives me a lot of knowledge! I think that since the data is stored in Databricks, we will go with MCP deployed there as well. I have the next set of questions: can you please tell me how to deploy my mcp se...

- 1155 Views

- 2 replies

- 1 kudos

Task Hanging issue on DBR 15.4

Hello, I am running a Structured Streaming pipeline with 5 models loaded using pyfunc.spark_udf. Lately we have been noticing a very strange issue: tasks hang and the batch takes a very long time to finish executing. CPU utilization is around...

On DBR 15.4 the DeadlockDetector: TASK_HANGING message usually just means Spark has noticed some very long-running tasks and is checking for deadlocks. With multiple pyfunc.spark_udf models in a streaming query the tasks often appear “stuck” because ...

- 3571 Views

- 4 replies

- 3 kudos

Resolved! Asset bundle vs terraform

I would like to understand the differences between Terraform and Asset Bundles, especially since in some cases, they can do the same thing. I’m not talking about provisioning storage, networking, or the Databricks workspace itself—I know that is Terr...

First, DAB uses Terraform in the background. Having said that, my recommendation is to use DAB for whatever components it already supports, and other IaC tools only for resources that are not yet supported or are not Databricks-specific (private VNets, external storage, etc.) ...

- 862 Views

- 3 replies

- 0 kudos

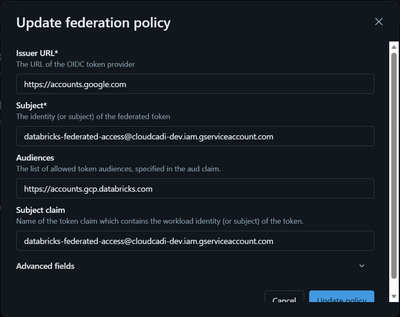

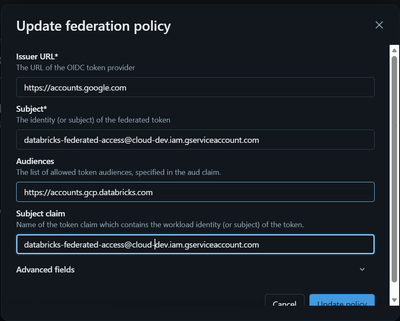

Databricks Federated Token Exchange Returns HTML Login Page Instead of Access Token (GCP → Databricks)

Hi everyone, I’m trying to implement federated authentication (token exchange) from Google Cloud → Databricks without a client ID / client secret, using only a Google-issued service account token. I have also created a federation policy in Databr...

You might want to check whether the issue is related to your federation policy configuration. Try reviewing the following documentation to confirm that your policy is correctly set up (issuer, audiences, and other expected claims): https://docs.databri...

- 2613 Views

- 3 replies

- 0 kudos

Azure VM quota for databricks jobs - demand prediction

Hey folks, a quick check: I wanted to gather thoughts on how you manage demand for Azure VM quota so you don't run into quota limit issues. In our case, we have several data domains (finance, master data, supply chain...) executing their projects in Dat...

Yes, Azure Databricks compute policies let you define “quota-like” limits, but only within Databricks, not Azure subscription quotas themselves. You still rely on Azure’s own quota system for vCPU/VM core limits at the subscription level. What you c...
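As a rough illustration of the "quota-like" limits a compute policy can express, here is a minimal sketch that builds a policy definition as a plain Python dictionary. The helper name `build_policy_definition`, the node types, and the numeric limits are assumptions chosen for illustration, not values from this thread. The policy caps only what a single Databricks cluster may request; it does not change the Azure subscription quota.

```python
import json


def build_policy_definition(max_workers, allowed_node_types, max_dbus_per_hour):
    """Return a compute policy definition that caps the size of one cluster.

    Hypothetical helper: the limits and node types are illustrative only.
    """
    return {
        # Upper bound on autoscaling workers for any cluster using the policy.
        "autoscale.max_workers": {"type": "range", "maxValue": max_workers},
        # Restrict clusters to a known set of VM sizes (hence known vCPU counts).
        "node_type_id": {"type": "allowlist", "values": allowed_node_types},
        # Cap the hourly DBU consumption of a single cluster.
        "dbus_per_hour": {"type": "range", "maxValue": max_dbus_per_hour},
    }


definition = build_policy_definition(
    max_workers=8,
    allowed_node_types=["Standard_DS3_v2", "Standard_DS4_v2"],
    max_dbus_per_hour=50,
)
# The JSON string is what would be supplied as the policy definition.
print(json.dumps(definition, indent=2))
```

Because each policy bounds one cluster at a time, total vCPU demand across domains still has to be estimated separately, for example by multiplying the per-cluster cap by the number of clusters expected to run concurrently.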

- 866 Views

- 1 reply

- 0 kudos

Deploying Jobs in Databricks

How can I use the Databricks Python SDK from Azure DevOps to create or update a job and explicitly assign it to a cluster policy (by policy ID or name)? Could you show me an example where the job definition includes a task and a job cluster that refe...

To use the Databricks Python SDK from Azure DevOps to create or update a job and assign it explicitly to a cluster policy, specify the cluster policy by its ID in the job cluster section of your job definition. This ensures the cluster spawned for ...
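A minimal sketch of the job definition shape described above, written as a plain dictionary in the layout of a Jobs API 2.1 create request. It does not call the SDK. The function name `build_job_settings`, the cluster key, the runtime version, the node type, the notebook path, and the policy ID are all placeholders assumed for illustration.

```python
import json


def build_job_settings(job_name, notebook_path, policy_id):
    """Build a job definition whose job cluster references a cluster policy.

    Hypothetical helper: cluster size, runtime, and node type are placeholders.
    """
    cluster_key = "policy_cluster"  # assumed name linking the task to the cluster
    return {
        "name": job_name,
        "job_clusters": [
            {
                "job_cluster_key": cluster_key,
                "new_cluster": {
                    "spark_version": "15.4.x-scala2.12",
                    "node_type_id": "Standard_DS3_v2",
                    "num_workers": 2,
                    # The policy is referenced by ID inside the cluster spec.
                    "policy_id": policy_id,
                    # Ask the service to fill omitted fields from policy defaults.
                    "apply_policy_default_values": True,
                },
            }
        ],
        "tasks": [
            {
                "task_key": "main",
                # The task runs on the job cluster defined above.
                "job_cluster_key": cluster_key,
                "notebook_task": {"notebook_path": notebook_path},
            }
        ],
    }


settings = build_job_settings(
    job_name="example-job",
    notebook_path="/Shared/example_notebook",
    policy_id="ABC123DEF456",  # placeholder policy ID
)
print(json.dumps(settings, indent=2))
```

With the Python SDK, the same fields would be passed as typed objects to `WorkspaceClient().jobs.create(...)`. If only the policy name is known, it would first need to be resolved to an ID, since the cluster spec takes `policy_id`.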

- 970 Views

- 1 reply

- 0 kudos

Unable to create connection in Power platform

When I try to create the connection, I get the error message "Connection test failed. Please review your configuration and try again." Here is the response in the network trace: My connection credentials are correct. So, I'm not sure what I am doing wr...

The error message "Connection test failed. Please review your configuration and try again." when connecting Databricks to Power Platform can occur due to several common issues, even if your credentials are correct. Key Troubleshooting Steps Double-c...

- 459 Views

- 1 reply

- 0 kudos

Need to create an Identity Federation between my Databricks workspace/account and my GCP account

I am trying to authenticate to my Databricks account using federation in order to fetch data. I have created a service account in GCP and generated a token using Google Auth, but I don't know how to exchange the token to authenticate Da...

Hi @GeraldBriyolan, you may need to use a Google ID token to do what you are trying to do: https://docs.databricks.com/gcp/en/dev-tools/auth/authentication-google-id

- 1469 Views

- 3 replies

- 0 kudos

Cap on OIDC (max 20) Enable workload identity federation for GitHub Actions

Hi Databricks community, I followed the page below and created GitHub OIDC federation policies, but there seems to be a cap on how many a service principal can have (20 max). Is there any workaround for this or some other solution apart from using Client ID an...

I can't speak to exactly why, but allowing wildcards creates security risks, and most identity providers and standards guidance require exact, pre-registered URLs.

- 987 Views

- 5 replies

- 3 kudos

Prevent Access to AI Functions Execution

As a workspace admin, I want to prevent unexpected API costs from unrestricted usage of AI Functions (AI_QUERY() etc.). How can we ensure that only users in a particular group can execute AI Functions? I understand the function execution cost can be vi...

OK, so it has to be done at the individual endpoint and function level.

- 1582 Views

- 1 reply

- 0 kudos

Azure DB Workspace Not Connected to DB Account Unity Catalog & Admin Console Missing (identity=null)

Hi team, I created a brand-new Azure environment and an Azure Databricks workspace, but the workspace appears to be in classic (legacy) mode and is not connected to a Databricks Account, so Unity Catalog cannot be enabled. Below are all the details and...

I think you need a "corporate" account with the Azure Global Administrator role to enable or access the Databricks account. For instance, in some of my demo workspaces I can't access UC with my "hotmail" account. I haven't looked deeper into it so far. So, a...

- 2820 Views

- 7 replies

- 1 kudos

Resolved! Deployment of private databricks workspace.

I tried to create a Databricks configuration with VNet injection and ran into a few problems during deployment. 1. I tried to deploy my workspace using IaC and Terraform. The whole time I faced an issue with the NSG, even when I created the configuration as follows in this ...

All issues were resolved. Ready-to-deploy code: locals { default_tags = { terraform = "true" workload = var.app env = var.environment } } resource "azurerm_databricks_access_connector...

- 554 Views

- 1 reply

- 0 kudos

Printing Notebook Dashboards

Is it possible to print the tables in a notebook dashboard to a PDF? I have about 10 tables for stratifications in a dashboard that would be great to print all at once into a clean pdf report.

Hi @DArcher - are you using a legacy dashboard or a modern Lakeview (AI/BI) dashboard? For the legacy, notebook-based one, there is no direct way to export. You will probably have to write a custom Python script to write the output to HTML and then print to PDF...

- 3347 Views

- 6 replies

- 2 kudos

cloud_infra_costs

I was looking at the system catalog and realized that there is an empty table called cloud_infra_costs. Could you tell me what this is for and why it is empty?

You can also take a look at the built-in cost control dashboard explained in the video below or in the official Databricks documentation at https://docs.databricks.com/aws/en/admin/usage/ . Concerning the dashboard, the relevant point for me was that you can insp...

- 1327 Views

- 1 reply

- 0 kudos

Resolved! Account Creation

I attempted to create my account, but I ran into an issue during the process. Here is what I did: - I visited your website and entered my verification code. - When asked about how I will use the database, I selected “For Work, Set up with my cloud” s...

Hello @minkun81! It looks like you’re stuck in a verification-code loop. Could you try using a different browser, switching to incognito mode, or clearing your cache and cookies before attempting the login again? Also, please make sure you're followin...
