- 1922 Views

- 2 replies

- 2 kudos

Impossible to access Terraform created external location?!

Hi all, There seems to be an external location created that nobody within the organization can actually see or manage, because it has been created with a Google service account in Terraform. Here is the problem: DESCRIBE EXTERNAL LOCATION `gcsbucketname...

I would agree that the metastore admin(s) should be able to see the external location. This issue can happen with Terraform scripts if the script doesn't grant additional rights on the external location.
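As a rough illustration of "granting additional rights", here is a minimal sketch that only builds the Databricks SQL statements the owning principal could run after creating the location. The location name `my_external_location`, the group `metastore-admins`, and the helper names are hypothetical and not taken from the thread; in a Terraform setup the equivalent grant would normally live in the Terraform configuration itself.

```python
from __future__ import annotations


def quote_ident(name: str) -> str:
    """Backtick-quote an identifier, doubling any embedded backticks."""
    return "`" + name.replace("`", "``") + "`"


def external_location_access_sql(
    location: str,
    principal: str,
    transfer_ownership: bool = False,
) -> list[str]:
    """Build SQL that lets `principal` see and manage an external location.

    This only produces strings; it does not connect to Databricks.
    """
    loc = quote_ident(location)
    who = quote_ident(principal)
    statements = [f"GRANT ALL PRIVILEGES ON EXTERNAL LOCATION {loc} TO {who};"]
    if transfer_ownership:
        # Optionally hand ownership to a human-managed group as well.
        statements.append(f"ALTER EXTERNAL LOCATION {loc} OWNER TO {who};")
    return statements


if __name__ == "__main__":
    # Hypothetical names, for illustration only.
    for stmt in external_location_access_sql(
        "my_external_location", "metastore-admins", transfer_ownership=True
    ):
        print(stmt)
```

The statements would have to be executed by a principal that already owns the location (here, the service account used by Terraform).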

- 1274 Views

- 1 reply

- 0 kudos

Unexpected Behavior with Azure Databricks and Entra ID SCIM Integration

Hi everyone, I'm currently running some tests for a company that uses Entra ID as the backbone of its authentication system. Every employee with a corporate email address is mapped within the organization's Entra ID. Our company's Azure Databricks is c...

Hello @antonionuzzo, This behavior is occurring because Azure Databricks allows workspace administrators to invite users from their organization's Entra ID directory into the Databricks workspace. This capability functions independently of whether th...

- 2080 Views

- 3 replies

- 1 kudos

Monitor workspace admin activities

Hello everyone, I am conducting tests on Databricks AWS and have noticed that in an organization with multiple workspaces, each with different workspace admins, a workspace admin can invite a user who is not mapped within their workspace but is alread...

You do have some control over what workspace admins can do. Databricks allows account admins to restrict workspace admin permissions by enabling the RestrictWorkspaceAdmins setting. Have a look here: https://docs.databricks.com/aws/en/admin/workspace...

- 1961 Views

- 1 reply

- 2 kudos

Resolved! Predictive Optimization with multiple workspaces

We currently have an older instance of Azure Databricks that I migrated to Unity Catalog. Unfortunately, I ran into some weird issues that don't seem fixable, so I created a new instance and pointed it to the same metastore. The setting at the metastor...

Hi @KIRKQUINBAR, if you enable Predictive Optimization at the metastore level in Unity Catalog, it automatically applies to all Unity Catalog managed tables within that metastore, no matter which workspace is accessing them. PO runs centrally, so the...

- 1970 Views

- 2 replies

- 0 kudos

Restrict serverless options to a subset of users

Hi, It seems as if there is no way to restrict serverless options to only a subset of users. If a user has no budget policy, I assumed they could not run a serverless workload. Unfortunately, this is not the case and it will become a cost governanc...

Any news on this? This is a major blocker for us to enable serverless, as we only have a handful of expert users.

- 3158 Views

- 3 replies

- 0 kudos

Resolved! Help with Databricks SQL Queries

Hi everyone, I’m relatively new to Databricks and trying to optimize some SQL queries for better performance. I’ve noticed that certain queries take longer to run than expected. Does anyone have tips or best practices for writing efficient SQL in Data...

When working with large datasets in Databricks SQL, here are some practical tips to boost performance: Leverage Partitioning: Partition large Delta tables on low-cardinality columns that are frequently used in filters (like date or region). It helps Databric...

- 4702 Views

- 3 replies

- 2 kudos

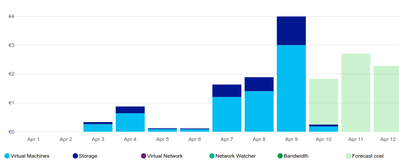

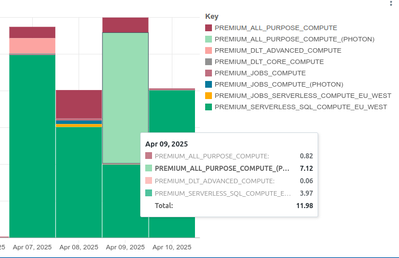

Resolved! How does reported billing in Azure relate to Databricks?

Hi, I'm confused by how costs in Azure relate to costs in Databricks. I'm currently on Azure Pay-as-you-Go and Databricks Trial. There's nothing going on in my Azure account apart from Databricks. This is the cost bar chart on Azure (€): This is the co...

Thanks. I can't find any documentation on how DBUs translate to $/€. The pricing calculator only works for AWS/GCP. Where would I find that info?
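For orientation only, the arithmetic behind a DBU charge can be sketched as DBUs consumed multiplied by a per-DBU rate, with the underlying VM cost showing up as a separate charge on the Azure side. The rates and amounts below are made-up placeholders, not real prices, and the function name is hypothetical; actual rates depend on workload type, tier, region, and currency.

```python
def estimate_cost(dbus_consumed: float, rate_per_dbu: float, vm_cost: float = 0.0) -> dict:
    """Estimate total cost as DBU charge plus separately billed VM cost.

    All inputs are in the same currency; the values are supplied by the
    caller and are NOT looked up from any price list.
    """
    dbu_cost = dbus_consumed * rate_per_dbu
    return {
        "dbu_cost": dbu_cost,
        "vm_cost": vm_cost,
        "total": dbu_cost + vm_cost,
    }


if __name__ == "__main__":
    # Placeholder numbers: 10 DBUs at a hypothetical 0.5 per DBU, plus 2.0 of VM cost.
    print(estimate_cost(dbus_consumed=10, rate_per_dbu=0.5, vm_cost=2.0))
```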

- 2832 Views

- 3 replies

- 0 kudos

Disable 'Allow trusted Microsoft services to bypass this firewall' for Azure Key Vault

Currently, even when using a VNet-injected Databricks workspace, we are unable to fetch secrets from AKV if 'Allow trusted Microsoft services to bypass this firewall' is disabled. The secret is used via an AKV-backed secret scope and the key vault is ...

Any update on this? Is it possible to disable the 'Allow trusted services...' rule if you are using a private endpoint or whitelisting certain IPs? Or is it required no matter what?

- 2684 Views

- 5 replies

- 0 kudos

Issue creating a workspace with Databricks Partner Account & AWS Sandbox Environment

Subject: Hello, my name is Priya, and my organization is a Databricks partner. We've been given access to the Partner Academy, and my company has set up an AWS sandbox environment to support our certification preparation. I'm currently trying to set up ...

I do not see anything created under network configuration. Private access settings and VPC endpoints both show a 403 status when I click on them, and there is a default policy under network policies. I will reach out to the account executive.

- 1365 Views

- 1 reply

- 0 kudos

Metastore consolidated-northeuropec2-prod-metastore-2.mysql.database.azure.com

Hi, One of the network requirements on the Databricks site is to allow connections to one of these public addresses: consolidated-northeuropec2-prod-metastore-2.mysql.database.azure.com. Do you know if this address is for the legacy Hive metastore or it's used by...

The answer is that this address is used for the legacy Hive metastore. If you disable the legacy metastore, you don't need to create an exception in your firewall.

- 1502 Views

- 1 reply

- 0 kudos

Why is Databricks Using Private IP Instead of NAT Gateway's Public IP to Connect with Source System

I have a publicly accessible SQL database that is protected by a firewall. I am trying to connect this SQL database to Databricks, but I'm encountering an authentication error. I have double-checked the credentials, port, and host, and they are all c...

The issue occurs because the Databricks cluster's outbound traffic isn't routed through the NAT Gateway due to misconfigured network settings or conflicting outbound connectivity configurations. This should be mostly resolved by your Network team but...

- 4019 Views

- 4 replies

- 2 kudos

How to delete or clean Legacy Hive Metastore after successful completion of UC migration

Say we have completed the migration of tables from Hive Metastore to UC. All the users, jobs and clusters are switched to UC. There is no more activity on Legacy Hive Metastore. What is the best recommendation on deleting or cleaning the Hive Metastor...

Sometime in the last couple of days, this setting was pushed to my account; it looks like what you want. To see if you've been added, go to your Account Console and look under Previews.

- 2867 Views

- 4 replies

- 2 kudos

Resolved! Disable ability to choose PHOTON

Dear all, as an administrator, I want to restrict developers from choosing the 'photon' option in job clusters. I see this in the job definition when they choose it: "runtime_engine": "PHOTON". How can I pass this as input in the policy and restrict develop...

You also need to make sure the policy permissions are set up properly. You can/should fix preexisting compute affected by the policy with the wizard in the policy edit screen.
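For reference, a minimal sketch of what such a policy definition could look like, assuming the policy pins the `runtime_engine` attribute to `STANDARD` with a `fixed` rule. The helper names and the local check are hypothetical illustrations; the actual enforcement happens in Databricks when compute is created under the policy, not in this script.

```python
import json


def photon_disabled_policy() -> dict:
    """Policy definition that pins runtime_engine to STANDARD (no Photon)."""
    return {
        "runtime_engine": {
            "type": "fixed",
            "value": "STANDARD",
            "hidden": True,  # hide the option in the UI
        }
    }


def find_violations(cluster_spec: dict, policy: dict) -> list:
    """Local illustration only: list top-level attributes in a cluster spec
    whose value differs from a 'fixed' rule in the policy."""
    violations = []
    for attribute, rule in policy.items():
        if rule.get("type") != "fixed":
            continue
        if attribute in cluster_spec and cluster_spec[attribute] != rule["value"]:
            violations.append(attribute)
    return violations


if __name__ == "__main__":
    policy = photon_disabled_policy()
    # JSON that could be used as the policy definition.
    print(json.dumps(policy, indent=2))
    # A job cluster spec like the one quoted in the question.
    print(find_violations({"runtime_engine": "PHOTON"}, policy))
```

As the reply notes, the policy only takes effect for users who are restricted to it, so the policy permissions matter as much as the definition.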

- 10205 Views

- 5 replies

- 1 kudos

Databricks App in Azure Databricks with private link cluster (no Public IP)

Hello, I've deployed Azure Databricks with a standard Private Link setup (no public IP). Everything works as expected: I can log in via the private/internal network, create clusters, and manage workloads without any issues. When I create a Databricks Ap...

Behwar: you need to create a specific private DNS zone for azure.databricksapps.com. If you run nslookup on your app's URL, you will see that it points to your workspace. In Azure, using Azure (recursive) DNS, you can see an important behavio...

- 753 Views

- 1 reply

- 0 kudos

remove s3 buckets

Hi, My Databricks deployment is based on AWS S3. I deleted my buckets, and now Databricks is not working. How do I delete my Databricks? Regards

Hello @owly! To delete Databricks after AWS S3 bucket deletion:
- Terminate all clusters and instance pools.
- Clean up associated resources, like IAM roles, S3 storage configurations, and VPCs.
- Delete the workspace from the Databricks Account Consol...
