- 504 Views

- 3 replies

- 1 kudos

Conflicting with predictive optimization.

Hi. We have a continuous DLT pipeline with tables updating every minute and partitioned by the partition_key column. Every 3-5 days, we encounter the conflict error below, caused by predictive optimization. The pipeline runs fine after restarting, but I nee...

- 1 kudos

Per the error message, I can see what is causing the issue: Predictive Optimization Job-d453e56b-97f1-425d-a33d-841bd8a3771f. This is a classic optimistic concurrency issue! What's happening is that predictive optimization runs a background job that sets tab...

- 291 Views

- 0 replies

- 2 kudos

Lakeventory: Automated Asset Discovery for Databricks Workspaces

Lakeventory is an open-source inventory tool that automatically discovers and catalogs everything in your Databricks workspaces in minutes.

What it collects:

- Workspace: notebooks, files, directories

- Compute: jobs, clusters, instance pools, policie...

- 597 Views

- 2 replies

- 0 kudos

Resolved! Databricks One Redirectio

Hello, I have an Entra ID group linked to Databricks with the Consumer Access entitlement enabled; other entitlements are unchecked. They also have "use catalog" on a specific catalog. They have "select" and "use schema" to a gold level schema wi...

- 0 kudos

Hi @NatJ, You are correct that users with only the Consumer Access entitlement are intended to see the Databricks One interface when they log in. However, the behavior you are observing with direct URLs to the catalog explorer is expected, and here i...

- 575 Views

- 3 replies

- 0 kudos

Workspace Folder ACL design

How should the Databricks workspace folder architecture be designed to support cross-team collaboration, access governance, and scalability in an enterprise platform? Please suggest below or share some ideas from your experience. Thanks. Note: I'm new t...

- 0 kudos

Hi @APJESK, To address your follow-up questions about the two behaviors you observed after implementing the folder ACL structure: ISSUE 1: USERS CAN STILL CREATE NOTEBOOKS IN THEIR HOME FOLDER This is by-design behavior. Every Databricks user automat...

- 1124 Views

- 4 replies

- 0 kudos

Table level data masking issue

Hi, we are seeing this error. Does anyone know how to fix it? [RequestId=6cf95ee8-f312-47cd-846c-dcd87158c939 ErrorClass=INVALID_PARAMETER_VALUE.ROW_COLUMN_ACCESS_POLICIES_NOT_SUPPORTED_ON_ASSIGNED_CLUSTERS] Query on table with row filter or co...

- 0 kudos

Hi @Harish_Kumar_M, The error you are seeing, "Query on table with row filter or column mask not supported on assigned clusters" (INVALID_PARAMETER_VALUE.ROW_COLUMN_ACCESS_POLICIES_NOT_SUPPORTED_ON_ASSIGNED_CLUSTERS), occurs because your cluster's ac...

- 809 Views

- 2 replies

- 2 kudos

Resolved! NetSuite JDBC Driver 8.10.184.0 Suppor

Hello, I am currently attempting to integrate NetSuite with Databricks using the NetSuite JDBC driver version 8.10.184.0. When I attempt to ingest information from NetSuite into Databricks, the job fails with a checksum error and informs ...

- 2 kudos

Hi @b_pinter, The NetSuite JDBC driver version 8.10.184.0 is indeed supported by Databricks for managed ingestion via Lakeflow Connect. The officially supported driver versions are 8.10.147.0, 8.10.170.0, and 8.10.184.0. The "JAR checksum does not ma...

- 1012 Views

- 5 replies

- 1 kudos

Resolved! Advise on "airlocking" Databricks service

Need advice: I'm building a data analysis service solution on top of Databricks and need to protect it from unauthorized data leaks, specifically file downloads. As far as I can tell, I need some sort of remote browser isolation (RBI). Is this the corr...

- 1 kudos

I do not require an "NSA-level airlock." Indeed, a malicious actor could develop a script that projects scrolled data onto the screen and records it with an external device, or more effectively, they could create a series of QR code movies to address...

- 1999 Views

- 2 replies

- 0 kudos

Resolved! Regarding - Managed vs External volumes and tables

From a creation perspective, the steps for managed and external volumes appear almost identical:

- Both require a storage credential

- Both require an external location

- Both point to customer-owned S3

So what exactly makes a volume “managed” vs “external”? Wh...

- 0 kudos

Hi @APJESK, You are right that the setup steps look similar on the surface, but the differences between managed and external volumes (and tables) are meaningful once you understand what Unity Catalog does with the data after creation. WHAT "MANAGED" ...

- 539 Views

- 1 reply

- 0 kudos

Databricks on AWS Marketplace – Unity Catalog & S3 Access Failing with SSL “Connection reset”

Hi All, I’m facing an issue accessing AWS S3 and Unity Catalog from a Databricks AWS Marketplace workspace.

Problem: Whenever Databricks tries to access S3 or Unity Catalog, it fails with: javax.net.ssl.SSLException: Connection reset

What works: Spark job...

- 0 kudos

Hi @asim_mirza_12, The javax.net.ssl.SSLException: Connection reset error you are seeing with Unity Catalog and S3 operations, while basic Spark jobs and curl commands work, typically points to a networking layer issue where the JVM's SSL handshake i...

- 3077 Views

- 5 replies

- 4 kudos

Best practices for 3-layer access control in Databricks

I'm working on an identity and access management model for Databricks and want to implement a clear 3-layer authorization approach:

- Account level: Account RBAC roles (account admin, metastore admin, etc.)

- Workspace level: Workspace roles/entitlements + workspace ACLs (c...

- 4 kudos

Hi @APJESK, Your 3-layer model (Account RBAC, Workspace ACLs, Unity Catalog privileges) is the right framework. I want to address both the overall design and the specific follow-up you posted about the Home folder and compute behavior, since those ar...

- 789 Views

- 4 replies

- 3 kudos

Resolved! How to delete an "Account Level" Storage Credential? (... I think)

This is not a production platform, but I'd like to know the answer. I suspect I have done something stupid. Using Account APIs, I created a Storage Credential. Q1: I cannot see this in a workspace, and I do not know how to see it in the account console...

- 3 kudos

Hi @ThePussCat, You’re not missing anything. This is mostly about where UC is surfaced, not about who controls it. Unity Catalog objects (including storage credentials and their workspace bindings) are metastore‑scoped, and the metastore is attached ...

- 541 Views

- 2 replies

- 0 kudos

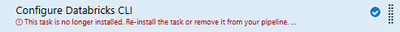

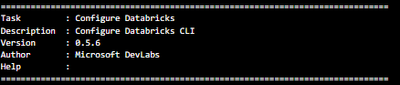

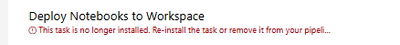

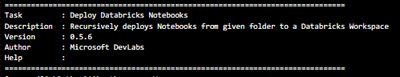

Azure DevOps Release (CD) pipeline - Databricks tasks no longer available

Hello and happy new year everyone. We've noticed that our Azure DevOps Release (CD) pipelines have had all of their Databricks tasks uninstalled, and we cannot find them in the marketplace anymore. The author for both is Microsoft DevLabs. We mainly rel...

- 0 kudos

Hi @BigAlThePal, As @szymon_dybczak mentioned, the "DevOps for Azure Databricks" extension by Microsoft DevLabs (which provided the "Configure Databricks CLI" and "Deploy Notebooks to Workspace" tasks) was deprecated and has since been removed from th...

- 3101 Views

- 2 replies

- 0 kudos

GitHub Actions OIDC with Databricks: wildcard subject for pull_request workflows

Hi, I’m configuring GitHub Actions OIDC authentication with Databricks following the official documentation: https://docs.databricks.com/aws/en/dev-tools/auth/provider-github

When running a GitHub Actions workflow triggered by pull_request, authenticati...

- 0 kudos

Hi @Valerio, The challenge you are running into is a common one when setting up OIDC federation for pull_request-triggered workflows. Here is a breakdown of the issue and several approaches to solve it.

UNDERSTANDING THE SUBJECT CLAIM FOR PULL REQUESTS...

- 937 Views

- 7 replies

- 0 kudos

No workspace in Free Edition

Hi, I have been using the Free Edition for some time with this email address. For the last 3-4 days, however, I can’t see any workspace. Whenever I log in, I see two account names, and no workspace is available. When I tried creating another accoun...

- 0 kudos

When I log in, I get the page above, with no workspace and no way to create a new one.

- 469 Views

- 2 replies

- 2 kudos

User Management tab not showing

Hi, I created the workspace with my contributor role from the Azure portal. However, while logged in, I cannot find the User Management tab. I am trying to set up Unity Catalog for user administration. How can I access this? Thanks

- 2 kudos

Hi @ZafarJ, This is a common point of confusion when getting started with Azure Databricks, and the answer depends on which level of user management you need. WORKSPACE-LEVEL USER MANAGEMENT As a workspace admin, you can manage users directly in your...
