- 3170 Views

- 1 replies

- 3 kudos

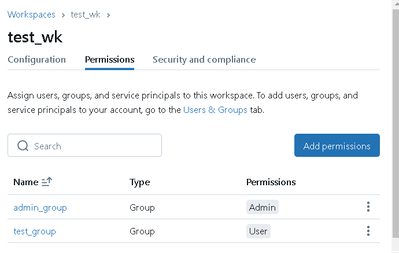

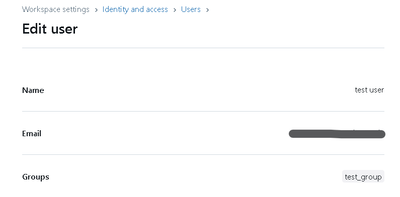

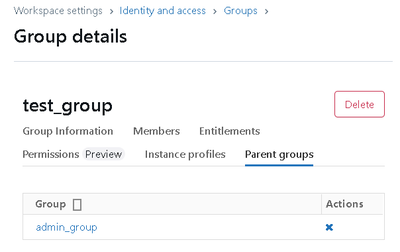

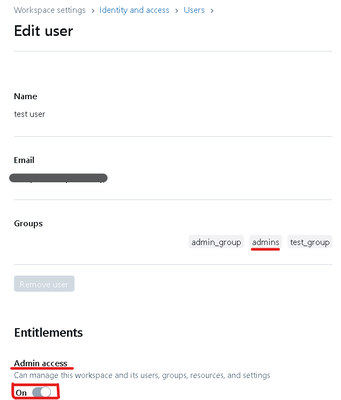

How to Grant Workspace Admin Permissions to an ID Using Parent Groups

Hello, There are several ways to grant Workspace Admin permissions in Databricks. While this may seem straightforward, I found it a bit confusing when I started using Databricks, so I’d like to share my experience. This guide is aimed at beginners. How...

- 854 Views

- 0 replies

- 0 kudos

Learn Data Engineering on Databricks step by step

For aspiring Data Engineers, getting started has always been difficult. With a decade of experience in Data Engineering, I have now put together a series of articles that can help new aspirants. The list is a small attempt to help new Data E...

- 2915 Views

- 3 replies

- 1 kudos

Is there any way to add a Matplotlib visualization to a notebook Dashboard?

So I love that Databricks lets you display a dataframe, create a visualization of it, then add that visualization to a notebook dashboard to present. However, the visualizations lack some customization that I would like. For example, the heat map vis...

- 1 kudos

This is correct; it seems the approach you want to implement is not currently supported.

- 2696 Views

- 0 replies

- 0 kudos

Unlock the Full Potential of Databricks with the Demo Center!

Hello Databricks community! If you're eager to explore how Databricks can revolutionize your data workflows, I highly recommend checking out the Databricks Demo Center. It’s packed with insights and tools designed to cater to both beginners and season...

- 4639 Views

- 0 replies

- 0 kudos

Understanding Databricks Workspace IP Access List

What is a Databricks Workspace IP Access List? The Databricks Workspace IP Access List is a security feature that allows administrators to control access to the Databricks workspace by specifying which IP addresses or IP ranges are allowed or denied a...
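To make the allow/deny idea concrete, here is a minimal sketch in plain Python using only the standard `ipaddress` module. It does not call any Databricks API. The function name `is_allowed` and the precedence rule (block entries win, and an empty allow list admits everyone) are assumptions made for illustration; check the official documentation for the exact evaluation order in your workspace.

```python
from ipaddress import ip_address, ip_network


def is_allowed(client_ip, allow_list, block_list):
    """Return True if client_ip passes the allow/block rules.

    Assumed rule order (illustrative, not taken from the post):
    1. Any match in block_list rejects the connection.
    2. If allow_list is empty, every remaining address is accepted.
    3. Otherwise the address must match at least one allow_list entry.
    """
    ip = ip_address(client_ip)

    # strict=False accepts entries such as "10.0.0.5/24" by masking host bits.
    if any(ip in ip_network(entry, strict=False) for entry in block_list):
        return False
    if not allow_list:
        return True
    return any(ip in ip_network(entry, strict=False) for entry in allow_list)
```

For example, `is_allowed("203.0.113.7", ["203.0.113.0/24"], ["203.0.113.7"])` is rejected under these assumptions, because the single blocked address overrides the wider allowed range.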

- 902 Views

- 0 replies

- 1 kudos

Python step-through debugger for Databricks Notebooks and Files is now Generally Available

Python step-through debugger for Databricks Notebooks and Files is now Generally Available: https://www.databricks.com/blog/announcing-general-availability-step-through-debugging-databricks-notebooks-and-files

- 2260 Views

- 1 replies

- 4 kudos

Orchestrate Databricks jobs with Apache Airflow

You can orchestrate Databricks jobs with Apache Airflow. The Databricks provider implements the operators below:

- DatabricksCreateJobsOperator: Create a new Databricks job or reset an existing job
- DatabricksRunNowOperator: Runs an existing Spark job run...

- 4 kudos

Good one @Sourav-Kundu! Your clear explanations of the operators really simplify job management, plus the resource link you included makes it easy for everyone to dive deeper.

- 3182 Views

- 1 replies

- 2 kudos

Use Retrieval-augmented generation (RAG) to boost performance of LLM applications

Retrieval-augmented generation (RAG) is a method that boosts the performance of large language model (LLM) applications by utilizing tailored data. It achieves this by fetching pertinent data or documents related to a specific query or task and presen...
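The retrieve-then-prompt flow described above can be sketched in a few lines of plain Python. This is a toy illustration only: the word-overlap `score` function stands in for a real embedding or vector search, and the names `tokenize`, `score`, `retrieve`, and `build_prompt` are hypothetical helpers, not part of any Databricks or LLM library.

```python
import re
from collections import Counter


def tokenize(text):
    """Lowercase the text and split it into alphanumeric tokens."""
    return re.findall(r"[a-z0-9]+", text.lower())


def score(query, document):
    """Count tokens shared by query and document (toy similarity measure)."""
    shared = Counter(tokenize(query)) & Counter(tokenize(document))
    return sum(shared.values())


def retrieve(query, documents, k=2):
    """Return the k documents with the highest overlap score."""
    ranked = sorted(documents, key=lambda doc: score(query, doc), reverse=True)
    return ranked[:k]


def build_prompt(query, documents, k=2):
    """Place the retrieved documents in front of the question as context."""
    context = "\n".join(f"- {doc}" for doc in retrieve(query, documents, k))
    return (
        "Answer using only the context below.\n\n"
        f"Context:\n{context}\n\n"
        f"Question: {query}"
    )
```

The string returned by `build_prompt` is what would be sent to the LLM; in a real application the retrieval step would typically query a vector index rather than compare raw tokens.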

- 2 kudos

Thanks for sharing such valuable insight, @Sourav-Kundu. Your breakdown of how RAG enhances LLMs is spot on: clear and concise!

- 2958 Views

- 1 replies

- 2 kudos

You can use Low Shuffle Merge to optimize the merge process in Delta Lake

Low Shuffle Merge in Databricks is a feature that optimizes the way data is merged when using Delta Lake, reducing the amount of data shuffled between nodes.

- Traditional merges can involve heavy data shuffling, as data is redistributed across the cl...

- 2 kudos

Great post, @Sourav-Kundu. The benefits you've outlined, especially regarding faster execution and cost efficiency, are valuable for anyone working with large-scale data processing. Thanks for sharing!

- 1357 Views

- 0 replies

- 0 kudos

Utilize Unity Catalog alongside your Delta Live Tables pipelines

Delta Live Tables support for Unity Catalog is in Public Preview. Databricks recommends setting up Delta Live Tables pipelines using Unity Catalog. When configured with Unity Catalog, these pipelines publish all defined materialized views and streaming ...

- 1091 Views

- 0 replies

- 1 kudos

Databricks Asset Bundles package and deploy resources like notebooks and workflows as a single unit.

Databricks Asset Bundles help implement software engineering best practices like version control, testing and CI/CD for data and AI projects.

1. They allow you to define resources such as jobs and notebooks as source files, making project structure, t...

- 1439 Views

- 0 replies

- 0 kudos

Databricks serverless budget policies are now available in Public Preview

Databricks serverless budget policies are now available in Public Preview, enabling administrators to automatically apply the correct tags to serverless resources without relying on users to manually attach them.

1. This feature allows for customized ...

- 5783 Views

- 0 replies

- 1 kudos

How to recover Dropped Tables in Databricks

Have you ever accidentally dropped a table in Databricks, or had someone else mistakenly drop it? Databricks offers a useful feature that allows you to view dropped tables and recover them if needed.

1. You need to first execute SHOW TABLES DROPPED
2. T...

- 4056 Views

- 4 replies

- 2 kudos

Resolved! Want to learn LakeFlow Pipelines in community edition.

Hello Everyone. I want to explore LakeFlow Pipelines in the community version but don’t have access to Azure or AWS. I had a bad experience with Azure, where I was charged $85 while just trying to learn. Is there a less expensive, step-by-step learni...

- 2 kudos

Hi @nafikazi, Sorry, this is not possible in Community Edition. Your only option is to have an AWS or Azure account.

- 5886 Views

- 6 replies

- 7 kudos

🚀 Databricks Custom Apps! 🚀

Whether you're a data scientist or a sales executive, Databricks is making it easier than ever to build, host, and share secure data applications. With our platform, you can now run any Python code on serverless compute, share it with non-technical c...

- 7 kudos

Can we somehow play with hosting, and expose this app outside?
