Data Engineering

Join discussions on data engineering best practices, architectures, and optimization strategies within the Databricks Community. Exchange insights and solutions with fellow data engineers.

- Re: How to deploy a databricks managed workspace m...

11-10-2021 08:50 PM

I want to deploy a registered model from Databricks-managed MLflow to SageMaker from a Databricks notebook.

At the moment, I cannot run the "mlflow sagemaker build-and-push-container" command directly from the notebook. What configuration or steps are needed to do that? I assume that manually pushing the Docker image from outside Databricks should not be required, just as it is not required with open-source MLflow. There must be an alternative.

Also, when I try to test the model locally via the API, I get the error below.

Code:

import mlflow.sagemaker as mfs

mfs.run_local(model_uri=model_uri,port=8000,image="test")

Error:

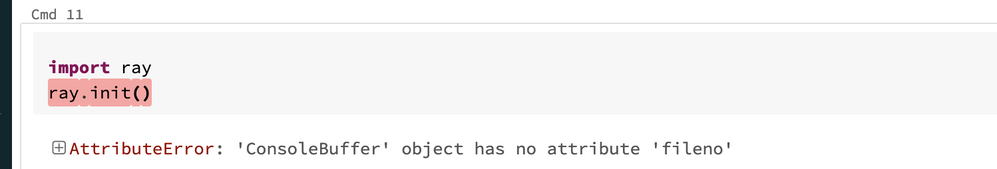

AttributeError: 'ConsoleBuffer' object has no attribute 'fileno'

Can someone shed some light on this topic?

Labels: Model Deployment

1 ACCEPTED SOLUTION

01-13-2022 10:59 PM

@Atanu Sarkar @Gobinath Viswanathan @Kaniz Fatma :

I have been trying to push a model registered in Databricks-managed MLflow to a SageMaker endpoint. I got it working, but only after some manual steps on my local system. Could you help me understand whether I am doing this correctly, or whether there is a bug in the Databricks ML runtime?

These are the steps I followed:

- Step 1: Log the model

- Ran the model code and registered the model in MLflow, then moved it to the Production stage.

- Step 2: Deploy the model

- Installed the AWS CLI (via pip) and configured the target AWS environment/account. This account has a role ARN set up with SageMaker full access and ECRContainerRegistry full access.

- Was able to connect to the target AWS account from the Databricks notebook.

- When I deployed the model as a SageMaker endpoint via “mlflow.sagemaker.deploy”, all intermediate objects were created, but the deployment failed because it could not find the container image in ECR. My initial assumption was that the function itself would build the container from the current model code.

So I downloaded the model files into a folder on a Databricks local path using the mlflow library.

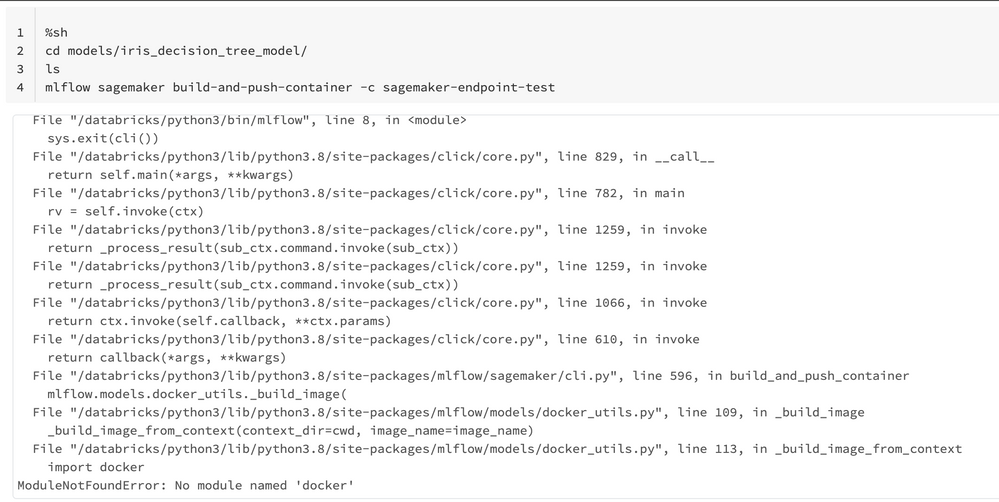
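
The screenshot above is not reproduced as text, so the exact call used is unknown. As a minimal sketch of one way to do the download, assuming a recent MLflow version in which `mlflow.artifacts.download_artifacts` accepts `models:/` registry URIs: the helper names, the model name, and the destination path below are all hypothetical.

```python
from pathlib import Path


def registered_model_uri(name: str, stage: str = "Production") -> str:
    """Build a Model Registry URI of the form models:/<name>/<stage>."""
    return f"models:/{name}/{stage}"


def download_registered_model(name: str, stage: str, dst_dir: str) -> str:
    """Download the registered model's files into dst_dir; return the local path."""
    # Imported lazily so the URI helper above works without MLflow installed.
    # Assumption: this MLflow version resolves models:/ URIs in download_artifacts.
    from mlflow.artifacts import download_artifacts

    Path(dst_dir).mkdir(parents=True, exist_ok=True)
    return download_artifacts(
        artifact_uri=registered_model_uri(name, stage),
        dst_path=dst_dir,
    )
```

In a notebook this might be called as `download_registered_model("my_model", "Production", "/tmp/my_model")`, where both arguments are placeholders for your own model name and path.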

- To create the container, I ran the “mlflow sagemaker build-and-push-container” command from the Databricks local path where the model files are present. It failed with the error “no module named docker” from the “mlflow.models.docker_utils” module.

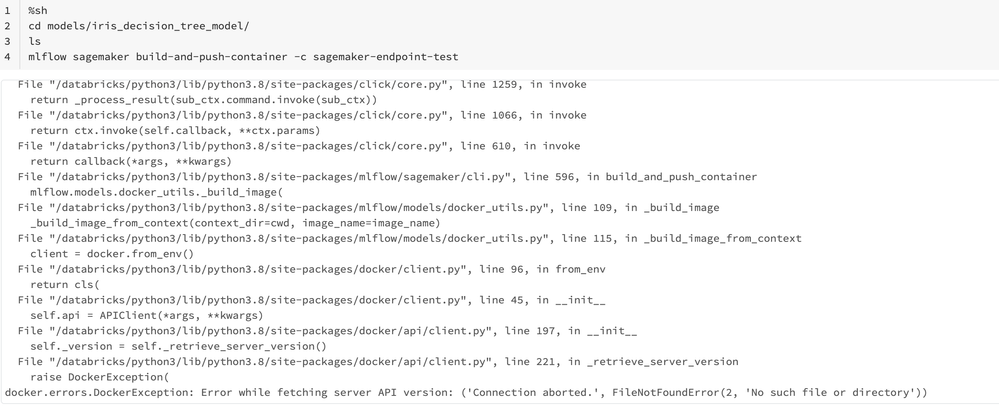

- To resolve this I ran “pip install docker”, but then I got the error: "docker.errors.DockerException: Error while fetching server API version: ('Connection aborted.', FileNotFoundError(2, 'No such file or directory'))"

As far as I can tell, this error occurs when the Docker daemon is not running. I also could not find any Docker service executable under “/etc/init.d/”, where service scripts are normally located.
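
One way to confirm that diagnosis from a notebook cell is to look for the daemon's Unix socket. This is only a sketch: it assumes the default socket path `/var/run/docker.sock` (a `DOCKER_HOST` setting can point elsewhere), and `docker_socket_present` is a hypothetical helper, not part of MLflow or the Docker SDK.

```python
import os
import stat

# Default location of the Docker daemon's Unix socket on Linux.
DEFAULT_DOCKER_SOCKET = "/var/run/docker.sock"


def docker_socket_present(path: str = DEFAULT_DOCKER_SOCKET) -> bool:
    """Return True if a Unix socket exists at the given path."""
    try:
        return stat.S_ISSOCK(os.stat(path).st_mode)
    except OSError:
        # Path is missing or not accessible, so no usable socket here.
        return False
```

If this returns `False`, the Python `docker` package has nothing to connect to, which would be consistent with the "Connection aborted ... No such file or directory" error above. Note that `pip install docker` installs only the Python client library, not the Docker engine itself.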

The only approach that worked was to download all the model files to my local system, start Docker Desktop so that the Docker daemon was running, and then run “mlflow sagemaker build-and-push-container” from inside the model folder. This created an image in ECR, which “mlflow.sagemaker.deploy” then referenced correctly.

My question: is this the right process? Do we need to build the image locally to make it work?

My assumption was that “mlflow.sagemaker.deploy” would take care of everything, or at least that “mlflow sagemaker build-and-push-container” could be run from the Databricks notebook itself.
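
The flow that worked above (push the image from a machine with a running Docker daemon, then deploy against it) could be sketched as follows. This assumes the MLflow 1.x `mlflow.sagemaker.deploy` function used in this thread and its `image_url` parameter; later MLflow versions may expose deployment differently. `ecr_image_url` and `deploy_with_prebuilt_image` are illustrative helpers, and every account ID, region, repository, and tag shown is a placeholder.

```python
def ecr_image_url(account_id: str, region: str, repository: str, tag: str) -> str:
    """Build an ECR image URI: <account>.dkr.ecr.<region>.amazonaws.com/<repo>:<tag>."""
    return f"{account_id}.dkr.ecr.{region}.amazonaws.com/{repository}:{tag}"


def deploy_with_prebuilt_image(app_name: str, model_uri: str, role_arn: str,
                               image_url: str, region: str) -> None:
    """Deploy a model to SageMaker using an image that already exists in ECR."""
    # Imported lazily; assumes an MLflow 1.x install that provides mlflow.sagemaker.deploy.
    import mlflow.sagemaker as mfs

    mfs.deploy(
        app_name=app_name,
        model_uri=model_uri,
        execution_role_arn=role_arn,
        image_url=image_url,   # image pushed earlier by build-and-push-container
        region_name=region,
        mode="create",
    )
```

Passing `image_url` explicitly makes the dependency visible: the deploy call only references an existing image, so the image has to be built and pushed somewhere Docker can run.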

9 REPLIES

11-16-2021 09:12 PM

@Kaniz Fatma : Thanks for replying.

I am using Databricks Runtime 9 ML.

The open-source MLflow implementation works fine, but I get an error when running the mlflow sagemaker command from a Databricks notebook.

11-23-2021 09:15 PM

11-23-2021 09:17 PM

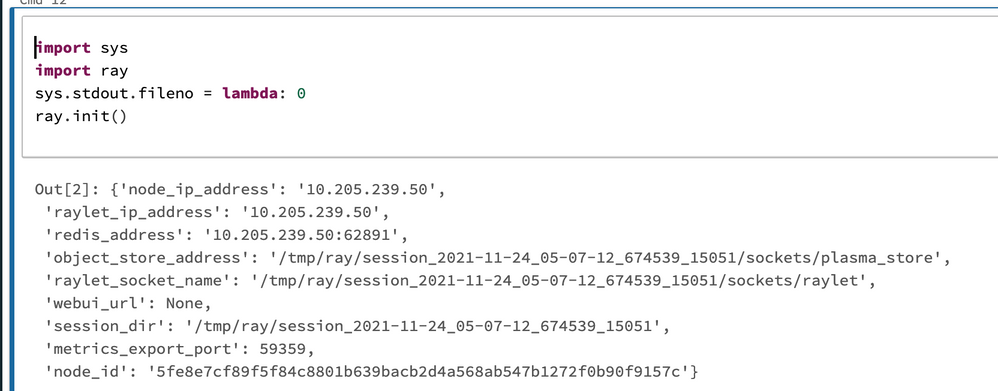

@Saurabh Verma Please try!

import sys

import mlflow.sagemaker as mfs

# Workaround: the notebook's stdout (ConsoleBuffer) has no fileno(), so supply one
sys.stdout.fileno = lambda: 0

mfs.run_local(model_uri=model_uri, port=8000, image="test")

11-24-2021 10:55 PM

@Gobinath Viswanathan : I am still getting the error below.

I also tried installing docker explicitly, but the error persists.

Note: I am running this inside a Databricks notebook on managed AWS Databricks.

Error:

Using the python_function flavor for local serving!

2021/11/24 13:01:07 INFO mlflow.sagemaker: launching docker image with path /tmp/tmpq622qyl6/model

2021/11/24 13:01:07 INFO mlflow.sagemaker: executing: docker run -v /tmp/tmpq622qyl6/model:/opt/ml/model/ -p 5432:8080 -e MLFLOW_DEPLOYMENT_FLAVOR_NAME=python_function --rm test serve

FileNotFoundError: [Errno 2] No such file or directory: 'docker'
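
The log above shows `run_local` shelling out to `docker run`, so this `FileNotFoundError` suggests that no `docker` executable is on the cluster's `PATH`. A minimal check, using only the standard library (`find_docker_cli` is a hypothetical helper name):

```python
import shutil
from typing import Optional


def find_docker_cli() -> Optional[str]:
    """Return the full path of the docker executable on PATH, or None if absent."""
    return shutil.which("docker")


path = find_docker_cli()
if path is None:
    print("docker CLI not found on PATH")
else:
    print(f"docker CLI found at {path}")
```

Installing the Python `docker` package does not add this executable, which would explain why the error persisted after the explicit install.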

11-27-2021 07:06 AM

https://docs.docker.com/engine/reference/builder/

https://forums.docker.com/t/no-such-file-or-directory-after-building-the-image/66143

These two references might be helpful from the Docker side. Let us know if they help. @Saurabh Verma

11-28-2021 08:54 PM

@Atanu Sarkar @Gobinath Viswanathan @Kaniz Fatma : Thanks for reaching out. Unfortunately, the links you mentioned only apply when I work with open-source MLflow, where I have control over the folder structure and can create a separate Dockerfile.

The same is not possible in the managed Databricks environment. The model artifacts are stored on a path that can only be accessed through the MLflow API.

If you have tried another approach that worked for you, please share the complete steps. I want to push models registered in the Databricks-managed MLflow registry to SageMaker endpoints, and I also want to test this setup with the SageMaker local command.

01-03-2022 04:53 AM

01-11-2022 05:37 AM

@Kaniz Fatma @Gobinath Viswanathan @Atanu Sarkar :

Hi All,

The direct methods above are not working. So I downloaded the model files using mlflow and tried to run "mlflow sagemaker build-and-push-container" to push the model image to ECR.

This step does not run either. I get a "no module named docker" error from the "mlflow.models.docker_utils" module.

I am currently running the Databricks 10.2 ML runtime.

After installing docker via "pip install docker", I now get this error:

"docker.errors.DockerException: Error while fetching server API version: ('Connection aborted.', FileNotFoundError(2, 'No such file or directory'))"
