Data Engineering

Join discussions on data engineering best practices, architectures, and optimization strategies within the Databricks Community. Exchange insights and solutions with fellow data engineers.

How to enable/verify cloud fetch from PowerBI

01-30-2022 08:01 AM

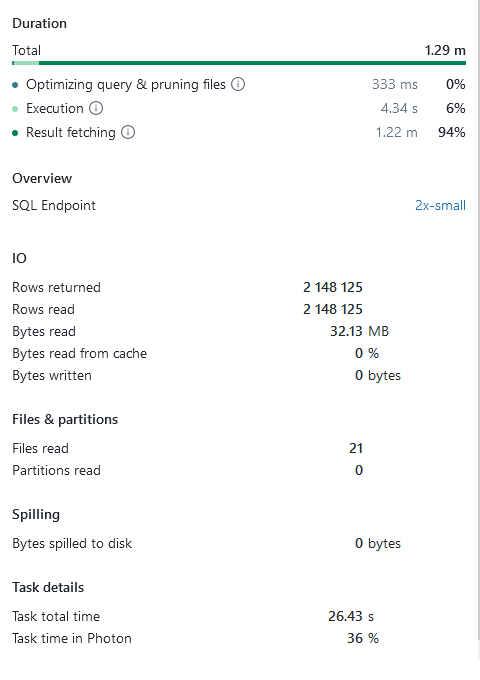

I tried to benchmark the Power BI Databricks connector against the Power BI Delta Lake reader on a dataset of 2.15 million rows. The Delta Lake reader took 20 seconds, while importing through the SQL compute endpoint took ~75 seconds.

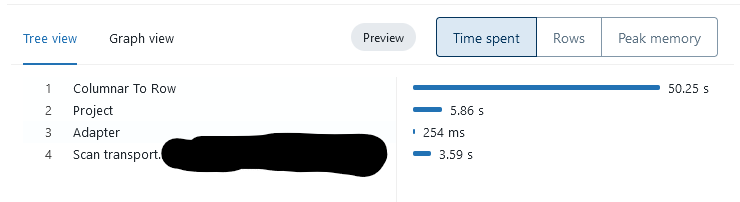

When I look at the query profile in SQL compute, I see that 50 seconds are spent in the "Columnar To Row" step. This makes me rather suspicious, since I got the impression that with an updated Power BI we would take advantage of "cloud fetch", which creates files containing Apache Arrow batches, and Arrow is a columnar format. So why the conversion to rows? Maybe it is not actually using cloud fetch? Is there any way to verify that I am actually using cloud fetch, either in the Power BI logs or in the Databricks SQL compute endpoint web interface?

Labels: Cloud, Delta Lake, Powerbi, Powerbi Databricks, SQL

19 REPLIES

06-23-2022 12:53 AM

You would need to set the EnableQueryResultsDownload flag to 0 (zero), which disables cloud fetch.
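For a client that talks to the ODBC driver directly, the flag can be passed as a connection-string key. The snippet below is only a sketch under assumptions: the driver name, host, HTTP path, and token are placeholders, the helper `build_connection_string` is hypothetical, and how Power BI itself exposes this setting is not covered in this thread. Running the same query with the flag on and off and comparing timings is one rough way to see whether cloud fetch was making a difference.

```python
def build_connection_string(host, http_path, token, cloud_fetch=True):
    """Assemble a Simba Spark ODBC connection string (hypothetical helper).

    All values are placeholders; adjust the driver name to match the
    driver actually installed on your machine.
    """
    params = {
        "Driver": "Simba Spark ODBC Driver",  # assumed installed driver name
        "Host": host,
        "Port": "443",
        "HTTPPath": http_path,
        "SSL": "1",
        "ThriftTransport": "2",  # HTTP transport
        "AuthMech": "3",         # user name / password; token goes in PWD
        "UID": "token",
        "PWD": token,
        # 1 leaves cloud fetch enabled, 0 disables it (per the reply above)
        "EnableQueryResultsDownload": "1" if cloud_fetch else "0",
    }
    return ";".join(f"{key}={value}" for key, value in params.items())


# Placeholder values, not a real workspace
conn_str = build_connection_string(
    host="example.azuredatabricks.net",
    http_path="/sql/1.0/endpoints/placeholder",
    token="<personal-access-token>",
    cloud_fetch=False,
)
```

The resulting string could then be handed to an ODBC client such as `pyodbc.connect(conn_str, autocommit=True)`, assuming `pyodbc` and the driver are installed.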

06-23-2022 12:54 AM

So why is ColumnarToRow required?

10-26-2022 04:47 AM

Hi everyone, check out my latest blog post on how to verify whether or not cloud fetch is actually used. Maybe you'll also find some other optimizations there:

02-27-2023 07:24 AM

Guys, is there any way to switch off CloudFetch and fall back to ArrowResultSet by default, irrespective of result size, when using the latest version of the Simba Spark ODBC driver?

01-23-2025 07:40 AM

I'm troubleshooting slow speeds (~6Mbps) from Azure Databricks to the PowerBI Service (Fabric) via dataflows.

- Drivers are up to date. ✅ PowerBI is using Microsoft's Spark ODBC driver Version 2.7.6.1014, confirmed via log4j.

- HybridCloudStoreResultHandler is being used. ✅ Confirmed via log4j.

- MapPartitionInternals is NOT using CloudStoreCollector. ⚠️ The Spark DAG ends with mapPartitionsInternal at HybridResultCollector.scala.

Does this mean that CloudFetch is not enabled here?

In the log4j logs I also see CloudStoreBasedResultHandler receiving and responding to getNextCloudStoreBasedSet, which I interpret as CloudFetch being enabled?
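A check like the one described above can be scripted against an exported log file. This is a minimal sketch that only counts how many lines mention each of the class and method names quoted in this post; the log line layout is an assumption, the helper `count_markers` is hypothetical, and a non-zero count is a hint rather than proof that results were actually delivered through CloudFetch.

```python
import re
from collections import Counter

# Names taken from the post above; nothing else is assumed about the log format.
MARKERS = (
    "HybridCloudStoreResultHandler",
    "CloudStoreBasedResultHandler",
    "getNextCloudStoreBasedSet",
    "CloudStoreCollector",
    "HybridResultCollector",
)


def count_markers(log_text):
    """Return, for each marker, the number of log lines that mention it."""
    counts = Counter()
    for line in log_text.splitlines():
        for marker in MARKERS:
            # Word boundaries avoid matching one name inside a longer identifier
            if re.search(rf"\b{re.escape(marker)}\b", line):
                counts[marker] += 1
    return {marker: counts[marker] for marker in MARKERS}


# Made-up lines for illustration only, not real driver log output
sample = "\n".join([
    "INFO HybridCloudStoreResultHandler: handling result",
    "INFO CloudStoreBasedResultHandler: getNextCloudStoreBasedSet called",
    "mapPartitionsInternal at HybridResultCollector.scala",
])
for name, hits in count_markers(sample).items():
    print(f"{name}: {hits}")
```

To use it on a real export, read the file with `open(path, encoding="utf-8").read()` and pass the text to `count_markers`.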
