Turn on suggestions

Auto-suggest helps you quickly narrow down your search results by suggesting possible matches as you type.

Showing results for

Data Engineering

Join discussions on data engineering best practices, architectures, and optimization strategies within the Databricks Community. Exchange insights and solutions with fellow data engineers.

Turn on suggestions

Auto-suggest helps you quickly narrow down your search results by suggesting possible matches as you type.

Showing results for

- Databricks Community

- Data Engineering

- Support for Parquet brotli compression or a work a...

Options

- Subscribe to RSS Feed

- Mark Topic as New

- Mark Topic as Read

- Float this Topic for Current User

- Bookmark

- Subscribe

- Mute

- Printer Friendly Page

Options

- Mark as New

- Bookmark

- Subscribe

- Mute

- Subscribe to RSS Feed

- Permalink

- Report Inappropriate Content

01-31-2023 05:31 PM

Spark 3.3.1 supports the brotli compression codec, but when I use it to read parquet files from S3, I get:

INVALID_ARGUMENT: Unsupported codec for Parquet page: BROTLIExample code:

df = (spark.read.format("parquet")

.option("compression", "brotli")

.load("s3://<bucket>/<path>/<file>.parquet")

df.write.saveAsTable("tmp_test")I have a large amount of data stored with this compression, so switching right now would be difficult. It looks like Koalas supports it or I could manually ingest it by spinning up my own Spark session, but that would defeat the point of having Databricks / Delta Lake / Autoloader. Any suggestions on a work around?

edit:

More output:

Caused by: java.lang.RuntimeException: INVALID_ARGUMENT: Unsupported codec for Parquet page: BROTLI

at com.databricks.sql.io.caching.NativePageWriter$.create(Native Method)

at com.databricks.sql.io.caching.DiskCache$PageWriter.<init>(DiskCache.scala:318)

at com.databricks.sql.io.parquet.CachingPageReadStore$UnifiedCacheColumn.populate(CachingPageReadStore.java:1183)

at com.databricks.sql.io.parquet.CachingPageReadStore$UnifiedCacheColumn.lambda$getPageReader$0(CachingPageReadStore.java:1177)

at com.databricks.sql.io.caching.NativeDiskCache$.get(Native Method)

at com.databricks.sql.io.caching.DiskCache.get(DiskCache.scala:515)

at com.databricks.sql.io.parquet.CachingPageReadStore$UnifiedCacheColumn.getPageReader(CachingPageReadStore.java:1178)

at com.databricks.sql.io.parquet.CachingPageReadStore.getPageReader(CachingPageReadStore.java:1012)

at com.databricks.sql.io.parquet.DatabricksVectorizedParquetRecordReader.checkEndOfRowGroup(DatabricksVectorizedParquetRecordReader.java:741)

at com.databricks.sql.io.parquet.DatabricksVectorizedParquetRecordReader.nextBatch(DatabricksVectorizedParquetRecordReader.java:603)

Labels:

- Labels:

-

Compression

-

DeltaLake

1 ACCEPTED SOLUTION

Accepted Solutions

Options

- Mark as New

- Bookmark

- Subscribe

- Mute

- Subscribe to RSS Feed

- Permalink

- Report Inappropriate Content

02-01-2023 01:48 PM

Given the new information I appended, I looked into the Delta caching and I can disable it:

.option("spark.databricks.io.cache.enabled", False)This works as a work around while I read these files in to save them locally in DBFS, but does it have performance repercussions? I'm only doing this to ingest files from S3 uploaded from an external process. I'm worried there might be a larger number of reads from S3 increasing ingestion costs.

4 REPLIES 4

Options

- Mark as New

- Bookmark

- Subscribe

- Mute

- Subscribe to RSS Feed

- Permalink

- Report Inappropriate Content

01-31-2023 09:33 PM

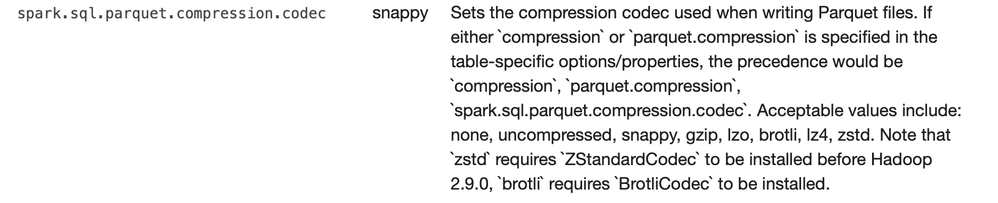

Hi, Could you please check if this helps: https://spark.apache.org/docs/2.4.3/sql-data-sources-parquet.html

Also, you can refer to https://community.databricks.com/s/question/0D53f00001HKHSsCAP/how-can-i-change-the-parquet-compress...

Options

- Mark as New

- Bookmark

- Subscribe

- Mute

- Subscribe to RSS Feed

- Permalink

- Report Inappropriate Content

02-01-2023 10:03 AM

Right. In the description there, it says the precedence is in order of 'compression' followed b y 'parquet.compression' followed by this option. As you can see in the code above, I am using 'compression', but I did test with this option as well. Same error.

I believe this to be an issue specific to Databrick's layer over Spark / Delta Tables, most likely that they have a codec validation and didn't add brotli, as its addition to Spark is 'more recent'.

Options

- Mark as New

- Bookmark

- Subscribe

- Mute

- Subscribe to RSS Feed

- Permalink

- Report Inappropriate Content

11-18-2024 04:38 PM

Hi , how/where do I install 'BrotliCodec' in order to use brotli compression?

Options

- Mark as New

- Bookmark

- Subscribe

- Mute

- Subscribe to RSS Feed

- Permalink

- Report Inappropriate Content

02-01-2023 01:48 PM

Given the new information I appended, I looked into the Delta caching and I can disable it:

.option("spark.databricks.io.cache.enabled", False)This works as a work around while I read these files in to save them locally in DBFS, but does it have performance repercussions? I'm only doing this to ingest files from S3 uploaded from an external process. I'm worried there might be a larger number of reads from S3 increasing ingestion costs.

Announcements

Related Content

- Is there a Sample Java Program using Databricks Connect Library to query a table In the Free Editio? in Warehousing & Analytics

- Issues while running SYNC SCHEMA (HIVE-6384) in Data Governance

- Scaling Declarative Streaming Pipelines for CDC from On-Prem Database to Lakehouse in Data Engineering

- Delta Sharing - Open, Secure, and Barrier-Free Data & AI Collaboration in Data Governance

- Can't enable "variantType-preview" using DLTs in Data Engineering