- 2775 Views

- 3 replies

- 2 kudos

Problems registering models via /api/2.0/mlflow/model-versions/create

Initially, I tried registering while logging a model from MLflow and got: "Got an invalid source 'dbfs:/Volumes/compute_integration_tests/default/compute-external-table-tests-models/test_artifacts/1078d04b4d4b4537bdf7a4b5e94e9e7f/artifacts/model'. Onl...


I know, right! Look at the error message I posted: "Got an invalid source 'dbfs:/Volumes/compute_integration_tests/default/compute-external-table-tests-models/test_artifacts/1078d04b4d4b4537bdf7a4b5e94e9e7f/artifacts/model'. Only DBFS locations are cu...


- 4058 Views

- 2 replies

- 2 kudos

Azure Databricks to GCP Databricks Migration

Hi Team, Can you provide your thoughts on moving Databricks from Azure to GCP? What services are required for the migration, and are there any limitations on GCP compared to Azure? Also, are there any tools that can assist with the migration? Please ...


Hello Team, Adding to @sunnydata's comments: Moving Databricks from Azure to GCP involves several steps and considerations. Here are the key points based on the provided context: Services Required for Migration: Cloud Storage Data: Use GCP’s Storage T...


- 2131 Views

- 1 replies

- 0 kudos

Structuring RAG Projects in Python Using

Understanding Retrieval-Augmented Generation (RAG): Retrieval-Augmented Generation (RAG) is a cutting-edge AI paradigm that enhances traditional generative models by integrating real-time data retrieval. By combining retrieval and generation, RAG ensur...


Here's a demo using RAG LLM: https://www.databricks.com/resources/demos/tutorials/data-science-and-ai/lakehouse-ai-deploy-your-llm-chatbot


- 2515 Views

- 1 replies

- 0 kudos

Databricks Support

Hi everyone, I'm new to Databricks and to this platform. My organization just got started with Databricks and we're looking to purchase enterprise support to help with things like training, setup, maintenance and development of our warehousing...


Hi @victor_okrobodo, Please see: https://www.databricks.com/professional-services Let me know if you have any other questions.


- 3695 Views

- 4 replies

- 5 kudos

Data Modelling

What is the 'implicit' or 'by default' data model of Databricks or Unity Catalog? Is it Data Vault?


Thank you so much for the information. You made it easy for me.


- 1840 Views

- 3 replies

- 0 kudos

Suggest ways to get unity catalog data to Aws s3 or sagemaker

Please suggest the best ways to get Databricks Unity Catalog data to AWS S3 or SageMaker. Data could be around 1 GB in some tables and 20 GB in others. Currently, SageMaker pipelines use data from S3 as batches in different Parquet files. But now we would li...


You could try using Delta Sharing with your provider, as described in the docs: https://docs.databricks.com/en/delta-sharing/set-up.html


- 1813 Views

- 2 replies

- 0 kudos

Unable to capture the Query result via JDBC client execution

As shown in the screenshots below, the MERGE INTO command produces information about the result (num_affected_rows, num_updated_rows, num_deleted_rows, num_inserted_rows). I am unable to get this information when the same query is executed via a JDBC client. Is...


The Delta table history API can help you get these details. Reference: https://docs.databricks.com/en/delta/history.html#history-schema
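As a rough illustration of the history-based approach, the sketch below picks the newest MERGE entry out of table history rows and converts its counters to integers. It assumes the rows come from `DESCRIBE HISTORY` (for example via `spark.sql(...).collect()` or a JDBC result set mapped to dictionaries) and that the `operationMetrics` map uses the key names listed in the history schema docs; the helper name `latest_merge_metrics` is hypothetical, and the key names should be checked against your runtime.

```python
from typing import Any, Dict, Iterable, Mapping, Optional

# Assumed MERGE counters in the operationMetrics map of DESCRIBE HISTORY.
# Verify these key names against the history schema for your runtime.
MERGE_METRIC_KEYS = (
    "numTargetRowsInserted",
    "numTargetRowsUpdated",
    "numTargetRowsDeleted",
)


def latest_merge_metrics(
    history_rows: Iterable[Mapping[str, Any]],
) -> Optional[Dict[str, int]]:
    """Return the counters of the newest MERGE commit, or None if there is none."""
    merges = [row for row in history_rows if row["operation"] == "MERGE"]
    if not merges:
        return None
    newest = max(merges, key=lambda row: row["version"])
    # operationMetrics is a string-to-string map, so values need converting.
    metrics = newest["operationMetrics"] or {}
    return {key: int(metrics.get(key, 0)) for key in MERGE_METRIC_KEYS}
```

In a notebook this could be fed with `spark.sql("DESCRIBE HISTORY catalog_name.schema_name.table_name").collect()`. Note that if other writers commit to the same table concurrently, the newest MERGE entry is not guaranteed to be the one your client just ran.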


- 2344 Views

- 1 replies

- 0 kudos

DIAS 2023 -- recommend the training!

Did the SparkUI training yesterday with Mark Ott, and I highly recommend it. It was super helpful and provided a lot of clarity around some of the vaguer terms and metrics, and some surprise penalties. In-memory partition size is the main thing to...


Hi, I can't find this course... can you please share the full name of this course? Thanks in advance


- 2445 Views

- 3 replies

- 4 kudos

Resolved! How to read Databricks UniForm format tables present in ADLS

We have Databricks UniForm (Iceberg) tables in Azure Data Lake Storage (ADLS), which is already integrated with Databricks Unity Catalog. How can we read UniForm tables using Databricks as the query engine?


Query using Unity Catalog. SQL: `SELECT * FROM catalog_name.schema_name.table_name;` PySpark: `df = spark.sql("SELECT * FROM catalog_name.schema_name.table_name")` followed by `df.display()`. Direct access by path: If not using Unity Catal...


- 10883 Views

- 2 replies

- 0 kudos

Cluster Memory Issue (Termination)

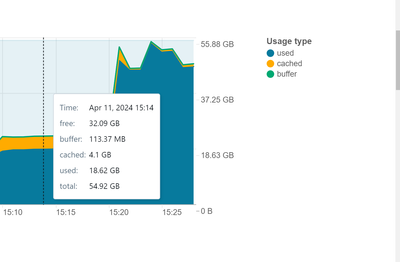

Hi, I have a single-node personal cluster with 56 GB memory (Node type: Standard_DS5_v2, runtime: 14.3 LTS ML). The same configuration is used for the job cluster as well, and the following problem applies to both clusters. To start with: once I start my ...


- 2701 Views

- 2 replies

- 1 kudos

Creating table in Unity Catalog with file scheme dbfs is not supported

Code: `# Define the path for the staging Delta table` `staging_table_path = "dbfs:/user/hive/warehouse/staging_order_tracking"` `spark.sql(f"CREATE TABLE IF NOT EXISTS staging_order_tracking USING DELTA LOCATION '{staging_table_path}'")` Creating table in U...


I believe we can connect to our storage account containers only by using a mount point. If Databricks considers this an anti-pattern, what is the recommended way? Can you please explain what an external location in UC is? Is it our local system folders or something n...


- 3387 Views

- 2 replies

- 3 kudos

Resolved! Cannot find "Databricks Apps"

Hi, I saw a demo about "Databricks Apps" 2 months ago. I haven't used Databricks for about 3 months, and I recently recreated a Premium Workspace to try something out (I use Azure), however I can't find "Apps" when I click "New". How can I enable and...


- 2003 Views

- 4 replies

- 0 kudos

Issue Querying Registered Tables on Glue Catalog via Databricks

I'm having an issue querying tables registered in the Glue catalog through Databricks, with the following error: AnalysisException: [TABLE_OR_VIEW_NOT_FOUND] The table or view looker.ccc_data cannot be found. Verify the spelling and correctness of the schema a...


Can you specify the full `catalog.schema.table` name? Also check the current schema with `SELECT current_schema();`
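To make the three-part naming concrete, here is a minimal sketch that expands a one- or two-part table name into a backtick-quoted `catalog.schema.table` identifier. The helper names are hypothetical, and the `hive_metastore` and `default` values in the usage note are assumptions about where the Glue-registered tables appear in the workspace, not something stated in the thread.

```python
def quote_ident(part: str) -> str:
    """Backtick-quote one identifier part, doubling any embedded backticks."""
    return "`" + part.replace("`", "``") + "`"


def fully_qualified(name: str, default_catalog: str, default_schema: str) -> str:
    """Expand 'table' or 'schema.table' into a quoted catalog.schema.table name."""
    parts = name.split(".")
    if len(parts) == 1:
        parts = [default_catalog, default_schema] + parts
    elif len(parts) == 2:
        parts = [default_catalog] + parts
    elif len(parts) != 3:
        raise ValueError(f"expected at most three name parts, got {name!r}")
    return ".".join(quote_ident(part) for part in parts)
```

For the table in the error message, `fully_qualified("looker.ccc_data", "hive_metastore", "default")` yields a name that can be dropped into `SELECT * FROM ...`, so the lookup no longer depends on the session's current catalog and schema.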


- 1266 Views

- 2 replies

- 1 kudos

Delete non-community Databricks account

Hi everyone! I have mistakenly created a non-community account using my personal email address. I would like to delete it in order to create a new account using my business email. How should I proceed? I tried to find this option on the console, with no...


Hello @gabrielsantana! Could you please try raising a ticket with the Databricks support team?


- 6579 Views

- 1 replies

- 1 kudos

How to overwrite the existing file using databricks cli

If I use `databricks fs cp`, it does not overwrite the existing file; it just skips copying the file. Any suggestions on how to overwrite the file using the Databricks CLI?


You can use the `--overwrite` option to overwrite your file: https://docs.databricks.com/en/dev-tools/cli/fs-commands.html
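If the copy is driven from a script, the flag can be passed along like this. This is only a sketch: it assumes the `databricks` CLI is installed and authenticated on the machine running it, the helper names are hypothetical, and the paths in the usage note are placeholders.

```python
import subprocess
from typing import List


def build_fs_cp_command(source: str, target: str, overwrite: bool = True) -> List[str]:
    """Build the argument list for `databricks fs cp`, optionally with --overwrite."""
    command = ["databricks", "fs", "cp", source, target]
    if overwrite:
        command.append("--overwrite")
    return command


def copy_with_overwrite(source: str, target: str) -> None:
    """Run the copy and raise CalledProcessError if the CLI exits with an error."""
    subprocess.run(build_fs_cp_command(source, target), check=True)
```

For example, `copy_with_overwrite("report.csv", "dbfs:/tmp/report.csv")` would replace an existing file at the target instead of skipping it.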


