- 5441 Views

- 3 replies

- 0 kudos

Issue with importing data into Power BI from a view containing 100 million records in ADB

Hello, everyone. I'm trying to import data into Power BI from a view in Azure Databricks that contains over 10 million records. Every time it's failing with this following error"Failed to save modifications to the server. Error returned: 'OLE DB or O...


- 0 kudos

Users can either use OAuth or switch to a personal access token (PAT). Unfortunately, this is the only workaround I am aware of at present.


- 3020 Views

- 0 replies

- 0 kudos

Databricks Summit 2024 Unity Catalog migration!!


- 963 Views

- 0 replies

- 0 kudos


Hi all, I recently enabled SSO on my Databricks account. Now, when a user signs in, they see "No workspaces have been enabled for your account", which is the expected behavior, as I haven't created any workspaces yet.


- 1055 Views

- 0 replies

- 0 kudos

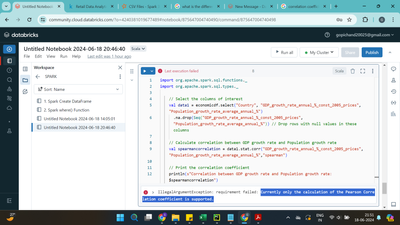

Does Databricks support Spearman rank correlation?

I am working in Spark Scala. When I tried code using Pearson correlation, it worked, but when I tried Spearman rank correlation, it displayed an error like "Currently only the calculation of the Pearson Correlation coefficient is support...
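Spearman's rank correlation is the Pearson correlation computed on the ranks of the values, with tied values sharing the average of their ranks. The error text above looks like the one raised by `DataFrame.stat.corr`, which only implements Pearson; as far as I know, Spark MLlib's `Correlation.corr` (`org.apache.spark.ml.stat` in Scala, `pyspark.ml.stat` in Python) accepts `"spearman"` as its method argument and may be worth trying. The sketch below is plain Python, not Spark: it only illustrates the definition on small in-memory lists, and the function names are illustrative.

```python
from typing import List, Sequence


def average_ranks(values: Sequence[float]) -> List[float]:
    """Return 1-based ranks; tied values share the average of their positions."""
    order = sorted(range(len(values)), key=lambda i: values[i])
    ranks = [0.0] * len(values)
    start = 0
    while start < len(order):
        end = start
        # Extend the run while the next sorted value ties with the current one.
        while end + 1 < len(order) and values[order[end + 1]] == values[order[start]]:
            end += 1
        avg_rank = (start + end) / 2 + 1  # mean of 1-based positions start+1..end+1
        for k in range(start, end + 1):
            ranks[order[k]] = avg_rank
        start = end + 1
    return ranks


def pearson(x: Sequence[float], y: Sequence[float]) -> float:
    """Plain Pearson correlation coefficient of two equal-length sequences."""
    if len(x) != len(y) or len(x) < 2:
        raise ValueError("need two sequences of equal length >= 2")
    n = len(x)
    mean_x, mean_y = sum(x) / n, sum(y) / n
    cov = sum((a - mean_x) * (b - mean_y) for a, b in zip(x, y))
    var_x = sum((a - mean_x) ** 2 for a in x)
    var_y = sum((b - mean_y) ** 2 for b in y)
    if var_x == 0 or var_y == 0:
        raise ValueError("correlation is undefined for a constant sequence")
    return cov / (var_x * var_y) ** 0.5


def spearman(x: Sequence[float], y: Sequence[float]) -> float:
    """Spearman rank correlation = Pearson correlation of the (average) ranks."""
    return pearson(average_ranks(x), average_ranks(y))


print(spearman([1, 2, 3, 4, 5], [5, 6, 7, 8, 7]))
```

Because only the ranks matter, any strictly increasing relationship (not just a linear one) gives a Spearman coefficient of 1.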


- 1635 Views

- 0 replies

- 0 kudos

Concurrent State Update from Worker Nodes Possible?

For a data processing pipeline, I use Structured Streaming with arbitrary stateful processing. I was wondering whether partitioning across several worker nodes, and therefore updating the state from different worker nodes, has to be taken into account (e.g., using a lock...


- 1754 Views

- 1 replies

- 0 kudos

What is the vector database to generate in Databricks?


- 0 kudos

It is used for RAG models (generative AI) and contains the embeddings used by those models: https://docs.databricks.com/en/generative-ai/vector-search.html
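To make "contains embeddings" concrete: a vector index stores one embedding per document and answers a query by returning the documents whose embeddings are most similar to the query embedding. The sketch below is a brute-force, in-memory illustration using cosine similarity over made-up 2-D vectors; it is not the Databricks Vector Search API, and production vector indexes typically rely on approximate nearest-neighbour structures rather than scanning every stored vector.

```python
import math
from typing import Dict, List, Sequence, Tuple


def cosine_similarity(a: Sequence[float], b: Sequence[float]) -> float:
    """Cosine of the angle between two equal-length vectors."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    if norm_a == 0 or norm_b == 0:
        raise ValueError("cosine similarity is undefined for a zero vector")
    return dot / (norm_a * norm_b)


def top_k(query: Sequence[float], index: Dict[str, List[float]], k: int = 2) -> List[Tuple[str, float]]:
    """Brute-force retrieval: score every stored embedding and keep the k best."""
    scored = [(doc_id, cosine_similarity(query, vec)) for doc_id, vec in index.items()]
    scored.sort(key=lambda item: item[1], reverse=True)
    return scored[:k]


# Hypothetical toy "embeddings"; a real embedding model produces far more dimensions.
toy_index = {
    "doc_pricing": [0.9, 0.1],
    "doc_security": [0.1, 0.9],
    "doc_billing": [0.8, 0.3],
}
for doc_id, score in top_k([1.0, 0.0], toy_index):
    print(doc_id, round(score, 3))
```

In a RAG setup, the text of the top-k documents would then be passed to the model as context for answering the query.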


- 5175 Views

- 3 replies

- 1 kudos

Confused by Databricks Tips and Tricks - Optimizations regarding partitioning

Hello Community, today I attended the Tips and Tricks - Optimizations webinar and became confused. They said: "Don't partition tables <1TB in size and plan carefully when partitioning • Partitions should be >=1GB". Now my confusion is whether this recommen...


- 1 kudos

That refers to partitions on disk. Defining the correct number of partitions is not that easy. One would think that more partitions are better because you can process more data in parallel, and that is true if you only have to do local transformations (no shu...
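The webinar rules of thumb quoted in the question (don't partition tables under 1 TB; partitions should be at least 1 GB) apply to on-disk partitions and reduce to simple arithmetic: divide the table size by the number of distinct values of the candidate partition column. A minimal sketch, assuming binary units (1 TB = 1024 GB) and rows spread roughly evenly across partition values; `partitioning_advice` is a hypothetical helper, not a Databricks API.

```python
from typing import Tuple

GB = 1024 ** 3
TB = 1024 ** 4


def partitioning_advice(table_bytes: int, distinct_partition_values: int) -> Tuple[bool, str]:
    """Apply the two rules of thumb: table >= 1 TB and average partition >= 1 GB."""
    if distinct_partition_values < 1:
        raise ValueError("need at least one partition value")
    if table_bytes < TB:
        return False, "table is under 1 TB: leave it unpartitioned"
    # Assumes rows are spread evenly across partition values (rarely exact in practice).
    avg_gb = table_bytes / distinct_partition_values / GB
    if avg_gb < 1:
        return False, f"average partition would be {avg_gb:.2f} GB (< 1 GB): choose a coarser column"
    return True, f"average partition would be {avg_gb:.2f} GB: partitioning is reasonable"


print(partitioning_advice(500 * GB, 10))
print(partitioning_advice(2 * TB, 365))     # e.g. one partition per day for a year
print(partitioning_advice(2 * TB, 10_000))  # e.g. a high-cardinality ID column
```

Skewed data can leave many partitions far below the average, so treat the result as a rough sanity check rather than a decision.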


- 1659 Views

- 1 replies

- 0 kudos

Certification exam

Hello Team, today my exam was scheduled at 7:30 AM. No one contacted me before the exam for any validation or guidelines. About one hour in, the proctor suspended my exam. Please check the video; I was looking only at the screen the whole time. I have rai...


- 0 kudos

Hi Databricks community and @Cert-Team, it has been more than 24 hours and I have not received any update since my Databricks Professional exam was suspended. Please help me sort this out so that I can complete the exam. It is very important for me to complete ...


- 3023 Views

- 4 replies

- 1 kudos

Databricks Data Engineering Learning

I am new to Databricks. I am trying to do the lab work in the Databricks Data Engineering course, and at workbook 4.1 I get the error below. Please help me resolve it. "Expected one and only one cluster definition. Edit the config via the JSON interface to re...


- 1 kudos

I am still unable to resolve this. Any help would be appreciated. Thanks.


- 2039 Views

- 1 replies

- 0 kudos

[Official] Nexalyn Norway experiences and reviews: Nexalyn ingredients, price, buy

Nexalyn Norway experiences, dose, intake: In a world where vitality and performance are often synonymous with success, it is important to maintain peak physical condition. For men, this often extends beyond mere fitness to areas of vitality, virility and g...


- 0 kudos

Click here to buy now from Nexalyn's official website


- 3186 Views

- 1 replies

- 0 kudos

Vector Database

What is the vector database to generate in Databricks?


- 0 kudos

Not sure if I understood the question. If you want to use the Databricks vector database, go to your table > Create vector search index. First, you need to create a Vector Search endpoint (Compute > Vector Search), and you need to have an enabled ser...


- 1031 Views

- 1 replies

- 1 kudos

How do you analyze performance?

I'm curious to hear how you optimize compute, that is, how you dig into the details of Spark execution and improve it.


- 1 kudos

That is it. Usually, people use the run time of a job/query/process as their KPI. Then you check which processes take the most time, drilling down one by one. Sometimes the culprit is a misplaced `.cache()`, `.collect()` or `display()` t...
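The "use run time as the KPI, then drill into the slowest steps" approach can be sketched as a small timer that records wall-clock time per named step and ranks the results. This is a hypothetical helper in plain Python, not a Databricks or Spark API. Keep in mind that Spark evaluates lazily: a step only shows its real cost if it triggers an action (for example a `count()` or a write), so a step that merely adds transformations to the plan will look nearly free.

```python
import time
from contextlib import contextmanager
from typing import Dict, Iterator, List, Tuple


class StepTimer:
    """Accumulate wall-clock time per named step and report the slowest ones."""

    def __init__(self) -> None:
        self.durations: Dict[str, float] = {}

    @contextmanager
    def step(self, name: str) -> Iterator[None]:
        start = time.perf_counter()
        try:
            yield
        finally:
            # Add to earlier runs of the same step; recorded even if the step raised.
            elapsed = time.perf_counter() - start
            self.durations[name] = self.durations.get(name, 0.0) + elapsed

    def slowest(self, n: int = 3) -> List[Tuple[str, float]]:
        return sorted(self.durations.items(), key=lambda kv: kv[1], reverse=True)[:n]


timer = StepTimer()
with timer.step("load"):
    time.sleep(0.05)  # stand-in for e.g. reading a table and calling count()
with timer.step("transform"):
    time.sleep(0.01)
for name, seconds in timer.slowest():
    print(f"{name}: {seconds:.3f}s")
```

Once the slowest step is known, that is where to look for things like an unnecessary `.collect()` or a `.cache()` that is never reused.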


- 2147 Views

- 0 replies

- 0 kudos

Creating a zip file on Azure Storage Explorer

I need to create a zip file containing the CSV files I created from a DataFrame, but I am unable to create a valid zip file that contains all of the CSV files. Is it possible to create a zip file from code in Databricks on Azure storag...
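One possible approach, assuming the CSV output is reachable through a local file path on the driver (for example a Unity Catalog volume path under `/Volumes/...` or a `/dbfs/...` path), is Python's standard `zipfile` module. Spark writes a directory of `part-*.csv` files plus marker files such as `_SUCCESS`, so this sketch zips only the `.csv` files. The function name and all paths are hypothetical. Writing the archive to local driver disk (e.g. `/tmp`) and then copying it to Azure storage, for instance with `dbutils.fs.cp`, avoids depending on how the target filesystem handles the seeks `zipfile` may perform while writing.

```python
import zipfile
from pathlib import Path
from typing import List


def zip_csv_files(src_dir: str, zip_path: str) -> List[str]:
    """Zip every *.csv file under src_dir (recursively) into zip_path.

    Entry names are relative to src_dir, so the archive mirrors the folder layout.
    Returns the entry names that were written.
    """
    src = Path(src_dir)
    csv_files = sorted(p for p in src.rglob("*.csv") if p.is_file())
    names = []
    with zipfile.ZipFile(zip_path, "w", compression=zipfile.ZIP_DEFLATED) as zf:
        for path in csv_files:
            arcname = path.relative_to(src).as_posix()
            zf.write(path, arcname=arcname)
            names.append(arcname)
    return names


# Hypothetical usage on Databricks (paths are placeholders):
# written = zip_csv_files("/Volumes/my_catalog/my_schema/my_volume/export", "/tmp/export.zip")
# dbutils.fs.cp("file:/tmp/export.zip", "abfss://<container>@<account>.dfs.core.windows.net/export.zip")
```

If a single CSV per archive entry is wanted, writing the DataFrame with `coalesce(1)` before export is another option, at the cost of funnelling all data through one task.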


- 2943 Views

- 2 replies

- 2 kudos

New member

Excited to be at Data Summit 2024


- 6375 Views

- 2 replies

- 0 kudos

How can I create multiple workspaces in a single existing Azure Databricks resource?

I have an Azure Databricks resource created in my Azure portal. I want to achieve departmental secrecy within a single Databricks resource, so I am looking for a way to add multiple workspaces to that one Databricks resource. Is it even ...


- 0 kudos

Hi, it is possible to create multiple workspaces from a single Azure account. Go to the Azure portal, click Azure Databricks, click Create, fill in all the details, and your new workspace is ready.



