ipywidgets not loading in Databricks Community Edition

Hi all, I am trying to run commands with ipywidgets, but the output just says "Loading widget...". The same error occurs even when I re-run the cell. Databricks version used: 14.2



Hi all, I have installed the following libraries on my cluster (11.3 LTS, which includes Apache Spark 3.3.0 and Scala 2.12): numpy==1.21.4 and flair==0.12. On executing `from flair.models import TextClassifier`, I get the following error: "numpy.ndarray size chan...

You have changed the numpy version, and presumably the new version is not compatible with other libraries in the runtime. If flair requires a later numpy, use a later DBR runtime for best results, since it already ships with a later numpy version.
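Before pinning a package, it can help to check what the runtime already provides. The following is a minimal sketch, assuming it is run in a Python notebook cell on the cluster; the helper names `installed_version` and `version_tuple` are hypothetical, and the `1.21.4` pin is the one quoted in the question.

```python
import re
from importlib.metadata import PackageNotFoundError, version
from typing import Optional, Tuple


def installed_version(package: str) -> Optional[str]:
    """Return the installed version string of a package, or None if absent."""
    try:
        return version(package)
    except PackageNotFoundError:
        return None


def version_tuple(version_string: str) -> Tuple[int, ...]:
    """Reduce a version string to its first three numeric parts for comparison."""
    return tuple(int(part) for part in re.findall(r"\d+", version_string)[:3])


# The pin from the question; compare it with whatever the runtime ships.
pinned = "1.21.4"
current = installed_version("numpy")

if current is None:
    print("numpy is not installed in this environment")
elif version_tuple(current) != version_tuple(pinned):
    print(f"runtime numpy is {current}, which differs from the pinned {pinned}")
else:
    print(f"runtime numpy already matches the pinned {pinned}")
```

If the runtime version differs from the pin, that is a hint that other preinstalled libraries were built against the runtime's version rather than the pinned one.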

Dear Community Members, I am trying to deploy a workflow using DAB. After deploying, if I update the same workflow under a different bundle name, it creates a new workflow instead of updating the existing one. Also, when I am trying to use sa...

@nicole_lu_PM: Do you have any suggestions or feedback on the above question? It would be really helpful to get some insights.

I want to restore the committed changes (before and after view) in my branch. The pull request was abandoned in Azure DevOps, so the branch was never merged. The modified notebooks therefore still exist, but the commits do not. How can I retrieve aga...

Hi, how can one go about assessing the value created due to the implementation of DBRX?

How does Databricks differ from Snowflake with respect to AI tooling?

org.apache.spark.SparkException: Job aborted due to stage failure:

If you see "slave lost" along with "Job aborted due to stage failure", it is due to too little memory being allocated to the executors: the more cores per executor, the more memory is required. The other possibility is that you have used the max CPU available in the cluster and the d...
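The relationship between cores and memory mentioned above can be checked with simple arithmetic. This is a rough sketch, not a sizing rule: it divides the executor heap (the `spark.executor.memory` setting) evenly across the task slots (the `spark.executor.cores` setting) and ignores memory overhead. The 8 GB figure and the function name are illustrative assumptions, not values from the thread.

```python
def memory_per_core_gb(executor_memory_gb: float, executor_cores: int) -> float:
    """Approximate heap available to each concurrently running task."""
    if executor_cores < 1:
        raise ValueError("executor_cores must be at least 1")
    return executor_memory_gb / executor_cores


# Illustrative numbers only: the same 8 GB executor with more cores
# leaves less memory for each task running at the same time.
for cores in (2, 4, 8):
    share = memory_per_core_gb(8, cores)
    print(f"{cores} cores -> {share:.1f} GB per task")
```

Doubling the cores per executor without raising its memory halves the share each task gets, which is one way executors end up being lost under memory pressure.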

I'm using a shared access cluster and am getting this error while trying to upload to Qdrant. #embeddings_df = embeddings_df.limit(5) options = { "qdrant_url": QDRANT_GRPC_URL, "api_key": QDRANT_API_KEY, "collection_name": QDRANT_COLLEC...

We have a use case where up to 200 jobs may execute at once, but a few notebooks are failing with the error "run failed with error message Too many execution contexts are open right now.(Limit set currently to 150)." Can anyone help how...

Hello, you can refer to the following KB article for information and best practices regarding the issue you are facing: https://kb.databricks.com/en_US/notebooks/too-many-execution-contexts-are-open-right-now Best practices: Use a job cluster instea...
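Separately from the KB guidance, if the caller controls how the runs are launched, one client-side option is to cap how many are in flight at once. The sketch below illustrates that throttling idea only and is not taken from the KB article: `run_notebook` is a hypothetical placeholder for whatever actually triggers a run, and the cap of 100 is an assumed value chosen to stay under the 150 limit quoted in the error.

```python
import threading
import time
from concurrent.futures import ThreadPoolExecutor

MAX_IN_FLIGHT = 100  # assumption: any value safely below the reported limit of 150

_lock = threading.Lock()
_active = 0
peak_active = 0


def run_notebook(job_id: int) -> int:
    """Hypothetical placeholder: replace the sleep with the real run trigger."""
    global _active, peak_active
    with _lock:
        _active += 1
        peak_active = max(peak_active, _active)
    try:
        time.sleep(0.01)  # stands in for the actual notebook run
        return job_id
    finally:
        with _lock:
            _active -= 1


def run_all(job_ids, max_in_flight: int = MAX_IN_FLIGHT):
    """Run every job, never allowing more than max_in_flight at once."""
    with ThreadPoolExecutor(max_workers=max_in_flight) as pool:
        return list(pool.map(run_notebook, job_ids))


results = run_all(range(200))
print(f"completed {len(results)} runs, peak concurrency {peak_active}")
```

The pool size bounds concurrency, so the 200 runs from the question are queued and drained in batches rather than all opened at once.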

There is no distinction to make: it's VMs and you can't choose. Databricks SQL Serverless Warehouses use K8s under the hood, though.

Hello, everyone. I'm trying to import data into Power BI from a view in Azure Databricks that contains over 10 million records. Every time, it fails with the following error: "Failed to save modifications to the server. Error returned: 'OLE DB or O...

Users have the option of either using OAuth or switching to a Personal Access Token as a solution. I am sorry to say that this is the only method I am aware of at present.

Databricks summit 2024 unity catalog migration!!

Hi all, I recently enabled SSO on my Databricks account. Now, when a user signs in, they see "No workspaces have been enabled for your account", which is the expected behavior as I haven't created any workspaces yet.

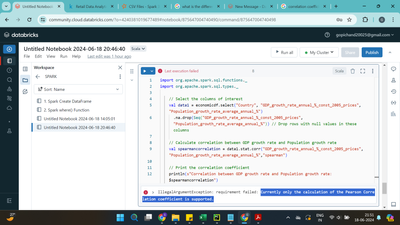

While working with Spark in Scala, I tried computing the Pearson correlation and it works, but when I tried the Spearman rank correlation it displayed an error like "Currently only the calculation of the Pearson Correlation coefficient is support...
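For context, Spearman's rank correlation is Pearson's correlation computed on the ranks of the values. The sketch below shows that relationship in plain Python rather than Spark Scala, assuming the data fits in memory; the function names are hypothetical and it is not a substitute for a distributed implementation.

```python
from math import sqrt


def average_ranks(values):
    """Assign 1-based ranks, giving tied values the average of their positions."""
    order = sorted(range(len(values)), key=lambda i: values[i])
    ranks = [0.0] * len(values)
    i = 0
    while i < len(order):
        j = i
        # Extend j over the run of values equal to the one at position i.
        while j + 1 < len(order) and values[order[j + 1]] == values[order[i]]:
            j += 1
        average = (i + j) / 2 + 1
        for k in range(i, j + 1):
            ranks[order[k]] = average
        i = j + 1
    return ranks


def pearson(xs, ys):
    """Pearson correlation coefficient of two equal-length sequences."""
    n = len(xs)
    mean_x, mean_y = sum(xs) / n, sum(ys) / n
    cov = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    var_x = sum((x - mean_x) ** 2 for x in xs)
    var_y = sum((y - mean_y) ** 2 for y in ys)
    if var_x == 0 or var_y == 0:
        raise ValueError("correlation is undefined for constant input")
    return cov / sqrt(var_x * var_y)


def spearman(xs, ys):
    """Spearman rank correlation: Pearson applied to the ranks."""
    return pearson(average_ranks(xs), average_ranks(ys))


# A monotonic but non-linear relationship still gives a rank correlation of 1.
print(spearman([1, 2, 3, 4, 5], [1, 4, 9, 16, 25]))
```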

For a data processing pipeline I use structured streaming and arbitrary stateful processing. I was wondering if the partitioning over several worker nodes and thus updating the state from different worker nodes has to be considered (e.g. using a lock...

| User | Count |
|---|---|
| | 143 |
| | 135 |
| | 57 |
| | 46 |
| | 42 |