- 5087 Views

- 1 replies

- 0 kudos

If a user has only the 'select' permission on a table in Unity Catalog but no permission on the external location

Hi, suppose a user has the 'Select' permission on a table but no permission on the table's external location. Will the user be able to read the data from the table? If yes, how will the user be able to read the wh...

Hi @Retired_mod, thanks for the response. Why is the hyperlink command not showing in full?

- 4591 Views

- 5 replies

- 0 kudos

How to switch Workspaces via menu

Hello, in various webinars and videos featuring Databricks instructors, I have noticed that it is possible to switch between different workspaces using the top menu within a workspace. However, in our organization, we have three separate workspaces wi...

Hi @RobinK, looking at the screenshots provided, I can see you have access to different workspaces but the dropdown is still not visible for you. I also checked whether there is a setting for this but didn't find one. You can raise a ticket to Databricks and ...

- 4319 Views

- 1 replies

- 1 kudos

Resolved! Issue with creating cluster on Community Edition

I have recently signed up for Databricks Community Edition and have yet to successfully create a cluster. I get this message when trying to create a cluster: "Self-bootstrap failure during launch. Please try again later and contact Databricks if the pro...

Hi @dustint121, it's a Databricks internal issue; wait for some time and it will resolve.

- 5016 Views

- 3 replies

- 1 kudos

Resolved! Databricks community edition down?

I am getting this error when trying to create a cluster: "Self-bootstrap failure during launch. Please try again later and contact Databricks if the problem persists. Node daemon fast failed and did not answer ping for instance"

I still have this issue, and have yet to successfully create a cluster instance. Please advise on how this error was fixed.

- 4127 Views

- 3 replies

- 0 kudos

Autoloader update table when new changes are made

Hello, every day a new file of the same name gets sent to my storage account with old and new data appended at the end. Columns may also be added during one of these file updates. This file does a complete overwrite of the previous file. Is it possibl...

This may be helpful, particularly the bit on allowing overwrites: https://docs.databricks.com/en/ingestion/auto-loader/faq.html

- 4210 Views

- 1 replies

- 0 kudos

System Tables - Billing schema

Hi Experts! We enabled UC and also the system table (Billing) to start monitoring usage and cost. We were able to create a dashboard where we can see the usage and cost for each workspace. The usage table in the billing schema has workspace_id but I'd...

@Retired_mod I'm also not seeing the compute names logged in the system billing tables. Are they located elsewhere?

- 1403 Views

- 0 replies

- 0 kudos

Azure Oauth Passthrough with the Go Driver

Can anyone point me towards some resources for achieving this? I already have the token. Trying with: dbsql.WithAccessToken(settings.Token) But I'm getting the following error: Unable to load OAuth Config: request error after 1 attempt(s): unexpected HT...

- 3432 Views

- 1 replies

- 0 kudos

Can browse external Storage, but can not create a Table from there - VNET, ADLSGen2

Hi there! I hope somebody here can help me. We have created a new Databricks account on Azure with the ARM template for VNet injection. We have all the subnets etc., Unity Catalog active, and the connector for Databricks. I now want to create my first tab...

Hi, to solve this problem, the following Microsoft documentation can be used to configure the NCC to enable the connection between the private Azure storage and the serverless resources: https://learn.microsoft.com/en-us/azure/databricks/security/netwo...

- 7492 Views

- 6 replies

- 1 kudos

DataFrame to CSV write has issues due to multiple commas inside a row value

Hi all, I am working on converting data containing JSON fields with embedded commas into CSV format. I am facing challenges because the commas within the JSON are misinterpreted as column delimiters during the conversion process. I tried several methods to modify...

Hi Sai, I assume that the problem comes not from PySpark but from Excel. I tried to reproduce the error and couldn't, which is a good thing, right? Please try the following: df.write.format("csv").save("/Volumes/<my_catalog_name>/<m...
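To illustrate why a correctly quoted CSV should survive embedded commas, here is a minimal sketch using only Python's standard `csv` module rather than PySpark. The column names `id` and `payload` and the sample JSON are made up for illustration; the point is only that a quoted field containing commas is read back as one field by a CSV-aware parser.

```python
import csv
import io
import json

# Hypothetical row: the "payload" column holds a JSON string with embedded commas.
row = {"id": 1, "payload": json.dumps({"a": 1, "b": [2, 3]})}

# Write the row with every field quoted; inner double quotes are doubled by the writer.
buffer = io.StringIO()
writer = csv.DictWriter(buffer, fieldnames=["id", "payload"], quoting=csv.QUOTE_ALL)
writer.writeheader()
writer.writerow(row)
csv_text = buffer.getvalue()

# Read it back with a CSV-aware parser: the JSON string comes back as a single field.
buffer.seek(0)
parsed = list(csv.DictReader(buffer))
restored = json.loads(parsed[0]["payload"])
```

If a file like this still looks split into extra columns, the viewer's import settings (for example the quote character it expects) are a likely place to check, which is consistent with the suggestion above that the issue may lie with Excel rather than with the writer.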

- 4563 Views

- 1 replies

- 0 kudos

Access Delta sharing from Azure Data Factory

I recently got access to Delta Sharing and I am looking to access the data from the tables in the share through ADF. I used linked services such as REST API and HTTP and successfully established a connection using the credential file token and HTTP path, h...

Hey, I think you'll need to use a Databricks activity instead of Copy. See: https://learn.microsoft.com/en-us/azure/data-factory/connector-overview#integrate-with-more-data-stores https://learn.microsoft.com/en-us/azure/data-factory/transform-data-dat...

- 5002 Views

- 4 replies

- 1 kudos

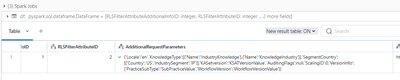

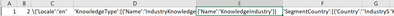

Redefine ETL strategy with PySpark approach

Hey everyone! I have some previous experience with data engineering but am totally new to Databricks and Delta tables. I'm starting this thread to ask some questions and get help designing a process. So I have essentially 2 Delta tables (sa...

Hi @databird, you can review the code of each demo by opening the content via "View the Notebooks" or by exploring the following repo: https://github.com/databricks-demos (you can try to search for "merge" to see all the occurrences, for example) T...

- 3207 Views

- 2 replies

- 0 kudos

There is no certification number in the Databricks certificate that I received after passing the

I recently enrolled for the Databricks data engineer certification, took the exam, and passed it. I received the certificate as a PDF file along with a URL at which I can see my certificate and ba...

Hi @vinay076 Thanks for asking! Our support team can provide you with a credential ID. Please file a ticket with our support team, give them your email associated with your certification, and they can get you the credential ID.

- 10810 Views

- 5 replies

- 4 kudos

Resolved! How to obtain a list of workflows in Databricks?

I need to obtain a list of my Databricks workflows with their job IDs in a Databricks notebook.

Hi @VabethRamirez, instead of calling the API directly, you can also use the Databricks Python SDK: %pip install databricks-sdk --upgrade dbutils.library.restartPython() from databricks.sdk import WorkspaceClient w = WorkspaceClient() job_list = w.jobs...
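Since the snippet in the reply is cut off, here is a hedged sketch of how the SDK approach could be completed. It assumes `databricks-sdk` is installed and that authentication is picked up from the notebook environment; the helper names `list_job_ids` and `print_jobs_from_workspace` are illustrative and not part of the SDK.

```python
def list_job_ids(client):
    """Return (job_id, job_name) pairs for every job visible to the client.

    `client` is expected to behave like databricks.sdk.WorkspaceClient:
    `client.jobs.list()` yields objects with `job_id` and `settings.name`.
    """
    pairs = []
    for job in client.jobs.list():
        # settings may be missing on some entries, so guard before reading the name
        name = job.settings.name if job.settings else None
        pairs.append((job.job_id, name))
    return pairs


def print_jobs_from_workspace():
    """Hypothetical entry point for a notebook cell."""
    # Imported lazily so list_job_ids can be used without the SDK installed.
    from databricks.sdk import WorkspaceClient

    w = WorkspaceClient()  # assumes credentials come from the notebook context
    for job_id, name in list_job_ids(w):
        print(job_id, name)
```

In a notebook, calling `print_jobs_from_workspace()` after the `%pip install` and Python restart shown in the reply would print one line per job.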

- 2275 Views

- 1 replies

- 0 kudos

Can the query history API /api/2.0/sql/history/queries return data older than 30 days?

I am using this API, but it returns data for only the last 30 days. Can it return data older than 30 days?

Hi @RahulChaubey, The query history system table was announced during the Q1 roadmap webinar (see the recording, 32:25). There is a chance that it will provide data with a horizon beyond 30 days. Meanwhile, you can enable system tables - I hope some ...

- 3886 Views

- 2 replies

- 0 kudos

Can a Delta table be the source of streaming/Auto Loader?

Hi, since Auto Loader only accepts "append-only" data as the source, I am wondering if a Delta table can also be the source. Will VACUUM (deleting stale files) or _delta_log (which creates nested files in a different format than Parquet) break A...

Hi @QPeiran, Auto Loader is a feature for ingesting files into the data platform. Once your data is stored in a Delta table, you can rely on spark.readStream.table("<my_table_name>") to continuously read from the table. Take a look at ...
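The `spark.readStream.table(...)` call mentioned in the reply can be wrapped as follows. This is a minimal sketch, assuming a Spark session with Delta support is available (as in a Databricks notebook); the helper name `stream_from_delta_table` and the table name in the usage note are placeholders.

```python
def stream_from_delta_table(spark, table_name: str):
    """Return a streaming DataFrame that reads incrementally from a table.

    `spark` is expected to be a SparkSession; `table_name` is the name of an
    existing Delta table (a placeholder here, not taken from the thread).
    """
    # DataStreamReader.table reads the table as a stream instead of a one-off batch.
    return spark.readStream.table(table_name)
```

For example, `stream_from_delta_table(spark, "<my_table_name>")` returns a streaming DataFrame that can then be written out with `writeStream` and a checkpoint location of your choice.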
