Overwatch: The Observability Tool for Databricks

Databricks Employee

11-28-2023

01:19 PM

What is Overwatch?

Overwatch is maintained as part of Databricks Labs and supports all the major clouds: Azure, AWS, and GCP. In this post we will look at a variety of analytics made possible by Overwatch, then discuss what a multi-Workspace deployment is and how to implement it!

Features of Overwatch

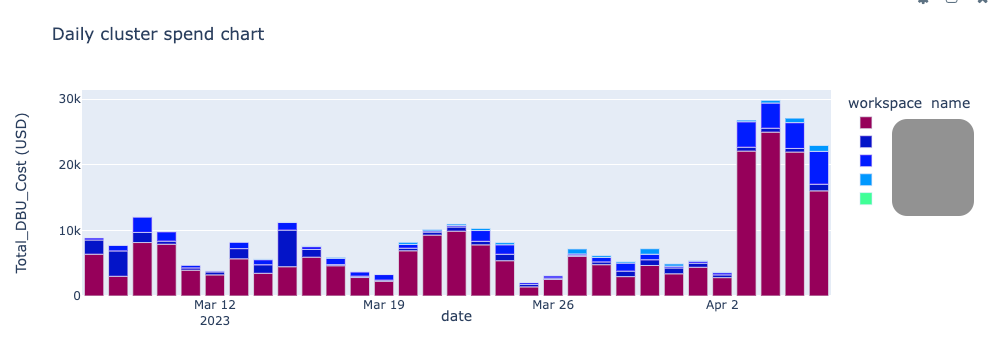

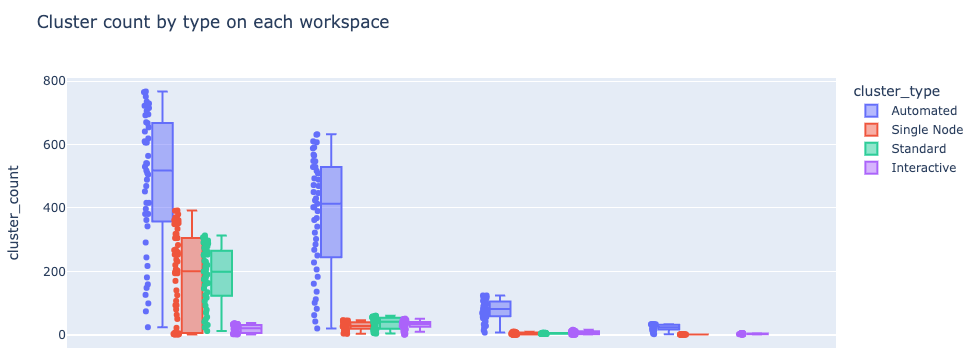

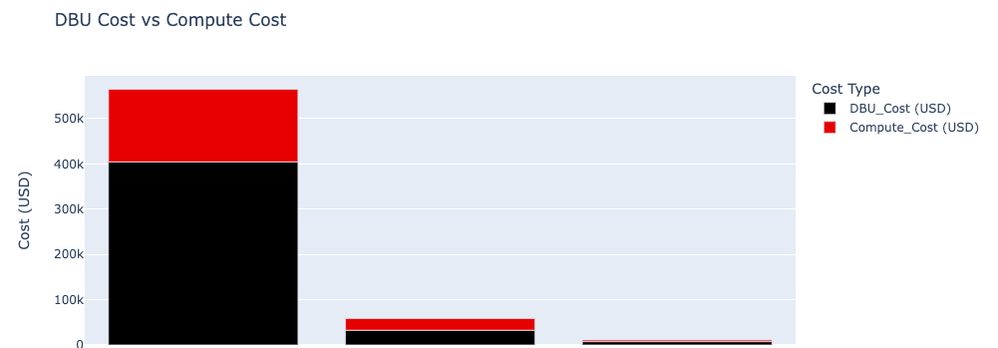

Monitoring Workspaces

1. Total spend

2. Cluster count by type

3. DBU cost vs compute cost

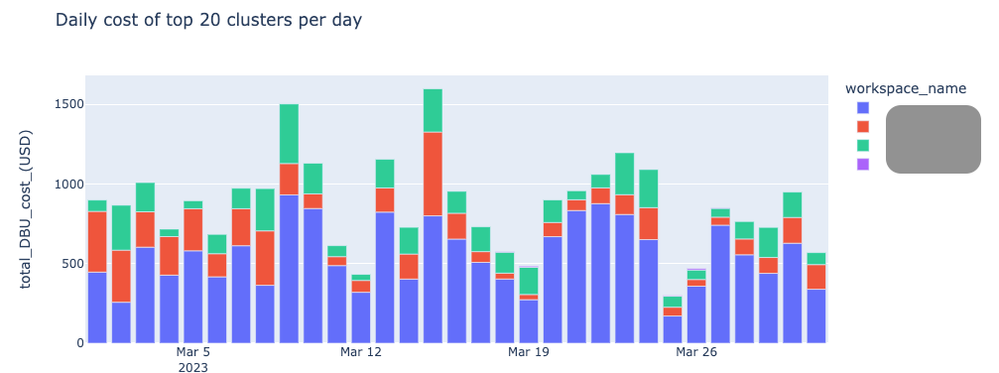

Monitoring Clusters

1. Most expensive clusters by day

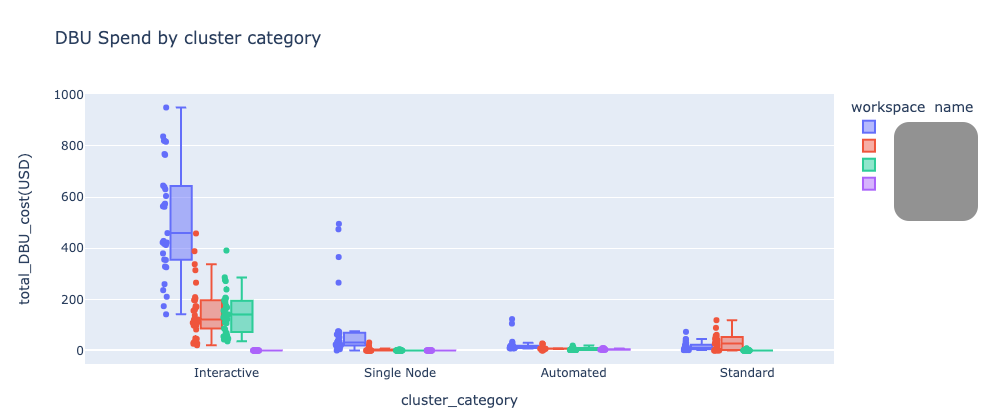

2. DBU spend by cluster type

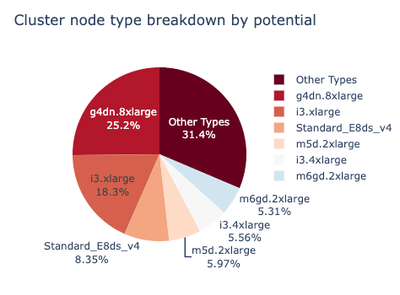

3. Cluster node types

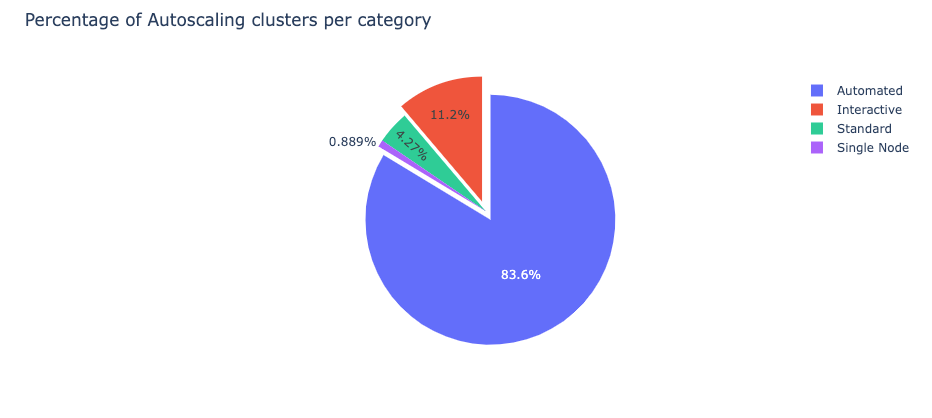

4. Percentage of auto-scaling clusters

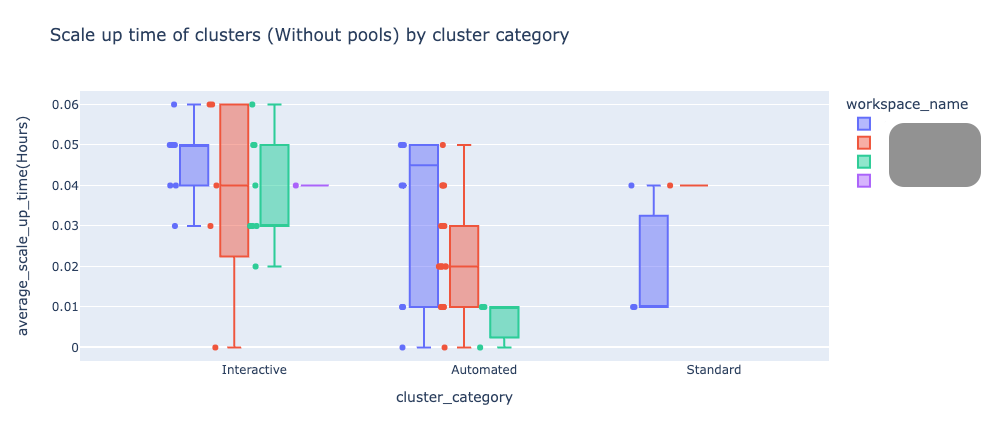

5. Scale up time of clusters without pools

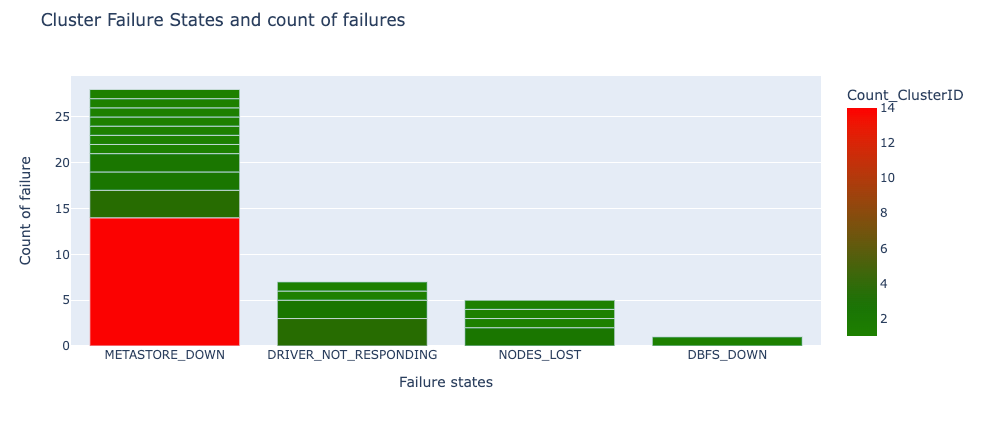

6. Cluster failure state and count of failures

Monitoring Jobs

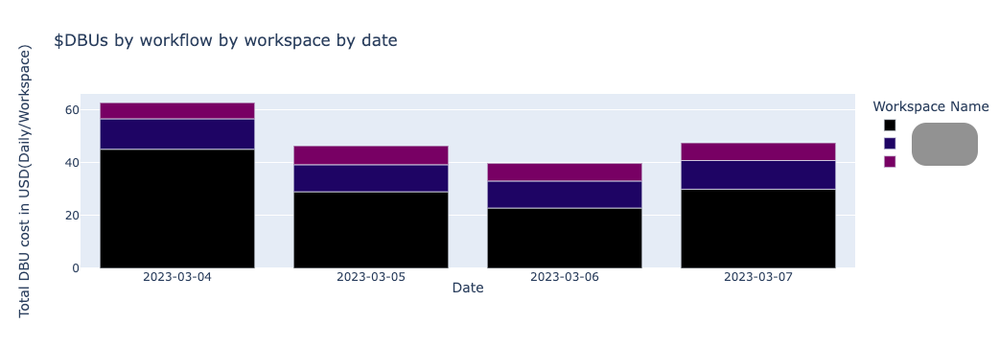

1. DBUs by Workflow by Workspace by date

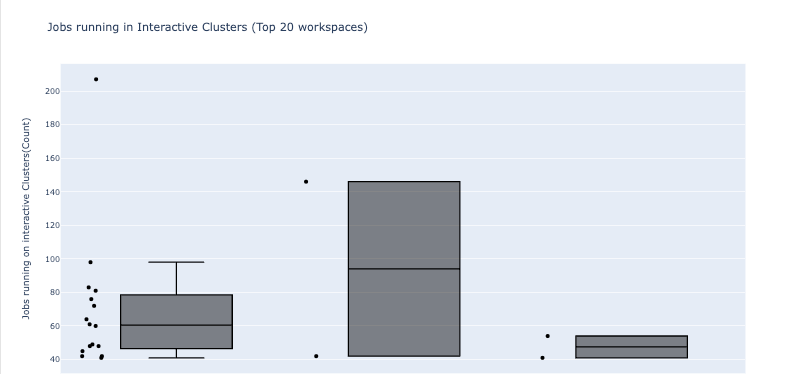

2. Jobs running in Interactive clusters

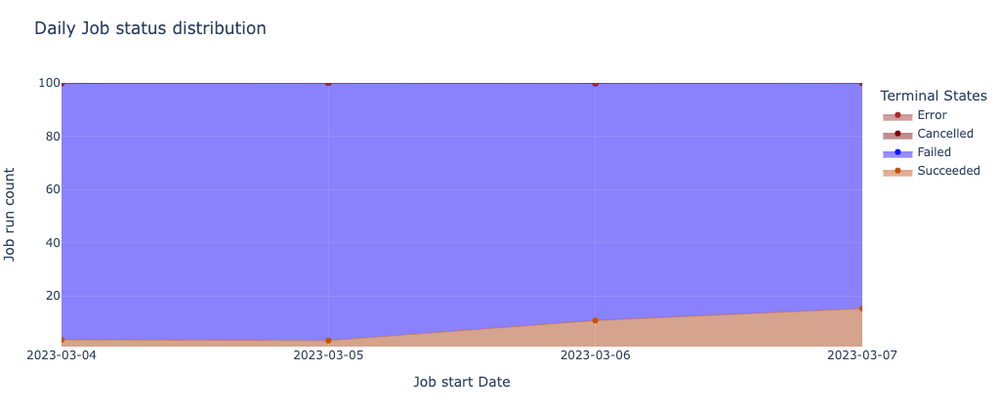

3. Daily job status distribution

4. Impact of failure by Workspace

Here are some other analyses you can perform with Overwatch:

- Last 30 days spend

Aggregate cost of cluster spend in all Workspaces for the last 30 days.

- Month-over-month change in spend

Percentage change in cluster spend compared with the previous month. A negative percentage means spend is down from the previous month; a positive percentage means it is up.

- Top 3 cluster spend by Workspace in the last 30 days

The three clusters with the highest spend in each Workspace.

- Week-over-week top 10 fastest growing clusters by Workspace

The 10 clusters whose spend grew fastest compared with the previous week. A negative percentage means a cluster's spend fell relative to the previous week; a positive percentage means it rose.

- Last 7 days of spend by Databricks Workflow

Expenses for each job in the last 7 days.

- Last 7 days of spend for Databricks Workflows executed on interactive clusters

Expenses for jobs run on interactive clusters in the last 7 days.
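The month-over-month and top-N analyses above come down to simple aggregations. The following is a minimal sketch in plain Python, not a query against Overwatch's actual tables: the record fields (`workspace`, `cluster`, `cost`) and the sample figures are made up for illustration. In practice you would run the equivalent aggregation against the Overwatch output database.

```python
from collections import defaultdict


def pct_change(current, previous):
    """Percentage change from previous to current; None if previous is zero."""
    if previous == 0:
        return None
    return (current - previous) / previous * 100.0


def top_n_clusters_by_workspace(records, n=3):
    """Return {workspace: [(cluster, total_cost), ...]} with the n costliest clusters."""
    # Sum the cost per (workspace, cluster) pair.
    totals = defaultdict(float)
    for r in records:
        totals[(r["workspace"], r["cluster"])] += r["cost"]

    # Group the per-cluster totals by workspace.
    by_workspace = defaultdict(list)
    for (workspace, cluster), cost in totals.items():
        by_workspace[workspace].append((cluster, cost))

    # Keep only the n most expensive clusters in each workspace.
    return {
        workspace: sorted(clusters, key=lambda c: c[1], reverse=True)[:n]
        for workspace, clusters in by_workspace.items()
    }


# Hypothetical sample data, for illustration only.
records = [
    {"workspace": "ws-a", "cluster": "etl", "cost": 120.0},
    {"workspace": "ws-a", "cluster": "etl", "cost": 80.0},
    {"workspace": "ws-a", "cluster": "adhoc", "cost": 50.0},
    {"workspace": "ws-b", "cluster": "ml", "cost": 300.0},
]

print(pct_change(90.0, 120.0))  # -25.0: spend is down from the previous period
print(top_n_clusters_by_workspace(records, n=1))
```

The same `pct_change` helper covers both the month-over-month and the week-over-week views; only the period used to bucket the spend differs.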

What is a multi-Workspace deployment of Overwatch?

If you have multiple Databricks Workspaces and want to monitor them together, you can set up a multi-Workspace deployment. Rather than setting up monitoring separately in each of, say, 100 Workspaces, a single job in one Workspace collects data from all the Workspaces you specify and writes it to a centralized database in your Lakehouse. You can then query that data from any Workspace you choose.

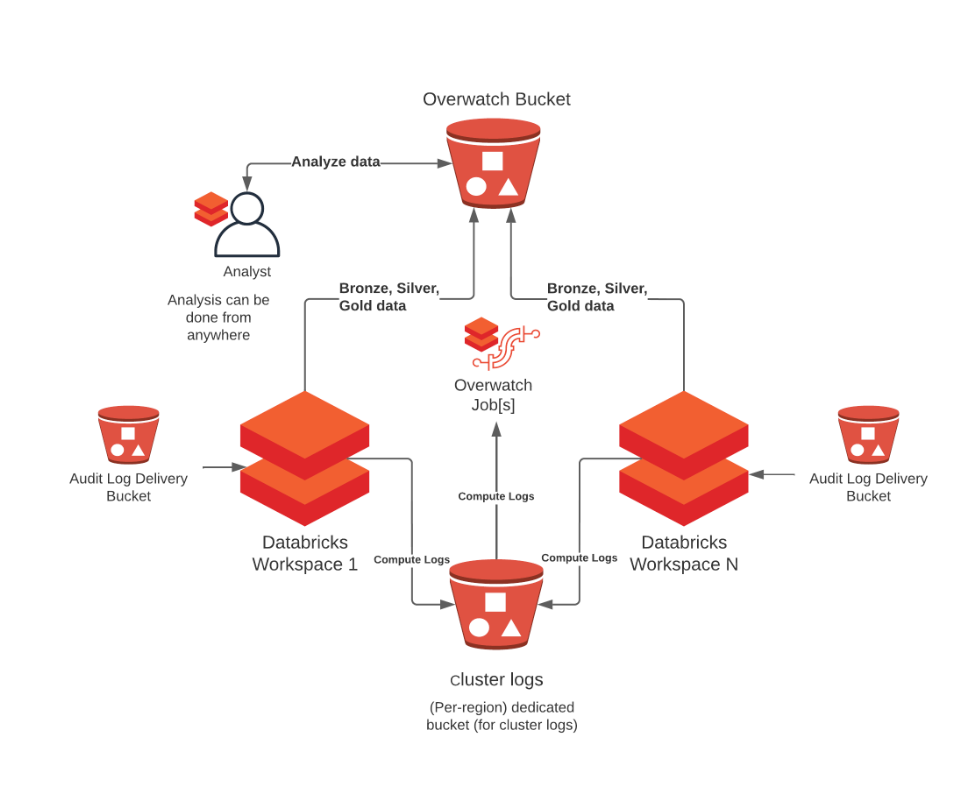

Architecture of a multi-Workspace deployment

Overwatch can be deployed on a single, primary Workspace and then retrieve data from all other Databricks Workspaces. For more details on requirements, see Multi-Workspace Consideration. In many cases, some Workspaces should be able to monitor many Workspaces while others should monitor only themselves. Where the output data is co-located and who should be able to access which data also come into play. This reference architecture can accommodate all of these needs. To learn more, walk through the deployment steps in the official Overwatch documentation.

How to perform a multi-Workspace deployment

- Download the CSV file.

- Fill in the CSV with the details of the Workspaces you want to monitor. Refer to the column descriptions to learn more about each column.

- Add the dependent library.

- Run it via Notebook (example here), or run it as a JAR.
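Before running the deployment, it can help to sanity-check the filled-in CSV. The sketch below is a generic check and not part of Overwatch: it reads the header from the CSV itself and reports rows with blank or missing cells. The function name `find_incomplete_rows` and the sample column names (`name`, `url`) are placeholders, not the real template columns. Some columns in the actual template may legitimately be left empty, so treat the output as a list of cells to review against the column descriptions.

```python
import csv
import io


def find_incomplete_rows(csv_text):
    """Return (line_number, [columns that are blank or missing]) per incomplete row."""
    reader = csv.DictReader(io.StringIO(csv_text))
    problems = []
    for row in reader:
        # Cells missing at the end of a short row come back as None.
        blank = [
            name
            for name in reader.fieldnames
            if not (row.get(name) or "").strip()
        ]
        if blank:
            # line_num is the line in the CSV text that was just read.
            problems.append((reader.line_num, blank))
    return problems


# Placeholder columns and values, for illustration only.
sample = "name,url\nprod,https://example.invalid\ndev,\n"

print(find_incomplete_rows(sample))  # [(3, ['url'])]
```

To check a file on disk, read it first, for example with `open(path, newline="").read()`, and pass the text to the function.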

For more details and instructions, please visit the official site for Overwatch. You can raise an issue directly at this link.
