- 1282 Views

- 2 replies

- 3 kudos

Resolved! Error when trying to destroy databricks_permissions with OpenTofu

Hi, in our company's project we created a databricks_user for a service account (which is needed for our deployment process) via OpenTofu and afterwards adjusted the permissions on that "user's" user folder using the databricks_permissions resource. resour...

Hi @MiriamHundemer, the issue occurs because the owner of the home folder (in this case, the databricks_user.databricks_deployment_sa service account) often has an unremovable CAN_MANAGE permission on its own home directory. When OpenTofu attempts t...

- 1453 Views

- 4 replies

- 1 kudos

Resolved! Deploy Databricks workspace on Azure with Terraform - failed state: legacy access

I'm trying to deploy a workspace on Azure via Terraform and I'm getting the following error: "INVALID_PARAMETER_VALUE: Given value cannot be set for workspace~<id>~default_namespace_ws~ because: cannot set default namespace to hive_metastore since leg...

I found the issue: the setting that automatically assigns workspaces to this metastore was checked. Unchecking it and assigning the metastore manually worked.

- 619 Views

- 1 reply

- 1 kudos

Clarification on Unity Catalog Metastore - Metadata and storage

Where does the Unity Catalog metastore metadata actually reside? Is it stored and managed in the Databricks account (control plane)? Or is it stored in the customer-managed S3 bucket when we create a bucket for the Unity Catalog metastore? I want to c...

@APJESK I replied here: https://community.databricks.com/t5/data-governance/clarification-on-unity-catalog-metastore-metadata-and-storage/td-p/133389

- 893 Views

- 3 replies

- 3 kudos

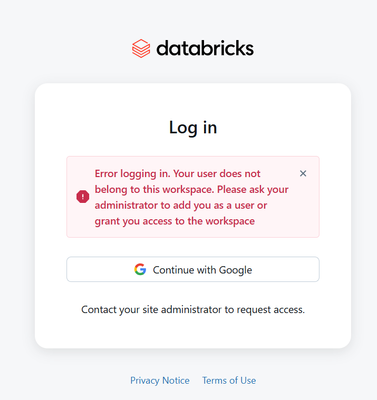

Resolved! Databricks GCP login with company account

I created a Gmail account under my company account and used it to log in to Databricks on GCP. It worked fine until yesterday, when I logged in to the Gmail account and was asked for another Gmail ID, so I provided a new one. Today I am not able to log in usi...

Hello @xavier_db! Were you able to get this login issue resolved? If yes, it would be great if you could share what worked for you so others facing the same problem can benefit as well.

- 1367 Views

- 4 replies

- 6 kudos

Resolved! Is there a way to register S3 compatible tables?

Hi everyone, I have successfully registered AWS S3 tables in Unity Catalog, and I would like to register S3-compatible ones as well. However, to create an EXTERNAL LOCATION in Unity Catalog, it seems I must register a credential, and the only supported credentia...

Hey @tabasco, did you check out the External Table documentation in the Databricks AWS docs? External Location: https://docs.databricks.com/aws/en/sql/language-manual/sql-ref-external-locations Credentials: https://docs.databricks.com/aws/en/sql/language-m...

- 1773 Views

- 1 reply

- 1 kudos

Workload identity federation policy

Dear all, can I create a single workload federation policy for all DevOps pipelines? Our setup: we have code version-controlled in GitHub repos, and we currently use Azure DevOps pipelines to authenticate with Databricks via a service principal and d...

Hi @noorbasha534, the docs give the following example of subject requirements for Azure DevOps. The subject (sub) claim must uniquely identify the workload, so as long as all of your pipelines reside in the same organization, same project ...

- 3490 Views

- 6 replies

- 9 kudos

Resolved! Is it possible to restore a deleted catalog and schema?

Is it possible to restore a deleted catalog and schema? If CASCADE is used, the catalog is dropped even though schemas and tables are present in it. Is it possible to restore the catalog, or to restrict the use of the CASCADE command? Thank you.

@Louis_Frolio I cannot click any "Accept as Solution" button, I believe because I was not the one who created the post.

- 1886 Views

- 1 reply

- 1 kudos

Resolved! Dev/Prod Environments in AWS: Separate Accounts vs. Separate Workspaces?

Hello everyone, I'm looking for some advice on best practices. When setting up development and production environments for Databricks on AWS, is it better to use completely separate AWS accounts, or is it sufficient to use separate workspaces within a...

Hi @tana_sakakimiya, allow me to copy and paste a brilliant answer to a similar question, provided by user Isi: "Option A: Multiple Databricks accounts and multiple AWS accounts. This model offers the highest level of isolation. Each environment lives in...

- 5046 Views

- 3 replies

- 4 kudos

Resolved! Enable the "Billable Usage Download" Accounts API on our Azure

Hi Databricks Support, could you please confirm whether the Billable Usage Download endpoint can be enabled on an Azure Databricks account, similar to how it's available on AWS/GCP? If yes, what steps should be followed? Else ...

Thank you! I finally figured out what to do.

- 4626 Views

- 1 reply

- 1 kudos

Mismatched CUDA/cuDNN version on Databricks Runtime GPU ML version

I have a cluster on Databricks with the configuration Databricks Runtime Version 16.4 LTS ML Beta (includes Apache Spark 3.5.2, GPU, Scala 2.12), and another cluster with the configuration 16.0 ML (includes Apache Spark 3.5.2, GPU, Scala 2.12). According to...

There could be library-related conflicts in 16.0 ML that were fixed in 16.4 ML. I would always recommend using the LTS version. Thanks

- 820 Views

- 2 replies

- 1 kudos

Databricks JDBC driver fails when unsupported property is submitted

Hello! The Databricks JDBC driver applies a property that is not supported by the connector as a Spark server-side property for the client session. How can I avoid that? With some tools I do not have 100% control, e.g. they may add a custom JDBC connec...

Hi @Kaitsu, the documentation states that if you specify a property that is not supported by the connector, then the connector attempts to apply the property as a Spark server-side property for the client session. Unlike many other JDBC drivers that ...

- 4362 Views

- 2 replies

- 2 kudos

Pre-loading Docker images to cluster pool instances still requires Docker URL at cluster creation

I am trying to pre-load a Docker image to a Databricks cluster pool instance. As per this article, I used the REST API to create the cluster pool and defined a custom Azure container registry as the source for the Docker images. https://learn.microsoft....

@NadithK Pre-loading Docker images to cluster pool instances is for performance optimization (faster cluster startup), but you still must specify the Docker image in your cluster configuration. The pre-loading doesn't eliminate the requirement to dec...
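To illustrate the point in the reply above, here is a minimal sketch of a cluster-creation payload that draws nodes from a pool and still names the Docker image explicitly. The field names (`instance_pool_id`, `docker_image`, `url`) follow the Databricks Clusters API as commonly documented, but treat them as assumptions and check them against the current API reference. The helper `build_cluster_spec` and every value passed to it are hypothetical placeholders, and registry authentication is left out.

```python
import json


def build_cluster_spec(cluster_name, spark_version, instance_pool_id,
                       docker_image_url, num_workers=1):
    """Build a cluster-creation payload (hypothetical helper).

    The pool may already have the image pre-loaded, but the cluster
    spec still has to declare which image to run.
    """
    if not docker_image_url:
        raise ValueError(
            "docker_image_url is required even when the pool pre-loads the image"
        )
    return {
        "cluster_name": cluster_name,
        "spark_version": spark_version,
        # Nodes come from the pool, so no node type is set here.
        "instance_pool_id": instance_pool_id,
        "num_workers": num_workers,
        # Assumed field name from the Clusters API; must match the
        # image that the pool pre-loaded to benefit from the cache.
        "docker_image": {"url": docker_image_url},
    }


# Placeholder values for illustration only.
spec = build_cluster_spec(
    cluster_name="example-cluster",
    spark_version="example-runtime-version",
    instance_pool_id="example-pool-id",
    docker_image_url="example.azurecr.io/example-image:latest",
)
print(json.dumps(spec, indent=2))
```

The sketch only builds the request body; sending it to the workspace and supplying credentials for a private registry are out of scope here.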

- 2478 Views

- 4 replies

- 3 kudos

Resolved! Databricks default package repositories

I have added an extra-index-url to the default package repository in Databricks, pointing to a repository in Azure Artifacts. Libraries from it install on job clusters but not on all-purpose clusters. Below is the rel...

Hello @tariq! Did the suggestions shared above help address your issue? If so, please consider marking one of the responses as the accepted solution. If you found a different approach that worked for you, sharing it with the community would be really...

- 1697 Views

- 1 reply

- 3 kudos

Resolved! Databricks Usage Dashboard - Tagging Networking Costs

Problem Overview: Our team has successfully integrated Azure Databricks Usage Dashboards to monitor platform-related costs. This addition has delivered valuable insights into our spending patterns. However, we've encountered a tagging issue that's prov...

There is no direct way to tag certain Azure networking resources (such as network interfaces, public IPs, or managed disks) so that their costs inherit custom tags like "projectRole" in cost reports, because many core networking resources either do n...

- 644 Views

- 1 reply

- 0 kudos

Why does the MLflow version block library installation?

I have a Databricks Asset Bundle project running, and when MLflow released version 3.4.0 this error occurred: Restricting the MLflow version to < 3.4.0 works. The libraries got stuck installing and the process finished in erro...

Hello @PabloCSD! Which Databricks Runtime version are you using?
