- 4022 Views

- 3 replies

- 2 kudos

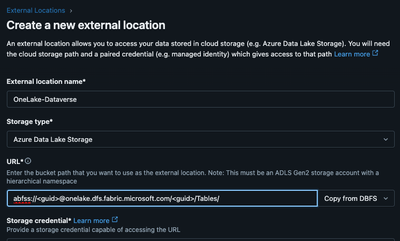

Support for Unity Catalog External Data in Fabric OneLake

Hi community! We have set up a Fabric Link with our Dynamics, and want to attach the data in Unity Catalog using the External Data connector. But it doesn't look like Databricks supports anything other than the default dls endpoints against Azure. Is there any wa...

Thanks @szymon_dybczak, do you know if support is on the roadmap? I ask because the currently supported way of doing this, credential passthrough on the compute, is deprecated. Regards, Marius

- 4476 Views

- 2 replies

- 3 kudos

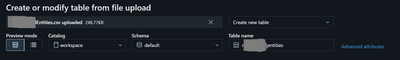

Resolved! Can't create a new table from uploaded file.

I've just started using the Community Edition through the AWS marketplace and I'm trying to set up tables to share with a customer. I've managed to create 3 of the tables, but when uploading a small version of the fourth file, I'm having problems. T...

Thank you, Lou. By loading manually, I found the error that wasn't being displayed in the UI. Once I took care of this, everything loaded just fine.

- 1734 Views

- 3 replies

- 0 kudos

Usage of Databricks apps or UI driven approach to create & maintain Databricks infrastructure

Hi all, the CI/CD-based process to create & maintain Databricks infrastructure (UC securables, Metastore securables, Workspace securables) is resulting in a high time to market in our case. So, we are planning to make it UI driven, as in, create a Da...

A UI-driven approach is definitely a bad idea for deployment. I have seen most organisations use Terraform or Bicep for deployment.

Why UI-Driven Infrastructure is Wrong

1. No version control or audit trail

2. Configuration Drift & Inconsistency

3. No Dis...

- 3012 Views

- 4 replies

- 2 kudos

Resolved! Unable to get external groups from list group details API

Hi Team, I see that automatic group sync is enabled in our Azure Databricks by default, but I am not able to get the groups using the list groups endpoint. What is the right way to get them? I have an automated process to bring certain account groups to works...

I checked it and I can confirm that it worked. I enabled automatic identity management, then added an Entra ID group to the workspace, and using the above endpoint I was able to get information about that group.

- 2111 Views

- 2 replies

- 0 kudos

Is there a way to see Job/Workflow in the lineage of a table/view

Hi all, One frequent question I get from data users is: how often is a view refreshed? (Users are given access to views, not tables.) I was thinking of guiding them to leverage lineage for this. However, I noticed the lineage tab in the catalog e...

@Vidhi_Khaitan the user wants to know the frequency of the schedule, not whether the view/table is fresh...

- 1448 Views

- 1 reply

- 0 kudos

Job Runs Permissions for Runs Submit API via ADF

I want to assign group permissions to job runs in Azure Databricks that are triggered from ADF via the Runs Submit API. How can we assign job run permissions in Azure Databricks for runs that ADF triggers through the Runs Submit API?

Hi @hajojko, Good day! I believe this is what you can try for your use case: https://docs.databricks.com/api/workspace/jobs_21/submit#access_control_list
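
To illustrate the `access_control_list` field from the linked Runs Submit reference, here is a minimal Python sketch that only builds the request body. The run name, notebook path, cluster ID, and group name are hypothetical placeholders, and whether ADF lets you add this field to the request it sends is not confirmed here, so treat it as a sketch for cases where you control the body yourself.

```python
import json


def build_runs_submit_payload(run_name, notebook_path, cluster_id,
                              group_name, permission_level="CAN_VIEW"):
    """Build a JSON-serializable body for POST /api/2.1/jobs/runs/submit.

    The access_control_list entry grants `permission_level` on the
    submitted run to the given workspace group.
    """
    return {
        "run_name": run_name,
        "tasks": [
            {
                "task_key": "main",  # hypothetical task key
                "existing_cluster_id": cluster_id,
                "notebook_task": {"notebook_path": notebook_path},
            }
        ],
        "access_control_list": [
            {"group_name": group_name, "permission_level": permission_level}
        ],
    }


# All values below are placeholders, not taken from the thread.
payload = build_runs_submit_payload(
    run_name="adf-triggered-run",
    notebook_path="/Shared/example_notebook",
    cluster_id="0000-000000-example",
    group_name="data-engineers",
)
print(json.dumps(payload, indent=2))
```

The body would then be sent with a bearer token to the workspace's `/api/2.1/jobs/runs/submit` endpoint; check the linked reference for the permission levels accepted for your workspace.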

- 1731 Views

- 1 reply

- 0 kudos

Databricks compute failed to start due to EC2 security group id change in AWS default VPC

I have been using Databricks on AWS for almost 2 years. Recently, I cannot start up Databricks compute. "databricks_error_message": "The VM launch request to AWS failed, please check your configuration. [details] InvalidGroup.NotFound: The se...

Hey @sg-vtc, you need to register a new network configuration in Databricks that points to the updated VPC and security group. Here’s how:

- Go to the Databricks Account Console (not the workspace UI). (Click the workspaces dropdown and select Manage Account.)

- ...

- 877 Views

- 1 reply

- 0 kudos

Costs incurred by systems table usage

The doc about system tables says "The billable usage and pricing tables are free to use. Tables in Public Preview are also free to use during the preview but could incur a charge in the future." https://learn.microsoft.com/en-us/azure/databricks/admin/sys...

Hi @alsetr, The billable usage and pricing system tables are clearly marked as free to use. Tables in Public Preview are also free for now. For the rest, there’s no mention of a direct cost, but you’ll still be billed for the compute (DBUs) used wh...

- 3384 Views

- 3 replies

- 1 kudos

Using logged in user's identity in Databricks Apps

Hi Databricks Community, I recently started using Databricks Apps, where I list some schemas and tables in the UI. What I explicitly want to do is show only the schemas and tables on which the user actually has permission. Currently the Databricks apps wou...

https://docs.databricks.com/aws/en/dev-tools/databricks-apps/auth#retrieve-user-authorization-credentials : they launched this recently to support user identity in Databricks Apps.
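
As a rough sketch of what the linked page describes: with user authorization enabled, the app receives the signed-in user's token in a request header, which it can then use to query on that user's behalf. The header name `x-forwarded-access-token` below is my understanding of that page and should be verified against it; the helper name and the sample token are hypothetical.

```python
from typing import Mapping, Optional

# Header name as understood from the linked docs page; verify before relying on it.
USER_TOKEN_HEADER = "x-forwarded-access-token"


def get_user_token(headers: Mapping[str, str]) -> Optional[str]:
    """Return the forwarded user token, or None if it is absent or empty.

    Header names are compared case-insensitively, since web frameworks
    differ in how they normalize them.
    """
    for name, value in headers.items():
        if name.lower() == USER_TOKEN_HEADER:
            return value or None
    return None


# Hypothetical request headers, for illustration only.
example_headers = {"X-Forwarded-Access-Token": "example-token", "Accept": "*/*"}
token = get_user_token(example_headers)
```

In a real app the `headers` mapping would come from the web framework's request object, and the token would be passed to the SQL connector or SDK client in place of the app's own service principal credentials, so that Unity Catalog permissions are evaluated for the signed-in user.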

- 2363 Views

- 1 reply

- 0 kudos

Custom hostname for Azure Databricks workspace

For a client, it's required that all applications use a subdomain of an internal hostname. Does anyone have a suggestion on how to achieve this? Options could be along the lines of a CNAME or a reverse proxy, but I couldn't get that to work yet. Does Azure Datab...

Hi @gt-ferdyh, Azure Databricks doesn’t natively support custom subdomains. However, to make it accessible via an internal subdomain, you can:

- Enable Azure Private Link for your Databricks workspace.

- In your internal DNS, create an A record or CNAME th...

- 3074 Views

- 2 replies

- 0 kudos

Resolved! "Aws Invalid Kms Key State" when trying to start new cluster on AWS

Hey, We just established a new environment based on AWS. Our first step was to create a cluster, but while doing so we encountered an error. We tried different policies, configurations and instance types. All resulted in: Aws Invalid Kms Key State: Th...

Yes, I followed this document and that fixed it. Thanks.

- 2287 Views

- 2 replies

- 2 kudos

Databricks Logs

I’m trying to understand the different types of logs available in Databricks and how to access and interpret them. Could anyone please guide me on:

- What types of logs are available in Databricks?

- Where can I find these logs?

- How can I use these logs to ...

Hi @APJESK, In addition, job logs are very useful for monitoring and troubleshooting job failures. They can be found under Workflows. The workspace admin role is required for full access to all jobs, unless access is explicitly granted to the user by the Job Owner/adm...

- 4558 Views

- 3 replies

- 2 kudos

Resolved! Clarification on Databricks Access Modes: Standard vs. Dedicated with Unity Catalog

Hello, I’d like to ask for clarification regarding the access modes in Databricks, specifically the intent and future direction of the “Standard” and “Dedicated” modes. According to the documentation below: https://docs.databricks.com/aws/ja/compute/acc...

Hi @Yuki, thank you, I am glad I could help!

- 2029 Views

- 2 replies

- 0 kudos

Have authenticator app set up but Databricks still resorts to email MFA

Good morning, I set up an authenticator app through Settings, Profile, MFA. However, when I log out and log back in to the workspace, I am still only getting the email verification to get in. Is there a way I can turn off email verification or have it d...

Hi @andreapeterson, Do you have Account Admin privileges? If you do, you can enforce MFA from the account console, just as shown in the attached image. If you’re using the Databricks Free Edition rather than a paid account, you can’t enforce MFA. This ...

- 1697 Views

- 1 reply

- 0 kudos

Hey @prakash4, I understand you're having trouble connecting to a remote server / getting your Databricks workspace to start. Would you be able to share a little more detail to help track down your issue? Specifically:

- What happens when you try to sta...
