- 1487 Views

- 2 replies

- 1 kudos

users' usage report (for frontend power bi)

Hi All, I'm currently working on retrieving usage information by querying system tables. At the moment, I'm using the system.access.audit table. However, I've noticed that the list of users retrieved appears to be incomplete when compared to si...

Thank you for the reply. If I understand correctly, when using PBI DirectQuery connectivity, the user being used is not a service principal but the end user who opens the PBI dashboard. Correct? Did you implement any usage report? Regards, Uri

- 3057 Views

- 1 replies

- 0 kudos

unable to enable external sharing when creating deltashare - azure databricks trial

I have started a pay-as-you-go Azure tenancy and a 14-day Databricks trial. I signed up using my Gmail account. With that user, I logged into Azure, created a workspace, and tried to share a schema via Delta Sharing. I am unable to share to an open user ...

Hi @mandarsu, To enable external Delta Sharing in Databricks: Enable External Sharing: Go to the Databricks Account Console, open your Unity Catalog metastore settings, and enable the “External Delta Sharing” option. Check Permissions: Ensure you have th...

- 5660 Views

- 2 replies

- 2 kudos

Resolved! Cost

Do you have information that helps me optimize costs and follow up?

@Athul97 provided a pretty solid list of best practices. To go deeper into Budgets & Alerts, I have had good success with the Consumption and Budget feature in the Databricks Account Portal under the Usage menu. Once you embed tagging in...

- 2104 Views

- 3 replies

- 0 kudos

python databricks sdk get object path from id

When using the Databricks SDK to get permissions of objects, we get inherited_from_object=['/directories/1636517342231743']. From what I can see, the workspace list and get_status methods only work with the actual path. Is there a way to look up that di...

@BriGuy Here is a small code snippet that we have used. Hope this works well for you: from databricks.sdk import WorkspaceClient w = WorkspaceClient() def find_path_by_object_id(target_id, base_path="/"): items = w.workspace.list(path=base_p...
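
Since the snippet above is cut off, here is a minimal sketch of the same idea: walk the workspace tree and return the path whose object ID matches. This is not the poster's code. The `Entry` class, the `list_dir` callable, and the in-memory `FAKE_TREE` are hypothetical stand-ins so the search can run without a workspace connection.

```python
from dataclasses import dataclass
from typing import Callable, Iterable, Optional


@dataclass
class Entry:
    """Hypothetical stand-in for one workspace listing entry."""
    object_id: int
    path: str
    is_directory: bool


def find_path_by_object_id(
    target_id: int,
    list_dir: Callable[[str], Iterable[Entry]],
    base_path: str = "/",
) -> Optional[str]:
    """Depth-first search for the path whose object_id equals target_id."""
    for entry in list_dir(base_path):
        if entry.object_id == target_id:
            return entry.path
        if entry.is_directory:
            # Descend into sub-directories until the ID is found.
            found = find_path_by_object_id(target_id, list_dir, entry.path)
            if found is not None:
                return found
    return None


# A tiny in-memory tree standing in for a real workspace (illustrative data).
FAKE_TREE = {
    "/": [Entry(1, "/Users", True), Entry(2, "/Shared", True)],
    "/Users": [Entry(3, "/Users/a@example.com", True)],
    "/Users/a@example.com": [Entry(4, "/Users/a@example.com/nb", False)],
    "/Shared": [],
}


def fake_list_dir(path: str) -> Iterable[Entry]:
    """Return the children of a path in the fake tree."""
    return FAKE_TREE.get(path, [])


print(find_path_by_object_id(3, fake_list_dir))
```

Against a real workspace, `list_dir` could presumably be a thin adapter around `w.workspace.list(path)` that maps each returned object's `object_id`, `path`, and `object_type` onto `Entry`. A full traversal issues one listing call per directory, so it may be slow on large workspaces.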

- 2386 Views

- 2 replies

- 0 kudos

spark.databricks documentation

I cannot find any documentation related to the spark.databricks.* properties. I was able to find the Spark documentation, but it does not contain any information on possible properties or arguments for spark.databricks in particular. Thank you!

Thus, as of now, the documentation is lacking an obvious and easy-to-provide element, one that can only be found partially, spread around random threads on the internet, or gained by guess-asking the platform developers. When will it be made available?

- 1613 Views

- 1 replies

- 0 kudos

How worker nodes get the packages during scale-up?

Hi, We are working with a repository from which we used to download the artifact/Python package using an index URL in a global init script, but now the logic is going to change: we need to give credentials to download the package and th...

Yes, the new worker node will execute the global init script independently when it starts. It does not get the package from the driver or other existing nodes and will hit the configured index URL directly, and try to download the package on its own....

- 4728 Views

- 5 replies

- 4 kudos

Resolved! Account level groups

When I query my user from an account client and workspace client, I get different answers. Why is this? In addition, why can I only see some account level groups from my workspace, and not others?

If you have a relatively modern Databricks instance, when you create a group in workspace UI, it creates an account-level group (which you can see in "Source" column – it says "Account"). So this process essentially consists of two steps: 1) create a...

- 1268 Views

- 1 replies

- 0 kudos

Resolved! How to create a function using the functions API in databricks?

https://docs.databricks.com/api/workspace/functions/create This documentation gives the sample request payload, and one of the fields is type_json, and there is very little explanation of what is expected in this field. What am I supposed to pass here...

Hi @chinmay0924, the type_json field describes your function’s input parameters and return type using a specific JSON format. You’ll need to include each parameter’s name, type (like "STRING", "INT", "ARRAY", or "STRUCT"), and position, along with th...
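
As a rough illustration of the point above, the sketch below builds one parameter entry in which `type_json` is a JSON document serialized to a string. The field layout inside `type_json` (`name`, `type`, `nullable`, `metadata`) is an assumption modeled on Spark-style field specs, and the parameter `x` and helper `make_type_json` are hypothetical; check the API reference for the exact shape before relying on it.

```python
import json


def make_type_json(name: str, spark_type: str, nullable: bool = True) -> str:
    """Serialize one parameter's type spec to a JSON string.

    ASSUMPTION: a Spark-style field layout (name/type/nullable/metadata)
    is what the API expects; verify against the API reference.
    """
    return json.dumps(
        {"name": name, "type": spark_type, "nullable": nullable, "metadata": {}}
    )


# Hypothetical parameter entry, for illustration only.
param = {
    "name": "x",
    "type_text": "int",
    "type_name": "INT",
    "type_json": make_type_json("x", "integer"),
    "position": 0,
}

# type_json is itself a string, so its quotes are escaped when the
# surrounding payload is serialized a second time.
print(json.dumps(param, indent=2))
```

The point of the sketch is the double encoding: the value is produced with `json.dumps` first and then embedded as a plain string in the request body, rather than as a nested object.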

- 3103 Views

- 3 replies

- 1 kudos

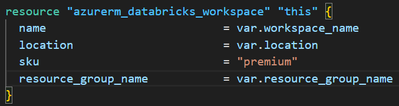

Terraform - Azure Databricks workspace without NAT gateway

Hi all, I have experienced an increase in costs, even when not using Databricks compute. It is due to the NAT gateways that are (suddenly) automatically deployed. When creating Azure Databricks workspaces using Terraform: A NAT gateway is created. When ...

In Azure Databricks, a NAT Gateway will be required (by Microsoft) for all egress from VMs, which affects Databricks compute: Azure updates | Microsoft Azure

- 6134 Views

- 2 replies

- 0 kudos

Resolved! External Locations to Azure Storage via Private Endpoint

When working with Azure Databricks (with VNet injection) to connect securely to an Azure Storage account via private endpoint, there are a few locations it needs to connect to. First, the VNet that Databricks is connected to, which works well when con...

I've read that in the documentation, and when I now tried with an Access Connector for Azure Databricks instead of my own service principal, it seems to have worked, shockingly, even if I completely block network access on the storage account with ze...

- 1950 Views

- 3 replies

- 2 kudos

Resolved! Compute page Resolver error

I have an issue with my Databricks workspace. When I try to attach a notebook to my cluster, the cluster seems to have disappeared. After navigating to the Compute page, it gives me a 'Resolver Error' and no further information. None of the computes/clu...

Hello @Maxadbuser! This was a known issue, and the engineering team has implemented a fix. Please check and confirm if you're still experiencing the problem.

- 1886 Views

- 3 replies

- 1 kudos

Resolved! NAT Gateway IP update

Hi, My Databricks (Premium) account was deployed on AWS. It was provisioned a few months ago from the AWS Marketplace with the QuickStart method, based on CloudFormation. The NAT Gateway initially created by the CloudFormation stack has been incidenta...

Thanks for your answer. Yes, I did all of that, as well as allowing the traffic coming from my NAT Instance as an inbound rule of the Security Group of the private instances (and the other way around), and no restriction on the outbound traffic. I suspe...

- 33326 Views

- 6 replies

- 1 kudos

Resolved! Delete Databricks account

Hi everyone, as in the topic, I would like to delete an unnecessarily created account. I have found outdated solutions (e.g. https://community.databricks.com/t5/data-engineering/how-to-delete-databricks-account/m-p/6323#M2501), but they do not work anym...

Hi @Edyta, for AWS, see Manage your subscription | Databricks on AWS: "Before you delete a Databricks account, you must first cancel your Databricks subscription and delete all Unity Catalog metastores in the account. After you delete all metastores associ...

- 1198 Views

- 1 replies

- 0 kudos

Is it possible to let multiple Apps share the same compute?

Apparently, for every app deployed on Databricks, a separate VM is allocated, costing 0.5 DBU/hour. This seems inefficient. Why can't a single VM support multiple apps? It feels like a waste of money and resources to allocate independent VMs per app ...

Hi @Aminsn, Your understanding is correct in that only 1 running app can be deployed per app instance. If you want to maximize the utilization of the compute, one option could be to create a multi-page app, where the landing page will direct users...

- 8222 Views

- 2 replies

- 0 kudos

Databricks App : Limitations

I have some questions regarding Databricks Apps. 1) Can we use frameworks other than those mentioned in the documentation (Streamlit, Flask, Dash, Gradio, Shiny)? 2) Can we allocate more than 2 vCPUs and 6 GB of memory to an app? 3) Any other programming language o...

1.) You can use most Python-based application frameworks, including some beyond those mentioned above. (Reference here) 2.) Currently, app capacity is limited to 2 vCPUs and 6 GB of RAM. However, future updates may introduce options for scaling out an...

- Access control (1)
- Apache spark (1)
- Azure (7)
- Azure databricks (5)
- Billing (2)
- Cluster (1)
- Compliance (1)
- Data Ingestion & connectivity (5)
- Databricks Runtime (1)
- Databricks SQL (2)
- DBFS (1)
- Dbt (1)
- Delta Sharing (1)
- DLT Pipeline (1)
- GA (1)
- Gdpr (1)
- Github (1)
- Partner (82)
- Public Preview (1)
- Service Principals (1)
- Unity Catalog (1)
- Workspace (2)
