- 1078 Views

- 1 reply

- 0 kudos

Load assignment during Distributed training

Hi, I wanted to confirm: in distributed training, is there any way to manually control what kind or amount of load/data is sent to specific worker nodes? Or is it handled completely automatically by Spark's scheduler, and we don't have...

From what I know, Spark automatically handles how data and workload are distributed across worker nodes during distributed training; you can't manually control exactly what or how much data goes to a specific node. You can still influence the distrib...
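As a rough illustration of what "influencing" distribution can look like, the sketch below mimics hash partitioning in plain Python: records with the same key always land in the same partition, but which worker later processes that partition is still up to the scheduler. This is not Spark code and not necessarily what the reply goes on to describe; `assign_partition`, `partition_records`, and the CRC32 hash are assumptions chosen for the example.

```python
import zlib
from collections import defaultdict


def assign_partition(key: str, num_partitions: int) -> int:
    """Map a key to a partition index with a stable hash (CRC32, an assumption)."""
    return zlib.crc32(key.encode("utf-8")) % num_partitions


def partition_records(records, key_fn, num_partitions):
    """Group records by the partition index their key hashes to."""
    partitions = defaultdict(list)
    for record in records:
        partitions[assign_partition(key_fn(record), num_partitions)].append(record)
    return dict(partitions)


if __name__ == "__main__":
    rows = [{"user": "a", "x": 1}, {"user": "b", "x": 2}, {"user": "a", "x": 3}]
    for index, chunk in sorted(partition_records(rows, lambda r: r["user"], 4).items()):
        print(index, chunk)
```

The choice of key and partition count shapes how evenly the data is spread, which is the kind of indirect control a user typically has.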

- 1169 Views

- 1 reply

- 0 kudos

Running Driver Intensive workloads on all purpose compute

We recently observed that when we run driver-intensive code on an all-purpose compute, parallel runs of jobs of the same pattern/kind fail. Example: a job triggered on all-purpose compute with a driver of 4 cores and 8 GB of RAM. Lets s...

This will help you:

    # Cluster config adjustments
    spark.conf.set("spark.driver.memory", "16g")  # Double current allocation
    spark.conf.set("spark.driver.maxResultSize", "8g")  # Prevent large collects
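Because driver memory is fixed when the driver JVM starts, changing it from a running notebook will not resize the driver, so settings like these usually belong in the cluster definition instead. The sketch below builds such a `spark_conf` mapping in plain Python. The helper name `driver_spark_conf` and the rule of setting `maxResultSize` to half the driver memory are illustrative assumptions derived from the 16g/8g values in the reply, not a Databricks recommendation.

```python
def driver_spark_conf(current_driver_gb: int) -> dict:
    """Return Spark config entries that double the driver memory.

    maxResultSize is set to half of the new driver memory, mirroring
    the 16g / 8g pair in the reply (an assumption, not a fixed rule).
    """
    if current_driver_gb <= 0:
        raise ValueError("current_driver_gb must be positive")
    new_driver_gb = current_driver_gb * 2
    return {
        "spark.driver.memory": f"{new_driver_gb}g",
        "spark.driver.maxResultSize": f"{new_driver_gb // 2}g",
    }


if __name__ == "__main__":
    # An 8 GB driver, as in the question, yields the values from the reply.
    print(driver_spark_conf(8))
```

The resulting mapping could be pasted into the cluster's Spark config; on Databricks, choosing a larger driver node type may be the more direct way to get more driver memory.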

- 1981 Views

- 3 replies

- 3 kudos

Resolved! how to modify workspace creator

Hi. Our 3 workspaces were created by a consultant who is no longer with us. The workspaces still show his name. How can we change it? Also, what kind of account should be the creator of a workspace: service principal, AD account, or AD group? Please see b...

You can file an Azure ticket and request them to contact Databricks Support, who will contact Databricks Engineering, to change that 'workspace owner'. If you have a Databricks Account Team, they can also file an internal ticket to Databricks Enginee...

- 2253 Views

- 4 replies

- 0 kudos

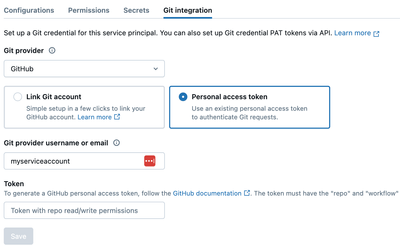

Terraforming Git credentials for service principals

I am terraforming service principals in my Databricks workspace, and it works great until I need to assign Git credentials to my SP. In the UI we have these options to configure credentials on the service principal page: However, the Terraform resource I fo...

You're a little bit ahead of me in this process, so I haven't tried the solution yet, but it looks like you create a git credential resource for the service principal. This requires a token, which I think must be generated in the console. My refere...

- 1850 Views

- 1 reply

- 0 kudos

Unity Catalog: 403 Error When Connecting S3 via IAM Role and Storage Credential

Hi, we're currently setting up Databricks Unity Catalog on AWS. We created an S3 bucket and assigned an IAM role (databricks-storage-role) to give Databricks access. Note: Databricks doesn't use the IAM role directly. Instead, it requires a Storage Cre...

Have you followed any specific guide to create these? Are you setting up a Unity Catalog metastore or the default storage for the workspace? For the metastore creation, have you followed the steps in https://docs.databricks.com/aws/en/data-governa...

- 4051 Views

- 7 replies

- 1 kudos

How to install (mssql) drivers on job compute?

Hello, I'm having this issue with job computes: The snippet of the code is as follows:

    if self.conf["persist_to_sql"]:
        # persist to sql
        df_parsed.write.format(
            "com.microsoft.sqlserver.jdbc.spark"
        ...

For job compute, you would have to go the init script route. Can you please share the cause of the library installation failure via the init script?

- 2908 Views

- 1 reply

- 0 kudos

Static IP for existing workspace

Is there a way to have static IP addresses for Azure Databricks without creating a new workspace? We have worked a lot in 2 workspaces (dev and main), but now we need static IP addresses for both to work with some APIs. Do we really have to recreate the...

I don't think so, at least not on Azure. What you need to do depends on how you set up your workspaces. In Azure, if you just use a default install, a NAT gateway is created and configured for you, so you probably already have a static IP. If you us...

- 15076 Views

- 11 replies

- 0 kudos

What is the best practice for connecting Power BI to Azure Databricks?

I referred to this document to connect Power BI Desktop and Power BI Service to Azure Databricks: Connect Power BI to Azure Databricks - Azure Databricks | Microsoft Learn. However, I have a couple of questions and concerns. Can anyone kindly help? It seems l...

To securely connect Power BI to Azure Databricks, avoid using PATs and instead configure a Databricks Service Principal with SQL Warehouse access. Power BI Service does not support Client Credential authentication, so Service Principal authentication...

- 1351 Views

- 1 reply

- 0 kudos

GCS Databricks Community

Hello, I would like to know if it is possible to connect my Databricks Community account with a Google Cloud Storage account via a notebook. I tried to connect it via the JSON key of my GCS service account, but the notebook always gives this error when ...

Hi @oricaruso, to connect to GCS, you typically need to set the service account JSON key in the cluster’s Spark config, not just in the notebook. However, since the Community Edition has several limitations, like the absence of secret scopes, restrict...

- 3496 Views

- 3 replies

- 0 kudos

Multiple feature branches per user using Databricks Asset Bundles

I'm currently helping a team migrate to DABs from dbx, and they would like to be able to work on multiple features at the same time. What I was able to do is pass the current branch as a variable in the root_path and various names, so when the bundle...

@cmathieu, can you provide an example of inserting the branch name? I'm trying to do the same thing.
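One way this could look, sketched under assumptions: read the current Git branch, sanitize it so it is safe in paths and resource names, and pass it to the bundle as a variable at deploy time. The variable name `branch`, the helper names, and the sanitizing rule are hypothetical; the sketch also assumes `databricks.yml` declares a `branch` variable and references it as `${var.branch}` in `root_path`.

```python
import re
import subprocess


def current_branch() -> str:
    """Return the checked-out Git branch name (requires git on PATH)."""
    result = subprocess.run(
        ["git", "rev-parse", "--abbrev-ref", "HEAD"],
        capture_output=True, text=True, check=True,
    )
    return result.stdout.strip()


def sanitize_branch(name: str) -> str:
    """Replace characters outside [A-Za-z0-9_-] with '_' (hypothetical rule)."""
    return re.sub(r"[^A-Za-z0-9_-]", "_", name)


def deploy_command(branch: str, target: str = "dev") -> list:
    """Build a `databricks bundle deploy` command that sets the `branch` variable."""
    return [
        "databricks", "bundle", "deploy",
        "-t", target,
        f"--var=branch={sanitize_branch(branch)}",
    ]


if __name__ == "__main__":
    # Uses a literal branch name; swap in current_branch() inside a Git checkout.
    print(" ".join(deploy_command("feature/my-change")))
```

Sanitizing matters because branch names such as `feature/my-change` contain a slash, which would otherwise add an unintended level to the workspace path.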

- 1767 Views

- 1 reply

- 0 kudos

Resolved! Removing compute policy permissions using Terraform

By default, the "users" and "admins" groups have CAN_USE permission on the Personal Compute policy. I'm using Terraform and would like to prevent regular users from using this policy to create additional compute clusters. I haven't found a way to do th...

I learned that the Personal Compute policy can be turned off at the account level: https://learn.microsoft.com/en-us/azure/databricks/admin/clusters/personal-compute#manage-policy

- 1995 Views

- 4 replies

- 0 kudos

Resolved! Shall we opt for multiple worker nodes in a DAB workflow template if our codebase is based on pandas?

Hi team, I am working with a Databricks Asset Bundle architecture and have added my codebase repo to a workspace. My question: do we need to opt for multiple worker nodes, such as num_worker_nodes > 1 or autoscaling with a range of worker nodes, if my codebase has mo...

Thanks, @Shua42. You really helped me a lot.

- 2274 Views

- 1 reply

- 0 kudos

Resolved! Azure Databricks Outage - Incident ES-1463382 - Any Official RCA Available?

We experienced service disruptions on May 15th, 2025, related to Incident ID ES-1463382. Could anyone from Databricks share the official Root Cause Analysis (RCA) or point us to the correct contact or channel to obtain it? Thanks in advance!

Hello @angiezz! Please raise a support ticket with the Databricks Support Team. They will be able to provide you with the official documentation regarding this incident.

- 801 Views

- 1 reply

- 0 kudos

Databricks Cluster Downsizing time

Hello, it seems that cluster downsizing on our end is occurring rapidly: sometimes the workers go from 5 to 3 in a mere 2 minutes! Is that normal? Can I do something to increase this downsizing time? - Jahanzeb

Hi @jahanzebbehan, unfortunately, I don't believe there is any way to decrease the downsizing time for a cluster, as this mostly happens automatically depending on workload volume. Here are some helpful links on autoscaling: https://community.databrick...

- 4106 Views

- 5 replies

- 0 kudos

Resolved! Databricks Serverless Job : sudden random failure

Hi, I've been running a job on Azure Databricks serverless, which just does some batch data processing every 4 hours. This job, deployed with bundles, has been running fine for weeks, and all of a sudden, yesterday, it started failing with an error th...

Hey @thibault, glad to hear it is working again. I don't see any specific mention of a bug internally that would be related to this, but it is likely that it was due to a change in the underlying runtime for serverless compute. This may be one of th...
