Hello All,

I would like your input on a scenario I see while writing to the bad_records file.

I am reading a ‘Ԓ’ delimited CSV file based on a schema that I have already defined. I have enabled error handling while reading the file to write the error rows into a badRecordsPath if I have a schema mismatch.

The source file contains newline characters inside some records, so a few columns spill onto the next line. Because those split rows no longer align with the defined schema, they are written to a file in the bad_records path that I have specified.
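To make the newline problem concrete, here is a minimal sketch in plain Python (not Spark) of how one embedded newline turns a single record into two short rows. The column names other than ENTRYDATE, the sample values, and the helper `split_good_and_bad` are hypothetical; only the 'Ԓ' delimiter comes from my actual file.

```python
import csv
import io

DELIMITER = "Ԓ"

# Hypothetical sample: the third line was broken by a stray newline,
# so one logical record arrives as two physical lines.
raw = (
    "IDԒENTRYDATEԒQTY\n"
    "1Ԓ2023-07-04Ԓ10\n"
    "2Ԓ2023-07\n"
    "-05Ԓ20\n"
)

def split_good_and_bad(text):
    """Separate rows whose column count matches the header from those that do not."""
    reader = csv.reader(io.StringIO(text), delimiter=DELIMITER)
    header = next(reader)
    good, bad = [], []
    for row in reader:
        if len(row) == len(header):
            good.append(row)
        else:
            bad.append(row)
    return good, bad

good_rows, bad_rows = split_good_and_bad(raw)
```

Both fragments of the broken record end up in `bad_rows` because neither has the expected number of columns, which is the kind of row I expect to see in the bad_records output.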

This works well in almost all scenarios EXCEPT when I define a column in the schema as DateType(). If a non-date value lands in that column, then instead of the whole row being written to the bad_records path, blank files are created in the bad_records folder. A second folder named bad_files is also created, containing a file that shows this error:

"reason":"org.apache.spark.SparkUpgradeException: [INCONSISTENT_BEHAVIOR_CROSS_VERSION.PARSE_DATETIME_BY_NEW_PARSER] You may get a different result due to the upgrading to Spark >= 3.0:\nFail to parse '009-7-4-23 ' in the new parser. You can set \"spark.sql.legacy.timeParserPolicy\" to \"LEGACY\" to restore the behavior before Spark 3.0, or set to \"CORRECTED\" and treat it as an invalid datetime string."

I get this error only when the datatype is DateType(). For testing purposes, I tried replacing it with IntegerType, TimestampType, DoubleType, etc., and all of them write the erroneous data to the bad_records file as expected.
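For reference, the sketch below checks the failing value from the error message against a year-month-day pattern using plain Python. This is not Spark's parser, and both the helper name `parses_as_date` and the assumed `%Y-%m-%d` format are illustrative assumptions; it only shows that the value is a fragment of a split row and not a valid date.

```python
from datetime import datetime

def parses_as_date(value, fmt="%Y-%m-%d"):
    """Return True if value matches fmt exactly, False otherwise."""
    try:
        datetime.strptime(value, fmt)
        return True
    except ValueError:
        return False

bad_value = "009-7-4-23 "   # the string quoted in the error message
good_value = "2023-07-04"   # hypothetical well-formed date

bad_is_date = parses_as_date(bad_value)
good_is_date = parses_as_date(good_value)
```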

Any leads on why this happens?

Below is the sample code:

from pyspark.sql.types import StructType, StructField, StringType, DateType, IntegerType

modified_schema = StructType(
    [
        StructField(".....", StringType(), True),
        .....
        StructField("ENTRYDATE", DateType(), True),
        .....
        StructField(".....", IntegerType(), True)
    ]
)

df = (
    spark.read.format("csv")
    .option("header", "true")
    .option("sep", "Ԓ")
    .schema(modified_schema)
    .option("badRecordsPath", badRecordsPath)
    .load(filepath)
)

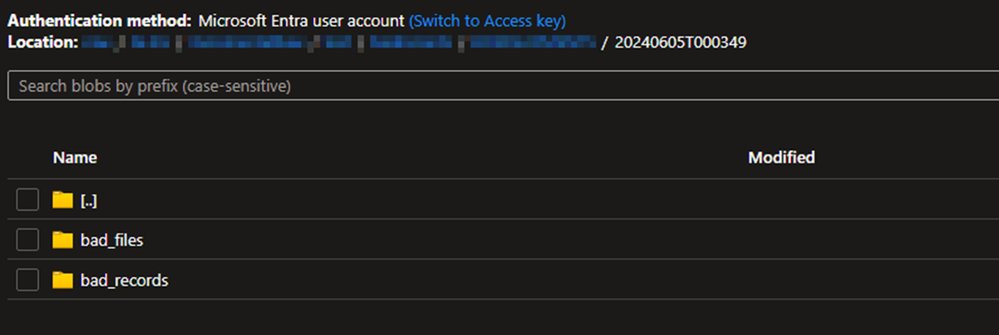

Below are the 2 folders generated inside my badRecordsPath.

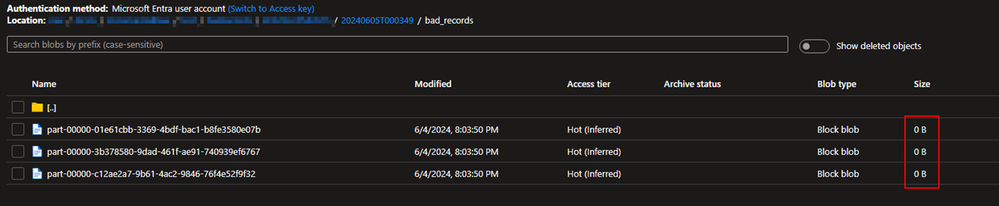

Below are the files generated inside the bad_records folder; they contain no information about the erroneous rows.