Hi all

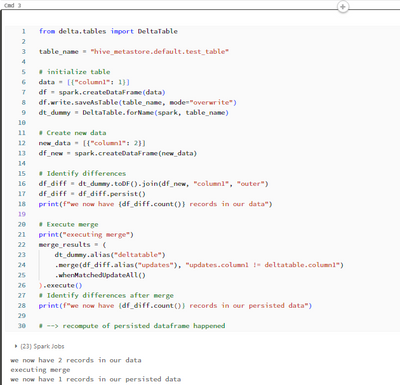

In the minimal example below, you can see that executing a merge statement triggers recomputation of a persisted DataFrame. Why does this happen?

```python
from delta.tables import DeltaTable

table_name = "hive_metastore.default.test_table"

# initialize table
data = [{"column1": 1}]
df = spark.createDataFrame(data)
df.write.saveAsTable(table_name, mode="overwrite")
dt_dummy = DeltaTable.forName(spark, table_name)

# Create new data
new_data = [{"column1": 2}]
df_new = spark.createDataFrame(new_data)

# Identify differences
df_diff = dt_dummy.toDF().join(df_new, "column1", "outer")
df_diff = df_diff.persist()
print(f"we now have {df_diff.count()} records in our data")

# Execute merge
print("executing merge")
merge_results = (
    dt_dummy.alias("deltatable")
    .merge(df_diff.alias("updates"), "updates.column1 != deltatable.column1")
    .whenMatchedUpdateAll()
).execute()

# Identify differences after merge
print(f"we now have {df_diff.count()} records in our persisted data")
# --> recompute of persisted dataframe happened
```

This was run on DBR 13.2 and 13.3 LTS.
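
To narrow this down, it might help to record the cache status of `df_diff` right before and right after the merge. Below is a minimal sketch of how that could look. `cache_state` is a hypothetical helper name (not part of PySpark or Delta), and it only reads the `is_cached` and `storageLevel` attributes that a PySpark DataFrame exposes. I am assuming these flags are enough to tell whether the DataFrame is still marked as persisted; they do not show whether the cached blocks themselves were dropped and rebuilt.

```python
def cache_state(df):
    """Return a snapshot of the persistence flags of a PySpark DataFrame.

    Hypothetical helper for debugging: it only reads `df.is_cached` and the
    fields of `df.storageLevel`, so it never triggers a Spark job itself.
    """
    level = df.storageLevel
    return {
        "is_cached": df.is_cached,
        "use_memory": level.useMemory,
        "use_disk": level.useDisk,
    }


# Intended usage with the objects from the example above (not executed here):
#
#   before = cache_state(df_diff)
#   ... run the merge ...
#   after = cache_state(df_diff)
#   print(before, after)
```

If both snapshots are identical but the second `count()` still recomputes, that would suggest the cached data was invalidated and rebuilt rather than the DataFrame being unpersisted.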