Details of the requirement are as below:

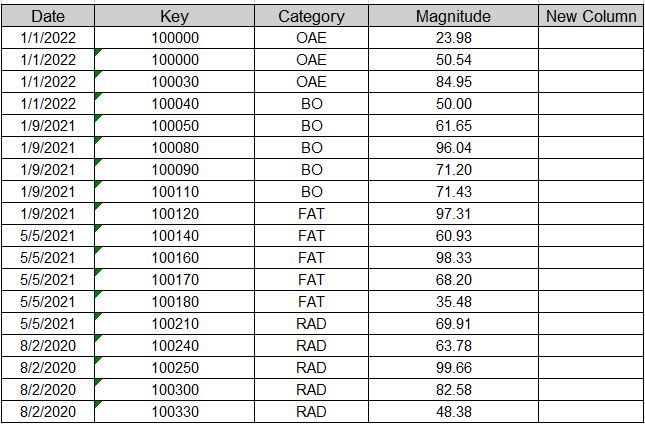

I have a table with the structure below:

I have to write PySpark code to calculate a new column.

The logic for the new column is: the sum of Magnitude for each Category divided by the total Magnitude, multiplied by 100 to show it as a percentage.

For example, for Category OAE the new column should show (23.98 + 50.54 + 84.95) / Sum(total Magnitude) * 100.
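
To make the arithmetic concrete, here is a small plain-Python sketch of the OAE example. The three OAE values are the ones quoted above; the total of 200.0 is a made-up number used only for illustration, since the real total depends on the full table.

```python
# Magnitude values for Category OAE, taken from the example above.
oae_magnitudes = [23.98, 50.54, 84.95]

# Hypothetical total Magnitude (an assumption, not from the real data).
total_magnitude = 200.0

# Sum for the category, divided by the total, times 100 for a percentage.
oae_sum = sum(oae_magnitudes)
oae_percentage = oae_sum / total_magnitude * 100

print(f"OAE share of total Magnitude: {oae_percentage:.2f}%")
```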

So there should be one row for each Date and Category.

Please help me write the code.

Let me know if you have any questions.

I have attached sample data in an Excel file.

I am stuck at this code. Basically, how do I divide the sum for each Category by the total sum of Magnitude?

    from pyspark.sql import Window
    from pyspark.sql import functions as F

    df = sqlContext.sql("select * from Table")

    # Sum of Magnitude per Category, repeated on every row of that Category.
    # Still missing: dividing by the total Magnitude and multiplying by 100.
    df1 = df.withColumn("NewColumn", F.sum("Magnitude").over(Window.partitionBy("Category")))

    display(df1)

Thanks

Faizan