- 7931 Views

- 3 replies

- 0 kudos

50%-off Databricks certification voucher

Hello Databricks Community Team, I am reaching out to inquire about the Databricks certification voucher promotion for completing the Databricks Learning Festival (Virtual) courses. I completed one of the Databricks Learning Festival courses in July 2024...

- 0 kudos

I have already finished the course. How do I get the discount?

- 2095 Views

- 1 replies

- 0 kudos

How to Create Azure Key Vault and Assign Key Vault Administrator Role Using Terraform

Hi all, I’m currently working with Terraform to set up Azure resources, including OpenAI services, and I’d like to extend my configuration to create an Azure Key Vault. Specifically, I want to: Create an Azure Key Vault to store secrets/keys. Assign the...

- 0 kudos

Hi @naveen0142, 1. Create the Key Vault: resource "azurerm_key_vault" "example" { name = var.key_vault_name location = azurerm_resource_group.example.location resource_group_name = azurerm_resource_group.example....

- 687 Views

- 0 replies

- 0 kudos

Incremental Refresh and/or Composite Models (Databricks x Power BI)

I would like to make my Power BI model more performant, but I have run into some difficulties connecting it to a Databricks source. I would like to know whether it is possible to use incremental refresh and/or work with composite models (Direct Quer...

- 3252 Views

- 1 replies

- 0 kudos

User Unable to Access Key Vault Secrets Despite Role Assignment in Terraform

Hi All, I'm encountering an issue where a user is unable to access secrets in an Azure Key Vault, even though the user has been assigned the necessary roles using Terraform. Specifically, the user gets the following error when trying to access the sec...

- 0 kudos

Are they accessing the Key Vault directly and not through Databricks? If so, based on your Terraform code, they should be able to directly read Secrets in the Azure Key Vault. You've configured the Key Vault with RBAC Authorization and assigned Key ...

- 1090 Views

- 1 replies

- 0 kudos

Prevent users from running shell commands

Hi, is there any way to prevent users from running shell commands in Databricks notebooks, for example "%%bash"? I read that the REVOKE EXECUTE ON SHELL command can be used, but I am unable to make it work. Thanks in advance for any help.

- 0 kudos

Hi @bvraravind, You can use this Spark setting in a cluster policy to prevent access to shell commands: "spark_conf.spark.databricks.repl.allowedLanguages": { "type": "fixed", "value": "python,sql" } https://docs.databricks.com/en/archive/compute/c...
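As a rough sketch of how the policy fragment from this reply could be generated rather than typed by hand, the snippet below builds the definition as a Python dictionary and serializes it to JSON. The attribute path and the "fixed" rule are taken from the reply; the helper name `build_language_policy` is hypothetical, and whether this setting blocks every form of shell access in a given workspace should be checked against the Databricks documentation.

```python
import json


def build_language_policy(languages):
    """Hypothetical helper: pin the notebook languages a cluster accepts.

    The attribute path and the "fixed" rule type come from the reply above;
    the function itself is only an illustration.
    """
    return {
        "spark_conf.spark.databricks.repl.allowedLanguages": {
            "type": "fixed",
            # The setting takes a single comma-separated string.
            "value": ",".join(languages),
        }
    }


policy_definition = build_language_policy(["python", "sql"])
policy_json = json.dumps(policy_definition, indent=2)
print(policy_json)
```

The printed JSON is what would go into the policy definition field. Building it in code mainly helps avoid the missing braces and commas that are easy to introduce when editing the fragment by hand.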

- 3112 Views

- 2 replies

- 0 kudos

Terraform: Add Key Vault Administrator Role Assignment and Save Outputs to JSON Dynamically in Azure

Hi everyone, I am using Terraform to provision an OpenAI service and its modules along with a Key Vault in Azure. While the OpenAI service setup works as expected, I am facing two challenges: Role Assignment for Key Vault. I need to assign the Key Vault ...

- 0 kudos

For question two, you can use the local_file resource in Terraform: output "openai_api_type" {value = module.openai.api_type} output "openai_api_base" {value = module.openai.api_base} output "openai_api_version" {value = module.openai.api_version} ou...

- 5392 Views

- 4 replies

- 1 kudos

Cluster policy type

Hi guys, I am creating a cluster policy through JSON: "runtime_engine": {"type": "fixed", "value": "PHOTON"}. When I run the above code, the PHOTON option is enabled but grayed out. What should I specify in the type field so that the Photon option sho...

- 1 kudos

Hey, if you change the type to "allowlist" and provide "PHOTON" and "STANDARD" as options, that should fix your issue. Here is an example: "runtime_engine": {"type": "allowlist", "values": ["PHOTON", "STANDARD"], "defaultValue": "PHOTON"}
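To illustrate why the option is grayed out under a "fixed" rule but selectable under an "allowlist" rule, here is a deliberately simplified sketch. It is a hypothetical model of the two rule types, not the Databricks implementation, and it assumes the allowlist rule lists its options under a `values` key with an optional `defaultValue`.

```python
def user_can_choose(rule):
    """Return True if the rule leaves the user more than one option.

    Simplified, hypothetical model of two policy rule types.
    """
    if rule["type"] == "fixed":
        # A fixed rule pins a single value, so there is nothing to pick.
        return False
    if rule["type"] == "allowlist":
        # An allowlist offers a choice only if it lists several values.
        return len(rule["values"]) > 1
    raise ValueError(f"unsupported rule type: {rule['type']}")


fixed_rule = {"type": "fixed", "value": "PHOTON"}
allowlist_rule = {
    "type": "allowlist",
    "values": ["PHOTON", "STANDARD"],
    "defaultValue": "PHOTON",
}

print(user_can_choose(fixed_rule))      # False
print(user_can_choose(allowlist_rule))  # True
```

Under this model, the "fixed" rule from the question leaves no choice, which matches the grayed-out control, while the allowlist keeps Photon as the default and still lets the user switch to the standard engine.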

- 2575 Views

- 1 replies

- 1 kudos

All my replies have been deleted and new ones are being moderated (deleted) - why?

I noticed that when I reply to a post and try to help solve a community problem, my posts are either moderated (deleted) or just not saved. Old posts/replies have been deleted. Is there any reason for that? I kind of lost my will to participat...

- 1 kudos

Currently, I'm also facing the same issue: my comments are automatically deleted, and I am not receiving any email about it. This is happening on a specific post.

- 4038 Views

- 2 replies

- 2 kudos

Error accessing file from dbfs inside mlflow serve endpoint

Hi, I have an MLflow model served using serverless GPU that takes an audio file name as input; the file is then passed as a parameter to a Hugging Face model inside the predict method. But I am getting the following error: HFValidationError(\nhuggingface_hub.utils....

- 2 kudos

I have the same issue. I have a large file that I cannot access from an MLflow service. Things I have tried (none of these work): Read-only from DBFS: `dbfs:/myfolder/myfile.chroma` does not work; `/dbfs/myfolder/myfile.chroma` does not work. Read-only from U...

- 1000 Views

- 0 replies

- 0 kudos

Power BI reports migration from Synapse to Databricks SQL

Hello Techies, could someone please share detailed design considerations for migrating 250 Power BI reports from Azure Synapse to Databricks (DB SQL)? How should we plan the cutover and the strategy for Power BI? What considerations need to be made, is there any da...

- 7482 Views

- 5 replies

- 1 kudos

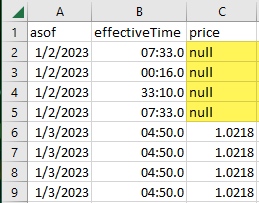

Query results in CSV file include 'null' string for blank cell

After running a SQL script, when downloading the results to a CSV file, the file includes a null string for blank cells (see screenshot). Is there a setting I can change to simply get empty cells instead?

- 1 kudos

I understand; however, this is more about the CSV file format. Save your data in Delta format instead of CSV or text-based formats. Delta tables handle empty strings and NULL values more effectively, ensuring that empty strings are preserved during data ins...
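If changing the storage format is not an option, one possible workaround is to post-process the downloaded file. The sketch below uses only the Python standard library and assumes the placeholder is the literal text `null`; the function name `blank_out_nulls` and the sample data are made up for illustration. Note that a genuine string value "null" in the data would be blanked as well.

```python
import csv
import io


def blank_out_nulls(csv_text, null_token="null"):
    """Replace cells equal to null_token with empty cells.

    Hypothetical helper for cleaning an already downloaded CSV export.
    """
    reader = csv.reader(io.StringIO(csv_text))
    out = io.StringIO()
    writer = csv.writer(out, lineterminator="\n")
    for row in reader:
        writer.writerow(["" if cell == null_token else cell for cell in row])
    return out.getvalue()


sample = "id,name\n1,null\n2,Ada\n"
cleaned = blank_out_nulls(sample)
print(cleaned)
```

For a real file, the same function can be applied to the text read from disk and the result written back to a new file.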

- 7913 Views

- 6 replies

- 1 kudos

Materialized Views Without DLT?

I'm curious, is DLT *required* to use Materialized Views in Databricks? Is it not possible to create and refresh a Materialized view via a standard Databricks Workflow?

- 1 kudos

Hi @ChristianRRL, When creating a materialized view in Databricks, the data is stored in DBFS, cloud storage, or Unity Catalog volume. You can still create a materialized view by overwriting the same table each time, instead of using Append, Update, ...

- 5414 Views

- 4 replies

- 1 kudos

Purpose of DLT Table table_properties > quality:medallion

Hi there, silly question here, but can anyone help me understand what practical purpose labelling the table_properties with "quality":"<specific_medallion>" serves? For example: @dlt.table( comment="Bronze live streaming table for Test data", name="...

- 1 kudos

I have the same question, @ChristianRRL. Did you figure out anything related to it? I want to check whether it's possible to apply any kind of access control based on this property.

- 4480 Views

- 6 replies

- 3 kudos

Resolved! Plotly Express not rendering in Firefox but fine in Safari

Using a basic example of Plotly Express, I see no output in Firefox, but it works fine in Safari. Any ideas why this may occur? import plotly.express as px import pandas as pd # Create a sample dataframe df = pd.DataFrame({ 'x': range(10), 'y': [2, 3, 5, 7...

- 3 kudos

UPDATE: I reached out further to Databricks support and they have since deployed a fix. Works fine for me now!

- 9017 Views

- 8 replies

- 3 kudos

Unity Catalog enabled workspace - Is there any way to disable workflow/job creation for certain users?

Currently, in a Unity Catalog enabled workspace, users with "Workspace access" can create workflows/jobs; there is no access control available to restrict users from creating jobs/workflows. Use case: In production there is no need for users, data enginee...

- 3 kudos

@Lakshay Databricks offers a robust platform with a variety of features, including data ingestion, engineering, science, dashboards, and applications. However, I believe that some features, such as workflow/job creation, alerts, dashboards, and Genie...
