- 3102 Views

- 1 replies

- 2 kudos

MLOps on Azure: API vs SDK vs Databricks CLI?

Hello fellow community members, In our organization, we have developed, deployed, and used an API-based MLOps pipeline on Azure DevOps. The CI/CD pipeline has been developed and refined for about 18 months, and I have to say that it is pret...

Hello @ManiMar, In my opinion the choice is yours, and you're on the right path by comparing the pros and cons of each approach. I'd like to highlight that one advantage of the Databricks CLI is that it lets you use Databricks Asset Bundles. I...

- 1544 Views

- 1 replies

- 0 kudos

Unity Catalog - Quality - Monitor error

Monitor error: An error occurred while configuring your monitor for this table: Error while creating dashboard for unity-catalog-xxx: com.databricks.api.base.DatabricksServiceException: INTERNAL_ERROR: An internal error occurred. Please delete and recreat...

If you have too many dashboards, the workspace may have reached its dashboard quota. I recommend contacting Databricks Support for a more in-depth analysis.

- 3999 Views

- 2 replies

- 0 kudos

count or toPandas taking too long

Hi, I am fetching data from Unity Catalog in notebooks using spark.sql(). The query takes just a few seconds (I am actually trying to retrieve 2 rows), but some operations like count() or toPandas() take forever. I wonder why it takes so long...

Hey @jimcast, how are you? You can check the internals and get a good hint of what's happening using the Spark UI. Filter and select the jobs that are taking the longest and check what is being requested on the SQL/DataFrame tab, as well as their pla...

- 2514 Views

- 2 replies

- 2 kudos

Yes, storage-partitioned joins can be optimized for data skewness. Techniques like adaptive query processing and dynamic repartitioning help distribute the workload evenly across nodes. By identifying and addressing dat...

- 2957 Views

- 3 replies

- 0 kudos

DataBricks Certification Exam Got Suspended. Require support for the same.

Hello Team, I had a very poor experience while attempting my first Databricks certification. The proctor abruptly asked me to show my desk; after I showed it, he/she asked again multiple times, wasted my time, and then suspended my exam without giving any reaso...

@Kaniz @Cert-Team @Sujitha I have sent multiple emails to the Support team asking to reschedule my exam, including a date, but I have not received any confirmation from them. Please look into this issue and reschedule the exam as soon as possible. This certification...

- 1139 Views

- 0 replies

- 0 kudos

Got suspended while attempting Databricks Certified Associate Developer for Apache Spark 3.0 Python

Hi Team, My Databricks certification exam got suspended. I was continuously in front of the camera when an alert appeared, and then my exam resumed. Later, a support person asked me to show the entire table and the entire room. I showed them around the room...

- 6535 Views

- 1 replies

- 1 kudos

Resolved! capture return value from databricks job to local machine by CLI

Hi, I want to run Python code in a Databricks notebook and return the value to my local machine. Here is the summary: I upload files to volumes on Databricks. I generate an MD5 hash for the local file. Once the upload is finished, I create a Python script with t...

Hello @pshuk, You could check the CLI commands below:

- `get-run-output`: Get the output for a single run. This is the REST API reference that corresponds to the CLI command: https://docs.databricks.com/api/workspace/jobs/getrunoutput
- `export-run`: There's al...
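As a rough illustration of the REST endpoint linked above, the sketch below builds the request and pulls the notebook's return value out of the response. It assumes the notebook ends with `dbutils.notebook.exit(...)` and that the value comes back under `notebook_output.result`. The helper names, the host, and the token are placeholders invented for this example, not part of any Databricks library.

```python
import json
import urllib.request
from typing import Optional


def build_get_output_request(host: str, token: str, run_id: int) -> urllib.request.Request:
    """Build a GET request for the Jobs API 'get run output' endpoint."""
    url = f"{host.rstrip('/')}/api/2.1/jobs/runs/get-output?run_id={run_id}"
    return urllib.request.Request(url, headers={"Authorization": f"Bearer {token}"})


def extract_notebook_result(payload: dict) -> Optional[str]:
    """Return the notebook's exit value from a response body, or None if absent."""
    return payload.get("notebook_output", {}).get("result")


def fetch_notebook_result(host: str, token: str, run_id: int) -> Optional[str]:
    """Call the endpoint and return the notebook's exit value (performs a network call)."""
    request = build_get_output_request(host, token, run_id)
    with urllib.request.urlopen(request) as response:
        return extract_notebook_result(json.load(response))
```

On the local machine, something like `fetch_notebook_result(host, token, run_id)` could then be compared against the locally computed MD5 value.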

- 4034 Views

- 1 replies

- 0 kudos

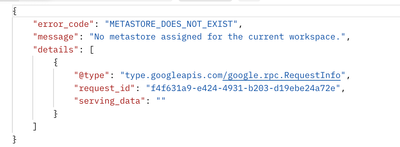

Resolved! Error Code: METASTORE_DOES_NOT_EXIST when using Databricks API

Hello, I'm attempting to use the Databricks API to list the catalogs in the metastore. When I send the GET request to `/api/2.1/unity-catalog/catalogs`, I get this error. I have checked multiple times and yes, we do have a metastore associated with t...

Turns out I was using the wrong Databricks host URL when querying from Postman. I was using my Azure instance instead of my AWS instance.
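A small sanity check along these lines can catch that kind of mix-up before the request is sent. This is only a sketch: the hostname suffixes below are assumptions based on commonly seen workspace URL patterns, and `guess_cloud` and `catalogs_url` are hypothetical helper names.

```python
from urllib.parse import urlparse

# Assumed hostname suffixes for each cloud; adjust if your workspace URL differs.
CLOUD_SUFFIXES = {
    ".azuredatabricks.net": "azure",
    ".gcp.databricks.com": "gcp",
    ".cloud.databricks.com": "aws",
}


def guess_cloud(host: str) -> str:
    """Guess which cloud a workspace URL belongs to from its hostname suffix."""
    hostname = urlparse(host if "://" in host else f"https://{host}").hostname or ""
    for suffix, cloud in CLOUD_SUFFIXES.items():
        if hostname.endswith(suffix):
            return cloud
    return "unknown"


def catalogs_url(host: str) -> str:
    """Build the Unity Catalog 'list catalogs' URL for a workspace host."""
    return f"{host.rstrip('/')}/api/2.1/unity-catalog/catalogs"
```

Checking `guess_cloud(host)` against the cloud you expect before calling `catalogs_url(host)` makes a wrong-workspace request easier to spot than a `METASTORE_DOES_NOT_EXIST` response.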

- 27443 Views

- 3 replies

- 5 kudos

Resolved! Use SQL Server Management Studio to Connect to DataBricks?

The Notebook UI doesn't always provide the best experience for running exploratory SQL queries. Is there a way for me to use SQL Server Management Studio (SSMS) to connect to Databricks? See also: https://learn.microsoft.com/en-us/answers/questions/74...

What you can do is define a SQL endpoint as a linked server. That way you can use SSMS and T-SQL. However, it has some drawbacks (no or poor query pushdown, no caching). Here is an excellent blog post by Kyle Hale of Databricks: Tutorial: Create a Databricks S...

- 4269 Views

- 1 replies

- 2 kudos

ingest csv file on-prem to delta table on databricks

Hi, I want to create a Delta Live Table from a CSV file that I create locally (on-prem). A little background: I have a working ELT pipeline that finds newly generated files (since the last upload) and uploads them to a Databricks volume, and at th...

Hello @pshuk, Based on your description, you have an external pipeline that writes CSV files to a specific storage location, and you wish to set up a DLT pipeline based on the output of this pipeline. DLT has access to a feature called Auto Loader, whic...

- 3092 Views

- 3 replies

- 3 kudos

I am facing an issue while generating the DBU consumption report and need help.

I am trying to access the following system tables to generate a DBU consumption report, but I do not see them in the system schema. Could you please help me access them? system.billing.inventory, system.billing.workspaces, system.billing...

- 3976 Views

- 2 replies

- 0 kudos

Delta Sharing - Info about Share Recipient

What information do you know about a share recipient when they access a table shared with them via Delta Sharing? Wondering if we might be able to use something along the lines of is_member, is_account_group_member, session_user, etc. for ROW and COL...

Now that I'm looking closer at the share credentials and the recipient entity, you would really need a way to know the bearer token and relate that back to various recipient properties: databricks.name and any custom recipient property tags you may h...

- 3355 Views

- 0 replies

- 0 kudos

Parallel kafka consumer in spark structured streaming

Hi, I have a Spark streaming job that reads from Kafka, processes the data, and writes to Delta Lake. Number of Kafka partitions: 100. Number of executors: 2 (4 cores each). So we have 8 cores in total reading from the 100 partitions of a topic. I wanted to un...
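To put rough numbers on that setup, the sketch below estimates how many sequential "waves" of tasks each micro-batch needs. It assumes Spark's default one-to-one mapping between Kafka topic partitions and Spark tasks, and one core per task; `task_waves` is a hypothetical helper written for this example.

```python
import math


def task_waves(kafka_partitions: int, executors: int, cores_per_executor: int) -> int:
    """Estimate sequential task waves per micro-batch, assuming one task per
    Kafka partition and one core per task."""
    total_cores = executors * cores_per_executor
    return math.ceil(kafka_partitions / total_cores)


# 100 partitions on 2 executors x 4 cores = 8 concurrent tasks at most.
print(task_waves(100, 2, 4))  # 13
```

Under those assumptions, only 8 partitions are read at any moment, and the remaining tasks queue until a core frees up.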

- 2117 Views

- 0 replies

- 1 kudos

how to develop Notebooks on vscode for git repos?

I am able to use the VS Code extension plus Databricks Connect to develop notebooks on my local computer and run them on my Databricks cluster. However, I cannot figure out how to develop the notebooks that have the `.py` file extension but are identified by Dat...

- 2120 Views

- 1 replies

- 0 kudos

Error While Running Table Schema

Hi All, I am facing an issue while running a new table in the bronze layer. Error: AnalysisException: org.apache.hadoop.hive.ql.metadata.HiveException: Unable to alter table. com.databricks.backend.common.rpc.SparkDriverExceptions$SQLExecutionException: org.a...

Hello @Mirza1 , Could you please share the source code that is generating the exception, as well as the DBR version you are currently using? This will help me better understand the issue.
