Delta Live Tables linkage/lineage

Not able to see linkage/lineage details for Delta Live Tables and MVs. Getting pipeline error: use live.table_name instead of catalog.schema.table_name

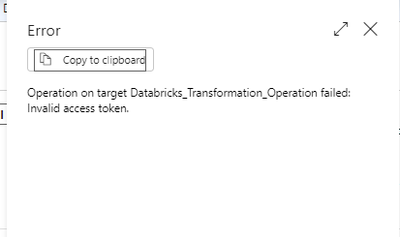

Hi Team, I have created a DevOps pipeline for Databricks deployment across different environments, and it succeeded, but I recently implemented PEPs on Databricks and the DevOps pipeline now fails with the error below. Error: JSONDecodeError: Exp...

Problem Statement: We currently use customer-managed keys for Databricks compute encryption at the workspace level. As part of our key rotation strategy, we need to bring down the entire compute/clusters to update storage ...

Maybe you can use Azure Key Vault to store customer-managed keys: https://learn.microsoft.com/en-us/azure/databricks/security/secrets/secret-scopes#--create-an-azure-key-vault-backed-secret-scope

We're looking for feedback on the Databricks free trial experience, and we need your help! Whether you've used it for data engineering, data science, or analytics, Sujit Nair, our Product Manager on the free trial experience, and our journey archite...

I want to find out whether Photon was used for a job. The API gives me this for maybe 40% of jobs through the runtime_engine field, but for the majority of jobs it is unspecified. How do I find out whether Photon was used in those cases? The docs mention ...
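
A minimal sketch, assuming the cluster spec for each run is available as a dict (for example the new_cluster object of a job task or job cluster, or the result of a clusters/get call for an existing cluster). My understanding of the Clusters API documentation is that when runtime_engine is left unspecified, the engine defaults to standard unless spark_version contains -photon-, in which case Photon is used. The helper photon_requested is a hypothetical name that applies that rule; it only reports what the configuration requested, not whether Photon actually accelerated any part of the run.

```python
from typing import Any, Mapping, Optional


def photon_requested(cluster_spec: Mapping[str, Any]) -> Optional[bool]:
    """Infer from a cluster spec dict whether Photon was requested.

    Returns True/False when the spec is conclusive, or None when neither
    runtime_engine nor spark_version is present.
    """
    engine = cluster_spec.get("runtime_engine")
    if engine == "PHOTON":
        return True
    if engine == "STANDARD":
        return False
    # runtime_engine unspecified: fall back to the legacy convention where
    # Photon runtimes carry "-photon-" inside spark_version.
    spark_version = cluster_spec.get("spark_version")
    if spark_version is None:
        return None  # not enough information in this spec
    return "-photon-" in spark_version


if __name__ == "__main__":
    specs = [
        {"runtime_engine": "PHOTON", "spark_version": "14.3.x-scala2.12"},
        {"spark_version": "13.3.x-photon-scala2.12"},
        {"spark_version": "13.3.x-scala2.12"},
        {},
    ]
    for spec in specs:
        print(photon_requested(spec))  # True, True, False, None
```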

Hi, I am new to Databricks and need some input. I am trying to create an external Delta table in Databricks using an existing path that contains CSV files. What I observed is that the code below creates an EXTERNAL table, but the provider is CSV. ------------------------...

@tajinder123, can you please modify the syntax as below to create it as a Delta table: CREATE TABLE employee123 USING DELTA LOCATION '/path/to/existing/delta/files';
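
Note that USING DELTA LOCATION registers data that is already in Delta format. A Delta table directory is identified by its _delta_log transaction-log folder, and a folder holding only CSV files has none, so the CSV data would first have to be rewritten as Delta (for example by reading it with Spark and writing it back out in delta format). As a quick pre-check, here is a minimal sketch assuming the directory is reachable on the local filesystem; looks_like_delta_table is a hypothetical helper, and for cloud storage you would list the path with dbutils.fs.ls or your storage client instead.

```python
from pathlib import Path


def looks_like_delta_table(path: str) -> bool:
    """Return True if the local directory contains a _delta_log subdirectory."""
    return (Path(path) / "_delta_log").is_dir()


if __name__ == "__main__":
    import tempfile

    with tempfile.TemporaryDirectory() as root:
        # A folder with only CSV files: not a Delta table.
        csv_dir = Path(root, "csv_only")
        csv_dir.mkdir()
        (csv_dir / "employees.csv").write_text("id,name\n1,Ann\n")
        print(looks_like_delta_table(str(csv_dir)))  # False

        # A folder with a transaction-log directory: looks like Delta.
        delta_dir = Path(root, "delta_table")
        (delta_dir / "_delta_log").mkdir(parents=True)
        print(looks_like_delta_table(str(delta_dir)))  # True
```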

My post was marked as spam when I tried to post the description of my issue, so I have now posted the question on Stack Overflow.

When I attach a notebook to my cluster and run a cell, the notebook is detached. The cell execution state shows: Waiting for compute to be ready. Then the attached message is shown: Notebook detached. Exception execution context: java.net.SocketTimeoutException: Conn...

Hi, this is my first foray into DLT, and I'm following code excerpts from the sample-DLT-notebook. I'm creating a notebook with the SQL below: CREATE STREAMING LIVE TABLE sales_orders_raw COMMENT "The raw sales orders, ingested from /databricks-datasets." TBLPROPERTIES ...

It works if you change the notebook's default language rather than using a magic command. I normally have it set to Python and wrongly assumed DLT would handle the translation; you can't use a magic command, you have to change the default language for it to work.

Whenever I try to validate a pipeline that already runs in production without any issue, it throws the following error: BAD_REQUEST: Failed to load notebook '/Repos/(...).sql'. Only SQL and Python notebooks are supported currently.

How can I download the run and event logs? The Spark UI loads them from somewhere, but I couldn't find them in DBFS or on S3.
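
One option, assuming you control the cluster configuration, is cluster log delivery: setting cluster_log_conf in the cluster spec makes Databricks periodically copy the driver and executor logs (and, I believe, the Spark event logs) to the destination, under a folder named after the cluster ID. The sketch below only builds that part of the spec as a dict; with_log_delivery, the destination dbfs:/cluster-logs and the placeholder cluster fields are assumptions, and an S3 destination would use an s3 block instead of dbfs.

```python
import json


def with_log_delivery(cluster_spec: dict, destination: str) -> dict:
    """Return a copy of cluster_spec with DBFS log delivery configured."""
    spec = dict(cluster_spec)  # shallow copy; the input is left unchanged
    spec["cluster_log_conf"] = {"dbfs": {"destination": destination}}
    return spec


if __name__ == "__main__":
    # Placeholder cluster fields, for illustration only.
    base = {"cluster_name": "example-cluster", "spark_version": "13.3.x-scala2.12"}
    spec = with_log_delivery(base, "dbfs:/cluster-logs")
    print(json.dumps(spec, indent=2))
```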

Seeking efficient strategies for resolving errors in Azure Data Factory pipelines. Any valuable tips or techniques?

We have a cluster running on 13.3 LTS (includes Apache Spark 3.4.1, Scala 2.12). We want to test with a different type of cluster (14.3 LTS, includes Apache Spark 3.5.0, Scala 2.12), and all of a sudden we get errors that complain about casting a Big...

I logged the issue with Microsoft last week, and they confirmed it is a Databricks bug. A fix is supposedly being rolled out across Databricks regions at the moment. As anticipated, we have engaged the Databricks core team to further investigate ...

In Spark, table1 is small; it is broadcast and joined with table2, and the output is stored in df1. table1 also needs to be joined with table3, with the output stored in df2. Does it need to be broadcast again?
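
For intuition, a broadcast join is a hash join whose small side is shipped to every executor: the small table is built into a lookup structure once, and each row of the large table probes it. The plain-Python sketch below (not Spark; the table contents are made up) reuses one built table for two joins. My understanding is that in Spark each join's query plan has its own broadcast exchange, so computing df2 broadcasts table1 again unless both joins land in a single plan where Spark can reuse the exchange. If table1 was wrapped with pyspark.sql.functions.broadcast, reusing that same hinted DataFrame should carry the hint into the second join.

```python
from collections import defaultdict
from typing import Dict, Iterable, List, Tuple

Row = Tuple[int, str]


def build_hash_table(small: Iterable[Row]) -> Dict[int, List[str]]:
    """Build phase: index the small side by join key."""
    table: Dict[int, List[str]] = defaultdict(list)
    for key, value in small:
        table[key].append(value)
    return dict(table)


def hash_join(big: Iterable[Row], built: Dict[int, List[str]]) -> List[Tuple[int, str, str]]:
    """Probe phase: inner-join each big-side row against the built table."""
    out: List[Tuple[int, str, str]] = []
    for key, value in big:
        for small_value in built.get(key, []):
            out.append((key, small_value, value))
    return out


if __name__ == "__main__":
    table1 = [(1, "a"), (2, "b")]   # small side
    table2 = [(1, "x"), (3, "y")]
    table3 = [(2, "z"), (2, "w")]
    built = build_hash_table(table1)  # built once, probed twice
    print(hash_join(table2, built))  # [(1, 'a', 'x')]
    print(hash_join(table3, built))  # [(2, 'b', 'z'), (2, 'b', 'w')]
```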

Hello, I was experimenting with an ML model using different parameters and checking the results. The important part of this code is contained in a couple of cells (say cells 12, 13 and 14). I'd like to proceed to the next cell only when the results a...
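
One approach, assuming the notebook is executed with Run All: execution stops at the first cell that raises an exception, so a small check placed right after cells 12 to 14 can act as a gate. This is a minimal sketch; require_acceptable, the metrics dict and the "accuracy" key are hypothetical names standing in for whatever those cells produce.

```python
def require_acceptable(metrics: dict, min_accuracy: float) -> None:
    """Raise ValueError (halting Run All) unless accuracy meets the threshold."""
    accuracy = metrics.get("accuracy")
    if accuracy is None:
        raise ValueError("metrics has no 'accuracy' entry")
    if accuracy < min_accuracy:
        raise ValueError(
            f"accuracy {accuracy:.3f} is below {min_accuracy:.3f}; "
            "stopping before the next cells run"
        )


if __name__ == "__main__":
    # Hypothetical metrics from the experiment cells.
    metrics = {"accuracy": 0.93}
    require_acceptable(metrics, min_accuracy=0.90)  # passes silently
    print("results acceptable, continuing")
```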
