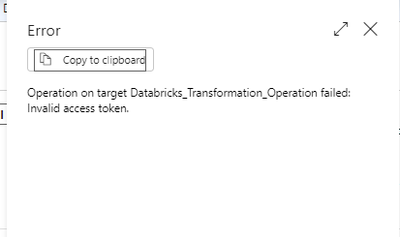

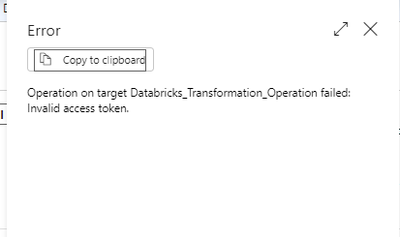

Operation on target Databricks_Transformation_Operation failed: Invalid access token.

Seeking efficient strategies for resolving errors in Azure Data Factory pipelines. Any valuable tips or techniques?
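For the "Invalid access token" error above, a useful first step is to check, outside ADF, whether the token configured in the Databricks linked service is still accepted. Personal access tokens can expire or be revoked. The sketch below assumes a workspace URL and a PAT. It calls the Clusters API list endpoint (`GET /api/2.0/clusters/list`) with a bearer token and treats HTTP 401 or 403 as an authentication failure. The helper name `token_is_valid` is hypothetical. The `opener` parameter is there only so the check can be exercised without a network.

```python
import urllib.error
import urllib.request
from typing import Callable


def token_is_valid(
    workspace_url: str,
    token: str,
    opener: Callable = urllib.request.urlopen,
) -> bool:
    """Return True if the workspace accepts the token, False on 401/403 (hypothetical helper)."""
    req = urllib.request.Request(
        f"{workspace_url.rstrip('/')}/api/2.0/clusters/list",
        headers={"Authorization": f"Bearer {token}"},
    )
    try:
        with opener(req, timeout=30) as resp:
            return 200 <= resp.status < 300
    except urllib.error.HTTPError as err:
        if err.code in (401, 403):
            # An expired or revoked token is typically rejected with one of these codes.
            return False
        raise  # Other HTTP errors (404, 5xx, ...) say nothing about the token itself.
```

If this returns `False`, regenerate the token. Then update the linked service, or the Key Vault secret it reads from, before rerunning the pipeline.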

We have a cluster running on 13.3 LTS (includes Apache Spark 3.4.1, Scala 2.12). We want to test with a different cluster type (14.3 LTS, which includes Apache Spark 3.5.0, Scala 2.12), and all of a sudden we get errors that complain about casting a Big...

I logged the issue with Microsoft last week, and they confirmed it is a Databricks bug. A fix is supposedly being rolled out across Databricks regions at the moment. As anticipated, we have engaged the Databricks core team to further investigate ...

In Spark, table1 is small, so it is broadcast and joined with table2, and the output is stored in df1. table1 must also be joined with table3, with the output stored in df2. Does table1 need to be broadcast again?
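In a broadcast hash join, the small table is shipped to every executor and turned into an in-memory hash table, and the large table is streamed past it. The plain-Python sketch below mimics that on lists of dicts. The table contents and the `id` key are made up for illustration. Here the lookup for `table1` is built once and reused for both joins. Spark behaves differently: each join plans its own broadcast exchange, and an identical exchange is reused only within a single query plan. Computing `df1` and `df2` as separate actions therefore generally broadcasts `table1` again.

```python
from collections import defaultdict
from typing import Dict, Hashable, List

Row = Dict[str, object]


def build_hash_table(small: List[Row], key: str) -> Dict[Hashable, List[Row]]:
    """Index the small (broadcast) side by join key, as a broadcast hash join does."""
    index: Dict[Hashable, List[Row]] = defaultdict(list)
    for row in small:
        index[row[key]].append(row)
    return index


def hash_join(big: List[Row], index: Dict[Hashable, List[Row]], key: str) -> List[Row]:
    """Inner-join each row of the big side against the prebuilt index."""
    out: List[Row] = []
    for row in big:
        for match in index.get(row[key], []):  # .get avoids inserting empty buckets
            out.append({**match, **row})
    return out


# Illustrative data only.
table1 = [{"id": 1, "name": "a"}, {"id": 2, "name": "b"}]
table2 = [{"id": 1, "x": 10}, {"id": 3, "x": 30}]
table3 = [{"id": 2, "y": 20}]

idx = build_hash_table(table1, "id")  # built once, reused by both joins below
df1 = hash_join(table2, idx, "id")
df2 = hash_join(table3, idx, "id")
```

In PySpark, the usual way to keep the broadcast strategy for both joins is to wrap the small side in each join, e.g. `table2.join(broadcast(table1), "id")` with `pyspark.sql.functions.broadcast`. Caching `table1` avoids recomputing it but does not by itself avoid the second broadcast.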

Hello, I was experimenting with an ML model using different parameters and checking the results. However, the important part of this code is contained in a couple of cells (say cells 12, 13, and 14). I'd like to proceed to the next cell only when the results a...
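One way to get this behavior is to raise an exception in the cell that checks the results. This assumes "acceptable" can be expressed as metric thresholds. When a notebook runs top to bottom (Run all, or as a job), an uncaught exception stops execution, so the later cells never run. The helper, the exception class, and the metric names and thresholds below are all hypothetical. In Databricks, `dbutils.notebook.exit(...)` is an alternative that ends the notebook with a return value instead of an error.

```python
class ResultsNotAcceptable(Exception):
    """Raised to stop a notebook run when a metric misses its threshold."""


def require_metrics(metrics: dict, thresholds: dict) -> None:
    """Raise if any metric is missing or below its minimum (hypothetical helper)."""
    failures = []
    for name, minimum in thresholds.items():
        value = metrics.get(name)
        if value is None or value < minimum:
            failures.append(f"{name}={value!r} (need >= {minimum})")
    if failures:
        raise ResultsNotAcceptable("; ".join(failures))


# Put this at the end of the last gating cell (e.g. cell 14);
# cells after it run only if the check passes.
require_metrics({"accuracy": 0.91, "f1": 0.88}, {"accuracy": 0.9, "f1": 0.85})
```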

I have data behind my API endpoint but am unable to read it from Databricks. The data is restricted to my private IP address and can only be accessed over a VPN connection, so I can't read it into Databricks. I can obtain the data in VS C...

Hi AravindNani,

This is more of an infrastructure question. You have to make sure that:

1) Your Databricks workspace is provisioned in VNet injection mode.
2) Your VNet is either peered to a "HUB" network where you have an S2S VPN connection to the API, or you have t...

I'm trying to use the billable usage API. I do get a report, but I haven't been able to get the USD cost report, only the dbuHours. I guess I have to change the meter_name, but I can't find the key for that parameter anywhere.
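If the report only exposes DBU usage, you can estimate a USD figure by multiplying the DBUs for each SKU by that SKU's per-DBU rate. The sketch below assumes a CSV with hypothetical columns `sku` and `dbus` and uses made-up prices. Substitute your contracted or list rates. The column names are illustrative and are not taken from the API's actual output.

```python
import csv
import io
from collections import defaultdict
from typing import Dict


def estimate_cost_usd(report_csv: str, usd_per_dbu: Dict[str, float]) -> Dict[str, float]:
    """Sum DBUs per SKU and multiply by a per-DBU price (column names are assumptions)."""
    dbus_by_sku: Dict[str, float] = defaultdict(float)
    for row in csv.DictReader(io.StringIO(report_csv)):
        dbus_by_sku[row["sku"]] += float(row["dbus"])
    missing = set(dbus_by_sku) - set(usd_per_dbu)
    if missing:
        raise KeyError(f"no price for SKU(s): {sorted(missing)}")
    return {sku: round(dbus * usd_per_dbu[sku], 2) for sku, dbus in dbus_by_sku.items()}


# Hypothetical report rows and prices, for illustration only.
report = "sku,dbus\nJOBS,10\nJOBS,5\nALL_PURPOSE,4\n"
prices = {"JOBS": 0.15, "ALL_PURPOSE": 0.55}
costs = estimate_cost_usd(report, prices)
```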

In Databricks, if a job fails, an email notification is sent. The recipient receives the email with a link to the Databricks workspace. Question: How is it possible to send the email without any link, just the plain text in the email is w...

Hi everyone, I am looking for a way to automate the initial setup of an Azure Databricks workspace and Unity Catalog, but I can't find anything on this topic other than Terraform. Can you share whether this is possible with PowerShell, for example? Thank you in adv...
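Terraform is not strictly required. The same setup can be scripted against the Databricks REST APIs from any language. From PowerShell that means `Invoke-RestMethod`, and the Databricks CLI and Databricks SDK for Python also wrap these APIs. The sketch below is in Python and only builds the request. It assumes the Unity Catalog create-metastore endpoint `POST /api/2.1/unity-catalog/metastores` and a token with sufficient privileges. The body fields (`name`, `storage_root`, `region`) and all values shown are assumptions to check against the current API reference. To actually send the request, pass it to `urllib.request.urlopen`.

```python
import json
import urllib.request


def build_create_metastore_request(
    workspace_url: str, token: str, name: str, storage_root: str, region: str
) -> urllib.request.Request:
    """Build (not send) a create-metastore call; body fields are assumptions."""
    body = {"name": name, "storage_root": storage_root, "region": region}
    return urllib.request.Request(
        f"{workspace_url.rstrip('/')}/api/2.1/unity-catalog/metastores",
        data=json.dumps(body).encode("utf-8"),
        headers={
            "Authorization": f"Bearer {token}",
            "Content-Type": "application/json",
        },
        method="POST",
    )


req = build_create_metastore_request(
    "https://adb-1234567890123456.7.azuredatabricks.net",  # hypothetical workspace URL
    "<token>",
    "main-metastore",  # hypothetical metastore name
    "abfss://metastore@mystorageacct.dfs.core.windows.net/",  # hypothetical container
    "westeurope",
)
```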

I'm trying to do fuzzy matching on two DataFrames by cross joining them and then using a UDF for the fuzzy matching. But with both a Python UDF and a pandas UDF, it's either very slow or I get an error. `@pandas_udf("int")` `def core_match_processor(s1: pd.Ser...`
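Two things often help with slow fuzzy matching. The first is a cheap scoring function. The second is "blocking": compare only rows that share a simple key instead of cross joining everything. The plain-Python sketch below shows both. It uses the standard library's `difflib.SequenceMatcher` as the similarity measure. That is a swapped-in choice, since the matcher in the original post is truncated. The blocking key (first character) and the threshold are hypothetical. In Spark, the same idea means joining on a blocking-key column instead of cross joining, then applying the scorer in the UDF to far fewer pairs.

```python
from collections import defaultdict
from difflib import SequenceMatcher
from typing import Dict, List, Tuple


def score(a: str, b: str) -> int:
    """Case-insensitive similarity on a 0-100 integer scale."""
    return round(SequenceMatcher(None, a.lower(), b.lower()).ratio() * 100)


def block_key(s: str) -> str:
    """Hypothetical blocking key: first non-space character, lowercased."""
    return s.strip().lower()[:1]


def fuzzy_match(
    left: List[str], right: List[str], threshold: int = 80
) -> List[Tuple[str, str, int]]:
    """Score only pairs that share a block key; keep pairs scoring >= threshold."""
    buckets: Dict[str, List[str]] = defaultdict(list)
    for candidate in right:
        buckets[block_key(candidate)].append(candidate)
    matches = []
    for name in left:
        for candidate in buckets.get(block_key(name), []):
            s = score(name, candidate)
            if s >= threshold:
                matches.append((name, candidate, s))
    return matches


pairs = fuzzy_match(
    ["Databricks Inc", "Acme Corp"],
    ["databricks inc.", "Acme Corporation", "Zeta"],
)
```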

I'm now getting the error: (SQL_GROUPED_AGG_PANDAS_UDF) is not supported on clusters in Shared access mode. This is even though this article clearly states that pandas UDFs are supported on shared clusters in Databricks: https://www.databricks.com/blog/shared-clu...

Hey folks, has anyone put Databricks behind Okta and enabled Unified Login with a mix of workspaces, some with a Unity Catalog metastore applied and some without? There are some workspaces we can't move over yet, and it isn't clear in the documentation if Unity Catalo...

Yes, users should be able to use a single Okta application for all workspaces, regardless of whether the Unity Catalog metastore has been applied or not. The Unity Catalog is a feature that allows you to manage and secure access to your data across a...

I am trying to hit `/api/2.1/unity-catalog/artifact-allowlists/` as part of an INIT migration script. It is in public preview; do we need to enable anything else to use an API that is in public preview? I am getting a 404 error. But using the same token for ...
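One thing worth checking for the 404 is the path itself. As far as the public REST API reference goes, the allowlist is addressed per artifact type: `GET /api/2.1/unity-catalog/artifact-allowlists/{artifact_type}`, with a type such as `INIT_SCRIPT`, `LIBRARY_JAR`, or `LIBRARY_MAVEN`. A request to the bare `.../artifact-allowlists/` path may therefore not match any route. The sketch below builds the per-type URL. It assumes those three type names, which should be verified against the current reference. The helper name is hypothetical.

```python
import urllib.request

# Assumed enum values for the artifact type path segment.
ARTIFACT_TYPES = {"INIT_SCRIPT", "LIBRARY_JAR", "LIBRARY_MAVEN"}


def build_allowlist_request(
    workspace_url: str, token: str, artifact_type: str
) -> urllib.request.Request:
    """Build (not send) a GET for one artifact type's allowlist (hypothetical helper)."""
    if artifact_type not in ARTIFACT_TYPES:
        raise ValueError(f"unknown artifact type: {artifact_type!r}")
    return urllib.request.Request(
        f"{workspace_url.rstrip('/')}/api/2.1/unity-catalog/artifact-allowlists/{artifact_type}",
        headers={"Authorization": f"Bearer {token}"},
        method="GET",
    )


req = build_allowlist_request("https://example.cloud.databricks.com", "<token>", "INIT_SCRIPT")
```

Since allowlists are a metastore-level setting, a permissions problem is another possible cause if the corrected path still fails. That would show up as a 403 rather than a 404, though.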

How do I enable the "Create Vector Search Index" button in a Databricks workspace? The following is a screenshot from the Microsoft Ignite 2023 Databricks presentation:

The feature is in public preview only in some regions; you can check the available regions in the documentation here. In addition, there are certain requirements, such as a UC-enabled workspace and Serverless Compute being enabled; you can check all requir...

I can run a query that uses the CONVERT_TIMEZONE function in a SQL notebook. When I move the code to my DLT notebook, the pipeline produces this error: Cannot resolve function `CONVERT_TIMEZONE`. Here is the line: CONVERT_TIMEZONE('UTC', 'America/Phoen...

Yes, the notebook is set to SQL and the convert_timezone function is within a select statement.
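Converting a UTC timestamp to a named zone only shifts the UTC wall-clock time into local time. So if the DLT pipeline does not resolve `CONVERT_TIMEZONE`, Spark SQL's long-standing `from_utc_timestamp(ts, '<zone>')` may work as a substitute. The Python sketch below shows what that conversion should produce. It assumes the truncated target zone is `America/Phoenix` and uses `zoneinfo` (Python 3.9+). If the IANA database is unavailable, it falls back to a fixed UTC-7 offset. That fallback is valid only because Phoenix does not observe daylight saving time.

```python
from datetime import datetime, timedelta, timezone

try:
    from zoneinfo import ZoneInfo

    PHOENIX = ZoneInfo("America/Phoenix")  # assumed target zone
except (ImportError, KeyError):  # no zoneinfo module, or tz database missing
    # Phoenix stays on MST (UTC-7) all year, so a fixed offset is equivalent here.
    PHOENIX = timezone(timedelta(hours=-7))


def utc_to_phoenix(naive_utc: datetime) -> datetime:
    """Treat a naive timestamp as UTC and return naive Phoenix wall-clock time."""
    return naive_utc.replace(tzinfo=timezone.utc).astimezone(PHOENIX).replace(tzinfo=None)


local = utc_to_phoenix(datetime(2024, 7, 1, 12, 0))
```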

Let's say I have run a query and it showed me results. We can find the corresponding query execution plan in the UI. Is there any way to get that execution plan programmatically or through an API?

You can obtain the query execution plan programmatically using the EXPLAIN statement in SQL. The EXPLAIN statement displays the execution plan that the database planner generates for the supplied statement. The execution plan shows how the table(s) r...
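For the "through an API" part, one option is to send the `EXPLAIN` statement itself through the Databricks SQL Statement Execution API (`POST /api/2.0/sql/statements`) to a SQL warehouse. The plan text then typically comes back as the result row. The sketch below only builds the request. It assumes the `warehouse_id`, `statement`, and `wait_timeout` body fields from that API's reference, and the warehouse ID shown is hypothetical. The `EXPLAIN` modes listed are the ones Spark SQL supports. Inside a notebook, `spark.sql("EXPLAIN ...")` or `DataFrame.explain()` returns the same plan without HTTP.

```python
import json
import urllib.request

EXPLAIN_MODES = {"EXTENDED", "CODEGEN", "COST", "FORMATTED"}


def build_explain_request(
    workspace_url: str, token: str, warehouse_id: str, query: str, mode: str = "FORMATTED"
) -> urllib.request.Request:
    """Build (not send) a Statement Execution API call that runs EXPLAIN on a query."""
    if mode not in EXPLAIN_MODES:
        raise ValueError(f"unsupported EXPLAIN mode: {mode!r}")
    body = {
        "warehouse_id": warehouse_id,
        "statement": f"EXPLAIN {mode} {query}",
        "wait_timeout": "30s",  # ask the API to wait up to 30 s for a synchronous result
    }
    return urllib.request.Request(
        f"{workspace_url.rstrip('/')}/api/2.0/sql/statements",
        data=json.dumps(body).encode("utf-8"),
        headers={"Authorization": f"Bearer {token}", "Content-Type": "application/json"},
        method="POST",
    )


req = build_explain_request(
    "https://example.cloud.databricks.com/", "<token>", "abc123", "SELECT 1"  # hypothetical IDs
)
```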

I recently saw a link to the Kudos Leaderboard for the Community Discussions. It has always been my hope and fantasy, ever since I was a little child, that I would someday be the #1 Kudoed Author on Community Discussions on community.Databricks.com....