Error when running job in databricks

07-25-2022 11:47 PM

Hello, I am very new to Databricks and MLflow. I am facing a problem when running a job. When the job runs, it usually fails and retries itself, which increases the running time, i.e., from the normal 6 hrs to 12-18 hrs.

From the error log, the error comes from this point:

    # df_master_scored = df_master_scored.join(df_master, ["du_spine_primary_key"], how="left")
    df_master_scored.write.format("delta").mode("overwrite").saveAsTable(
        delta_table_schema + ".l5_du_scored_" + control_group
    )

Furthermore, the error I found usually looks like this:

    Py4JJavaError: An error occurred while calling o36819.saveAsTable.
    : org.apache.spark.SparkException: Job aborted.

Then, it shows the cause:

    Caused by: org.apache.spark.SparkException: Job aborted due to stage failure: Task 6 in stage 349.0 failed 4 times, most recent failure: Lost task 6.3 in stage 349.0 (TID 128171, 10.0.2.18, executor 22): org.apache.spark.api.python.PythonException: 'mlflow.exceptions.MlflowException: API request to https://southeastasia.azuredatabricks.net/api/2.0/mlflow/runs/search failed with exception HTTPSConnectionPool(host='southeastasia.azuredatabricks.net', port=443): Max retries exceeded with url: /api/2.0/mlflow/runs/search (Caused by ResponseError('too many 429 error responses'))'.

Sometimes the cause is different, like this (but this only showed up in the latest job run):

    Caused by: org.apache.spark.SparkException: Job aborted due to stage failure: Task 13 in stage 366.0 failed 4 times, most recent failure: Lost task 13.3 in stage 366.0 (TID 128315, 10.0.2.7, executor 19): ExecutorLostFailure (executor 19 exited caused by one of the running tasks) Reason: Executor heartbeat timed out after 153563 ms

I don't know how to solve this issue. It may be related to an MLflow problem. In any case, it increases the cost a lot.

Any suggestions for solving this issue?

5 REPLIES

07-26-2022 12:06 AM

Can you try with .option("overwriteSchema", "true")?
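A minimal sketch of where that option would sit in the write from the question. The helper name `write_scored_table` and its parameters are hypothetical and only wrap the same `write.format("delta").mode("overwrite")` chain; `overwriteSchema` is the Delta option that lets an overwrite replace the existing table schema, so it only matters when the DataFrame schema differs from the table's.

```python
def write_scored_table(df, table_name, overwrite_schema=True):
    """Overwrite a Delta table with the contents of df.

    df is expected to be a Spark DataFrame; table_name is the full
    "<schema>.<table>" name (hypothetical helper, not from the thread).
    """
    writer = df.write.format("delta").mode("overwrite")
    if overwrite_schema:
        # Allow the overwrite to replace the table schema as well as the data.
        writer = writer.option("overwriteSchema", "true")
    writer.saveAsTable(table_name)
```

With the names from the question, the call would look like `write_scored_table(df_master_scored, delta_table_schema + ".l5_du_scored_" + control_group)`.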

07-26-2022 12:42 AM

Okay, I have added it already. Let's see the result tonight. 😀

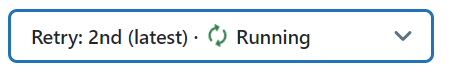

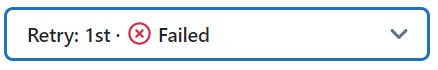

07-26-2022 06:41 PM

07-27-2022 04:10 AM

I think you will have to debug your notebook to see where the issue actually arises.

The error pops up when writing the data because that is an action (and Spark code is only executed at an action).

But the cause of the error seems to be somewhere upstream.

So try a .show() or display(df) cell by cell to see where you get an error.
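The cell-by-cell check can also be scripted. The sketch below is only an illustration: `first_failing_step` is a hypothetical helper, and it assumes you have kept references to the intermediate DataFrames in the order they are built. It triggers an action on each one and reports the first step that raises.

```python
def first_failing_step(steps, action=lambda df: df.show(5)):
    """Trigger an action on each (name, DataFrame) pair, in order.

    Returns (name, exception) for the first step whose action raises,
    or None if every step evaluates without error.
    """
    for name, df in steps:
        try:
            # Spark is lazy: nothing upstream runs until an action is called.
            action(df)
        except Exception as exc:
            return name, exc
        print(f"step {name!r} evaluated without error")
    return None
```

In a notebook this might be called as `first_failing_step([("df_master", df_master), ("df_master_scored", df_master_scored)])`. Note that `.show(5)` only needs to compute a few rows, so a failure that happens on later partitions may not surface; passing a heavier action (for example, on Spark 3.x, `lambda df: df.write.format("noop").mode("overwrite").save()`) should force every row to be evaluated, at the cost of a longer run.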

09-05-2022 06:25 AM

Hey there @Tanawat Benchasirirot

Hope all is well! Just wanted to check in if you were able to resolve your issue and would you be happy to share the solution or mark an answer as best? Else please let us know if you need more help.

We'd love to hear from you.

Thanks!
