Permission denied: Lightning Logs

01-12-2023 08:47 AM

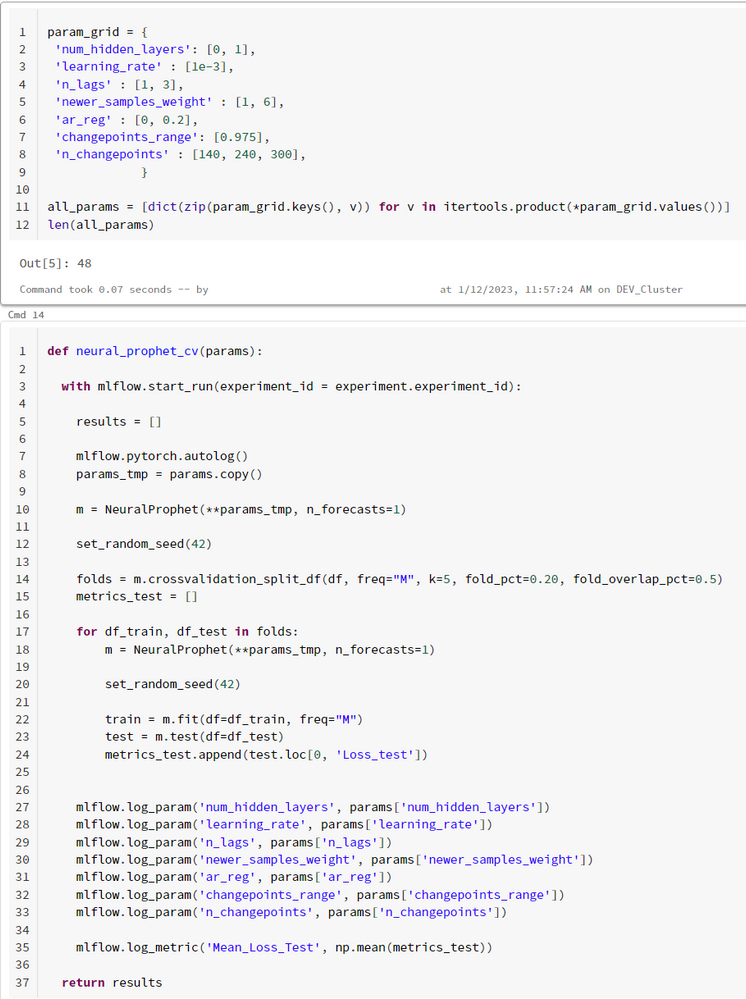

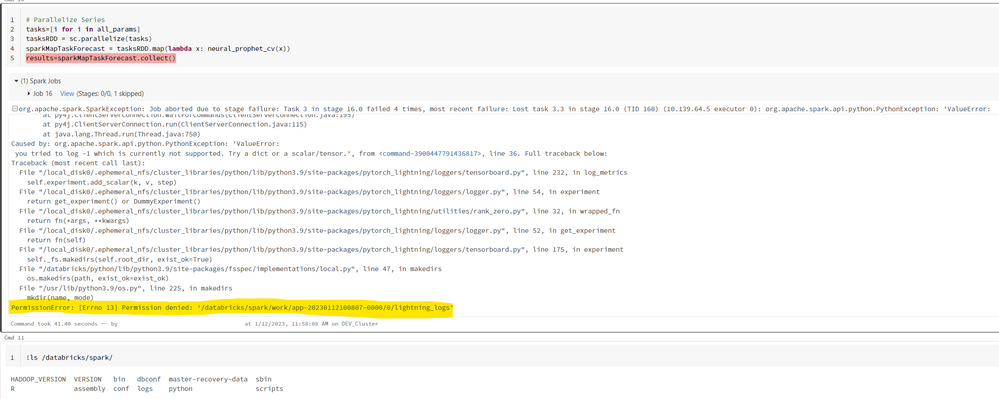

I'm tuning hyperparameters for a NeuralProphet model (the images show the parameters and the training code).

The error traceback is below. Thank you.

Traceback (most recent call last):

File "/databricks/spark/python/pyspark/worker.py", line 876, in main

process()

File "/databricks/spark/python/pyspark/worker.py", line 868, in process

serializer.dump_stream(out_iter, outfile)

File "/databricks/spark/python/pyspark/serializers.py", line 325, in dump_stream

vs = list(itertools.islice(iterator, batch))

File "/databricks/spark/python/pyspark/util.py", line 84, in wrapper

return f(*args, **kwargs)

File "<command-3900447791436819>", line 4, in <lambda>

File "<command-3900447791436817>", line 36, in neural_prophet_cv

File "/local_disk0/.ephemeral_nfs/cluster_libraries/python/lib/python3.9/site-packages/neuralprophet/forecaster.py", line 795, in fit

metrics_df = self._train(

File "/local_disk0/.ephemeral_nfs/cluster_libraries/python/lib/python3.9/site-packages/neuralprophet/forecaster.py", line 2657, in _train

self.trainer.fit(

File "/local_disk0/.ephemeral_nfs/cluster_libraries/python/lib/python3.9/site-packages/mlflow/utils/autologging_utils/safety.py", line 555, in safe_patch_function

patch_function(call_original, *args, **kwargs)

File "/local_disk0/.ephemeral_nfs/cluster_libraries/python/lib/python3.9/site-packages/mlflow/utils/autologging_utils/safety.py", line 254, in patch_with_managed_run

result = patch_function(original, *args, **kwargs)

File "/local_disk0/.ephemeral_nfs/cluster_libraries/python/lib/python3.9/site-packages/mlflow/pytorch/_pytorch_autolog.py", line 370, in patched_fit

result = original(self, *args, **kwargs)

File "/local_disk0/.ephemeral_nfs/cluster_libraries/python/lib/python3.9/site-packages/mlflow/utils/autologging_utils/safety.py", line 536, in call_original

return call_original_fn_with_event_logging(_original_fn, og_args, og_kwargs)

File "/local_disk0/.ephemeral_nfs/cluster_libraries/python/lib/python3.9/site-packages/mlflow/utils/autologging_utils/safety.py", line 471, in call_original_fn_with_event_logging

original_fn_result = original_fn(*og_args, **og_kwargs)

File "/local_disk0/.ephemeral_nfs/cluster_libraries/python/lib/python3.9/site-packages/mlflow/utils/autologging_utils/safety.py", line 533, in _original_fn

original_result = original(*_og_args, **_og_kwargs)

File "/local_disk0/.ephemeral_nfs/cluster_libraries/python/lib/python3.9/site-packages/pytorch_lightning/trainer/trainer.py", line 696, in fit

self._call_and_handle_interrupt(

File "/local_disk0/.ephemeral_nfs/cluster_libraries/python/lib/python3.9/site-packages/pytorch_lightning/trainer/trainer.py", line 650, in _call_and_handle_interrupt

return trainer_fn(*args, **kwargs)

File "/local_disk0/.ephemeral_nfs/cluster_libraries/python/lib/python3.9/site-packages/pytorch_lightning/trainer/trainer.py", line 735, in _fit_impl

results = self._run(model, ckpt_path=self.ckpt_path)

File "/local_disk0/.ephemeral_nfs/cluster_libraries/python/lib/python3.9/site-packages/pytorch_lightning/trainer/trainer.py", line 1154, in _run

self._log_hyperparams()

File "/local_disk0/.ephemeral_nfs/cluster_libraries/python/lib/python3.9/site-packages/pytorch_lightning/trainer/trainer.py", line 1222, in _log_hyperparams

logger.log_hyperparams(hparams_initial)

File "/local_disk0/.ephemeral_nfs/cluster_libraries/python/lib/python3.9/site-packages/pytorch_lightning/utilities/rank_zero.py", line 32, in wrapped_fn

return fn(*args, **kwargs)

File "/local_disk0/.ephemeral_nfs/cluster_libraries/python/lib/python3.9/site-packages/pytorch_lightning/loggers/tensorboard.py", line 211, in log_hyperparams

self.log_metrics(metrics, 0)

File "/local_disk0/.ephemeral_nfs/cluster_libraries/python/lib/python3.9/site-packages/pytorch_lightning/utilities/rank_zero.py", line 32, in wrapped_fn

return fn(*args, **kwargs)

File "/local_disk0/.ephemeral_nfs/cluster_libraries/python/lib/python3.9/site-packages/neuralprophet/logger.py", line 29, in log_metrics

super(MetricsLogger, self).log_metrics(metrics, step)

File "/local_disk0/.ephemeral_nfs/cluster_libraries/python/lib/python3.9/site-packages/pytorch_lightning/utilities/rank_zero.py", line 32, in wrapped_fn

return fn(*args, **kwargs)

File "/local_disk0/.ephemeral_nfs/cluster_libraries/python/lib/python3.9/site-packages/pytorch_lightning/loggers/tensorboard.py", line 236, in log_metrics

raise ValueError(m) from ex

ValueError: you tried to log -1 which is currently not supported. Try a dict or a scalar/tensor.
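The last frame raises the `ValueError` with `from ex`, so the message about logging -1 wraps an earlier exception that the excerpt above does not show. Given the thread title, that earlier exception may be a `PermissionError`, but that is an assumption. A minimal sketch of how the chain could be inspected when the exception object is available (for example when reproducing the call outside a Spark worker); `exception_chain` and `log_scalar_stub` are hypothetical names, and the stub only imitates the wrapping pattern seen in the traceback:

```python
def exception_chain(exc):
    """Return exc followed by every exception it was raised from, outermost first."""
    chain = []
    seen = set()
    while exc is not None and id(exc) not in seen:
        chain.append(exc)
        seen.add(id(exc))
        # Prefer the explicit cause (raise ... from ex), else the implicit context.
        exc = exc.__cause__ or exc.__context__
    return chain


def log_scalar_stub(value):
    # Hypothetical stand-in for a logger call that fails for an unrelated reason
    # (here: an unwritable log directory) and re-raises it as a ValueError.
    try:
        raise PermissionError(13, "Permission denied", "lightning_logs")
    except Exception as ex:
        raise ValueError(f"you tried to log {value} which is currently not supported.") from ex


try:
    log_scalar_stub(-1)
except ValueError as err:
    # Print each link so the original error is visible next to the wrapper.
    for link in exception_chain(err):
        print(type(link).__name__, "->", link)
```

If the innermost entry turns out to be a permission error, the value being logged is not the real problem; the log directory is.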

Labels: Cluster, Databricks Cluster, Forecasting, Logs

4 REPLIES

01-12-2023 01:55 PM

Hi, could you please check the cluster-level permissions and let us know if that helps?

Please refer to: https://docs.databricks.com/security/access-control/cluster-acl.html#cluster-level-permissions

01-13-2023 03:13 AM

Hello. Thank you for your answer. I have 'Can manage' permissions.

P.S. I'm using Azure Databricks.

01-20-2023 02:13 AM

02-18-2025 11:52 AM

Hi Ruben!

I am facing exactly the same error running a similar approach when using runtime 16.2 ML. I didn't have this issue when using runtime 12.2 LTS ML or 13.3 ML. Did you find a solution?

Many thanks!
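No fix is confirmed in this thread. One thing that could be tried, assuming the failure comes from the logger being unable to create its `lightning_logs` directory under the worker's current working directory (an assumption based on the thread title, not verified here), is to run the training call from a writable scratch directory. A minimal sketch using only the standard library; `writable_cwd` is a hypothetical helper name:

```python
import os
import tempfile
from contextlib import contextmanager


@contextmanager
def writable_cwd(prefix="lightning_"):
    """Temporarily switch the working directory to a fresh, writable temp dir."""
    original = os.getcwd()
    scratch = tempfile.mkdtemp(prefix=prefix)  # created with write access for this user
    os.chdir(scratch)
    try:
        yield scratch
    finally:
        # Always restore the previous working directory, even if training fails.
        os.chdir(original)


# Usage sketch: wrap the training call made inside the cross-validation function.
# with writable_cwd():
#     model.fit(...)  # the existing fit call from the notebook, unchanged
```

The scratch directory is not deleted afterwards, so any logs written there remain available for inspection.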
